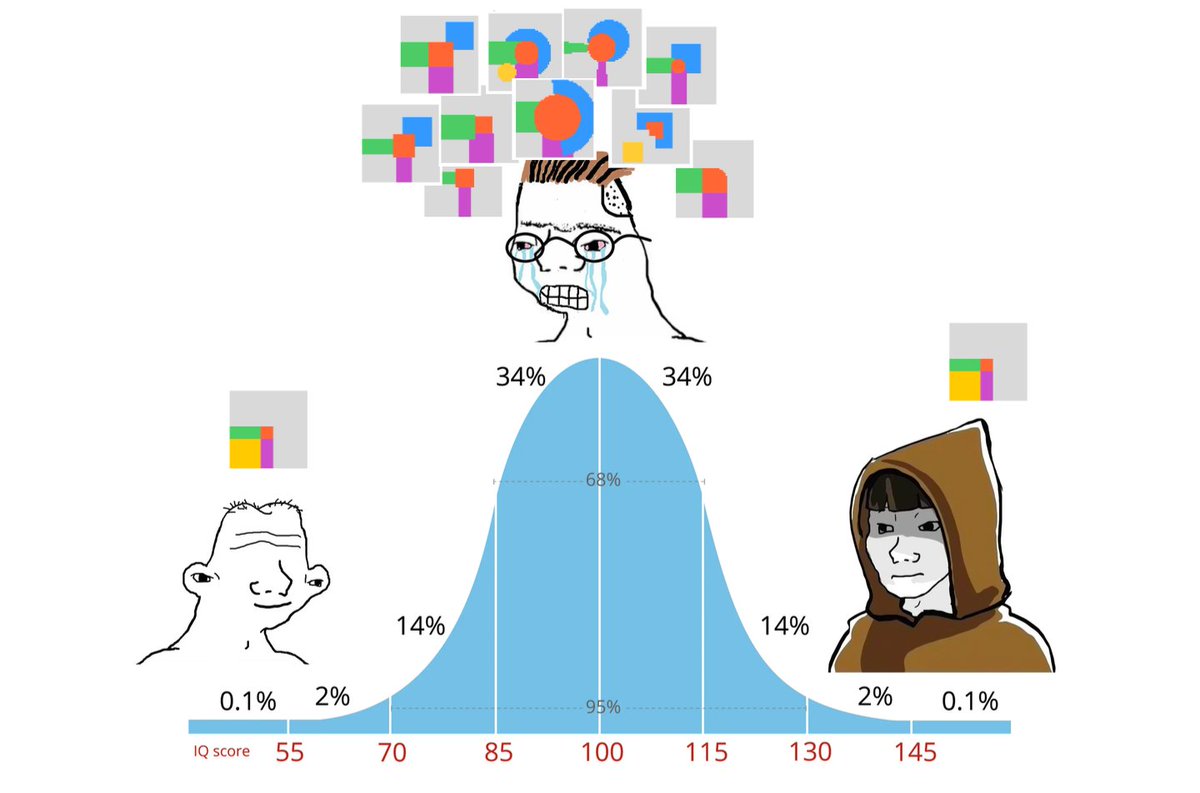

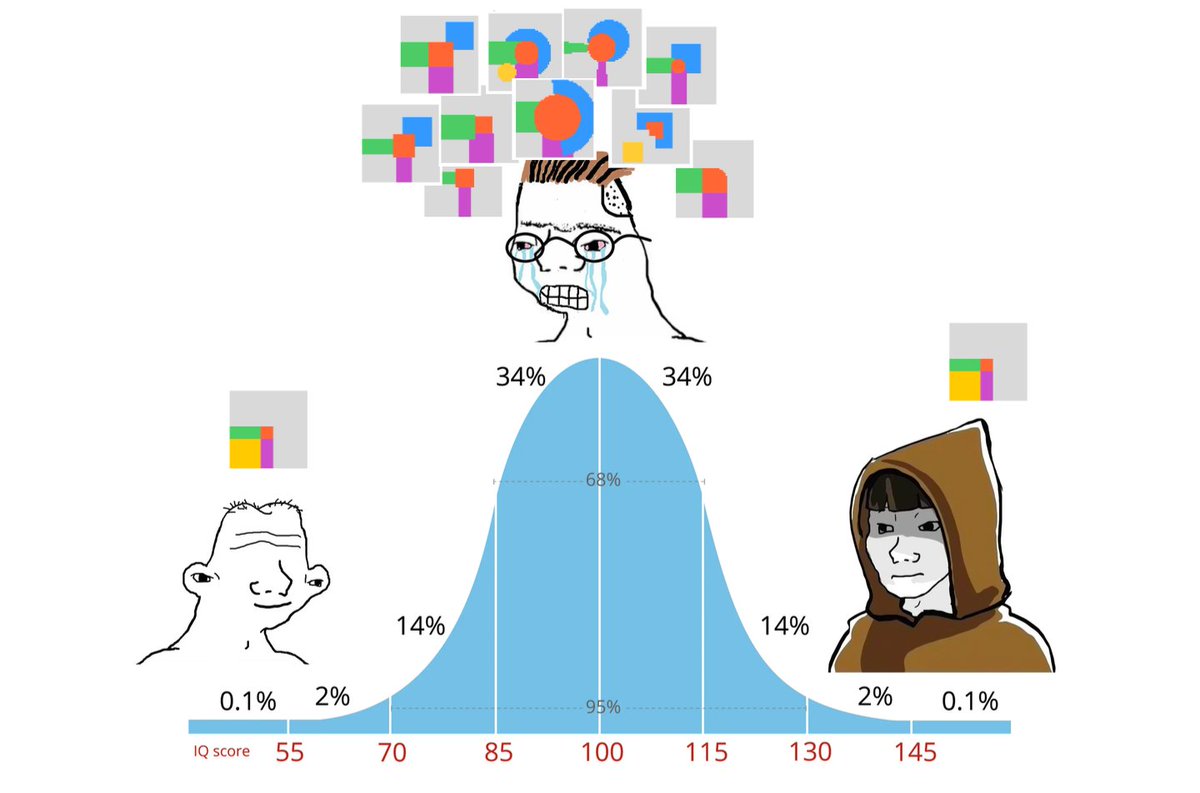

I was curious about how design apps differ in their edge / corner / rotate hit areas, so I wrote a script to move my mouse in a 50x50 grid and track what the cursor was.
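A minimal sketch of what such a grid scan could look like, not the actual script from the post. It assumes two hypothetical callables you would supply yourself: `move_to(x, y)` to position the pointer and `read_cursor()` to report the current cursor name. The post names neither, and how you implement them depends on your OS and automation tooling.

```python
from collections import Counter
from typing import Callable, Dict, Iterator, Tuple

Point = Tuple[int, int]


def grid_points(left: int, top: int, width: int, height: int,
                steps: int = 50) -> Iterator[Point]:
    """Yield steps x steps evenly spaced points covering the rectangle,
    including its first and last pixel on each axis (steps must be >= 2)."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    for row in range(steps):
        for col in range(steps):
            x = left + round(col * (width - 1) / (steps - 1))
            y = top + round(row * (height - 1) / (steps - 1))
            yield (x, y)


def scan_cursors(move_to: Callable[[int, int], None],
                 read_cursor: Callable[[], str],
                 left: int, top: int, width: int, height: int,
                 steps: int = 50) -> Dict[Point, str]:
    """Move the pointer to every grid point and record the cursor seen there.

    move_to and read_cursor are hypothetical hooks (assumptions, not a real API).
    """
    seen: Dict[Point, str] = {}
    for x, y in grid_points(left, top, width, height, steps):
        move_to(x, y)
        seen[(x, y)] = read_cursor()
    return seen


def summarize(seen: Dict[Point, str]) -> Counter:
    """Count how many sampled points showed each cursor type."""
    return Counter(seen.values())
```

Passing the two hooks in as arguments keeps the sampling logic testable without a real pointer. The resulting point-to-cursor map can then be coloured per cursor type to visualise each app's hit areas.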

David Luzar (dwelle)

@dluzar

co-founder @excalidraw • exc/acc

My dear front-end developers (and anyone who’s interested in the future of interfaces): I have crawled through depths of hell to bring you, for the foreseeable years, one of the more important foundational pieces of UI engineering (if not in implementation then certainly at least in concept): Fast, accurate and comprehensive userland text measurement algorithm in pure TypeScript, usable for laying out entire web pages without CSS, bypassing DOM measurements and reflow

pretty interesting project that's as close as it gets to automatically translating manga

i was wondering if anything like this existed because every step sounds trivial with today's tech. koharu uses different local models for detecting text boxes, ocr, translation, inpainting and rendering the translated text back.

now, every step besides the last one works perfectly. for rendering it lacks either manual controls or a better auto-layout model. another option i considered was something like nano banana and passing the translation pairs directly. but the problem with all the frontier image models is they get tripped by even slightly risque content.

another interesting direction for machine translation would be feeding entire chapters or even volumes of images and generating a script with speaker attribution and other important notes for the translation models. i'm sure this can improve the quality significantly compared to translating each page in isolation.

github.com/mayocream/koha…
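The stages the post lists (detect text boxes, ocr, translate, inpaint, render) can be sketched as a simple per-page pipeline. This is only an illustration of the flow, not koharu's actual code or API: `Bubble`, `translate_page`, and all five stage callables are hypothetical names, and each stage is assumed to be a model or function you plug in yourself.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height), an assumed convention


@dataclass
class Bubble:
    """One detected text region and the text attached to it."""
    box: Box
    source_text: str = ""
    translated_text: str = ""


def translate_page(
    page: Any,
    detect: Callable[[Any], List[Box]],
    ocr: Callable[[Any, Box], str],
    translate: Callable[[str], str],
    inpaint: Callable[[Any, List[Box]], Any],
    render: Callable[[Any, Box, str], Any],
) -> Tuple[Any, List[Bubble]]:
    """Run the five stages on a single page image.

    Every stage is a hypothetical callable supplied by the caller.
    """
    # 1. find the text boxes
    bubbles = [Bubble(box=b) for b in detect(page)]
    # 2 and 3. read each box, then translate what was read
    for bubble in bubbles:
        bubble.source_text = ocr(page, bubble.box)
        bubble.translated_text = translate(bubble.source_text)
    # 4. erase the original lettering
    cleaned = inpaint(page, [b.box for b in bubbles])
    # 5. draw the translated text back into each box
    for bubble in bubbles:
        cleaned = render(cleaned, bubble.box, bubble.translated_text)
    return cleaned, bubbles
```

Since `translate` only sees one bubble at a time here, this corresponds to the "each page in isolation" baseline. The chapter-level idea from the post would mean giving the translation stage a wider script with speaker notes, instead of a single string.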