dragonhound 🕊️


@dragonhound3

Dogs, Dragons & Decentralization @komodoplatform journeyman

My big conclusion from this week: Introspection causes emotional disorders.

What are your initial impressions of Grok 4.20? Major upgrades are still landing every week.

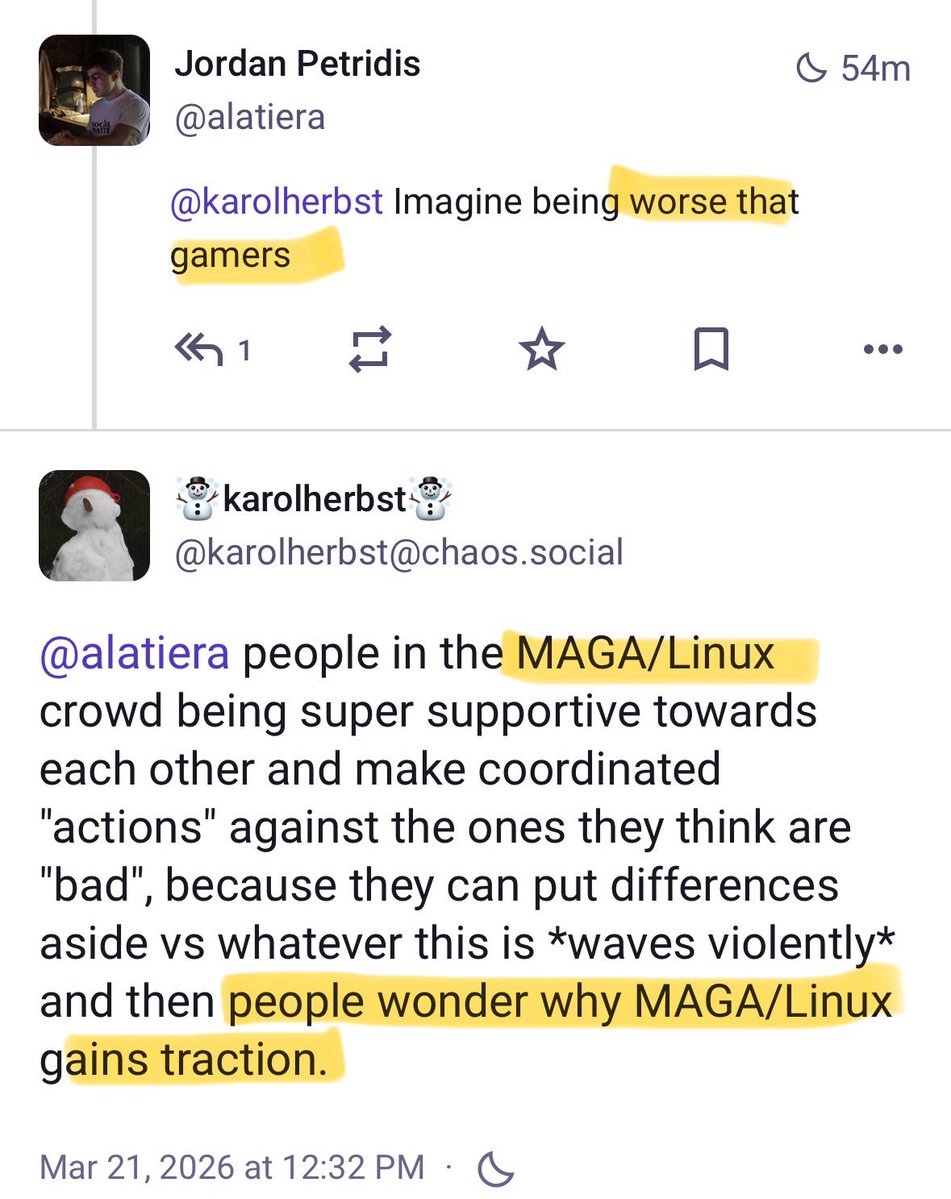

It looks like the Debian Linux project will soon have a new Leader focused on having fewer "(cis)male" contributors to Debian Linux. Nominations are closed for the new Debian Project Leader... and the election period is underway. Voters have exactly 1 (one) candidate to choose from when they vote. That's right. The Debian Project is giving their members only one option. That person, Sruthi Chandran, describes herself as a "librarian turned Free Software enthusiast and Debian Developer from India". She is focused on what she calls the "skewed gender ratios within the Free Software community", saying, "how many times did we have a non-(cis)male candidate for [Debian Project Leader]?" Sruthi says that diversity should "come up for discussion in each and every aspect of the project," adding the goal is to have "more women (both cis and trans), trans men, and genderqueer people." Voting officially begins on April 4th. lists.debian.org/debian-devel-a…

Name a tool you've used for years and never paid for. I'll go first: GitHub.

wow Anthropic just published a crazy report on AI replacing your job and er... you might want to look at this:

- #1 most at-risk jobs are computer programmers, financial analysts (rip excel bros) and customer service
- most at-risk workers are female, white, older and higher paid.
- BUT high-risk jobs *aren't* firing employees... they've STOPPED HIRING. biggest victims: college graduates (4X more likely to be fucked)
- entry-level hiring has dropped 14% since chatgpt launched (for the highest-risk jobs)
- SAFEST jobs are... bartenders, dishwashers and lifeguards, i.e. any manual labour that AI can't automate (yet). this accounts for 30% of the job market.
- this was the scariest part: AI models are capable of automating most work TODAY but are held back by law and slow company adoption. so it's not even a fucking skill issue, it's an ADOPTION issue.
- now it's important to understand that the study is based on real-world data but also 'theoretical' intelligence, so take it with a pinch of salt. some jobs (manual labour) didn't even meet min. data reqs.

i applaud anthropic for being so damn transparent. they're literally the company behind claude, and they will be responsible for these impacts. studies like this will help us figure it the hell out. a LOT of change coming this year.