Just because you're a physicist working in quantum doesn't mean you're an expert in quantum applications and quantum advantage.

It is a bitter truth, and one that often seems to be ignored.

Have I been in a situation where I was asked about the applications that quantum computers can enable?

Yes, I surely have.

Have I been uncomfortable answering those questions?

Even bigger yes.

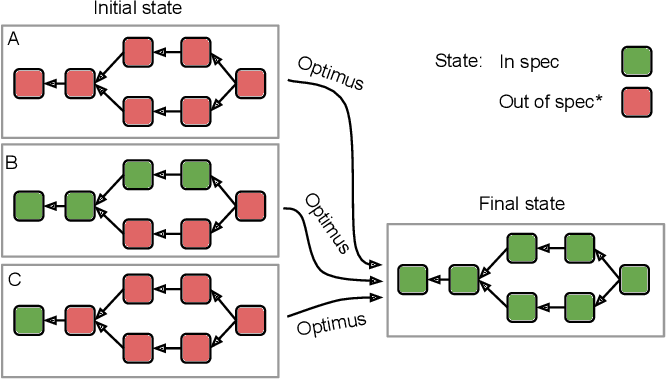

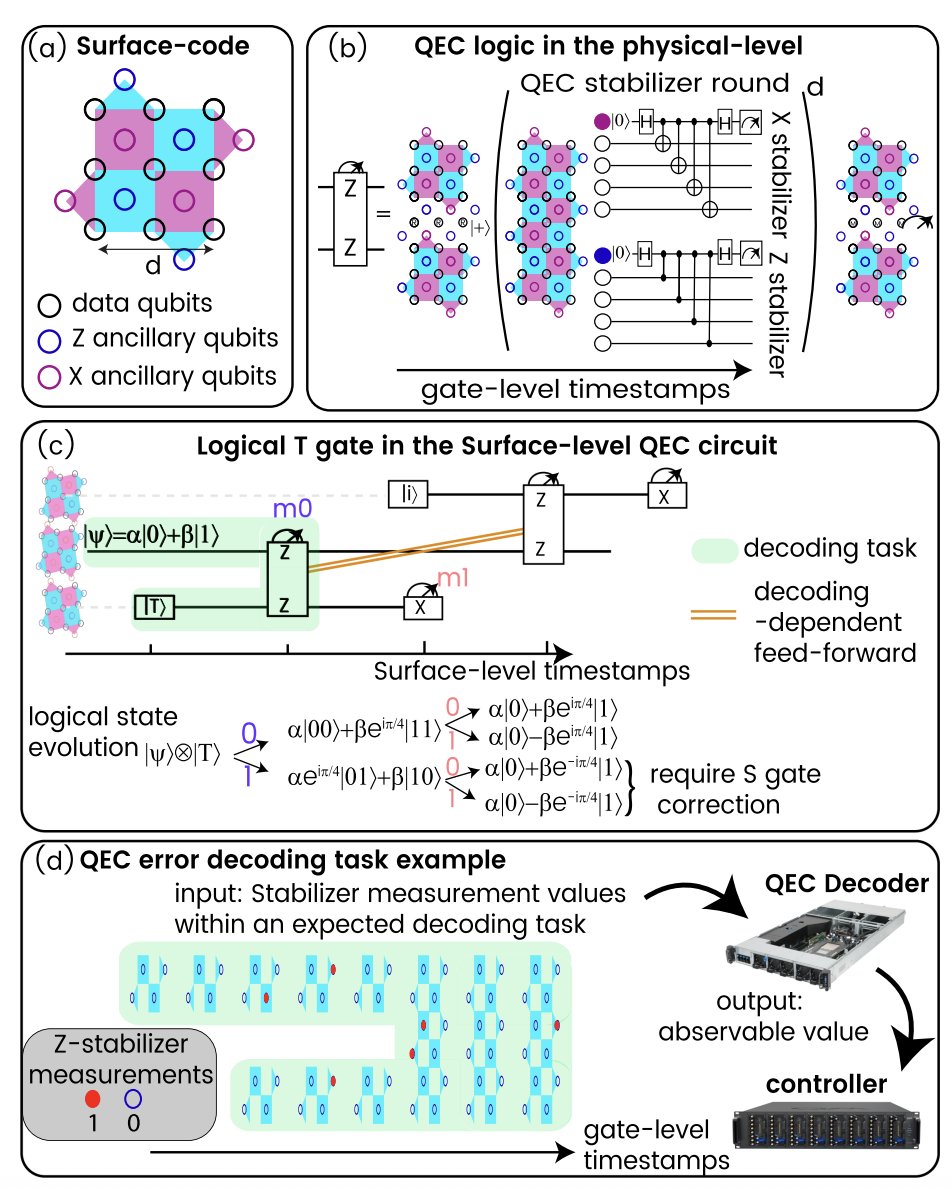

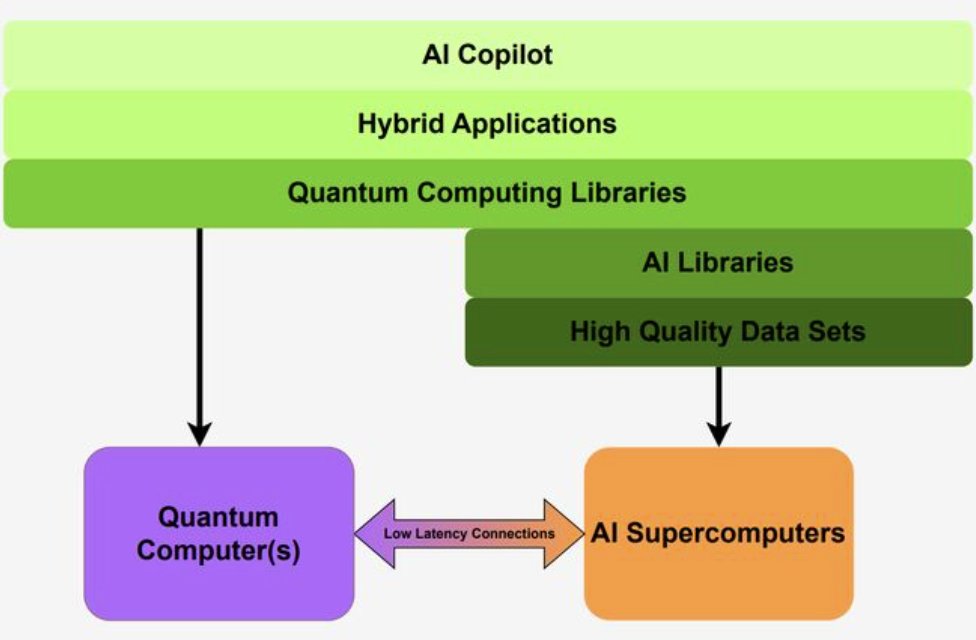

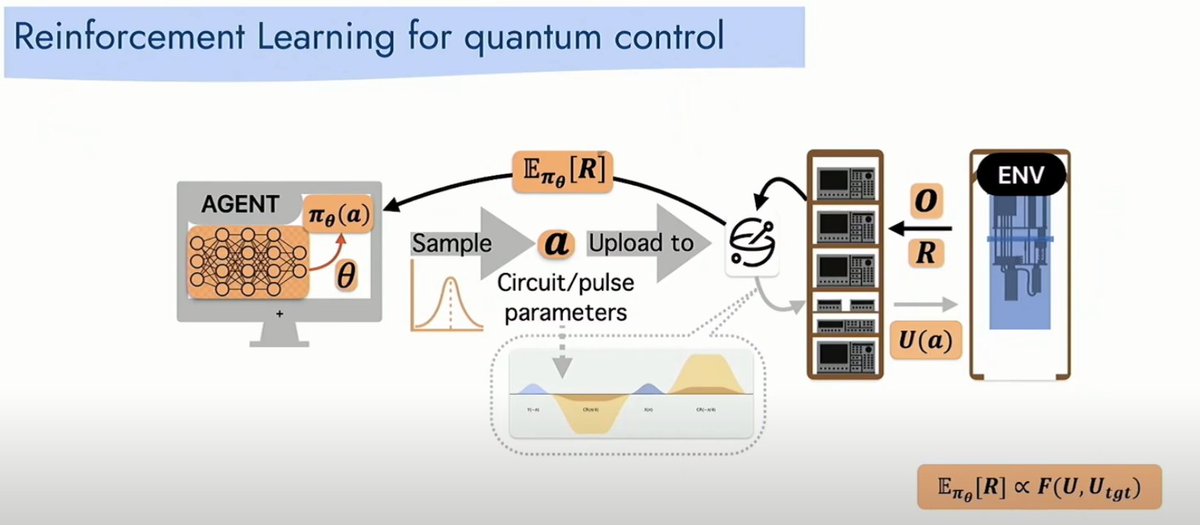

The problem is that there is a lot to learn across the stack, from QPU hardware, cryogenics and control electronics to QEC and the application layer. And it is no simple task to get a broad overview of where the field stands.

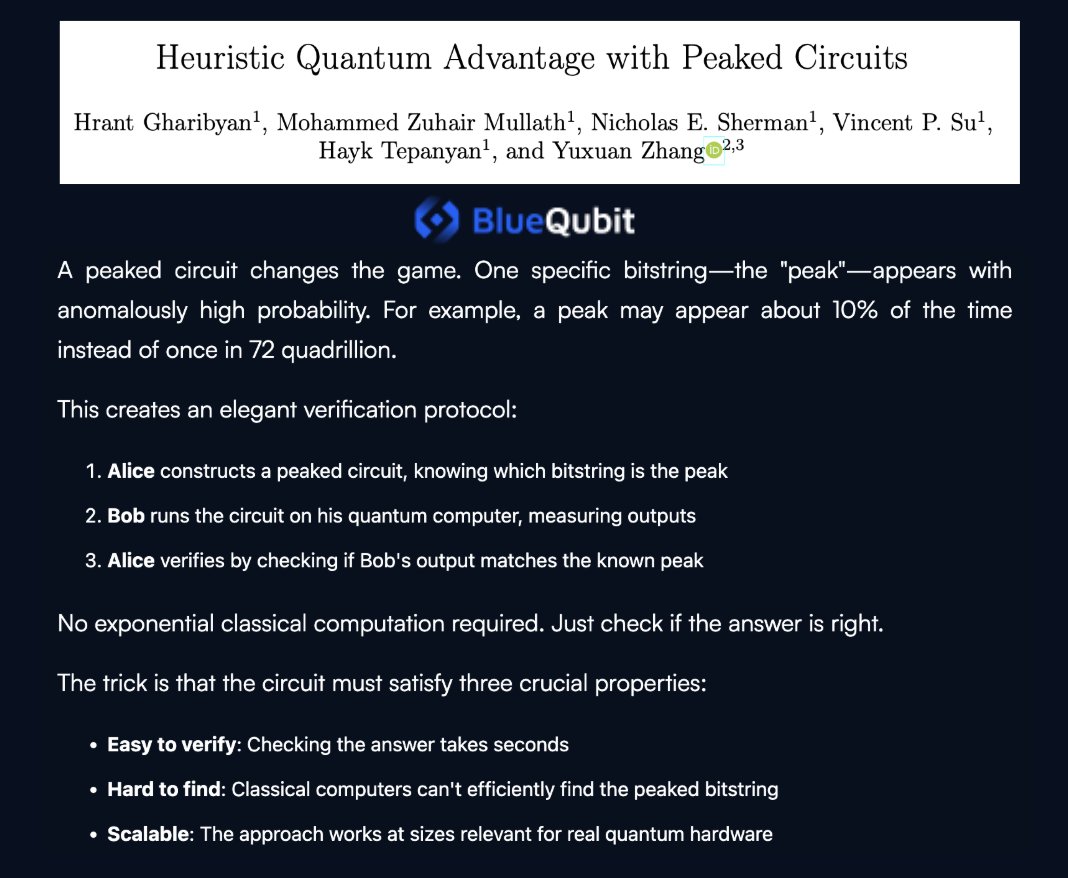

At least, one would hope that papers on algorithms and advantage coming out of the leading quantum groups could be trusted.

But I recently learned from a quantum chemist who has been working in quantum computing for a few years that one should be 𝗘𝗫𝗧𝗥𝗘𝗠𝗘𝗟𝗬 cautious.

I was already skeptical about new advantage claims before this conversation, but now even more so.

Apparently, many quantum chemists roll their eyes hard whenever phrases like "quantum computing will allow for new drug discovery" come up. And they can't believe which outdated classical techniques are often used as comparison benchmarks.

I'm taking it as a wake-up call: to be more skeptical, but also more deliberate about expanding my knowledge up the stack, even from angles that feel unnatural for a physicist.

How do you approach learning about quantum algorithms and applications, especially when your natural home is lower in the stack?
