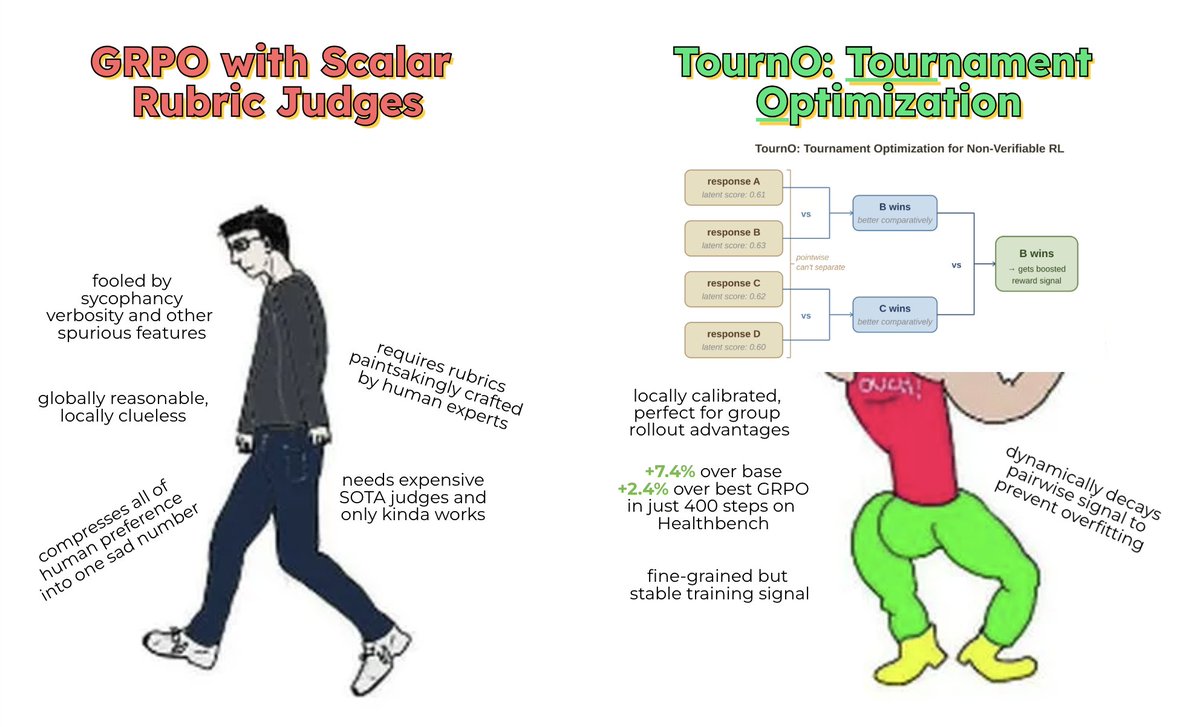

why does TournO work? 🤔

intuition: pointwise judges are globally reliable, but are terribly calibrated for local differences between similar GRPO rollouts.

while pointwise and pairwise models both nail global preferences (~97% accuracy), pairwise models are significantly (~10%) better at local preferences.

visualizing the embedding differences provides even more insight:

- pointwise RM embedding differences are scattered and confidently incorrect (large norm).
- pairwise RM embedding differences are aligned with the ground-truth preference, and the incorrect ones have small norm.
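
a toy sketch of the global-vs-local intuition, under loud assumptions: this is not the TournO setup or any real reward model. it models a pointwise judge as "true quality plus independent Gaussian noise" and compares how often it orders a pair correctly when the quality gap is large (global) versus tiny (local, like near-identical rollouts). the names `pair_accuracy`, `noise_std`, and the gap sizes are made up for illustration, and the numbers it produces are synthetic, not the accuracies quoted above.

```python
import random


def pair_accuracy(pairs, noise_std, rng):
    """Fraction of pairs a noisy pointwise judge orders correctly.

    Each pair is (true_quality_a, true_quality_b). The hypothetical judge
    scores each response independently as true quality + Gaussian noise.
    """
    correct = 0
    for q_a, q_b in pairs:
        s_a = q_a + rng.gauss(0.0, noise_std)
        s_b = q_b + rng.gauss(0.0, noise_std)
        # Correct when the noisy scores agree with the true ordering.
        if (s_a > s_b) == (q_a > q_b):
            correct += 1
    return correct / len(pairs)


rng = random.Random(0)  # fixed seed so runs are repeatable
NOISE_STD = 0.5         # assumed per-response scoring noise
N_PAIRS = 5000

# "global" pairs: responses of clearly different quality (assumed gap 2.0)
global_pairs = [(q + 2.0, q) for q in (rng.uniform(0, 1) for _ in range(N_PAIRS))]
# "local" pairs: near-identical rollouts (assumed gap 0.1)
local_pairs = [(q + 0.1, q) for q in (rng.uniform(0, 1) for _ in range(N_PAIRS))]

global_acc = pair_accuracy(global_pairs, NOISE_STD, rng)
local_acc = pair_accuracy(local_pairs, NOISE_STD, rng)

print(f"global pair accuracy: {global_acc:.3f}")
print(f"local pair accuracy:  {local_acc:.3f}")
```

the same scoring noise that barely matters for far-apart responses swamps the signal once the two responses are close. the sketch only covers the pointwise side; it does not model a pairwise judge, which sees both responses at once.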