Egor Bogomolov retweeted

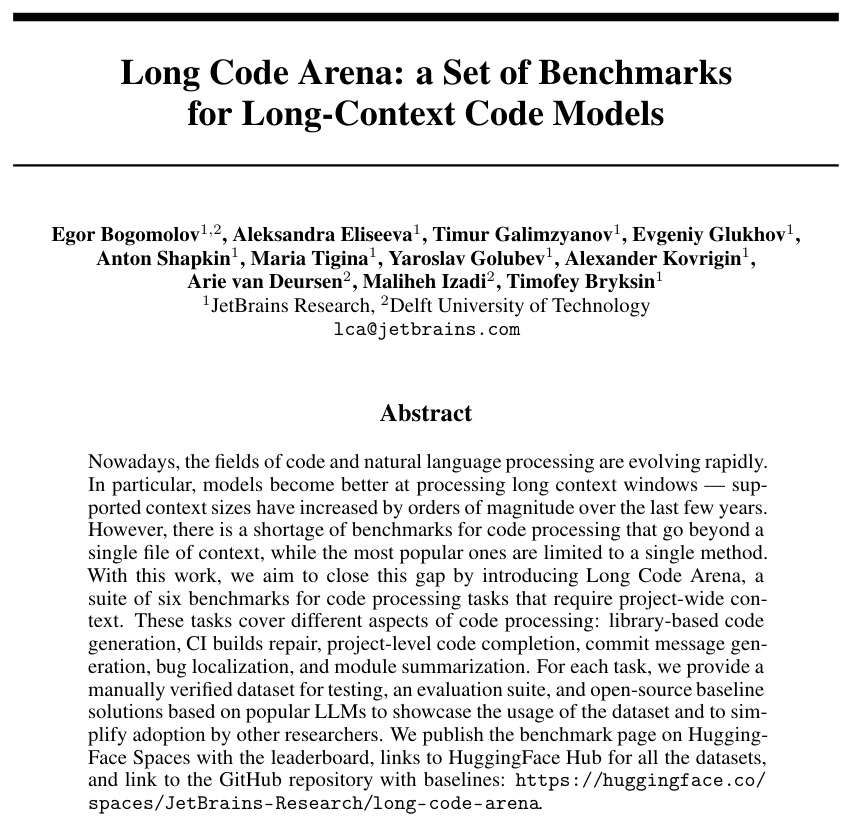

RL training and coding-agent experiments not scaling locally? IdeGYM fixes that – and it's now open source. jb.gg/rsrch-idegym


Egor Bogomolov

96 posts

@egor_bb

Leading ML Division @ JetBrains Research. ML on source code, fancy stuff to make SE better.

The Best of N Worlds: Aligning Reinforcement Learning with Best-of-N Sampling via max@k Optimisation