Max Key

140 posts

@etechlead

Tech lead for humans and AI agents • Closing SDLC feedback loop

Joined September 2025

41 Following · 13 Followers

The Slog.

We all know about the slog.

We've been postponing a big architectural refactoring because we know it's going to be a slog. But eventually the pressure builds and we heave a great sigh and begin the long, arduous process of making a thousand dangerous changes and running the test suite as often as possible.

Along comes the AI and suddenly the slog doesn't seem like such a big problem anymore. We just tell the AI to slog through, and twenty minutes later it's done; and it's right!

And so off we go, confident that slogs are relegated to an ancient past. We'll never have to slog again!

And then comes some deep systematic flaw that we must correct. And the AI simply cannot deal with it without hours of constant babysitting and monitoring.

And there we are, slogging again.

@unclebobmartin Sounds less like software development and more like artificial selection

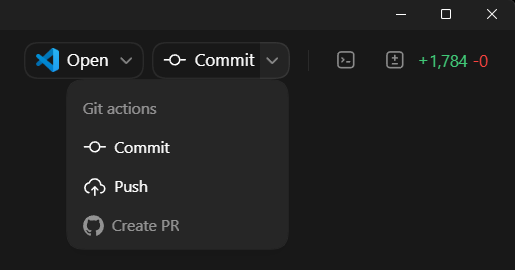

"Yes please" has become the most-used phrase in Codex since 5.4.

Can we get a button for that? /s

@thsottiaux @ajambrosino

you think codex is good now, but just wait until the singularity seven learn about colors

Max Zeff@ZeffMax

New: OpenAI saw the AI coding revolution coming years ago, but was beat to market by Anthropic. This is how OpenAI got in this position, and how a small Codex team spent the last year racing to build a billion-dollar competitor to Claude Code. (yes Codex now has >$1B in ARR)

Max Key reposted

@dexhorthy If you have profits, you show profits.

If you don’t, you show EBITDA.

If you don’t have that, you show revenue.

If there’s no revenue, you show users.

If no users, you show app downloads.

If nothing, you show token burn.

@BenoBilo61874 Large monoliths, microservices, event-driven architectures, async-heavy, lots of state machines.

Projects range from small internal tools to millions of LOCs, both greenfield and legacy, enterprise stuff mostly.

C#/.NET/TypeScript/Python

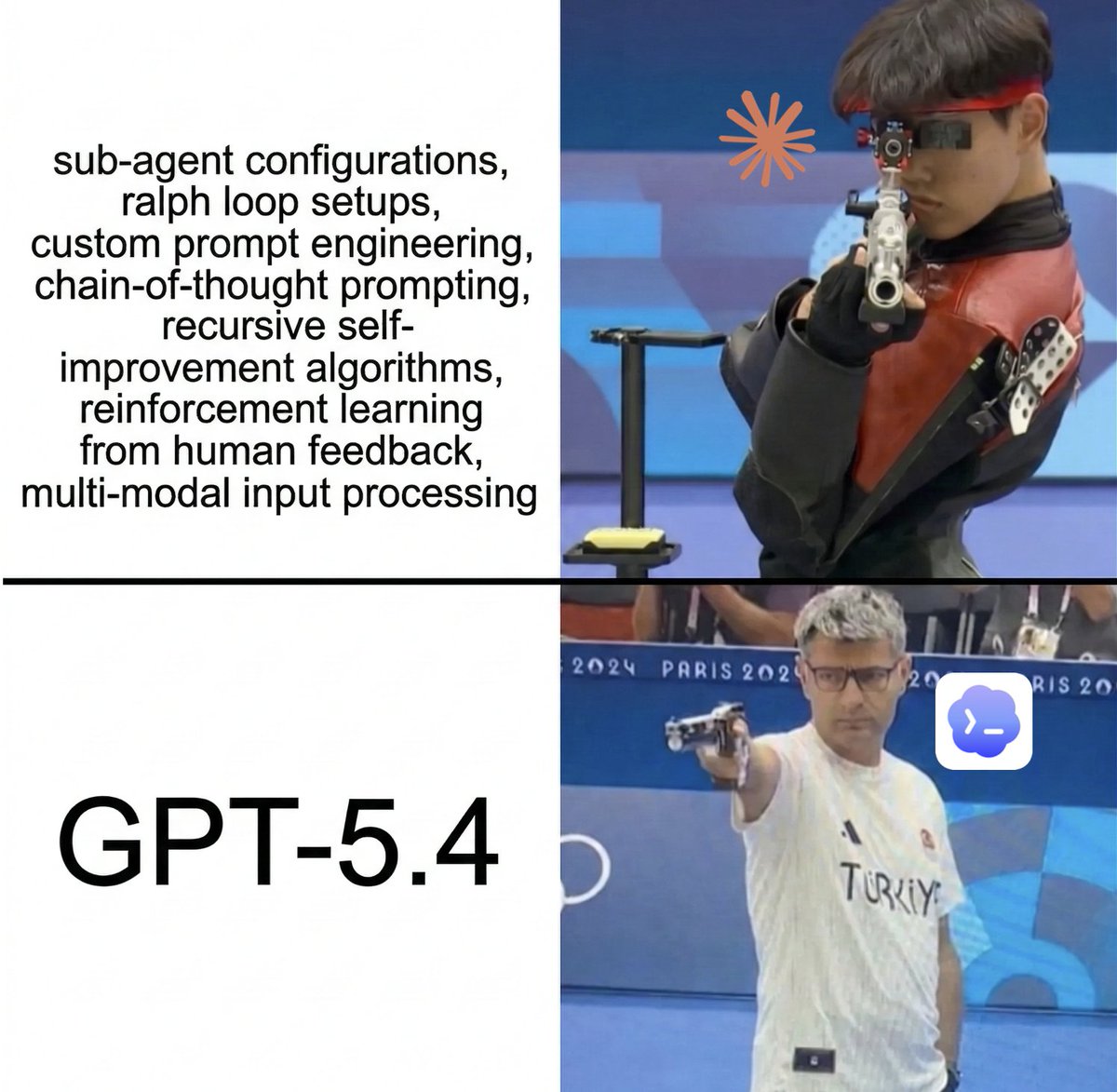

GPT-5.4, vibe review

tl;dr

Very good, almost a universal model for development.

As OpenAI promised, it feels like a hybrid:

● GPT-5.2 with its depth of reasoning and breadth of knowledge

● GPT-5.3 Codex with its speed, solid coding, and agentic behavior

Pros

🟢 Feels like 5.2 + 5.3 Codex

No need to juggle models or compensate for one's weaknesses with another's strengths.

One model, consistently good, just switch the reasoning level.

🟢 Speed - on high it runs at roughly the speed of 5.3 Codex xhigh, without losing quality.

On xhigh it feels snappier than 5.2 xhigh.

🟢 General knowledge - inherited from GPT-5.2 :) Codex models are likely distilled or lightweight fine-tunes of the "full" models, optimized for code - but their understanding of the world outside of IT is limited.

That makes them hard to use in domain-specific areas where you need intuition and domain knowledge, not just raw reasoning.

GPT-5.4 is noticeably better here compared to both 5.3 Codex and even 5.2.

That said, Gemini Pro models still lead here.

🟢 Investigative capabilities

GPT-5.4 got even better than 5.2 at tracking down bugs at the intersection of multiple subsystems, handling complex interdependencies, building long cause-and-effect chains - all while staying reliably on top of the available tools.

I recently migrated my infra toward an agent platform (so agents can handle devops themselves), and it did some genuinely non-trivial things during that migration.

🟢 More pleasant to talk to

Doesn't sound as mechanical as 5.2, though it's gotten chattier in return (that's the 5.3 Codex side coming through).

Cons

🔴 Overengineering (on simple tasks)

This existed in 5.2 too, but less often.

In GPT-5.4 the risk of the model going off into unnecessary abstractions on xhigh is higher - worth keeping an eye on what it's proposing as a solution.

🔴 1M context - wait, how did this end up in the cons? GPT-5.4's effective context, based on OpenAI's own benchmarks, is still around its native 272k tokens.

Everything beyond that is "stretching" the model's attention - and quality degrades noticeably, at a 1.5x+ price premium.

The 272k+ context is experimental, off by default - and I wouldn't recommend it.

Native context with periodic compaction works much better.

🔴 UI/design - still not its strength

At least they've promised to address this in future releases.

(but honestly, UI work belongs in specialized tools anyway)
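
On the 1M-context point above: "native context with periodic compaction" could look roughly like the sketch below. It is a minimal illustration, not how Codex or OpenAI implement compaction. The `Transcript` class, the 80% trigger threshold, the 4-characters-per-token estimate, and the `summarize` callback are all assumptions made for the example; only the 272k figure comes from the review.

```python
from dataclasses import dataclass, field
from typing import Callable, List

NATIVE_CONTEXT_TOKENS = 272_000  # figure quoted in the review
COMPACT_AT = 0.8                 # assumed trigger threshold, not a real setting


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: roughly 4 characters per token."""
    return max(1, len(text) // 4)


@dataclass
class Transcript:
    # Hypothetical summarizer; in practice this might be a model call.
    summarize: Callable[[List[str]], str]
    budget: int = NATIVE_CONTEXT_TOKENS
    keep_recent: int = 4  # newest messages kept verbatim
    messages: List[str] = field(default_factory=list)

    def total_tokens(self) -> int:
        return sum(estimate_tokens(m) for m in self.messages)

    def add(self, message: str) -> None:
        """Append a message and compact once the budget threshold is crossed."""
        self.messages.append(message)
        if self.total_tokens() > self.budget * COMPACT_AT:
            self.compact()

    def compact(self) -> None:
        """Replace everything except the newest messages with one summary."""
        split = len(self.messages) - self.keep_recent
        if split <= 0:
            return  # nothing old enough to fold into a summary
        old, recent = self.messages[:split], self.messages[split:]
        self.messages = [self.summarize(old)] + recent
```

The idea is that the working set stays inside the native window and older turns are traded for a summary, instead of stretching attention past 272k.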

Notes

⚪️ The model prefers Plan-Act

5.3 Codex was more suited to interactive work where it was basically your tool. 5.4 is more about planning, context gathering, then executing against a finished plan - closer to 5.2 in that sense.

⚪️ /fast mode in the agent

Speeds up token output by 1.5x, but at 2x cost/limits.

I turn it on when I need to interactively discuss or work through something without losing flow while the model thinks.

For executing medium-to-large plans it doesn't really matter - those run for tens of minutes or hours anyway, and inference speed becomes irrelevant.
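
The /fast trade-off above can be put into numbers with a few lines of arithmetic. This is only a sketch using the 1.5x speed and 2x cost figures from the note; the function name is hypothetical, and it assumes the whole wait is token output, which overstates the saving when tool calls dominate.

```python
def fast_mode_tradeoff(base_minutes: float, base_cost: float,
                       speedup: float = 1.5, cost_multiplier: float = 2.0):
    """Return (fast_minutes, fast_cost, extra_cost_per_minute_saved)."""
    fast_minutes = base_minutes / speedup
    fast_cost = base_cost * cost_multiplier
    minutes_saved = base_minutes - fast_minutes
    extra_cost = fast_cost - base_cost
    return fast_minutes, fast_cost, extra_cost / minutes_saved


# A 3-minute interactive turn costing 1 unit of limits:
print(fast_mode_tradeoff(3.0, 1.0))
```

In an interactive session the minute saved is a minute of your attention, so paying for it can make sense; in a long unattended plan nobody is waiting, so the extra cost buys little.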

Verdict

For development use, GPT-5.4 is currently my SOTA.

Other models now get used in pretty specific cases:

● Opus 4.6 / Gemini 3.1 Pro Preview for building UI from scratch

● GPT-5.2 xhigh occasionally as a second opinion on architecture, planning, and tech debt

The testing criteria are explained here: x.com/etechlead/stat…

"Probation period" is the new benchmark

Greg Brockman@gdb

Benchmarks? Where we’re going, we don’t need benchmarks.

Max Key reposted

@unclebobmartin @danielbmarkham When agents are able to create software of any complexity as a black box, we will still need to have long-form thinking and attention to detail to express our intentions in full

@danielbmarkham I’m not convinced that’s true. The form may just have changed, the way it has changed every decade since it started.

We are losing something precious. Programming has given millions of people, across an entire generation or two, the ability to think abstractly using shared tools of math-based logic, in depth and at scale, no matter what their culture, religion, language, etc. With LLM-based coding, those days are gone. I won't miss programming, but we should all mourn so many millions slowly losing this precious shared trait. I'm afraid there's an old saying we are going to experience yet again: "You don't really miss something until it's gone."

I plan with 5.4 xhigh and execute with 5.4 high - works great so far.

Codex 5.3 seems to be obsolete

Tibo@thsottiaux

@chribjel GPT-5.4 is the best model across the board
