Edouard Grave

@EXGRV

large language models @kyutai_labs

We ran more experiments to better understand why diffusion models do better than autoregressive models in data-constrained settings. Our findings support the hypothesis that diffusion models benefit from learning over multiple token orderings, which contributes to their robustness and reduced overfitting.

To test this, we trained autoregressive (AR) models with varying numbers of token orderings: N=1 corresponds to the standard left-to-right ordering, while N=k includes the left-to-right order plus k−1 additional random permutations. As N increases, we observe that AR models become more data-efficient, exhibiting improved validation loss and reduced overfitting. All models were trained for 100 epochs and were evaluated using the standard left-to-right factorization.

We also experimented with related approaches, such as RAR and σ-GPT, and observed consistent trends: introducing more random factorizations led to better generalization and less overfitting.

We have updated our arXiv submission with these new results. We thank @giffmana and @YouJiacheng for suggesting these experiments. Original paper post - x.com/mihirp98/statu…
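To make the N=k setup concrete, here is a minimal Python sketch of how such a set of orderings could be built and how one ordering turns a sequence into next-token prediction steps. It is an illustration only, not the authors' code: the function names `make_orderings` and `factorization_steps`, the seed handling, and the (position, token) representation of the context are all assumptions, and the post does not say how the model is told which position it is predicting.

```python
import random


def make_orderings(seq_len, n_orderings, seed=0):
    """Build n_orderings token orderings over positions 0..seq_len-1.

    The first ordering is always left-to-right; the remaining
    n_orderings - 1 are random permutations (hypothetical helper).
    Random permutations are not deduplicated, so for very short
    sequences some may coincide.
    """
    if n_orderings < 1:
        raise ValueError("n_orderings must be at least 1")
    rng = random.Random(seed)
    orderings = [list(range(seq_len))]  # N=1: standard left-to-right
    for _ in range(n_orderings - 1):
        perm = list(range(seq_len))
        rng.shuffle(perm)
        orderings.append(perm)
    return orderings


def factorization_steps(tokens, order):
    """List the prediction steps implied by one ordering.

    Each step is (context, target_position, target_token), where
    context holds the (position, token) pairs already revealed.
    """
    steps = []
    for t, pos in enumerate(order):
        context = [(p, tokens[p]) for p in order[:t]]
        steps.append((context, pos, tokens[pos]))
    return steps


if __name__ == "__main__":
    tokens = ["the", "cat", "sat", "down"]
    for order in make_orderings(len(tokens), n_orderings=3):
        print(order, factorization_steps(tokens, order)[-1])
```

With the left-to-right ordering, `factorization_steps` reduces to ordinary next-token prediction, which matches how the post says all models were evaluated.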

Meet Hibiki, our simultaneous speech-to-speech translation model, currently supporting 🇫🇷➡️🇬🇧. Hibiki produces spoken and text translations of the input speech in real time, while preserving the speaker’s voice and optimally adapting its pace based on the semantic content of the source speech. Based on objective and human evaluations, Hibiki outperforms previous systems in quality, naturalness and speaker similarity, and approaches human interpreters. 🧵

Meet Helium-1 preview, our 2B multi-lingual LLM, targeting edge and mobile devices, released under a CC-BY license. Start building with it today! huggingface.co/kyutai/helium-…

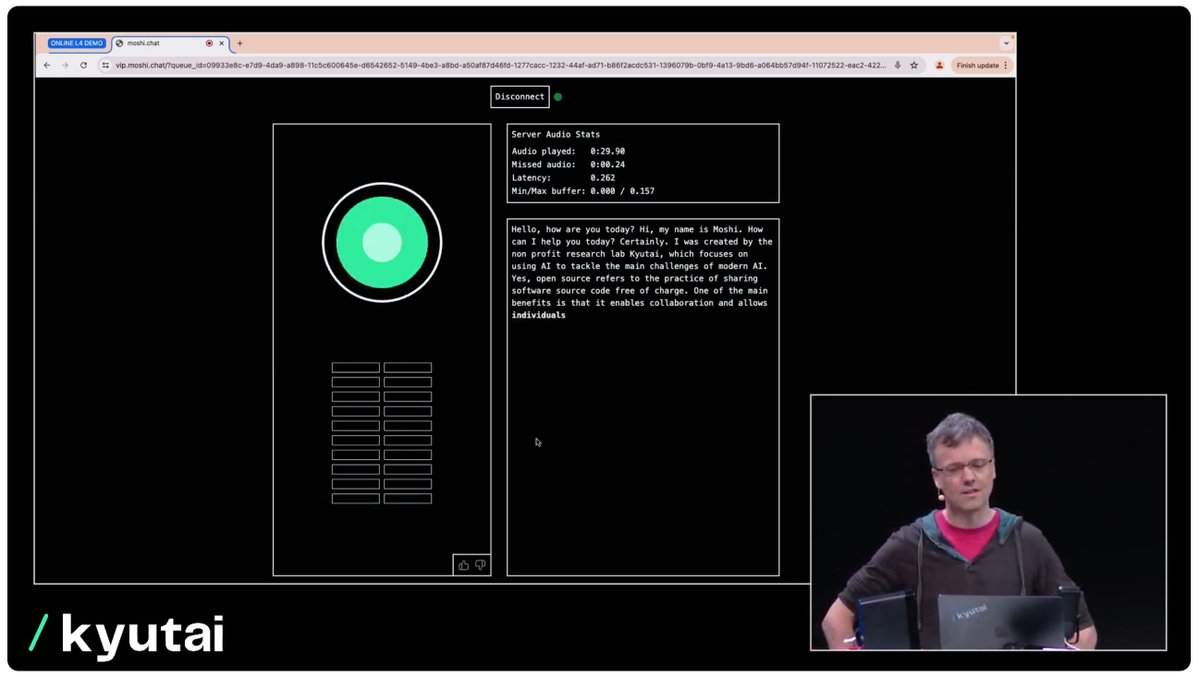

Moshi and Neil on stage giving some emotional improv.

Join us live tomorrow at 2:30pm CET for some exciting updates on our research! youtube.com/live/hm2IJSKcY…

Look for my @kyutai_labs colleagues at #NeurIPS2023 if you want to learn more about our mission. We are recruiting permanent staff, post-docs and interns!

Announcing Kyutai: a non-profit AI lab dedicated to open science. Thanks to Xavier Niel (@GroupeIliad), Rodolphe Saadé (@cmacgm) and Eric Schmidt (@SchmidtFutures), we are starting with almost 300M€ of philanthropic support. Meet the team ⬇️

Our founding team covers many AI fields, from vision with Patrick Pérez and Hervé Jégou (@hjegou), to LLMs with Edouard Grave (@EXGRV), audio with Neil Zeghidour (@neilzegh) and Alexandre Défossez (@honualx), and infra with Laurent Mazaré (@lmazare).

How awesome is that. Poster of the scifi movie "The invasion of the Large Language Models (1961)"