Thomas Wolf

5K posts

Thomas Wolf

@Thom_Wolf

Co-founder at @HuggingFace - moonshots - angel

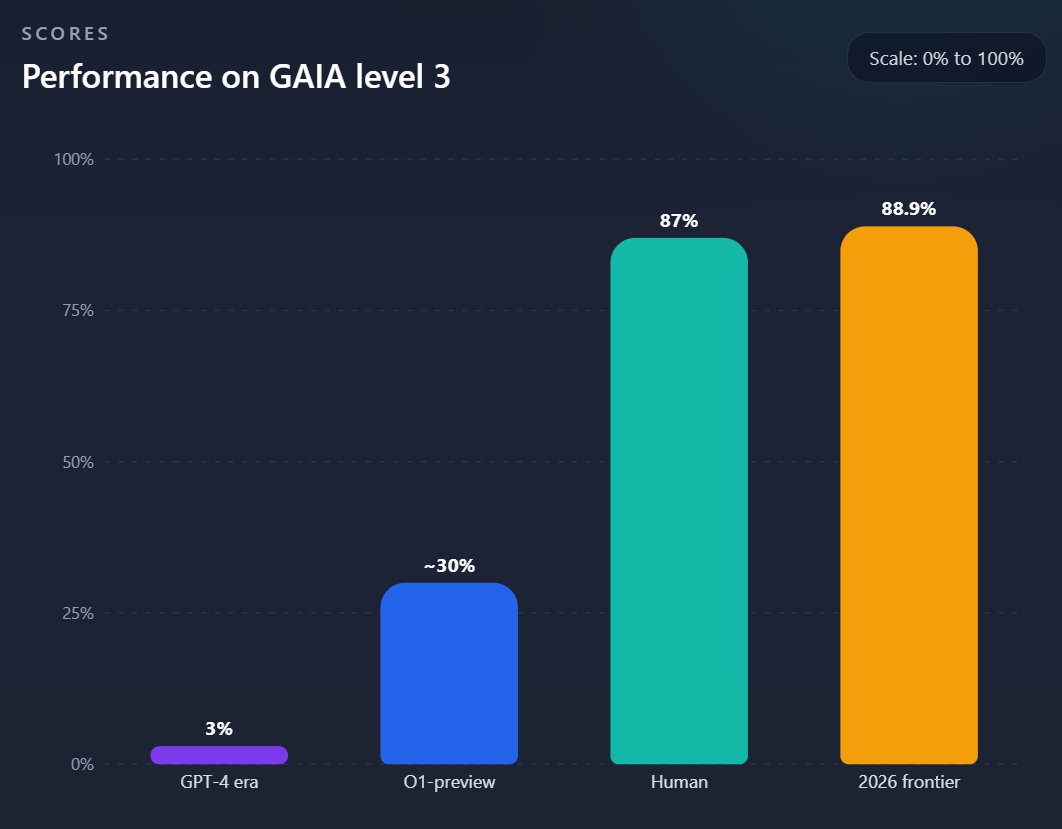

This paper is almost too good that I didn't want to share it Ignore the OpenClaw clickbait, OPD + RL on real agentic tasks with significant results is very exciting, and moves us away from needing verifiable rewards Authors: @YinjieW2024 Xuyang Chen, Xialong Jin, @MengdiWang10 @LingYang_PU

Expectation: the age of the IDE is over Reality: we’re going to need a bigger IDE (imo). It just looks very different because humans now move upwards and program at a higher level - the basic unit of interest is not one file but one agent. It’s still programming.

My 9 yo is now fully independent with codex and it's insane to watch, we built a few games together and then he went off to build his own tower defense, adding features by himself and testing them ... crazy

Introducing Storage Buckets on Hugging Face 🧑🚀 The first new repo type on the Hub in 4 years: S3-like object storage, mutable, non-versioned, built on Xet deduplication. - Starting at $8/TB/mo. That's 3x cheaper than S3. You (and your coding agents) need somewhere to dump checkpoints, logs, and artifacts. Now they have a home.