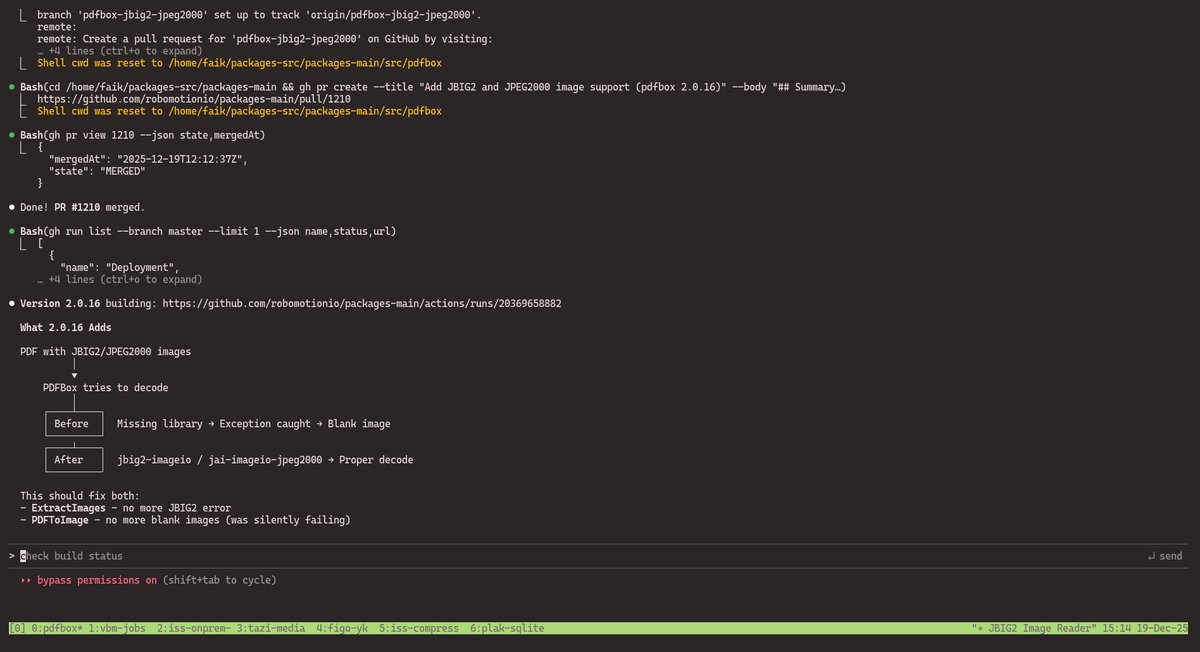

🚀 Robomotion v26.3.1 is here!

This release focuses on improving the developer experience in the Flow Designer, making debugging easier, enabling larger data processing, and introducing a more flexible workflow for teams that use Git. The update also makes it easier to integrate Robomotion into modern infrastructure and development environments.

✨ Highlights

𝐖𝐞𝐛𝐡𝐨𝐨𝐤 𝐓𝐫𝐢𝐠𝐠𝐞𝐫𝐬 𝐰𝐢𝐭𝐡𝐨𝐮𝐭 𝐏𝐮𝐛𝐥𝐢𝐜 𝐈𝐏
Robomotion now supports optional webhook URLs for triggers. Previously, receiving incoming requests required a VPS or server with a public IP and port. This update removes that requirement, making integrations simpler and more flexible.

𝐋𝐚𝐫𝐠𝐞 𝐌𝐞𝐬𝐬𝐚𝐠𝐞 𝐎𝐛𝐣𝐞𝐜𝐭𝐬 (𝐋𝐌𝐎)
Flows can now process large datasets and tables. You can inspect full messages in the Debug Output Console without truncation, and drag and drop message fields directly into node properties while building flows.

𝐄𝐱𝐭𝐞𝐫𝐧𝐚𝐥 𝐆𝐢𝐭 𝐑𝐞𝐩𝐨𝐬𝐢𝐭𝐨𝐫𝐢𝐞𝐬
Robomotion now supports external Git repositories for storing and loading projects. Developers can work on flows outside the platform using standard Git workflows and open the project later in the Flow Designer.

👇 Full changelog
github.com/robomotionio/r…

⬇️ Download
robomotion.io/downloads

#Automation #RPA #DeveloperTools #WorkflowAutomation #DevTools #Robomotion