Fred Brunel

@fbrunel

CTO & Co-Founder @ https://t.co/B9hLbvlgCl

so... I audited Garry's website after he bragged about 37K LOC/day and a 72-day shipping streak. here's what 78,400 lines of AI slop code actually looks like in production. a single homepage load of garryslist.org downloads 6.42 MB across 169 requests. for a newsletter-blog-thingy. 1/9🧵

There are a handful of things that will remain hardwired into your memory until the end of days: How to ride a bike. Your first love. The sound of a 90s boot sequence.

One of the greatest pieces of media ever created

I have so much gratitude to people who wrote extremely complex software character-by-character. It already feels difficult to remember how much effort it really took. Thank you for getting us to this point.

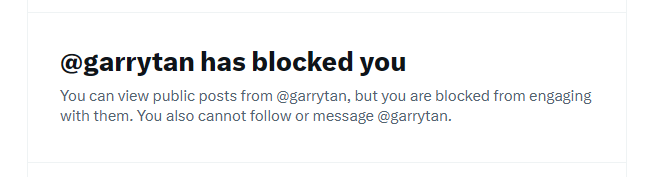

AI is making CEOs delusional