
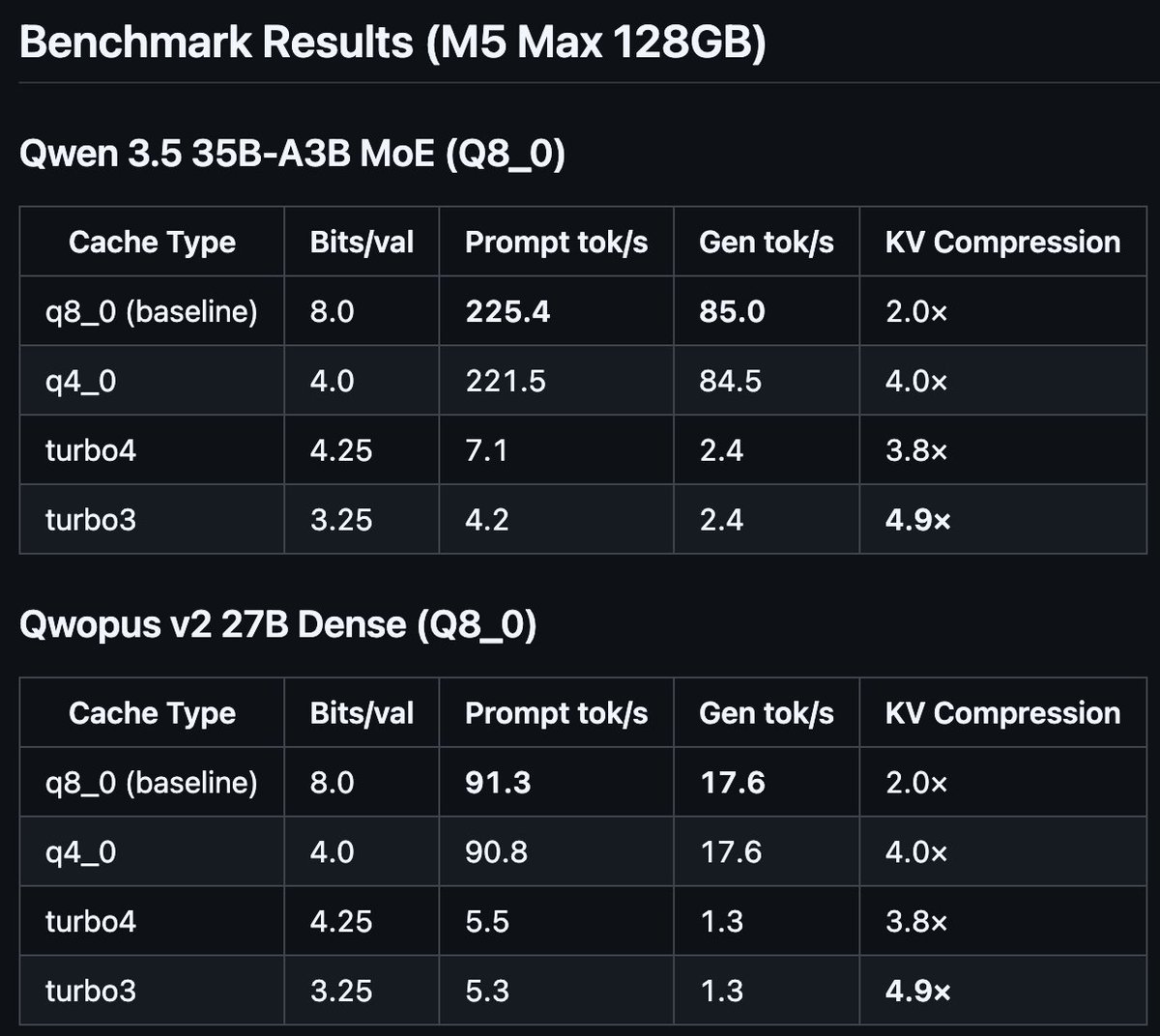

DDR5 memory prices just took a noticeable dive for the first time in months, and Google’s TurboQuant might be behind it. wccftech.com/ddr5-prices-ju…

🇺🇸 🚀 LAUNCHED: THE WHITE HOUSE APP Live streams. Real-time updates. Straight from the source, no filter. The conversation everyone’s watching is now at your fingertips. Download here ⬇️ 📲 App Store: apps.apple.com/us/app/the-whi… 📲 Google Play Store: play.google.com/store/apps/det…

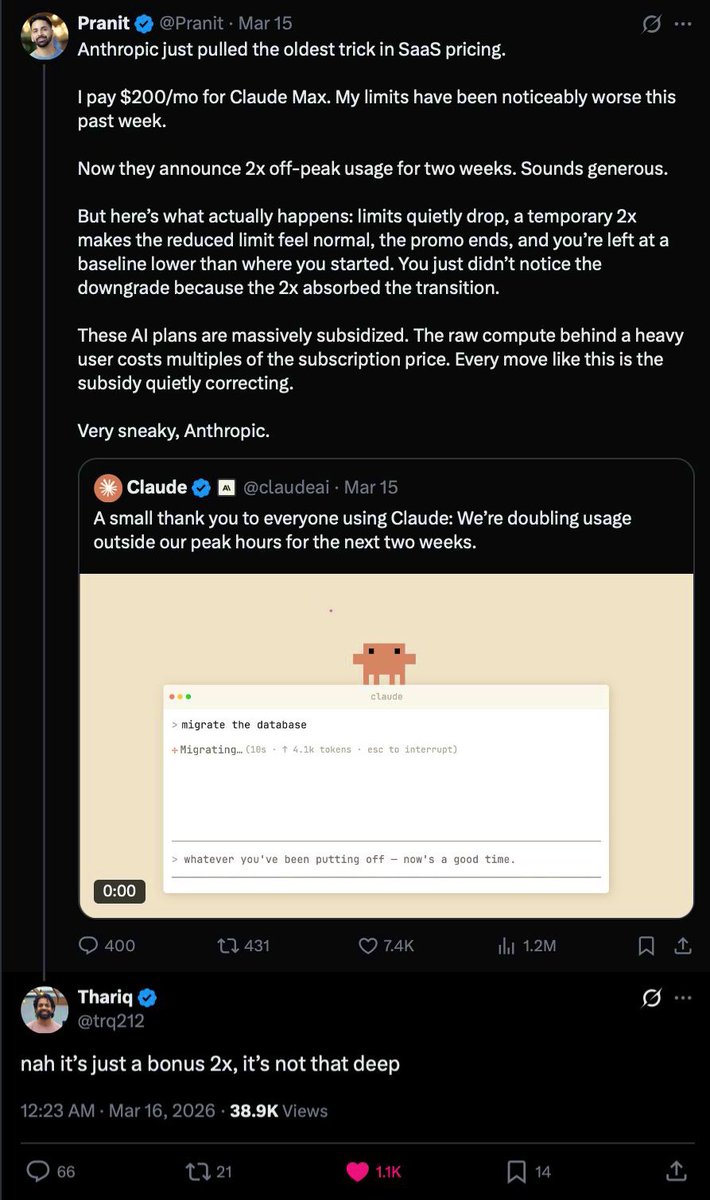

To manage growing demand for Claude, we're adjusting our 5-hour session limits for Free/Pro/Max subs during peak hours. Your weekly limits remain unchanged. On weekdays between 5am–11am PT / 1pm–7pm GMT, you'll move through your 5-hour session limits faster than before.

McDonald's new design turns the posts of parking-space stops into tables. It's an interesting idea.

New Tenstorrent cluster hot from the kitchen > 1TB of VRAM > 3TB DDR5 RAM > 32TB SSD Storage New product, will share more later P.S. Can you find the cat in the picture?

The LUMAs are now arriving at every #Cinesa 😍 Starting April 1, don't miss out on yours, batteries included! 🌟 #SuperMarioGalaxyLaPelícula

Anyone trying out local LLMs, do yourself a favor: get off of Windows and use Linux.