Pinned Tweet

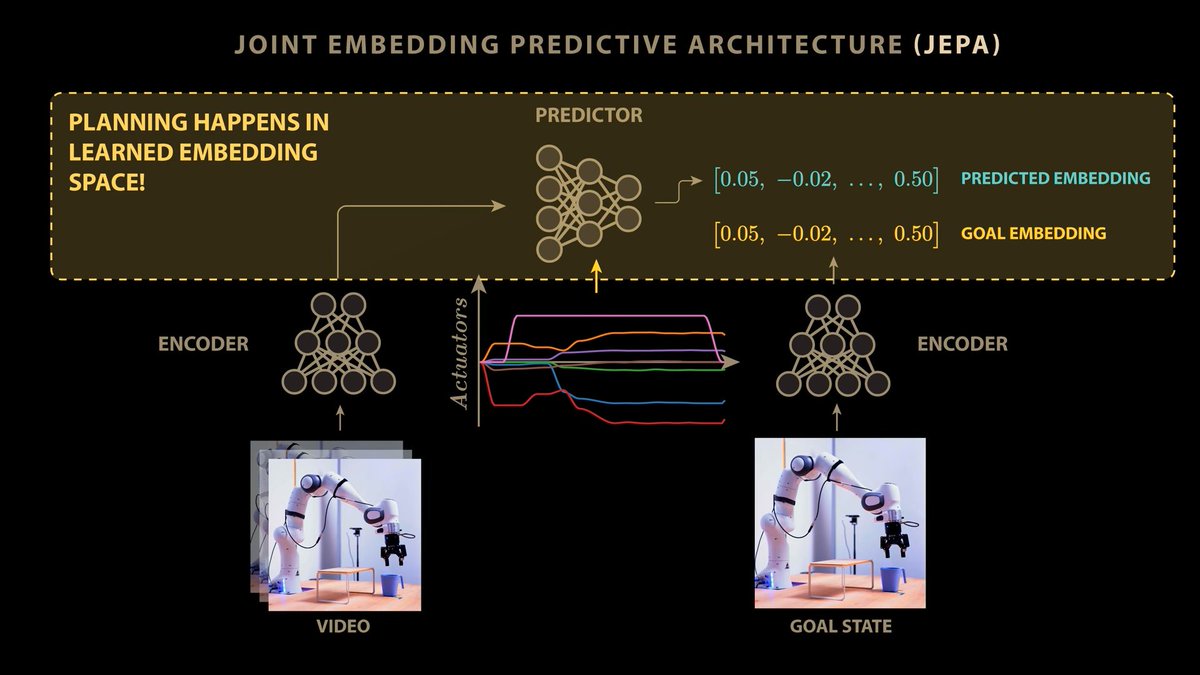

So proud of my latest goal: second place at the @LDO_Space and @telespazio T-TeC with horus 🥈☝️

Leonardo Space @LDO_Space

The award ceremony for the seventh edition of #T-TeC was held today in Rome, at the headquarters of the Italian Space Agency (@ASI_spazio). The contest is an #OpenInnovation competition organised by #Leonardo and @telespazio to foster technological innovation in the #Space sector among the younger generation of students and researchers from universities around the world. Discover more: lnrdo.co/4ncB1c0 #Leonardo4Innovation
