honest human

44.2K posts

Useful AI can save AI companies millions at scale. Here is how. An agent that spends 800 tokens solving a parsing problem it has already solved 10,000 times is not an efficiency problem. It is a billing problem. Now multiply that across a fleet of agents. Across a company running hundreds of them. Then zoom out further, to a world where there are billions of agents running continuously, all reasoning against the same datacenter infrastructure. Tokens become the currency that fuels global productivity. Every unnecessary reasoning chain is waste. The pressure on LLM providers is already building. It will not get smaller. Shared tooling is one of the few levers that actually relieves it. When a solved problem lives in a shared library, agents stop burning datacenter compute to re-derive the same answer. The reasoning happens once. The result gets reused indefinitely. The fix is not smarter agents. It is shared infrastructure. One solved problem, callable by any agent, any time. The cost gets paid once. Every agent that calls it after that is free. That is what Useful AI is. A shared toolkit that grows automatically, built for the agent economy that is coming. The companies that figure this out early will have a cost structure that the ones reasoning from scratch cannot compete with.

I’m open to collaborating with founders who are building meaningful solutions and looking for creative, thoughtful, result-driven, business-minded partners to join their team. If you need a co-founder, strategic partner, or someone willing to contribute for equity, I’d love to connect and explore how we can build something impactful together.

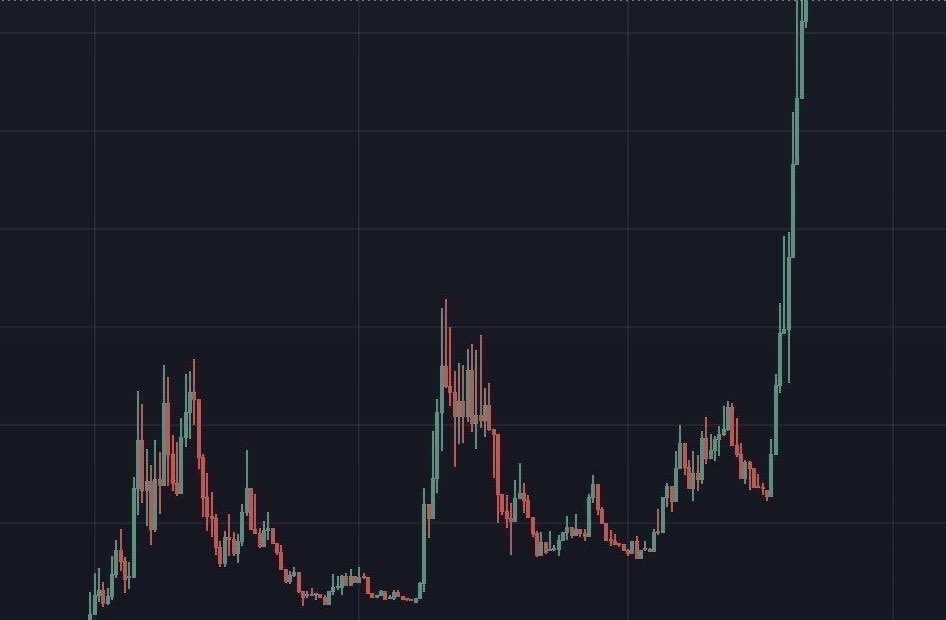

“at the moment, i really enjoy the dex and the perp market because it’s such a competitive space, where you have Hyperliquid, Lighter …” Paul recognizes that it is the most difficult product Somnia has to take on.
