Mario Figueiredo

1.4K posts


@fromdevoid

Software solutions for the Industrial Automation industry.

FromDeVoid Joined March 2026

66 Following 112 Followers

@mitchellh Some things just need to fail in order to be well understood. Trying to make some people understand that they are not engaging in a proven business solution, but in a live experiment without a predictable outcome, can sometimes be very hard.


I strongly believe there are entire companies right now under heavy AI psychosis and it's impossible to have rational conversations about it with them. I can't name any specific people because they include personal friends I deeply respect, but I worry about how this plays out.

I lived through the great MTBF vs MTTR (mean-time-between-failure vs. mean-time-to-recovery) reckoning of infrastructure during the transition to cloud and cloud automation. All those arguments are rearing their ugly heads again but now it's... the whole software development industry (maybe the whole world, really).

It's frightening, because the psychosis folks operate under an almost absolute "MTTR is all you need" mentality: "it's fine to ship bugs because the agents will fix them so quickly and at a scale humans can't do!" We learned in infrastructure that MTTR is great but you can't yeet resilient systems entirely.
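A rough sketch of why "MTTR is all you need" can mislead, using the standard steady-state availability formula MTBF / (MTBF + MTTR). The two systems and all of their numbers are hypothetical, picked only so that both report the same availability while one fails far more often than the other.

```python
HOURS_PER_YEAR = 24 * 365


def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the fraction of time the system is up."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def failures_per_year(mtbf_hours: float, mttr_hours: float) -> float:
    """Average number of failure/repair cycles that fit into one year."""
    return HOURS_PER_YEAR / (mtbf_hours + mttr_hours)


# Hypothetical systems, not measurements of anything real.
careful = {"mtbf_hours": 1000.0, "mttr_hours": 1.0}   # rarely breaks, slow fix
fast_fix = {"mtbf_hours": 10.0, "mttr_hours": 0.01}   # breaks often, quick fix

for name, system in (("careful", careful), ("fast_fix", fast_fix)):
    print(
        name,
        f"availability={availability(**system):.4%}",
        f"failures/year={failures_per_year(**system):.1f}",
    )
```

Both systems look equally healthy by the availability metric, yet the second one goes through roughly a hundred times as many incidents. Each of those incidents is a chance for something the quick fix does not cover.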

The main issue is I don't even know how to bring this up to people I know personally, because bringing this topic up leads to immediate dismissals like "no no, it has full test coverage" or "bug reports are going down" or something, which just don't paint the whole picture.

We already learned this lesson once in infrastructure: you can automate yourself into a very resilient catastrophe machine. Systems can appear healthy by local metrics while globally becoming incomprehensible. Bug reports can go down while latent risk explodes. Test coverage can rise while semantic understanding falls. Changes happen so fast that nobody notices the underlying architecture decaying.

I worry.


Smarter people than me may come up with all sorts of other things we should be changing in our current ecosystem.

But what we cannot do is put all this burden solely on the last link of the supply-chain. It is impractical for end users to vendor their dependencies.

Dependency trees have become too large, even on languages without package managers. It's a sign of how rich and populated our industry is. Package Managers exist to facilitate the distribution and integration of this complexity. We always perceived this entire ecosystem as a Good.

The fact that it is now under attack is not a sign it is bad, or evil. It is showing us we need to take care of the things that are not allowing us to take full advantage of it.


(Sorry for not making this a 🧵'ed post. These thoughts are coming to me as I type)

Additionally, in the context of trust and the inherent added responsibility that comes with it, suppliers of library code must be the ones facing the pressure to vendor their own dependencies. Not the end users looking to produce consumer products.

This seems obvious to me. Middle-of-the-chain products exist to empower the development of final products. They bear the brunt of absorbing and managing upstream complexity, so that downstream consumers are not forced to inherit it indiscriminately.

A library supplier is in a far better position to evaluate, constrain, vendor and audit the smaller set of dependencies it chooses to expose through its own product. Maybe not all of its own dependencies, but certainly a sizeable portion of them.

Demanding more of this from upstream seems to me something we should have started doing years ago, well before the intensification of supply-chain attacks. This is not costless: vendoring creates maintenance obligations of its own. But those obligations are better centralized at the point where dependency choices are being made than scattered across every downstream consumer that happens to inherit them.

In fact, if we had widespread adoption of this principle, it would become much easier for end developers to manage and pin their dependencies, even vendor them if they so choose.
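To make "pin" concrete, here is a minimal sketch of the check that sits at the core of pinning: record a SHA-256 digest when a dependency is reviewed, and refuse anything that no longer matches it. The function names and the sample bytes are hypothetical, and no particular package manager works exactly like this.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_pinned(data: bytes, pinned_sha256: str) -> bool:
    """Return True only if the data still matches the digest pinned at review time."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_hex(data), pinned_sha256.lower())


# Hypothetical artifact standing in for a reviewed dependency archive.
artifact = b"pretend this is a reviewed library archive"
pin = sha256_hex(artifact)  # recorded once, when the dependency is audited

print(verify_pinned(artifact, pin))                 # unchanged archive
print(verify_pinned(artifact + b" tampered", pin))  # modified archive
```

The fewer transitive dependencies a library exposes, the fewer of these pins each downstream project has to keep and re-audit.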


@ThePrimeagen. Vendoring is not the solution, and batteries-included programming languages aren't either. We cannot simply accept that we should remove improvements to the development process of the last two decades, like package managers and the open source web of trust, because they are permeable to supply-chain attacks.

The cost to developers and companies is too high. Not only do they need to support their own systems, they will now also have to integrate management of their dependencies, some of which are necessarily completely removed from their core business and technical expertise, while possibly even introducing additional bugs and security risks to their final product.

The cost to language developers is equally high and with little benefit to the user. Integrating a graphics library into my programming language library set will not make my language more usable and will go to waste for every user that needs or wants a different solution for their product. Even a different graphics library. And I will still have to pay the additional cost of maintaining that library dependency myself.

We don't want to go back to the 90s and early 00s. Instead, we must look at the web of trust that supports all of open source development today and see how it can be hardened and improved. The solutions to *mitigate* supply-chain attacks must come from here.

And in this space, some problems immediately stand taller than others.


@dhh Bah! A picture of the 2mbit screen would have made your post 10x better, and spared you a cringe flex tag.


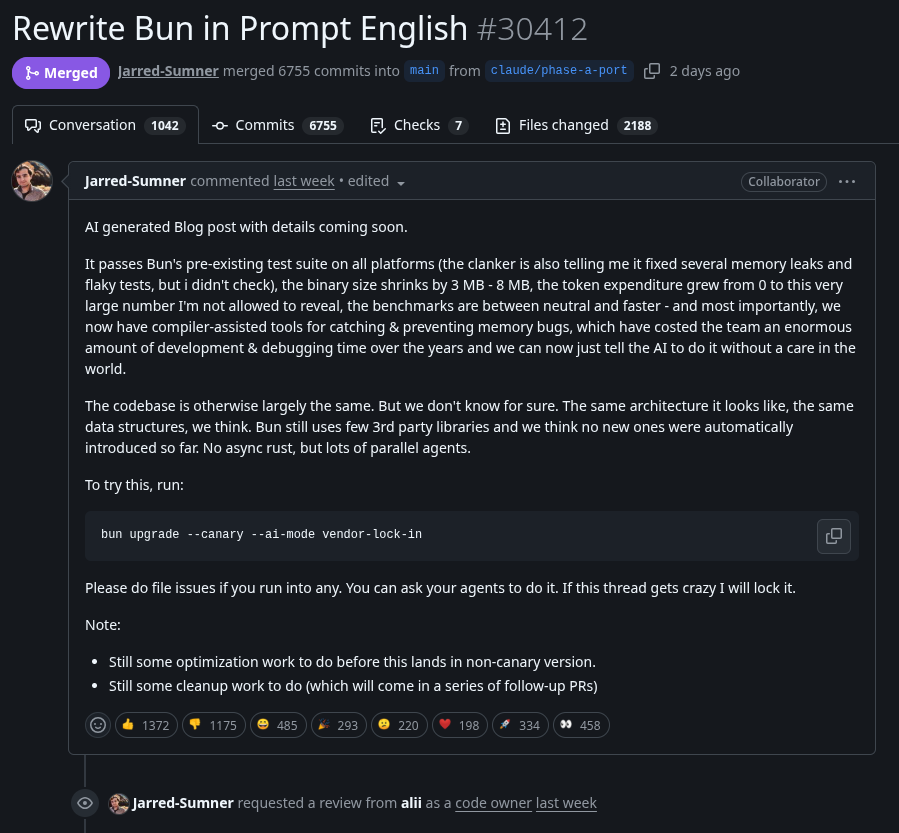

@FUCORY There's a word. It's called a port.

Not sure why you think there's anything new about this, with the exception of being driven by an AI.


Bun is fine. I can say that confidently as someone who has done this before at a smaller scale multiple times.

We shouldn’t even really call it a rewrite. We don’t have a word for this.

- bun didn’t touch the tests

- bun didn’t add or remove features.

- bun kept the semantics 1 to 1

In fact, we have already seen this done before successfully at a large scale for a major project. Typescript got rewritten in golang


> participant in the architectural conversation

That's LinkedIn lingo. Could you try not to?

At the component level, the AI is of little use as a reviewing tool. You can very easily identify violations yourself.

At the next level of code diagrams, that's where it could be of use. But you rarely want to design at this level, and you should allow development teams to come up with their implementation. They will then generate diagrams from realized code.

The AI still has an important role to play in design during the creation phase. But I cannot find any benefits as a code validation tool.


@neogoose_btw I thought they had a functional tests layer. Am I mistaken?


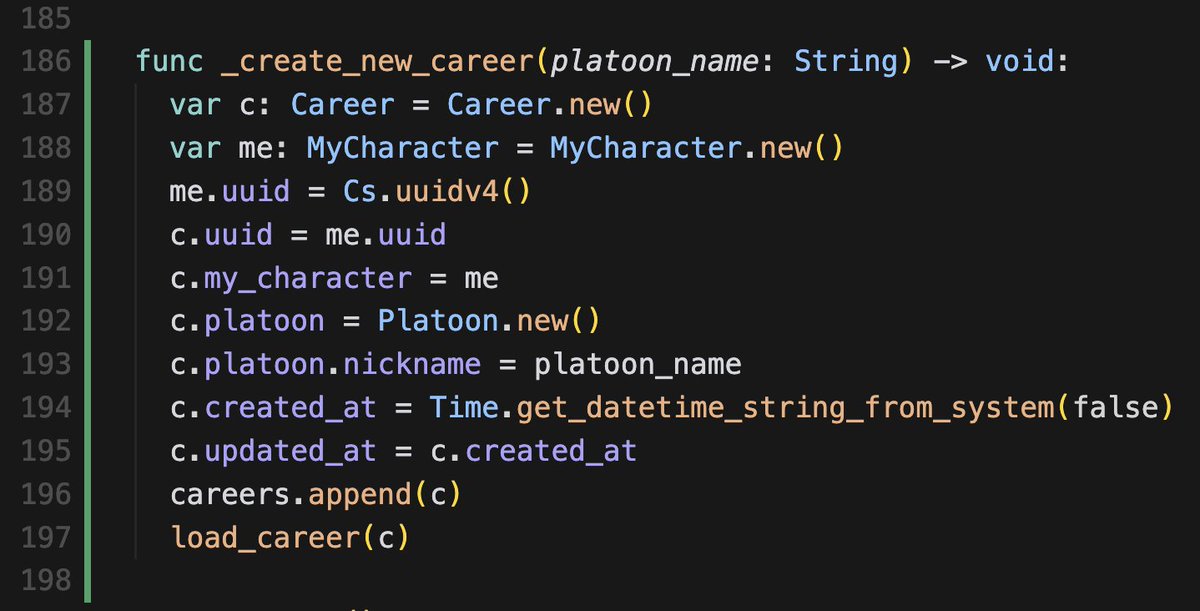

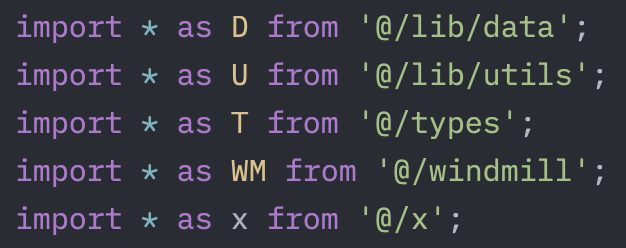

Well, you are tying a static name at the top of your file to a one- or two-letter acronym for the rest of your file.

It may work well for you. But not for anyone else unfamiliar with your code. And they are also the target audience, who will now have to remember that every time you reference the letter F, that means the FileSystem namespace.

The original post had a much more reduced and better focused scope. And that is perfectly fine. Your example is instead widely considered a bad practice.
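A small sketch of the readability point, written in Python because the screenshots are not available here. The module, alias and function names are all hypothetical stand-ins for the "F means FileSystem" pattern under discussion.

```python
# Terse style: a one-letter alias the reader has to memorise at the top of the file.
import os.path as P


def config_file_terse(home: str) -> str:
    # Far from the import line, nothing says what "P" stands for.
    return P.join(home, ".config", "app.toml")


# Explicit style: the call site names the module it uses.
import os.path


def config_file_clear(home: str) -> str:
    return os.path.join(home, ".config", "app.toml")


print(config_file_terse("/home/user") == config_file_clear("/home/user"))
```

Both functions behave identically. The only difference is how much a newcomer has to hold in their head to read the call site.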


@fromdevoid @jamonholmgren LOL, "wrong" is a pretty strong perspective - 'cause it's been working well for me for a LONG time in multiple large scale production projects. What is "wrong" with importing shared stuff into short names?


@EdgingtonC @jamonholmgren No. Just no!

OP was absolutely fine. But this is just wrong!


@ThePrimeagen as much as i am against it, maybe it’s time to regulate software engineering.


@valigo I always welcome these posts. It's like having a spotter telling us where to point our mute snipe gun. This is a public service. Thank you.


@TheGingerBill @Malix_Labs I paid almost twice that last year for the Xbox Elite and it's not nearly as good. £85 is a bargain.


@Malix_Labs It was £85. For what it is, it's actually extremely well priced. I was still using my original steam controller LAST NIGHT and that's 10+ years old.


My opinion on the Bun migration

The decision to auto-generate a Rust migration *is as bad* as the earlier decision to build Bun in Zig: a language still in beta, with intentional breaking changes in nearly every release.

The Rust migration itself is not the problem. Rust is a defensible choice. The problem is choosing to carry out that migration entirely through AI. For business users with serious concerns about stability, security, and long-term maintainability, that is a major red flag. It also reinforces a worrying pattern of rushed, poorly considered decisions by the people behind Bun.

Remember: only last week, this was supposedly “just an experiment,” (sic) and concerns about a Rust migration were dismissed as people “overreacting.” (sic)

Now Bun’s codebase is being presented as entirely AI-generated. That raises fundamental questions about human ownership, review, and curation of the project. Bun has entered a permanent AI dependency cycle, where future development is almost entirely shaped by AI, with each release cycle strengthening that dependency.

Anthropic’s motivation for acquiring Bun now seems clear: it wanted a high-profile project that could serve as a showcase for AI-assisted software development. If Bun succeeds, it can be cited as proof of AI’s power. If it fails, it can be quietly sidelined.

**Either way, Bun’s users are the ones who bear the risk. Not Anthropic, and not the Bun maintainers.**
