Beatriz(✱,✱)


@fury_beatriz

just a chill guy exploring, learning, building web3 — no rush, brick by brick. music & films. Contributor @axisrobotics

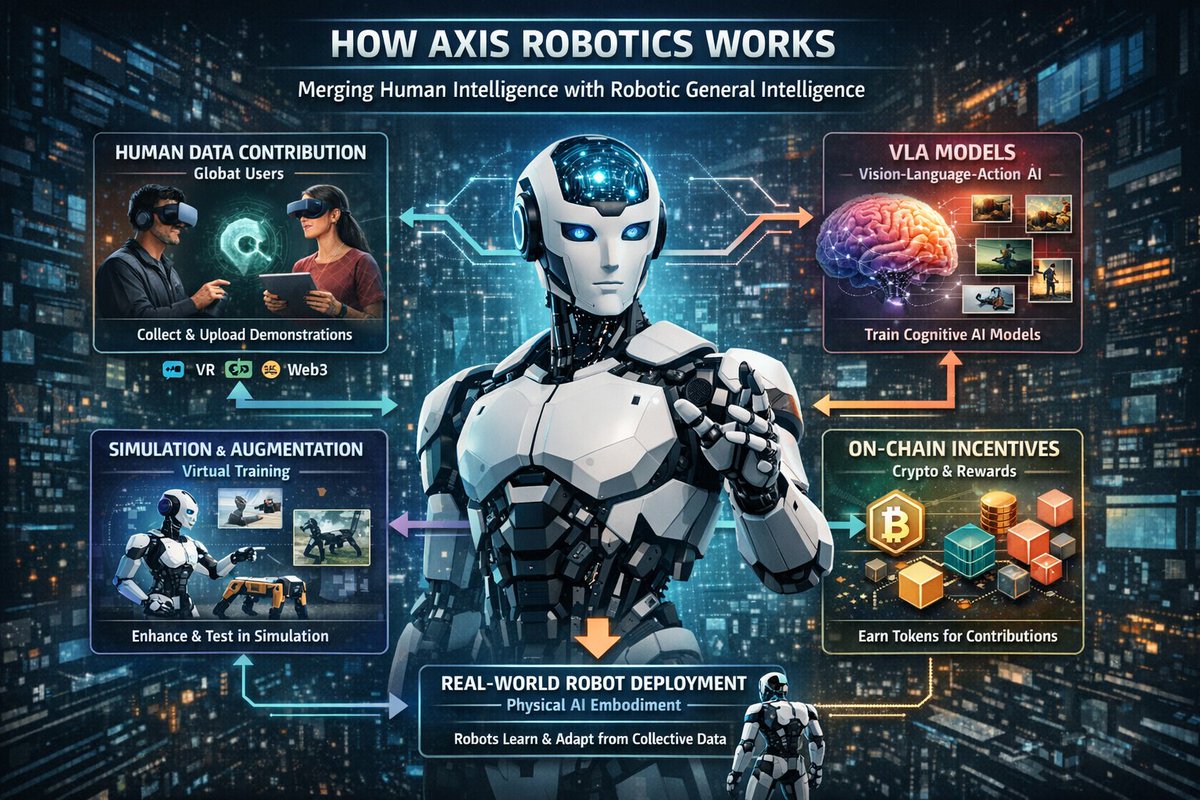

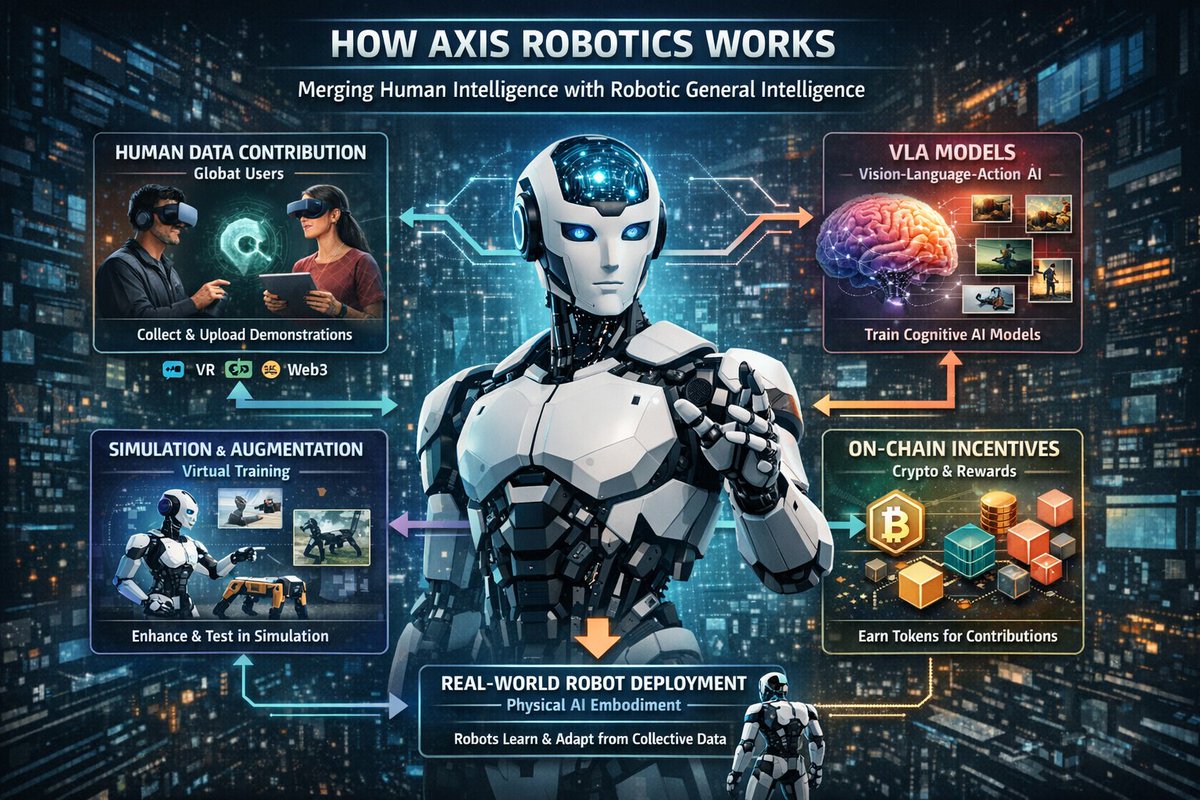

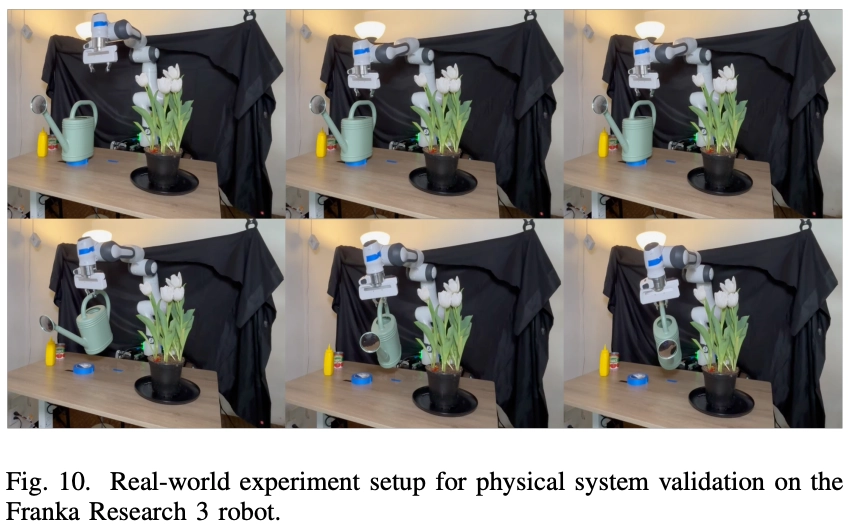

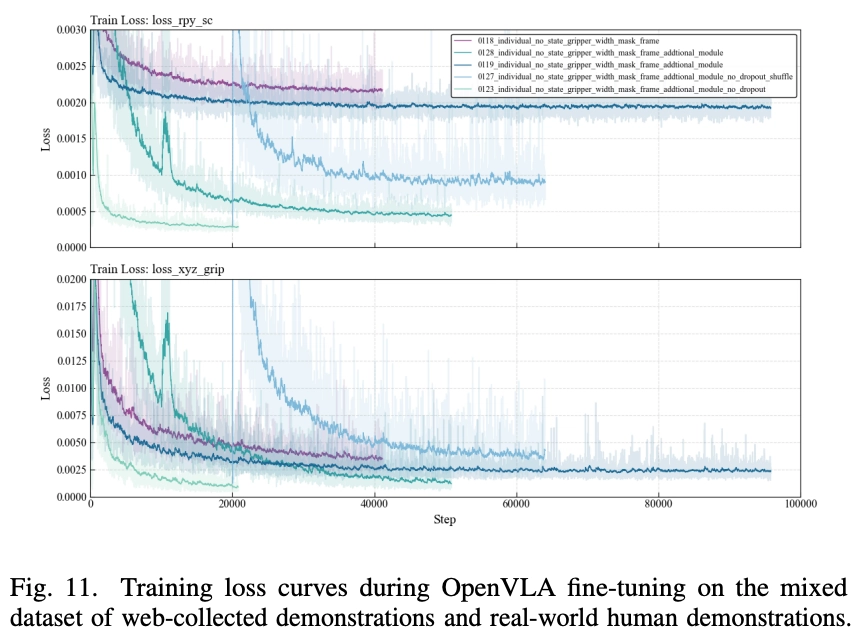

The Unseen Magic🪄

When you open your browser, use a keyboard or gamepad to control a robot arm on the Axis platform, and easily complete a task, it might feel like a lightweight web game. But that is just the tip of the iceberg🧊

For Physical AI, the current bottleneck is acquiring large-scale, high-quality demonstration data (trajectories) that actually generalizes to the real world. Today, we want to show you the data magic happening in the Axis Robotics backend after you complete a task on the frontend.

🪄 The Unseen Magic 1: Data Cleaning & Refinement

When humans control robots via a webpage, we aren't perfect. Our hands shake. We hesitate. We pause. If you feed this raw data to an AI model, the physical robot will move like it's glitching.

The moment you click "Task Complete," our backend data cleaning pipeline kicks in:

- Hesitation Removal: We automatically detect and delete the frames where you paused or hesitated.
- High-Frequency Smoothing: We apply mathematical filters to turn jerky human hand motions into smooth robot trajectories.
- Upsampling: Web browsers record slowly (6-8 Hz). We instantly upsample your data to 20 Hz, the frequency a real robot needs to react in real time.

The Result: Rough, raw inputs are polished into silky-smooth, physically plausible demonstrations (Fig. 1).

🪄 The Unseen Magic 2: Realistic Augmentation

A lightweight web frontend is perfect for accessibility, but it lacks the photorealistic rendering and visual diversity required to train robust Vision-Language-Action (VLA) models.

So, we send your single, clean web demonstration directly to our GPU servers. Here, we use an NVIDIA Isaac Sim backend to replay your actions and generate photorealistic, domain-randomized rollouts:

- Physics & Domain Randomization: We automatically generate diverse variations in object mass, friction, textures, and lighting (Fig. 2).

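To make the randomization idea concrete, here is a minimal sketch of sampling scene variations for one demonstration. Everything in it is an assumption for illustration: the `SceneParams` fields, the value ranges, the texture names, and the `make_variants` helper are hypothetical and are not the Axis backend or an Isaac Sim API. The simulator replay step itself is left out.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneParams:
    """One randomized scene configuration (hypothetical fields)."""
    mass_kg: float
    friction: float
    texture: str
    light_intensity: float


# Hypothetical texture names and ranges, chosen only for illustration.
TEXTURES = ("wood", "metal", "plastic")


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one random variation of mass, friction, texture, and lighting."""
    return SceneParams(
        mass_kg=rng.uniform(0.05, 0.5),
        friction=rng.uniform(0.3, 1.0),
        texture=rng.choice(TEXTURES),
        light_intensity=rng.uniform(0.5, 1.5),
    )


def make_variants(n: int, seed: int = 0) -> list[SceneParams]:
    """Return n scene variations; a fixed seed keeps the set reproducible."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]
```

Each sampled `SceneParams` would then drive one simulated replay of the same recorded actions, which is how a single demonstration can turn into many rollouts.
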
Your single demonstration is multiplied into a highly diverse, multimodal dataset ready for policy learning.

🤖 From Simulation to Reality

Through our end-to-end training-to-deployment loop, this refined and augmented dataset is used to fine-tune downstream VLA architectures (like OpenVLA).

The most exciting part? We deploy the resulting policy directly onto a physical Franka Research 3 (FR3) robotic arm in the real world. We successfully evaluate its ability to perform tasks like pick-and-place, pouring, and manipulating articulated objects, validating our entire pipeline.

The Axis Robotics Platform is a unified infrastructure that bridges accessible web-based teleoperation, GPU-accelerated realistic augmentation, and sim-to-real deployment. We built this massive backend complexity so that scalable, high-quality data collection could become universally accessible.
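
For the curious, the three cleaning steps from The Unseen Magic 1 (hesitation removal, smoothing, upsampling to 20 Hz) could look roughly like the sketch below. This is not the actual Axis pipeline. The post only says "mathematical filters", so the moving average, the linear interpolation, the `eps` threshold, and all function names here are assumptions. Frames are modeled as `(time_seconds, position_tuple)` pairs with strictly increasing times.

```python
from math import dist


def remove_hesitation(frames, eps=1e-3):
    """Drop frames that barely move from the last kept frame, and shift
    later timestamps earlier so the pause leaves no gap in time."""
    kept = [frames[0]]
    skipped = 0.0          # total pause time removed so far
    prev_t = frames[0][0]
    for t, pos in frames[1:]:
        if dist(pos, kept[-1][1]) < eps:
            skipped += t - prev_t      # this interval was a pause
        else:
            kept.append((t - skipped, pos))
        prev_t = t
    return kept


def smooth(frames, window=3):
    """Centered moving average over positions (an assumed filter choice).
    The window shrinks at both ends of the trajectory."""
    half = window // 2
    out = []
    for i, (t, _) in enumerate(frames):
        nbrs = [p for _, p in frames[max(0, i - half): i + half + 1]]
        avg = tuple(sum(c) / len(nbrs) for c in zip(*nbrs))
        out.append((t, avg))
    return out


def resample(frames, hz=20.0):
    """Linearly interpolate positions onto a uniform time grid at `hz`.
    Needs at least two frames."""
    t0, t_end = frames[0][0], frames[-1][0]
    n = int((t_end - t0) * hz + 1e-9) + 1
    out, j = [], 0
    for k in range(n):
        t = t0 + k / hz
        # Advance to the segment [frames[j], frames[j + 1]] containing t.
        while j < len(frames) - 2 and frames[j + 1][0] <= t:
            j += 1
        (ta, pa), (tb, pb) = frames[j], frames[j + 1]
        w = (t - ta) / (tb - ta)
        out.append((t, tuple(a + w * (b - a) for a, b in zip(pa, pb))))
    return out


def clean_demo(frames):
    """Run the three steps in the order the post lists them."""
    return resample(smooth(remove_hesitation(frames)), hz=20.0)
```

Feeding `clean_demo` a recording sampled at about 8 Hz with a short pause in the middle returns evenly spaced 20 Hz frames with the pause cut out.
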