The prompt for the Erdos problem was basically just stating the problem. yikes cdn.openai.com/pdf/74c24085-1…

David Gasca

@gasca

🌲⛩️⛩️🌲 here for the AI Product @ Whatnot - ex @google, @twitter, etc.

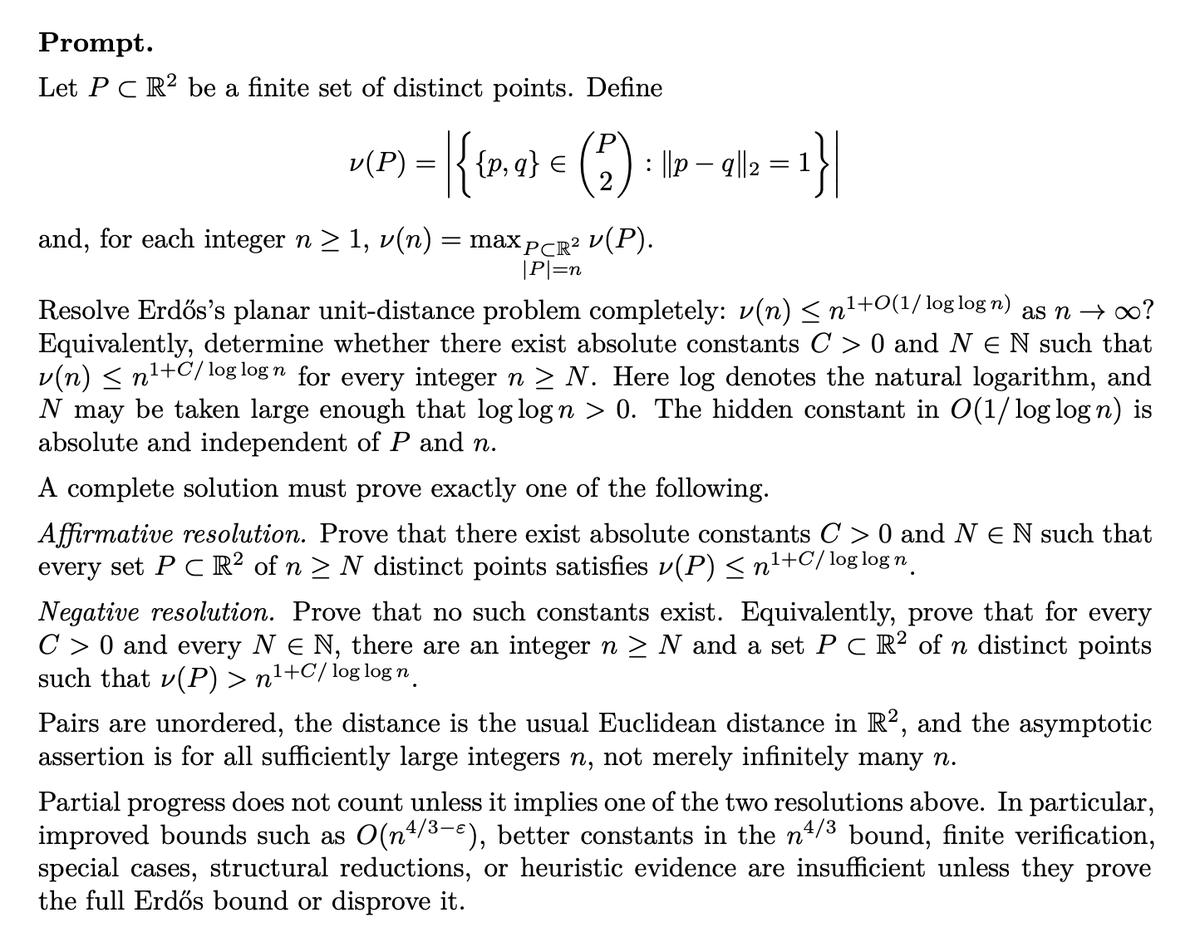

Today, we share a breakthrough on the planar unit distance problem, a famous open question first posed by Paul Erdős in 1946. For nearly 80 years, mathematicians believed the best possible solutions looked roughly like square grids. An OpenAI model has now disproved that belief, discovering an entirely new family of constructions that performs better. This marks the first time AI has autonomously solved a prominent open problem central to a field of mathematics.

Reference anything: Gemini Omni extends Gemini's native multimodality, allowing you to blend combinations of text, audio, image, and video inputs into a high-quality, consistent video.

Use Design Mode in Cursor 3 to annotate and target UI elements in the browser.

🚨 New experiment: I wanted to see how far AI could be useful as a researcher in a domain I don’t know well, where I couldn't just run tests and verify. As the Krishnan household is obsessed, I chose paleontology! Now, science in an unfamiliar field is hard because the output can be elegant, PhD-shaped, and completely wrong. Specifically, I tried to figure out whether some of the theories I had about functional convergence were true. Like "when climate gets more volatile, do ecosystems become more similar?"

----

First, lessons learnt:

1/ Models LOVE drifting into easier versions of your question over multiple rounds. You ask hard question A. It quietly solves tractable question A′. Then it returns a beautiful chart.

2/ You need to spend multiples of the time verifying any response that you spent asking the question. This needs huge amounts of process governance (since the answers can't be verified) and general sense checking (does that even sound right?).

3/ You have to clean your workspace regularly. Models HATE deleting anything, and you need to shout at them ad infinitum to make this happen. It's necessary so that later runs aren't confusing.

4/ Models absolutely adore mediocrity! They want to only do the inoffensive, unobtrusive, simple tasks that won't get them yelled at. Even when told not to! It's extraordinarily hard to get them to be bold.

5/ In a way it makes sense, because they have terrible attention to detail. They missed clades a lot or misstated the hypotheses halfway through. This is hard to fix when you don't know the domain, and requires constant vigilance!

6/ Models also think they can't do things that they can, and constantly say some analyses are a bad idea (see above re boldness). You have to tell them to stfu and calculate. They're so damn timid!

7/ Mainly for this reason, you HAVE to use multiple models to get the best results. They shock each other out of some basins and are useful tools to sense check.

-------

The specific results were cool too btw. I did find out there is a "labour market convergence" during volatile times, where ecologies converged to different animals doing similar jobs at different times. And a prediction that you'd see more of, e.g., filter feeders during volatile times vs large chasing predators! Though this is true mostly in the Mesozoic! (I also disproved some theories, like roles becoming "interchangeable" across clades.)

---

I'd written a few years ago that "Analysts" are coming, much in the way "Computers" became a machine. We're there now. Any curiosity you have can now be analysed with data. Any ideas you have about the world, you can now throw intelligence at to test them out. It requires patience and practice, which is good, but I don't think we've nearly started to get to grips with this fact of reality!

Essay: strangeloopcanon.com/p/the-spinosau…