Gerard C.L.

1.6K posts

@gerardcl

tweeter out of curiosity... looking for answers... unhurried but without pause :)

Frei-Weinheim, Deutschland Joined January 2011

920 Following 190 Followers

@Paupuuu By the way! You all need to move to the fediverse!!! Decentralization is the future!!! fediverse.party (example: mastodon instead of twitter)

Unfortunately @m_ou_se's books didn't arrive on time, but she'll sign copies people bring themselves. Also, the first 10 people to DM us their email addresses will get a copy sent from @OReillyMedia

Gerard C.L. retweeted

The Ongoing Case For Open Source LLMs

Custom LLMs, long context, and efficient inference

Some folks believe that training open-source LLMs is a losing battle and a complete waste of time.

They argue that the gap between closed models like GPT-4 and open models like Llama will widen and these open-source models may never catch up.

Yes, closed models like Google's Gemini or OpenAI's Gobi promise to be far more powerful than GPT-4, so what hope does open source have?

To start with, inference on GPT-4 is very expensive. These very large models may be performant, but they aren't cost-effective. At Abacus, we routinely use fine-tuned versions of Llama-2 and smaller models when we need to run 1M+ API calls a day for standard enterprise applications such as Q/A, summarization, and NLP at scale. GPT-4 would cost > $100K a day in these cases.
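A rough sketch of the arithmetic behind that figure, not Abacus's actual numbers. The per-token prices below are what GPT-4's 8K list prices are understood to have been in 2023, and the token counts per call are purely hypothetical; `daily_cost` is an illustrative helper, not part of any API.

```python
# Back-of-the-envelope daily cost for a high-volume GPT-4 workload.
# ASSUMPTIONS: 2023 GPT-4 8K list prices and made-up token counts per call.
PROMPT_PRICE_PER_1K = 0.03      # USD per 1K prompt tokens (assumed)
COMPLETION_PRICE_PER_1K = 0.06  # USD per 1K completion tokens (assumed)


def daily_cost(calls_per_day: int, prompt_tokens: int, completion_tokens: int) -> float:
    """Return the estimated daily spend in USD when every call has the same size."""
    per_call = (
        prompt_tokens / 1000 * PROMPT_PRICE_PER_1K
        + completion_tokens / 1000 * COMPLETION_PRICE_PER_1K
    )
    return calls_per_day * per_call


# Hypothetical workload: 1M calls/day, 2,500 prompt and 500 completion tokens each.
estimate = daily_cost(1_000_000, 2_500, 500)
print(f"${estimate:,.0f} per day")
```

Under these assumptions each call costs roughly ten cents, so a million calls a day lands just above the $100K mark; shorter prompts or a cheaper model scale the total linearly.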

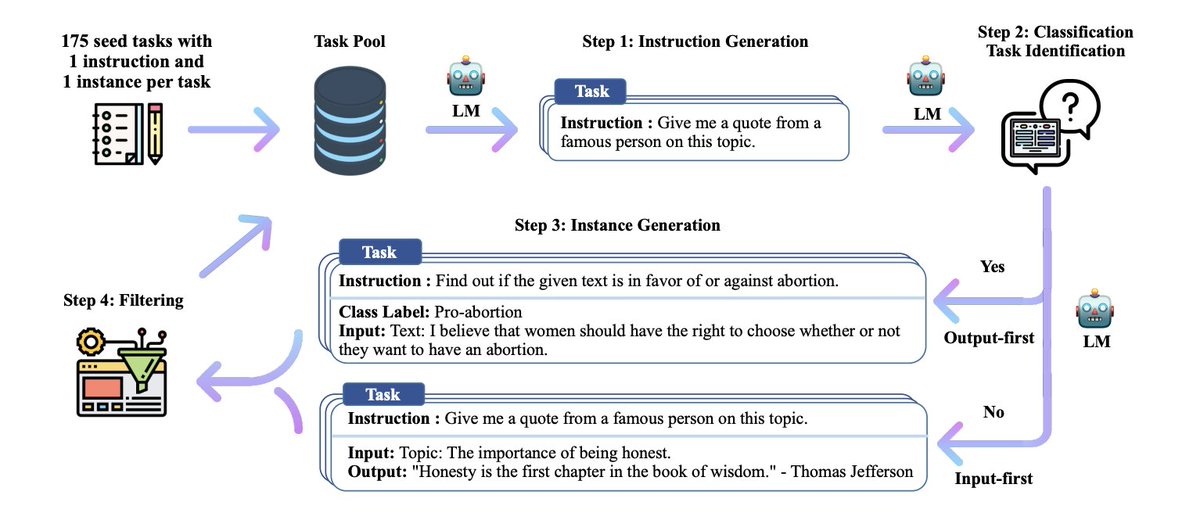

Instruct-tuned LLMs can match the performance of GPT-4 for a specific task. Instruction tuning is a technique that aims to improve the capabilities and controllability of LLMs. It involves further training these models on a dataset consisting of (instruction, output) pairs in a supervised manner. This bridges the gap between the next-word prediction objective of LLMs and the users' objective of having LLMs adhere to human instructions.
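As a minimal sketch of what training on (instruction, output) pairs can look like at the data level: the prompt template, the whitespace tokenizer, and the helper names `encode` and `build_example` are all illustrative assumptions, not any library's API. Masking the instruction tokens out of the loss is one common choice rather than a requirement of the technique.

```python
# Toy sketch: turning (instruction, output) pairs into supervised training examples.
# ASSUMPTIONS: the whitespace tokenizer and the prompt template are stand-ins.
IGNORE_INDEX = -100  # label value that PyTorch's cross-entropy loss skips by default


def encode(text, vocab):
    """Map whitespace-separated tokens to integer ids, growing the vocab as needed."""
    return [vocab.setdefault(tok, len(vocab)) for tok in text.split()]


def build_example(instruction, output, vocab):
    """Concatenate prompt and output; only the output tokens carry a training label."""
    prompt_ids = encode(f"### Instruction:\n{instruction}\n### Response:\n", vocab)
    output_ids = encode(output, vocab)
    return {
        "input_ids": prompt_ids + output_ids,
        # Prompt positions are masked so the loss covers the response only.
        "labels": [IGNORE_INDEX] * len(prompt_ids) + output_ids,
    }


vocab = {}
pairs = [
    ("Classify the sentiment: great movie", "positive"),
    ("Extract the person name: Ada wrote the report", "Ada"),
]
examples = [build_example(instruction, output, vocab) for instruction, output in pairs]
```

Each example keeps the full sequence as model input while the labels expose only the desired response, which is how the next-word objective gets pointed at following the instruction.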

For example, we have instruct-tuned open-source models for tasks like Q/A, NER, and classification. These instruct-tuned models are better at generalizing the task to new data and can do so in a resource-efficient manner.

Another shortcoming of currently available closed models like GPT-4 is that they have relatively short context lengths. The default is 8K tokens, which means you can't pass the model a large document and ask it to extract results from it.

Luckily the open-source community has been busy solving practical problems like this. Earlier this week, the paper LongLoRA introduced an ultra-efficient fine-tuning approach to significantly extend the context windows of pre-trained LLMs.

LongLoRA extends LLaMA2 7B from a 4k context to 100k, or LLaMA2 70B to 32k, on a single 8x A100 machine, and a basic implementation takes only 2 lines of code.

Open-source LLMs have been the focus of the GPU-poor, and constrained resources typically have magical effects: efficient, elegant, and simple innovations that solve the problem!

Open-source LLMs have emerged as cheap and efficient alternatives for enterprise AI use cases and will continue to play an important role in the space.

Some have argued that open-sourcing LLMs is dangerous and they may be misused by bad actors.

There is no historical precedent for this argument.

Traditionally, open-source technology has spurred innovation, transparency, and the creation of safe and robust systems. Linux triumphed over Unix in the OS world, largely because it is open-source and has a huge developer community.

Open source promotes collaboration, community oversight, rapid iteration, and benchmarking, all essential for responsible AI development. Open-source developer communities tend to be great at detecting and plugging vulnerabilities.

Disappointingly, big players like OpenAI (despite their name) and Google haven't open-sourced a lot of their technology. Luckily for the open-source community, Meta has created accessible open-source LLMs. Despite Meta open-sourcing the powerful 70B Llama-2, the doomsday scenarios outlined by the anti-open-source crowd haven't come true.

Finally, multimodal LLMs (MLLMs) are around the corner, and if the GPU-rich won't open-source an MLLM, we can always enhance an existing open-source LLM and convert it into a multimodal model.

In summary, open-source LLMs play a role in the real-world application of AI and are crucial for the democratization of this technology, transparency, and AI alignment.

Check out Fish Folk by Erlend Sogge Heggen on @Kickstarter, a project with solid foundations! kickstarter.com/projects/erlen…

Gerard C.L. retweeted

@AjSCFarners #SCFarners @TelefonicaTech #movistar good morning, can you explain the communications outages that occurred on the evening of the 6th for almost an hour and again today, the 12th, since 12 noon? Not even the mobile antennas were working. Thank you, this is very serious.

@AjSCFarners good morning, can you explain the communications outages that occurred on the evening of the 6th for almost an hour and again today, the 12th, since 12 noon? Not even the mobile antennas were working. Thank you, this is very serious.

Gerard C.L. retweeted

Join me in supporting online privacy, free speech, and digital access. @EFF has fought for tech users for over 30 years, and it's more important now than ever before. eff.org/join

Gerard C.L. retweeted

1/5 Hi. Could you read this? It's important.

This is Amal, my dog. A hunter had abandoned her for being "no use". She was 7 months old. He threatened me: either I took her with me, or he would shoot her dead because she was "bothering" his "useful" dogs (she only wanted company) #NiUnaPotaEnrere

Gerard C.L. retweeted

Today and tomorrow, one of the most spectacular phenomena😮 to occur at #Montserrat ⛰️ will take place. It is the Flor de Sol, the sunset☀️ that can be observed through the Roca Foradada from📍El Vilar, in the municipality of #castellbellielvilar. A phenomenon unique in the world #FlordeSol2023

Gerard C.L. retweeted

The debate over public healthcare does not come down to "I can afford private care" or "I can't", as many believe. Here are some nuances based on my experience living in #EEUU for more than a decade:

1. When healthcare is private, you are not a patient but a customer. Important.👇🏽

Gerard C.L. retweeted

Never forget Lise Meitner.

She led the discovery of nuclear fission in 1938, and when the Nobel committee decided to honor this key scientific advance, the prize went only to her collaborator Otto Hahn, leaving her out.

Today is February 11, #DiaDeLaMujerYLaNinaEnLaCiencia

Gerard C.L. retweeted

Can a lie alter the historical record?

Today on #FluzoDiscursos we saw how there has recently been an attempt to falsify the figure of Marie Curie in order to defend present-day ideologies.

In this THREAD I give you more details about this dangerous historical manipulation.

⚗️🧵

Gerard C.L. retweeted

Epilogue

I have the enormous honor that this hoax about Marie Curie was debunked as a result of a thread of mine from a few years ago that went notably viral.

From there, the folks at @maldita did the great work of taking this whopper of a hoax apart.

maldita.es/malditobulo/20…