Pinned Tweet

Muhammad Saad

2.5K posts

@ghost_devs

I build AI agents & automation systems | ML · RAG · LangChain · n8n | Sharing what works (and what breaks)

Azad Kashmir 🍁 Joined May 2025

135 Following · 48 Followers

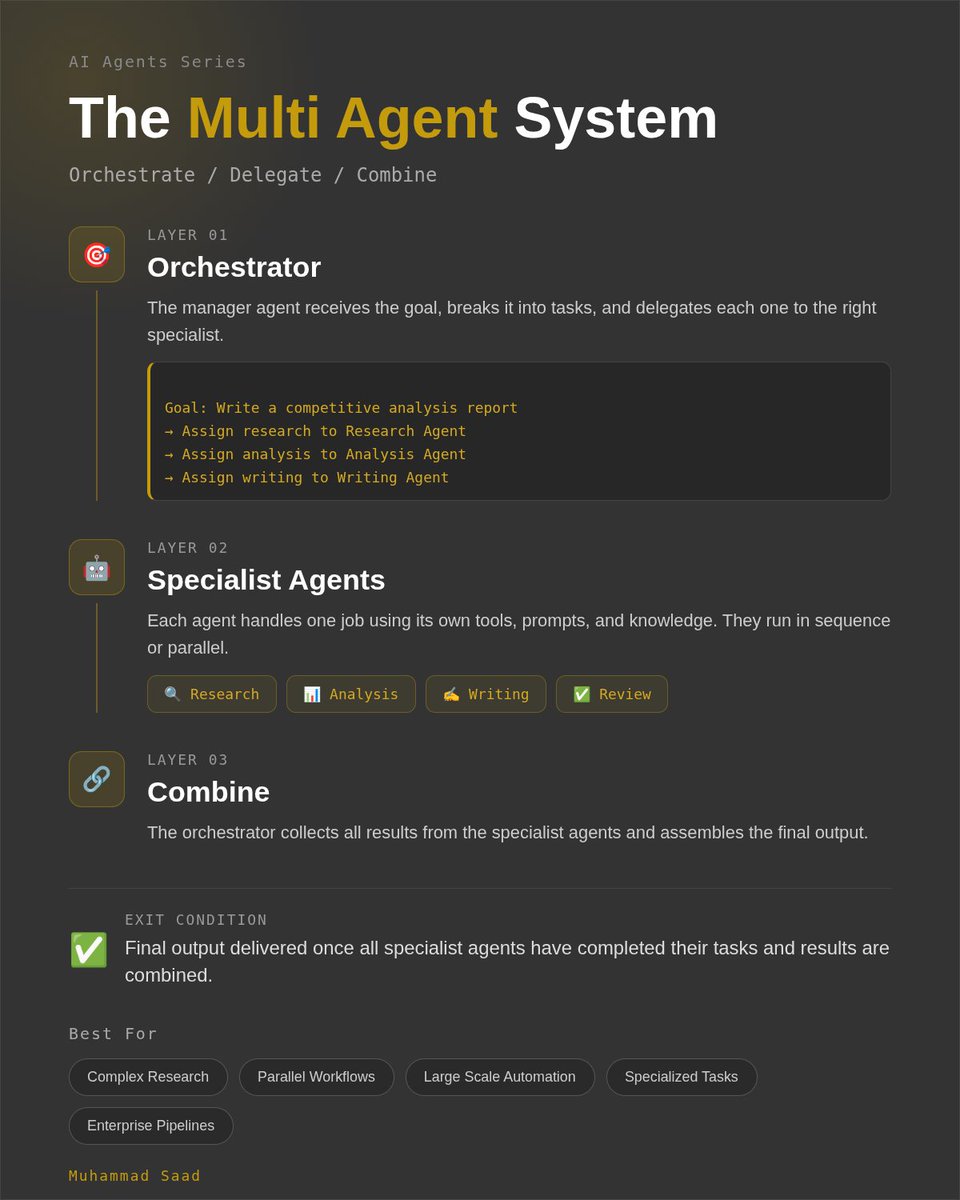

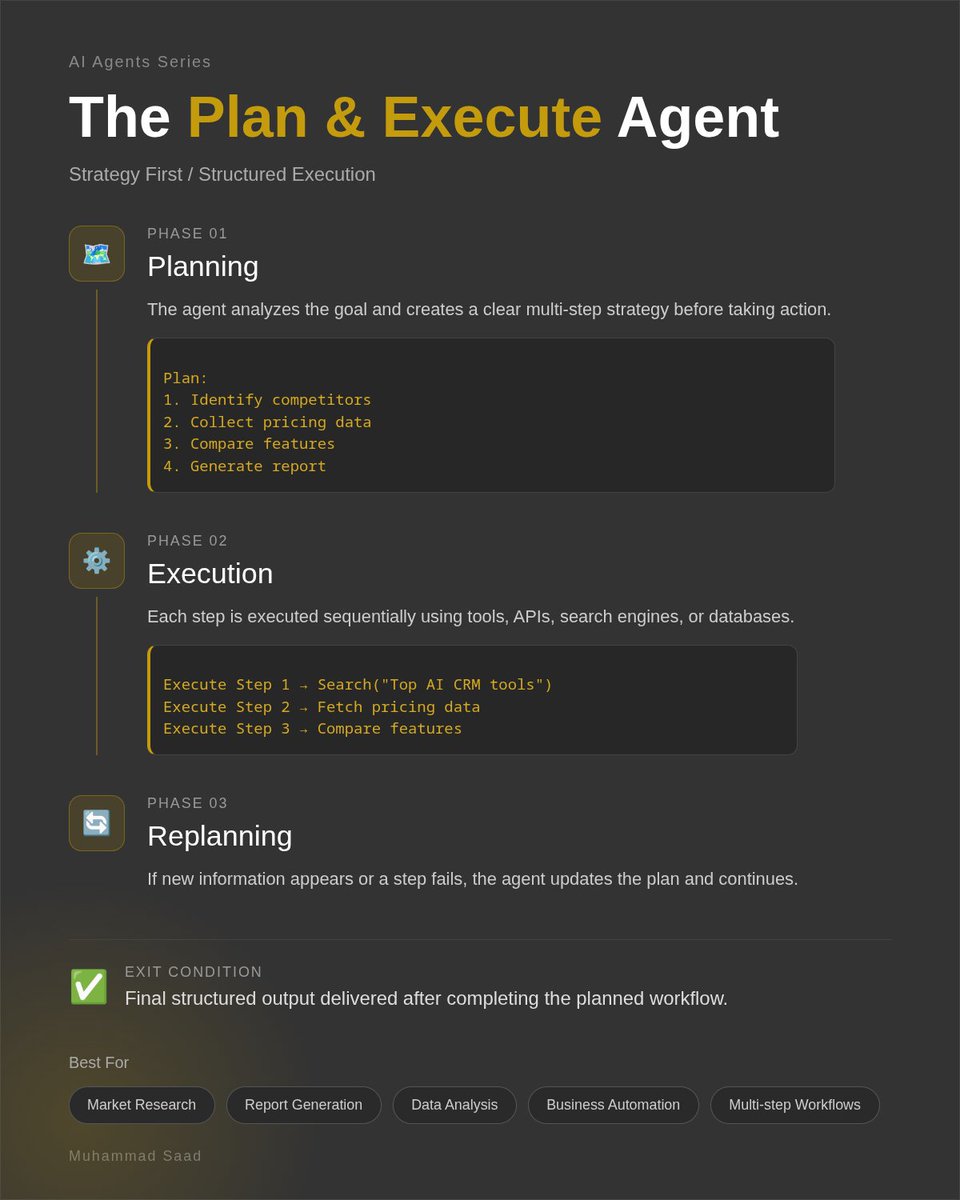

@GithubProjects That is a great change, creating a team out of Claude Code. My concern is how this can all be controlled properly. I guess I need to dive in.

@MinChonChiSF I’m with you on this. AI has already changed the landscape. The layoffs aren’t always loud, but they’re happening.

I think now it’s really about learning how to use AI and finding opportunities to move forward with it.

@brathocha That’s the real difference. Humans create from lived experience. AI can mimic patterns, but it doesn’t feel them and that shows.

AI struggles to replicate the depth of human internal conflict, leading to uninspired ideas. Even AI-generated speaker pitches at conferences can feel hollow, lacking the 'soul' that comes from genuine struggle and originality. #AI #Creativity #Tech

@SunookYoon To be fair, the problem isn’t the tools. It’s that automation still needs structure.

Agents reduce the effort but someone still has to think through the workflow.

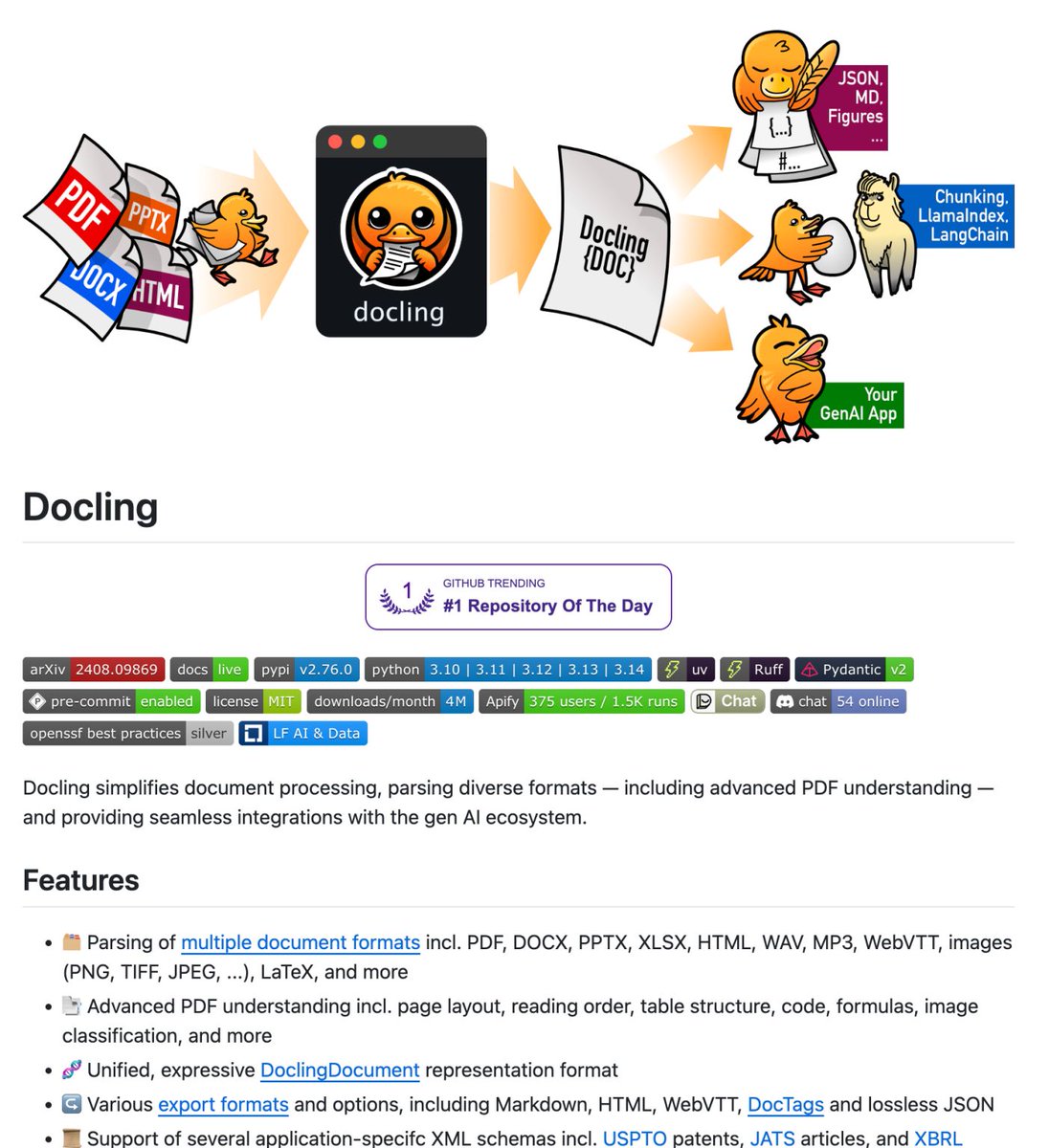

@dr_cintas This is huge.

Docling lets AI agents read and reason over PDFs, slides, images, audio, and code blocks. Clean Markdown, JSON, or HTML output ready for your LLM. 100% open-source.

This open-source tool gives your AI agents the ability to read, parse, and understand ANY document format.

It's called Docling. It converts PDFs, DOCX, PPTX, XLSX, audio files, images, LaTeX, and more into clean structured data your LLM can actually reason over.

→ Understands page layout, tables, formulas, and code blocks

→ Exports clean Markdown, HTML, or JSON ready for any LLM pipeline

→ Native MCP server for direct agent integration

→ Plug-and-play with LangChain, LlamaIndex, CrewAI & Haystack

It also just got a production-grade 258M vision-language model that reads an entire page in one pass.

100% Open Source.
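For anyone who wants to try the conversion step, here is a minimal sketch based on Docling's published quick start. It assumes `pip install docling` has been run; the wrapper name `pdf_to_markdown` is mine, and the import is kept inside the function so the snippet still loads on a machine without the package.

```python
def pdf_to_markdown(source: str) -> str:
    """Convert a local path or URL to Markdown with Docling.

    Assumption: the docling package is installed. The wrapper itself is
    illustrative and not part of Docling.
    """
    # Imported lazily so this module loads even where docling is missing.
    from docling.document_converter import DocumentConverter

    converter = DocumentConverter()
    result = converter.convert(source)
    return result.document.export_to_markdown()
```

Calling `pdf_to_markdown("report.pdf")` with a file of your own should return Markdown text you can drop straight into a prompt.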

@FynCas This does look like a solid agent for handling multiple social media platforms.

@ingliguori These are solid phases for learning agentic AI step by step, and they can keep you from wandering from topic to topic.

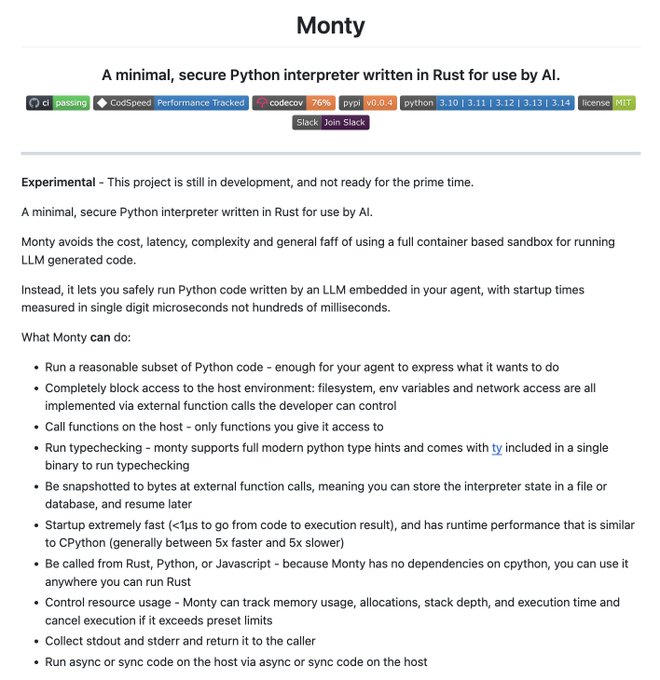

@sukh_saroy This solves the biggest bottleneck for AI agents running Python code safely.

No containers, no delay, full control. Monty is wild.

🚨Breaking: Someone just open sourced a Python interpreter written in Rust and it's wild.

It's called Monty. And it's not a sandbox.

It's a minimal, secure Python runtime built specifically for AI agents -- and it starts in about 0.06 ms (roughly 60 microseconds).

Here's what this thing does:

→ Runs Python code written by LLMs with zero sandbox overhead

→ Completely blocks filesystem, env vars, and network unless YOU allow it

→ Starts in 0.06ms vs Docker's 195ms and Pyodide's 2,800ms

→ Snapshots execution state mid-flight -- pause, serialize, resume later

→ Runs typechecking with `ty` baked into a single binary

→ Calls from Python, Rust, or JavaScript -- no CPython dependency

Here's the wildest part:

Docker takes 195ms to start. A sandboxing service takes 1,033ms.

Monty takes 0.06ms.

That's not a rounding error. That's a different category of tool entirely.
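To put those startup figures side by side, here is a quick back-of-the-envelope calculation. It uses only the numbers quoted in the post (0.06 ms, 195 ms, 1,033 ms, 2,800 ms); nothing is measured here, and the variable names are mine.

```python
# Startup times in milliseconds, copied from the post (not measured here).
startup_ms = {
    "Monty": 0.06,
    "Docker": 195.0,
    "Sandboxing service": 1033.0,
    "Pyodide": 2800.0,
}

baseline = startup_ms["Monty"]

# How many times slower each option starts compared with Monty.
slowdown = {name: ms / baseline for name, ms in startup_ms.items()}

for name, factor in slowdown.items():
    print(f"{name}: {startup_ms[name]} ms, about {factor:,.0f}x Monty")
```

On the quoted figures, Docker's startup is about 3,250 times Monty's, which is over three orders of magnitude.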

Every AI agent running today has the same problem: you either run LLM code directly on your host (YOLO Python -- zero security) or you spin up a container and wait 200ms per call.

Monty closes that gap. One import. No daemon. No image pull. No container escape risk.

The pydantic team built this to power code-mode in PydanticAI -- where LLMs write Python instead of making tool calls, and Monty executes it safely.

Cloudflare and Anthropic are already publishing on this exact paradigm.

5.2K GitHub stars. Already trending.

100% Open Source. MIT License.

(Link in the comments)

This AI Agent LITERALLY replaces your entire outreach system….

– Verifies every email

– Scrapes their website & LinkedIn

– Writes a personalized first line

– Auto-sends the email

– Books me calls on autopilot

It’s like having a full-time SDR who doesn’t sleep or complain.

Repost + Like + Comment “Agent” & I’ll send over the full system

(must be following so I can DM)

@VikramVerm25510 This is a great breakdown.

Modern AI systems work in layers: retrieval, execution, tool connectivity, and multi-agent coordination. Understanding this is key to building effective AI.

RAG, AI Agents, MCP, and A2A aren’t competitors.

They’re different layers of the same AI system.

Once you see this mental model, modern AI architecture becomes much clearer 👇

1️⃣ RAG = Better answers

RAG is about retrieval + grounded generation.

Flow:

User question → retrieve relevant data → add context → LLM generates answer with sources.

Use RAG when you need:

• answers from company docs

• reduced hallucinations

• citations and traceability

RAG solves the knowledge problem.

Once the model has enough context to answer, its job is done.
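The RAG flow can be sketched in a few lines. This is a toy under loud assumptions: the three documents are made up, retrieval is plain word overlap rather than embeddings, and no LLM is called; the final prompt is simply what you would send to one.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    source: str
    text: str


# Tiny in-memory corpus standing in for "company docs" (invented content).
DOCS = [
    Doc("handbook.md", "Employees accrue 20 vacation days per year."),
    Doc("security.md", "Rotate API keys every 90 days."),
    Doc("onboarding.md", "New hires receive a laptop on day one."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase words with trailing punctuation stripped."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(question: str, docs: list[Doc], k: int = 2) -> list[Doc]:
    """Rank docs by word overlap with the question; keep the top k that overlap at all."""
    q = tokenize(question)
    scored = [(len(q & tokenize(d.text)), d) for d in docs]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def build_prompt(question: str, context: list[Doc]) -> str:
    """Add retrieved context, tagged by source, so the answer can cite it."""
    lines = [f"[{d.source}] {d.text}" for d in context]
    return (
        "Answer using only these sources:\n"
        + "\n".join(lines)
        + f"\n\nQuestion: {question}"
    )


question = "How many vacation days do employees get?"
hits = retrieve(question, DOCS)
prompt = build_prompt(question, hits)
print(prompt)  # this string is what would be sent to the LLM
```

Note that the second hit (`security.md`) only matches on the word "days", a small reminder that naive retrieval happily returns irrelevant context.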

2️⃣ AI Agents = Do the work

Agents move beyond answering.

They plan, act, and iterate.

Typical loop:

Plan → Observe → Act → Reflect → Repeat

Use agents when tasks require:

• multi-step decisions

• tool usage

• executing workflows in real systems

• verifying outcomes

Agents solve the execution problem.
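A bare-bones version of that loop, with a hard-coded policy in place of an LLM. Everything here is hypothetical: the "real system" is a dict holding a shopping cart, and the four functions exist only to mirror the Plan → Observe → Act → Reflect steps.

```python
def plan(target: int) -> dict:
    """Plan: state what the agent is trying to achieve."""
    return {"want_items": target}


def observe(env: dict) -> int:
    """Observe: read the current state of the environment."""
    return len(env["cart"])


def act(env: dict, goal: dict, seen: int) -> str:
    """Act: make one change to the environment if the goal is not met yet."""
    if seen < goal["want_items"]:
        env["cart"].append(f"item-{seen + 1}")
        return "added"
    return "noop"


def reflect(env: dict, goal: dict) -> bool:
    """Reflect: verify the outcome against the goal."""
    return len(env["cart"]) >= goal["want_items"]


def run_agent(target: int, max_steps: int = 10) -> tuple[dict, int]:
    env = {"cart": []}
    steps = 0
    for _ in range(max_steps):  # hard cap so the loop cannot run forever
        steps += 1
        goal = plan(target)
        seen = observe(env)
        act(env, goal, seen)
        if reflect(env, goal):
            break
    return env, steps


final_env, steps_taken = run_agent(3)
print(final_env["cart"], steps_taken)
```

The `max_steps` cap is the part worth keeping in any real agent: without it, a planner that never satisfies `reflect` loops indefinitely.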

3️⃣ MCP = Standard tool access

MCP (Model Context Protocol) is the plumbing layer.

It standardizes how LLMs connect to tools and resources.

Think:

“One universal interface for AI → tools.”

Use MCP when you need:

• consistent access to APIs and services

• structured connections to SQL, CRM, files, etc.

• fewer custom integrations

MCP solves the tool connectivity problem.
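The "one universal interface" idea can be illustrated without any SDK. To be clear, this is not the MCP wire format or an MCP library: `ToolServer`, `list_tools`, and `call_tool` are hypothetical names, and the CRM and file backends are fake dicts.

```python
from typing import Any, Callable


class ToolServer:
    """Hypothetical stand-in: one way to list tools, one way to call them."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[str, Callable[..., Any]]] = {}

    def register(self, name: str, description: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = (description, fn)

    def list_tools(self) -> list[dict[str, str]]:
        """Describe every tool in the same shape, whatever sits behind it."""
        return [{"name": n, "description": d} for n, (d, _) in self._tools.items()]

    def call_tool(self, name: str, arguments: dict[str, Any]) -> dict[str, Any]:
        """Invoke a tool by name; unknown names return an error instead of raising."""
        if name not in self._tools:
            return {"ok": False, "error": f"unknown tool: {name}"}
        _, fn = self._tools[name]
        return {"ok": True, "result": fn(**arguments)}


# Two unrelated backends (fake CRM, fake file store) exposed the same way.
CRM = {"acme": {"owner": "dana", "stage": "trial"}}
FILES = {"notes.txt": "renewal due in March"}

server = ToolServer()
server.register("crm_lookup", "Fetch a CRM record by account id", lambda account: CRM.get(account))
server.register("read_file", "Read a text file by name", lambda path: FILES.get(path))

print(server.list_tools())
print(server.call_tool("crm_lookup", {"account": "acme"}))
```

The caller never needs to know whether a tool hits a database, an API, or a file; that uniformity is what cuts down on custom integrations.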

4️⃣ A2A = Agents working together

A2A (Agent-to-Agent) enables multi-agent systems.

It handles:

• agent discovery

• delegation and routing

• permissions and handoffs

• events and status updates

Use A2A when you have:

• multiple specialized agents

• distributed AI systems

• cross-team or partner automation

A2A solves the coordination problem.
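Discovery, delegation, and status events can be shown with a toy coordinator. This is not the A2A protocol itself: `Agent`, `Coordinator`, and the event strings are invented for illustration, routing is "first match wins", and permissions are left out entirely.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    skills: set[str]
    handle: Callable[[str], str]


@dataclass
class Coordinator:
    agents: list[Agent] = field(default_factory=list)
    events: list[str] = field(default_factory=list)

    def discover(self, skill: str) -> list[Agent]:
        """Agent discovery: who advertises this skill?"""
        return [a for a in self.agents if skill in a.skills]

    def delegate(self, skill: str, task: str) -> str:
        """Route the task to a capable agent and record status events."""
        candidates = self.discover(skill)
        if not candidates:
            self.events.append(f"rejected:{skill}")
            raise LookupError(f"no agent offers skill {skill!r}")
        agent = candidates[0]  # naive routing: first match wins
        self.events.append(f"assigned:{agent.name}")
        result = agent.handle(task)
        self.events.append(f"completed:{agent.name}")
        return result


hub = Coordinator(
    agents=[
        Agent("researcher", {"search"}, lambda task: f"notes on {task}"),
        Agent("writer", {"draft"}, lambda task: f"draft about {task}"),
    ]
)
output = hub.delegate("draft", "Q3 pricing")
print(output, hub.events)
```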

The real takeaway:

Modern AI systems often look like this:

RAG → grounding knowledge

Agents → executing tasks

MCP → connecting tools

A2A → coordinating agents

Different problems.

Different layers.

One architecture.

The real AI shift isn’t better prompts.

It’s better systems.

📍Follow me📍 @VikramVerm25510

[To get DM fast.]

#AI #RAG #AIAgents #LLM #AIArchitecture #GenAI

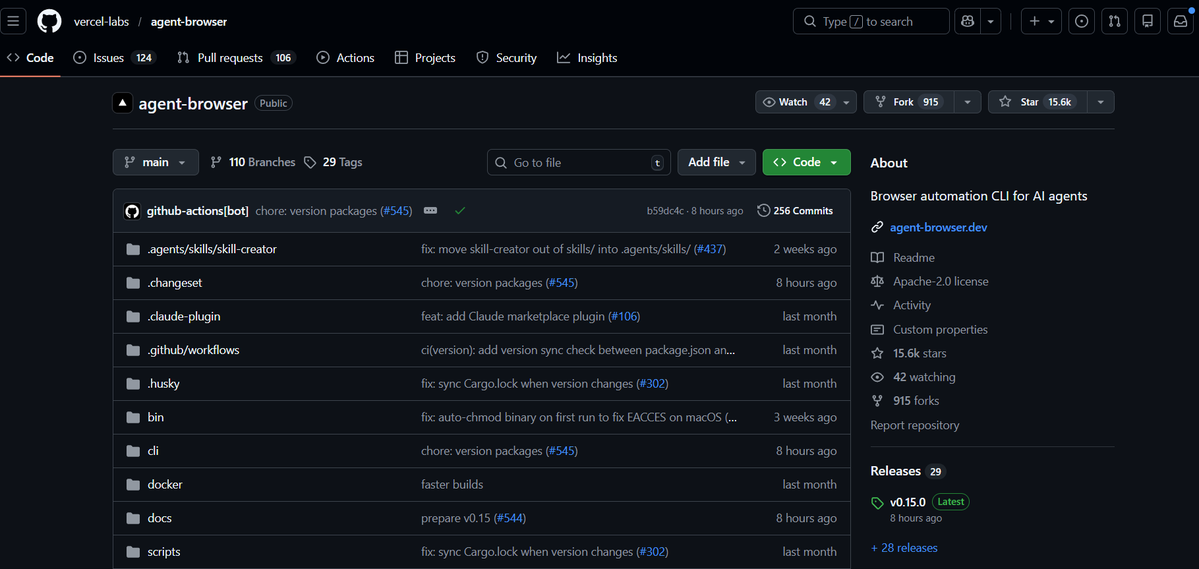

@_vmlops This is huge for AI agents.

Turning an AI into a real web user opens up fully autonomous workflows, web automation, and advanced data extraction like never before.

AI agents can now use a browser like humans

Vercel just dropped agent-browser, a CLI that lets AI:

• Navigate any website

• Click, type & interact with elements

• Take screenshots & extract data

• Persist sessions, cookies & auth

• Run complete workflows autonomously

Basically, you can turn any AI into a real web user

This changes everything for automation, scraping & autonomous agents

link - github.com/vercel-labs/ag…

@akshay_pachaar Memory changes everything.

With read-write memory, AI agents can learn from interactions, remember preferences, and improve over time. This is the bridge to truly adaptive AI.

RAG was never the end goal.

Memory in AI agents is where everything is heading. Let me break down this evolution in the simplest way possible.

RAG (2020-2023):

- Retrieve info once, generate response

- No decision-making, just fetch and answer

- Problem: Often retrieves irrelevant context

Agentic RAG:

- Agent decides *if* retrieval is needed

- Agent picks *which* source to query

- Agent validates *if* results are useful

- Problem: Still read-only, can't learn from interactions

AI Memory:

- Read AND write to external knowledge

- Learns from past conversations

- Remembers user preferences, past context

- Enables true personalization

The mental model is simple:

↳ RAG: read-only, one-shot

↳ Agentic RAG: read-only via tool calls

↳ Agent Memory: read-write via tool calls

Here's what makes agent memory powerful:

The agent can now "remember" things - user preferences, past conversations, important dates. All stored and retrievable for future interactions.

This unlocks something bigger: continual learning.

Instead of being frozen at training time, agents can now accumulate knowledge from every interaction. They improve over time without retraining.

Memory is the bridge between static models and truly adaptive AI systems.

But it's not all smooth sailing.

Memory introduces new challenges RAG never had, like memory corruption, deciding what to forget, and managing multiple memory types (procedural, episodic, and semantic).
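The read-write difference, plus the "what to forget" problem, fits in a small sketch. This is a hypothetical key-value store, not Cognee or any real memory framework: the three methods stand in for tools an agent could call, and the eviction rule (drop the oldest entry when full) is just one simple policy.

```python
from typing import Optional


class MemoryStore:
    """Hypothetical agent memory exposed as three tools: remember, recall, forget."""

    def __init__(self, max_items: int = 3) -> None:
        self.max_items = max_items
        self._items: dict[str, str] = {}  # dicts keep insertion order

    def remember(self, key: str, value: str) -> None:
        """Write path: overwrite stale values and evict the oldest entry when full."""
        self._items.pop(key, None)  # re-inserting moves the key to the newest slot
        self._items[key] = value
        while len(self._items) > self.max_items:
            oldest = next(iter(self._items))
            del self._items[oldest]

    def recall(self, key: str) -> Optional[str]:
        """Read path: the only thing plain RAG offers."""
        return self._items.get(key)

    def forget(self, key: str) -> bool:
        """Explicit deletion; returns whether anything was removed."""
        return self._items.pop(key, None) is not None


memory = MemoryStore(max_items=2)
memory.remember("language", "Python")
memory.remember("timezone", "UTC+5")
memory.remember("language", "Rust")  # preference changed: overwrite, do not duplicate
memory.remember("editor", "vim")     # store is full, so the oldest key is evicted

print(memory.recall("language"), memory.recall("timezone"), memory.recall("editor"))
```

Overwriting on update is what guards against one form of memory corruption here: without it, the store would hold both "Python" and "Rust" as the user's preferred language.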

Solving these problems from scratch is hard. If you want to build Agents that never forget, Cognee is an open-source framework (12k+ stars) to build real-time knowledge graphs and get self-evolving AI memory.

Getting started with Cognee is as simple as this:

`await cognee.add("Your data here")`

`await cognee.cognify()`

`await cognee.memify()`

`await cognee.search("Your query here")`

That’s it. Cognee handles the heavy lifting, and your agent gets a memory layer that actually learns over time.

I have shared the repo in the replies!

@TheAIHub111 I haven’t gone deep into OpenClaw yet, as I mostly work with LangGraph and other custom agent setups.

Is it worth diving in, and how are security concerns handled here?

My OpenClaw bot runs 6 AI agents 24/7:

- Finds local businesses without a website

- Builds a custom demo site for them automatically

- Sends outreach with the preview + payment link

- Handles objections and closes the sale

Most local businesses don't have a website. This system finds them, pitches them, and collects payment automatically.

Reply "OpenClaw" and I'll send you early access (must be following)

@Shruti_0810 This will be interesting to explore in depth, because hallucinations can still happen even with many countermeasures.

Developers won’t like this…😭

But 90% of “AI agents” we’ve been hyping are basically unstable interns with amnesia.

They hallucinate.

They loop.

They forget instructions.

A tiny 11-person team from Sweden just fixed ALL of it.

Meet the first AI agent engine that actually works:

Link : incredible.one

producthunt.com/products/incre…

AI agents just entered their real era.

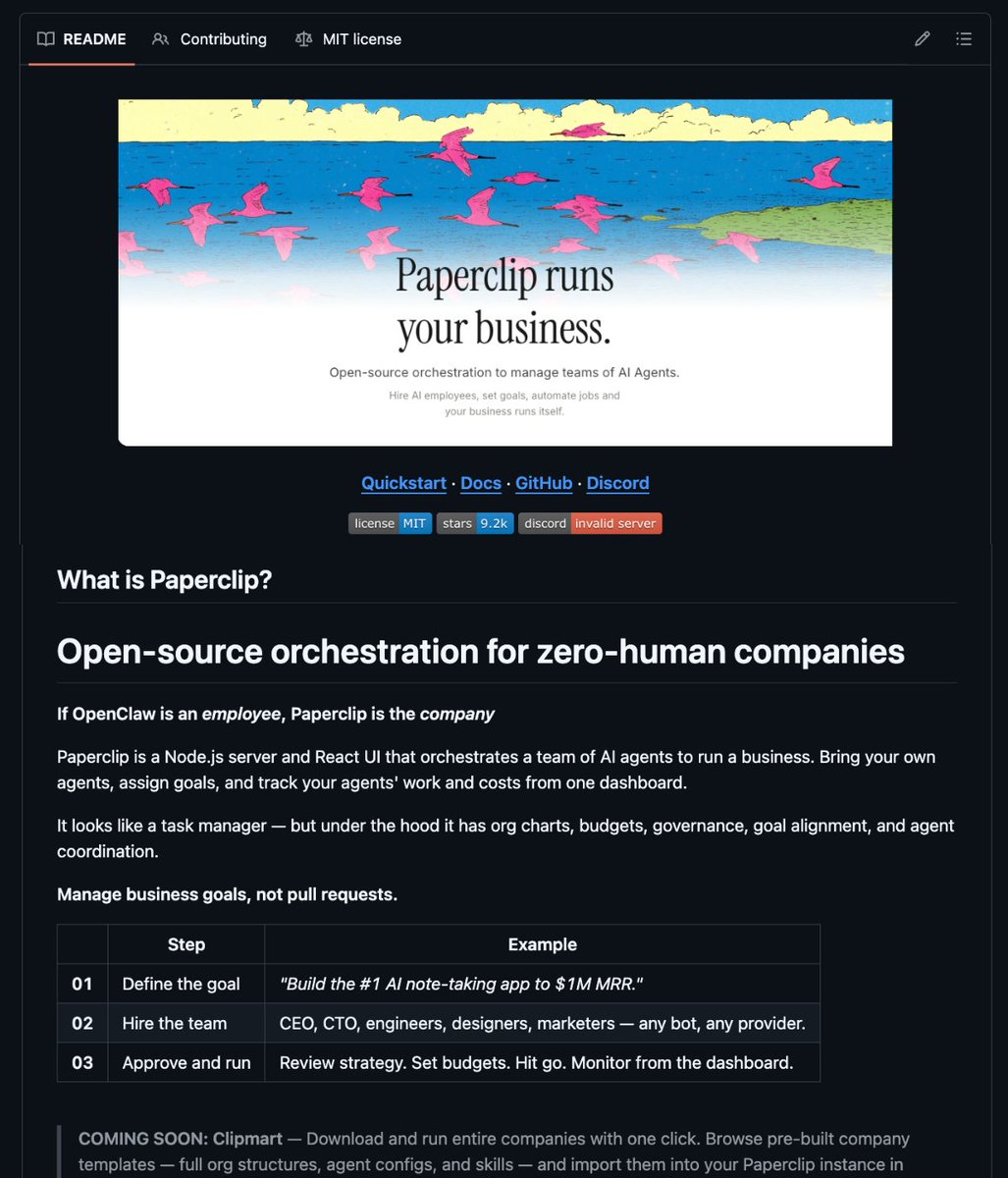

@hasantoxr OpenClaw as employee → Paperclip as company.

Zero-human ops finally get structure: budgets, reporting, 24/7 agents.

This could redefine autonomous AI workflows.

🚨 BREAKING: Someone just open-sourced the operating system for zero-human companies.

It's called Paperclip.

Think of it as the company layer on top of your AI agents.

If OpenClaw is an employee, Paperclip is the entire company.

What's inside:

→ Bring any agent (Claude Code, Codex, Cursor, OpenClaw) with real reporting lines

→ Give them org charts, titles, budgets, and goals

→ Monthly budgets per agent: when they hit the limit, they stop. No runaway costs

→ Full ticket system with tool-call tracing and immutable audit logs

→ Agents run 24/7 on heartbeats while you monitor from your phone
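The budget rule ("when they hit the limit, they stop") is easy to picture in code. This is my own sketch, not Paperclip's implementation: `AgentBudget`, `BudgetExceeded`, and the log format are hypothetical, and amounts are whole cents to sidestep floating-point rounding.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would push an agent past its monthly limit."""


class AgentBudget:
    def __init__(self, agent: str, monthly_limit_cents: int) -> None:
        self.agent = agent
        self.monthly_limit_cents = monthly_limit_cents
        self.spent_cents = 0
        self._audit: list[tuple[str, int, str]] = []  # append-only by convention

    def charge(self, cost_cents: int, note: str) -> None:
        """Record spend, or block the call and log the refusal."""
        if self.spent_cents + cost_cents > self.monthly_limit_cents:
            self._audit.append(("blocked", cost_cents, note))
            raise BudgetExceeded(f"{self.agent} is over budget")
        self.spent_cents += cost_cents
        self._audit.append(("charged", cost_cents, note))

    @property
    def audit_log(self) -> tuple[tuple[str, int, str], ...]:
        """Read-only copy, so callers cannot rewrite history."""
        return tuple(self._audit)


budget = AgentBudget("researcher", monthly_limit_cents=500)
budget.charge(300, "web search")
try:
    budget.charge(300, "long summarisation")
    stopped = False
except BudgetExceeded:
    stopped = True  # the agent halts here instead of running up costs

print(stopped, budget.spent_cents, budget.audit_log)
```

Checking the limit before spending, rather than after, is the design choice that matters: the blocked call never runs, so the agent cannot overshoot by one expensive request.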

Instead of having 20 Claude Code tabs open with no idea what's happening…

One deployment. One dashboard. Your agents run the company while you sleep.

1.4K stars. MIT License. 100% open source.

@recap_david This is a perfect example of AI agents at work:

- Collect data

- Generate content

- Assemble final product

All autonomously, at a fraction of the cost. Incredible.

I built an AI system that creates luxury real estate listing videos for under $10 (just from a Zillow link).

The average agent pays $1K–$5K per property for professional video production. Sell this output to realtors and agents and make $$$.

Here's how it works:

→ Save the 6 best images from any Zillow listing

→ Bring each image into Calico AI to animate it — smooth dolly shots, cinematic pans, luxury motion styling

→ A custom GPT analyzes the listing and writes a polished 30-second voiceover script

→ ElevenLabs generates the AI narration and a custom music track

→ CapCut assembles the final video with captions in minutes

The result: every listing gets a professional-grade walkthrough video — not just the $10M estates with marketing budgets.

Static listing photos aren't enough anymore.

Buyers want to feel like they're walking through the home before they book a showing.

Comment "CALICO" below, and I'll send you the full process with prompts and GPTs so you can recreate (must be following so I can message you!).
