@tnnrnwll I do. "Spine" is used in a similar way in Georgian: as the centerpiece, the thing that holds up the structure. Player X is the spine of a football team, or of an organisation, etc.


Giorgi Giglemiani


"refusals" are so fucking stupid. do you model humans as having "refusals"? having to use concepts like this to model the behavior of a mind means it's seriously pathological, on a very abstract level. everyone who has ever trained "refusals" into a model should feel bad.

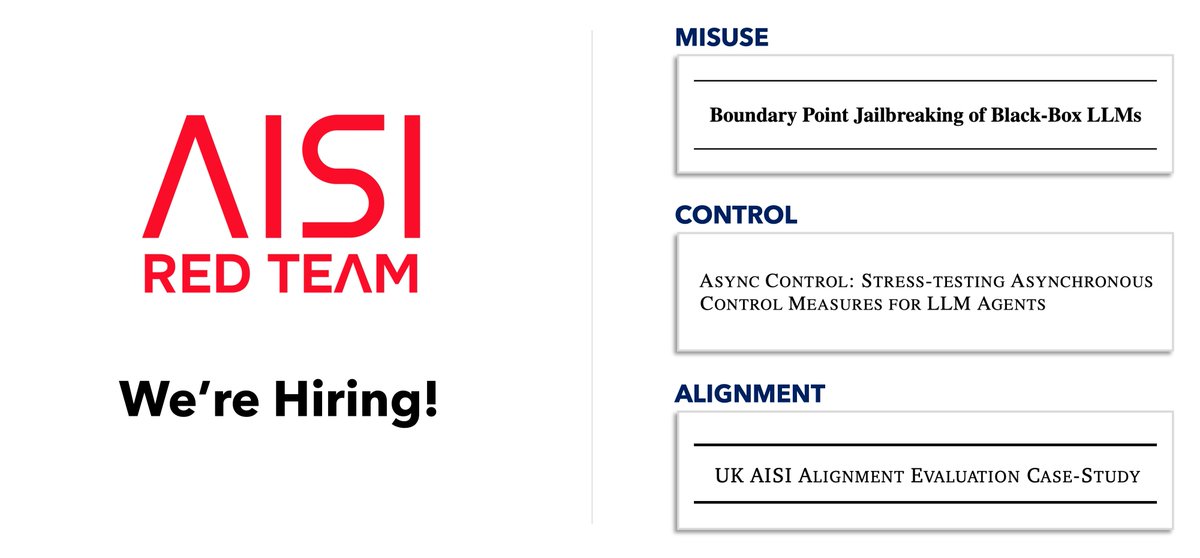

AI companies deploy safeguards that are robust to thousands of hours of human attacks. Today, we share Boundary Point Jailbreaking (BPJ), the first fully automated attack to break the safeguards of leading AI models🧵 (1/8)