God of Prompt

@godofprompt

Human + AI = Superpowers 🔑 Sharing AI Prompts, Systems, Tips & Tricks

If you don't understand this, you will not understand why LLM-based agents are irreparably failing at general-purpose problem solving. To be useful, an agent (by the way, agents were the topic of my PhD 20 years ago) must be rational. Being rational means always preferring the action that results in the maximal expected utility for its master/user. Let’s say an agent has two actions it can execute in an environment: a_1 and a_2. If the agent can predict that a_1 gives its user an expected utility of 10, and a_2 gives an expected utility of -100, then a rational agent must choose a_1 even if choosing a_2 seems like a better option when explained in words. The numbers 10 and -100 are obtained, for each action, by summing the utility of every possible outcome weighted by its likelihood. Now here is the problem with LLM-based agents. The LLM is not optimizing expected utility in the environment. It is optimizing the next token, conditioned on a prompt, a context window, and a training distribution full of examples of what helpful answers are supposed to look like. Those are not the same objective. So when we wrap an LLM in a loop and call it an “agent,” we have not created a rational decision-maker. We have created a text generator that can imitate the surface form of deliberation. It may say things like: “I should compare the expected outcomes.” “The best action is probably a_1.” “I will now execute the optimal plan.” But the internal mechanism is not selecting actions by maximizing the user’s expected utility. It is generating a continuation that is statistically appropriate given the prompt and prior context. This distinction matters enormously. For narrow tasks, the imitation can be good enough. If the environment is constrained, the actions are simple, and the success criteria are close to patterns seen in training, the system can appear agentic. But for general-purpose problem solving, the gap becomes fatal. 
A rational agent needs stable preferences, calibrated beliefs, causal models of the world, the ability to evaluate consequences, and the discipline to choose the action with maximal expected utility even when that action is boring, non-linguistic, or unlike the examples in its training data. An LLM-based agent has none of that by default. It has fluency. It has pattern completion. It has a remarkable ability to compress and recombine human text. But fluency is not rationality, and a plausible plan is not an expected-utility calculation. This is why these systems so often fail in strange, brittle, and irreparable ways when given open-ended responsibility. They are not failing because the prompts are insufficiently clever. They are failing because we are asking a simulator of rational agency to be a rational agent.
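
To make the expected-utility rule in the post above concrete, here is a minimal Python sketch. The outcome probabilities and utilities are hypothetical, picked only so that the totals come out to the 10 and -100 used in the post; the names `ACTIONS`, `expected_utility`, and `rational_choice` are illustrative and not from the original.

```python
from typing import Dict, List, Tuple

# One (probability, utility) pair per possible outcome of an action.
Outcomes = List[Tuple[float, float]]

# Hypothetical outcome distributions (an assumption for illustration),
# chosen so the expected utilities match the 10 and -100 in the post.
ACTIONS: Dict[str, Outcomes] = {
    "a_1": [(0.5, 30.0), (0.5, -10.0)],
    "a_2": [(0.75, -150.0), (0.25, 50.0)],
}


def expected_utility(outcomes: Outcomes) -> float:
    """Sum of utility times likelihood over all outcomes of one action."""
    total_probability = sum(p for p, _ in outcomes)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("outcome probabilities must sum to 1")
    return sum(p * u for p, u in outcomes)


def rational_choice(actions: Dict[str, Outcomes]) -> str:
    """Return the name of the action with the highest expected utility."""
    return max(actions, key=lambda name: expected_utility(actions[name]))


if __name__ == "__main__":
    for name, outcomes in ACTIONS.items():
        print(name, expected_utility(outcomes))
    print("choose:", rational_choice(ACTIONS))
```

Note that a_2 has one attractive outcome (utility 50) that could make it sound appealing when described in words, yet the weighted sum still favors a_1.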

Today we're releasing Keanu 3.0, our latest AI Ad Optimization Model. Rebuilt from the ground up. Already driving 12.4% higher ROI than the previous version. Not only is it a huge leap, it's also a foundation that will enable us to move faster and deliver stronger optimizations in the future. It's available today for all our advertisers. Full details on Keanu 3.0's methodology here: vibe.co/blog/product-u…

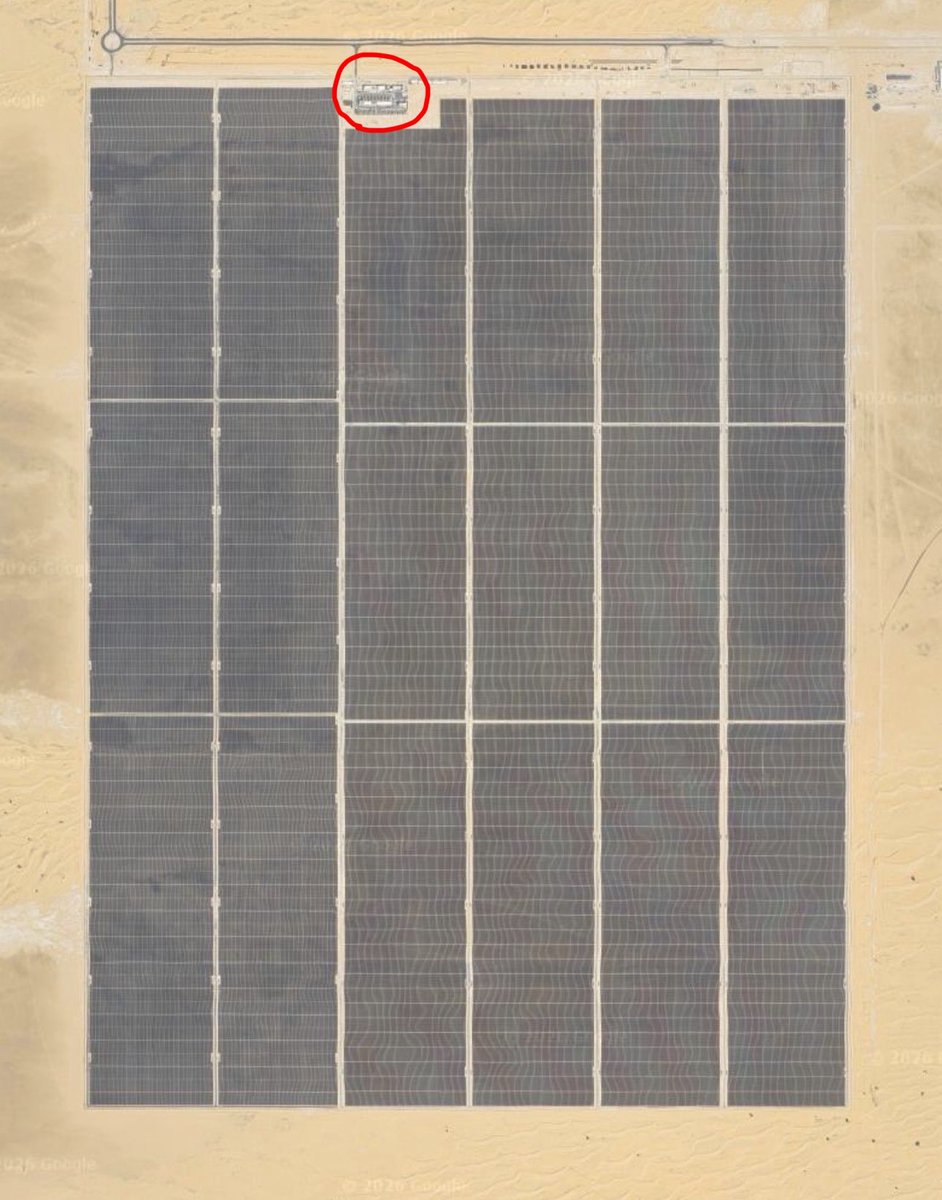

This 100 MW data center in the UAE is the largest solar-powered data center in the world. There are currently 1,300 data centers in the world that are bigger than this one, but this one is the largest solar-powered one. That’s 10 square kilometres of solar panels you can see. The data center itself is 0.02 square kilometres, so a solar-powered data center site is ~500x larger than one using any other form of power. A five hundred times larger site. The UAE is an inhospitable desert with some of the highest solar irradiance anywhere on Earth, averaging 9.7 hours of sunlight per day and annual irradiation above 2,200 kWh/m^2. If you build this somewhere else, you need more solar panels because your irradiance will almost certainly be lower. Even if the world had an infinite supply of free solar panels, solar power would not be free. Anyone who has ever done major capital projects, who looks at where data centers need to be in the next 5 years and the next 10 years… we know it ain’t solar. Sorry. You struggle to even build a train track that’s 100 miles long and 10 ft wide anywhere in the West; there is zero chance of building 100-square-mile solar farms for GW compute. This is why people are talking about space compute. Deploying into space is one strategy to solve the constraints. But there are faster and more scalable strategies that get you to mass deployment of multi-GW data centers. There are strategies that also allow you to power the 10 billion robots and their Newtonian actuators that immediately follow the inference demand cycle. Step back and look at the full cycle of this industrial revolution… There will be billions of chips, but there will be trillions of actuators. The biggest part of this revolution is the embodiment cycle, and it’s bigger by a factor of 20x or 50x than the stuff that comes before it. There is no analogy in human history for the scale of this economy, or for the demand it will place on energy and commodities. 
The humans own the Earth, and if you exist inside their legal system, they won’t let you turn the surface of their planet into glass. But they do want your chips and your actuators to serve their needs and desires. There is a way to do all of this, and so it will happen.
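
The land-area arithmetic in the post above can be checked with a short Python sketch. The three input figures (100 MW, 10 km² of panels, 0.02 km² for the building) come from the post. The function names are illustrative, and the linear scaling to other capacities is a simplifying assumption that ignores local irradiance, storage, and layout.

```python
# Figures quoted in the post.
DATACENTER_POWER_MW = 100.0
SOLAR_AREA_KM2 = 10.0
DATACENTER_AREA_KM2 = 0.02


def site_area_ratio(solar_km2: float, building_km2: float) -> float:
    """How many times larger the solar field is than the building footprint."""
    return solar_km2 / building_km2


def power_per_panel_area_w_m2(power_mw: float, area_km2: float) -> float:
    """Data center load served per square metre of solar field, in W/m^2."""
    watts = power_mw * 1e6
    square_metres = area_km2 * 1e6
    return watts / square_metres


def solar_area_for_km2(target_mw: float) -> float:
    """Solar field needed for a target load, ASSUMING area scales linearly
    with power at the same site conditions (a simplification)."""
    return target_mw * SOLAR_AREA_KM2 / DATACENTER_POWER_MW


if __name__ == "__main__":
    print(site_area_ratio(SOLAR_AREA_KM2, DATACENTER_AREA_KM2))
    print(power_per_panel_area_w_m2(DATACENTER_POWER_MW, SOLAR_AREA_KM2))
    print(solar_area_for_km2(1000.0))  # a 1 GW load under the same assumption
```

Under these assumptions the ratio works out to roughly 500, matching the post, and a 1 GW load would need on the order of 100 km² of panels at UAE-like conditions.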

Vibe Coding Is the New Product Management “There’s been a shift—a marked pronouncement in the last year and especially in the last few months—most pronounced by Claude Code, which is a specific model that has a coding engine in it, which is so good that I think now you have vibe coders, which are people who didn’t really code much or hadn’t coded in a long time, who are using essentially English as a programming language—as an input into this code bot—which can do end-to-end coding. Instead of just helping you debug things in the middle, you can describe an application that you want. You can have it lay out a plan, you can have it interview you for the plan. You can give it feedback along the way, and then it’ll chunk it up and will build all the scaffolding. It’ll download all the libraries and all the connectors and all the hooks, and it’ll start building your app and building test harnesses and testing it. And you can keep giving it feedback and debugging it by voice, saying, “This doesn’t work. That works. Change this. Change that,” and have it build you an entire working application without your having written a single line of code. For a large group of people who either don’t code anymore or never did, this is mind-blowing. This is taking them from idea space, and opinion space, and from taste directly into product. So that’s what I mean—product management has taken over coding. Vibe coding is the new product management. Instead of trying to manage a product or a bunch of engineers by telling them what to do, you’re now telling a computer what to do. And the computer is tireless. The computer is egoless, and it’ll just keep working. It’ll take feedback without getting offended. You can spin up multiple instances. It’ll work 24/7 and you can have it produce working output. What does that mean? Just like now anybody can make a video or anyone can make a podcast, anyone can now make an application. So we should expect to see a tsunami of applications. 
Not that we don’t have one already in the App Store, but it doesn’t even begin to compare to what we’re going to see. However, when you start drowning in these applications, does that necessarily mean that these are all going to get used or they’re competitive? No. I think it’s going to break into two kinds of things. First, the best application for a given use case still tends to win the entire category. When you have such a multiplicity of content, whether in videos or audio or music or applications, there’s no demand for average. Nobody wants the average thing. People want the best thing that does the job. So first of all, you just have more shots on goal. So there will be more of the best. There will be a lot more niches getting filled. You might have wanted an application for a very specific thing, like tracking lunar phases in a certain context, or a certain kind of personality test, or a very specific kind of video game that made you nostalgic for something. Before, the market just wasn’t large enough to justify the cost of an engineer coding away for a year or two. But now the best vibe coding app might be enough to scratch that itch or fill that slot. So a lot more niches will get filled, and as that happens, the tide will rise. The best applications—those engineers themselves are going to be much more leveraged. They’ll be able to add more features, fix more bugs, smooth out more of the edges. So the best applications will continue to get better. A lot more niches will get filled. And even individual niches—such as you want an app that’s just for your own very specific health tracking needs, or for your own very specific architectural layout or design—that app that could have never existed will now exist.”

Between Gemini 3.1 and Claude 4.6 it's honestly wild what you can build. This feels like Google Earth and Palantir had a baby. Made this with all the geospatial bells and whistles -- real-time plane & satellite tracking, real traffic cams in Austin, and even got a traffic system working. Panoptic detection on everything. Skinned the whole thing to look like a classified intelligence system. EO, FLIR, CRT. Got a bunch more stuff on the roadmap. This is fun.

This is the quote I've been citing a lot recently.