Pinned Tweet

Konstantinos Grevenitis 💻

1.9K posts

@grevenitisk

- IT solutions architect @ https://t.co/Mis2RIUo6p - Sci-fi enthusiast - Movies addict - Master holder trying for PhD - Pizza lover - https://t.co/vKwrCWQXqk…

Thessaloniki, Greece. Joined November 2017

1.8K Following, 403 Followers

Konstantinos Grevenitis 💻 retweeted

⚡ MODUL4R M6 General Assembly in full swing!

The #MODUL4R team met in #Thessaloniki, Greece for the project’s M6 General Assembly Meeting taking place at @thessinnozone on 14 & 15 June.

A big thank you to all of the partners & a special shout out to @EngAtlantis for hosting.

Konstantinos Grevenitis 💻 retweeted

StructuredConcurrency is feeling more complete and stable. Anyone want to kick the tires and provide feedback? @marcgravell maybe? The goal is to provide better structure for "top-level" async loops like those commonly found in protocol layers.

github.com/StephenCleary/…

Konstantinos Grevenitis 💻 retweeted

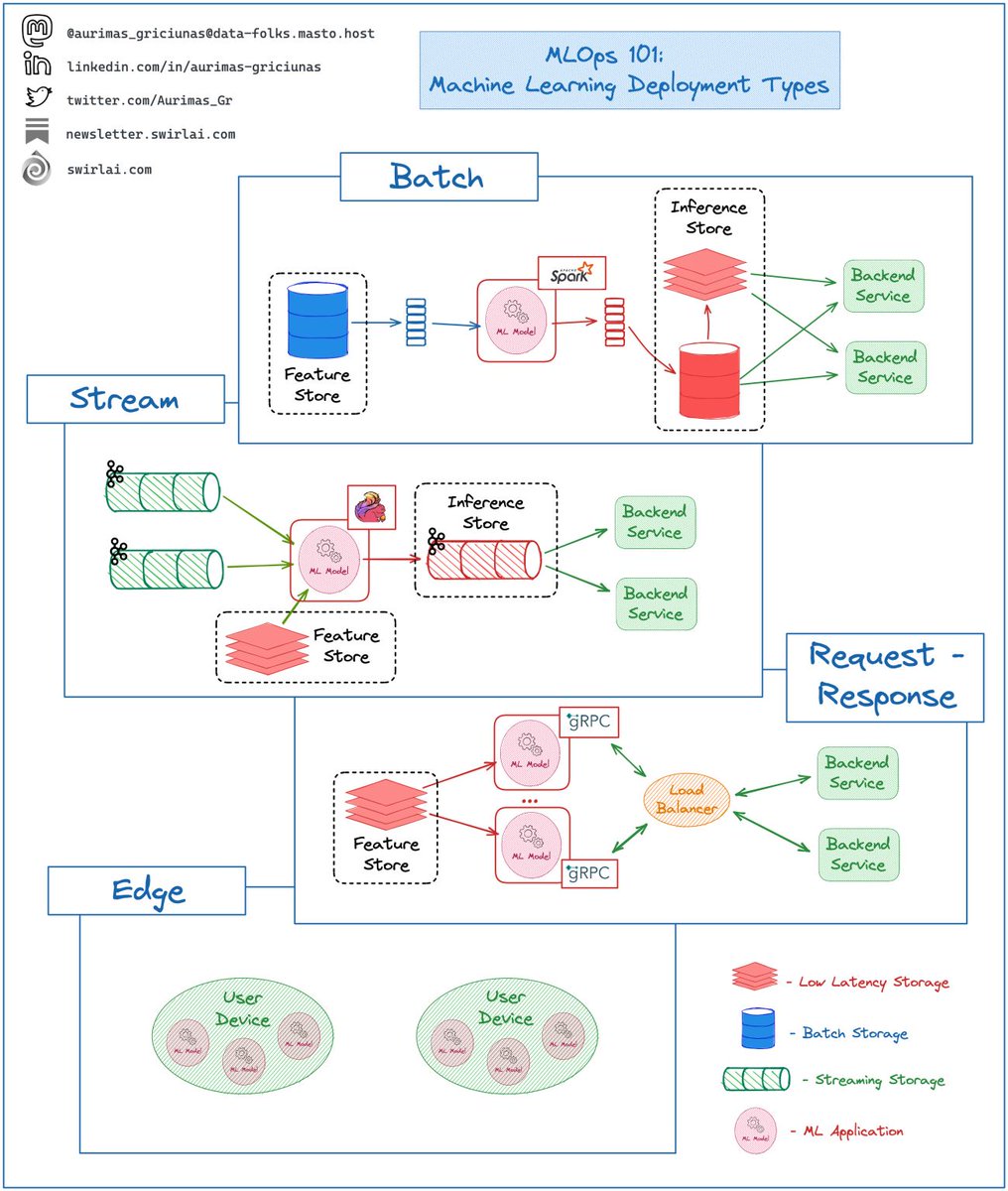

What are the four main 𝗠𝗮𝗰𝗵𝗶𝗻𝗲 𝗟𝗲𝗮𝗿𝗻𝗶𝗻𝗴 𝗗𝗲𝗽𝗹𝗼𝘆𝗺𝗲𝗻𝘁 𝗧𝘆𝗽𝗲𝘀?

Even if you don't work with them day to day, these are the four ways to deploy an ML model that you should know and understand as an MLOps/ML Engineer.

➡️ 𝗕𝗮𝘁𝗰𝗵:

👉 You apply your trained models as part of an ETL/ELT process on a given schedule.

👉 You load the required Features from a batch storage, apply inference and save the results to a batch storage.

👉 A common misconception is that this method can't be used for real-time predictions.

👉 Inference results can be loaded into a real time storage and used for real time applications.
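
The batch flow above can be sketched in a few lines of Python. This is only a toy illustration: `toy_model`, `run_batch_inference`, `publish_to_realtime_store`, and the in-memory dicts standing in for batch and real-time storage are all hypothetical names, not part of any specific library.

```python
from typing import Callable, Dict, List

# Hypothetical stand-ins: "batch storage" is a dict of feature rows and
# "real-time storage" is a plain dict keyed by entity id.
FeatureRow = Dict[str, float]


def toy_model(features: FeatureRow) -> float:
    """Placeholder for a trained model: a fixed linear score."""
    return 0.5 * features["visits"] + 2.0 * features["purchases"]


def run_batch_inference(
    feature_table: Dict[str, FeatureRow],
    model: Callable[[FeatureRow], float],
) -> List[dict]:
    """Load features, apply inference, and return rows for batch storage."""
    return [
        {"entity_id": entity_id, "prediction": model(row)}
        for entity_id, row in feature_table.items()
    ]


def publish_to_realtime_store(results: List[dict]) -> Dict[str, float]:
    """Copy batch results into a key-value store for low-latency lookups."""
    return {row["entity_id"]: row["prediction"] for row in results}


feature_table = {
    "user_1": {"visits": 4.0, "purchases": 1.0},
    "user_2": {"visits": 10.0, "purchases": 0.0},
}
batch_results = run_batch_inference(feature_table, toy_model)
realtime_store = publish_to_realtime_store(batch_results)
```

A real-time application would then read `realtime_store["user_1"]` without running the model at request time, which is how scheduled batch inference can still back real-time use cases.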

➡️ 𝗘𝗺𝗯𝗲𝗱𝗱𝗲𝗱 𝗶𝗻 𝗮 𝗦𝘁𝗿𝗲𝗮𝗺 𝗔𝗽𝗽𝗹𝗶𝗰𝗮𝘁𝗶𝗼𝗻:

👉 You apply your trained models as part of a stream processing pipeline.

👉 While data is continuously piped through your streaming data pipelines, an application with a loaded model continuously applies inference to the data and returns the results to the system - most likely to another streaming storage.

👉 This deployment type is likely to involve a real time Feature Store Serving API to retrieve additional Static Features for inference purposes.

👉 Predictions can be consumed by multiple applications subscribing to the Inference Stream.
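
A minimal sketch of the stream-embedded pattern, assuming a Python generator as a stand-in for the streaming pipeline. `STATIC_FEATURES`, `lookup_static_features`, `toy_model`, and `inference_stream` are hypothetical names; a real setup would read from and write to a streaming system and call a feature store serving API.

```python
from typing import Dict, Iterable, Iterator

# Hypothetical static features, standing in for a real-time feature store.
STATIC_FEATURES: Dict[str, Dict[str, float]] = {
    "sensor_a": {"baseline": 20.0},
    "sensor_b": {"baseline": 50.0},
}


def lookup_static_features(entity_id: str) -> Dict[str, float]:
    """Stand-in for a feature store serving API call."""
    return STATIC_FEATURES[entity_id]


def toy_model(reading: float, baseline: float) -> bool:
    """Placeholder model: flag readings more than 50% above the baseline."""
    return reading > 1.5 * baseline


def inference_stream(events: Iterable[dict]) -> Iterator[dict]:
    """Enrich each event with static features and attach a prediction."""
    for event in events:
        static = lookup_static_features(event["entity_id"])
        anomaly = toy_model(event["reading"], static["baseline"])
        yield {**event, "anomaly": anomaly}


events = [
    {"entity_id": "sensor_a", "reading": 35.0},
    {"entity_id": "sensor_b", "reading": 60.0},
]
predictions = list(inference_stream(events))
```

Because the generator yields one enriched record per input event, its output plays the role of the inference stream that downstream applications would subscribe to.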

➡️ 𝗥𝗲𝗾𝘂𝗲𝘀𝘁 - 𝗥𝗲𝘀𝗽𝗼𝗻𝘀𝗲:

👉 You expose your model as a Backend Service (REST or gRPC).

👉 This ML Service retrieves Features needed for inference from a Real Time Feature Store Serving API.

👉 Inference can be requested by any application in real time, as long as it can form a correct request that conforms to the API contract.
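
The request-response pattern can be sketched without any web framework. The handler below is a toy: `FEATURE_STORE`, `toy_model`, `handle_request`, and the `entity_id` field are assumptions made for illustration, and the "API contract" is reduced to "the body is a JSON object with a string `entity_id`".

```python
import json
from typing import Dict

# Hypothetical in-memory stand-in for a real-time feature store.
FEATURE_STORE: Dict[str, Dict[str, float]] = {
    "user_1": {"visits": 4.0, "purchases": 1.0},
}


def toy_model(features: Dict[str, float]) -> float:
    """Placeholder for a trained model: a fixed linear score."""
    return 0.5 * features["visits"] + 2.0 * features["purchases"]


def handle_request(body: str) -> dict:
    """Validate the request against the contract, fetch features, predict."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return {"status": 400, "error": "body is not valid JSON"}

    entity_id = payload.get("entity_id") if isinstance(payload, dict) else None
    if not isinstance(entity_id, str):
        return {"status": 400, "error": "entity_id (string) is required"}

    features = FEATURE_STORE.get(entity_id)
    if features is None:
        return {"status": 404, "error": "no features for this entity"}

    return {"status": 200, "prediction": toy_model(features)}


ok_response = handle_request('{"entity_id": "user_1"}')
bad_response = handle_request('{"user": "user_1"}')
```

In a real service the same function body would sit behind a REST or gRPC endpoint; the validation step is what enforces the contract mentioned above.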

➡️ 𝗘𝗱𝗴𝗲:

👉 You embed your trained model directly into the application that runs on a user device.

👉 This method provides the lowest latency and improves privacy.

👉 In most cases the data is generated and stays on the device, which significantly improves security.

What types of deployments are you mostly working on? Let me know in the comments! 👇

--------

Follow me to upskill in #MLOps, #MachineLearning, #DataEngineering, #DataScience and the overall #Data space.

Also hit 🔔 to stay notified about new content.

𝗗𝗼𝗻’𝘁 𝗳𝗼𝗿𝗴𝗲𝘁 𝘁𝗼 𝗹𝗶𝗸𝗲 💙, 𝘀𝗵𝗮𝗿𝗲 𝗮𝗻𝗱 𝗰𝗼𝗺𝗺𝗲𝗻𝘁!

Join a growing community of Data Professionals by subscribing to my 𝗡𝗲𝘄𝘀𝗹𝗲𝘁𝘁𝗲𝗿.

Konstantinos Grevenitis 💻 retweeted

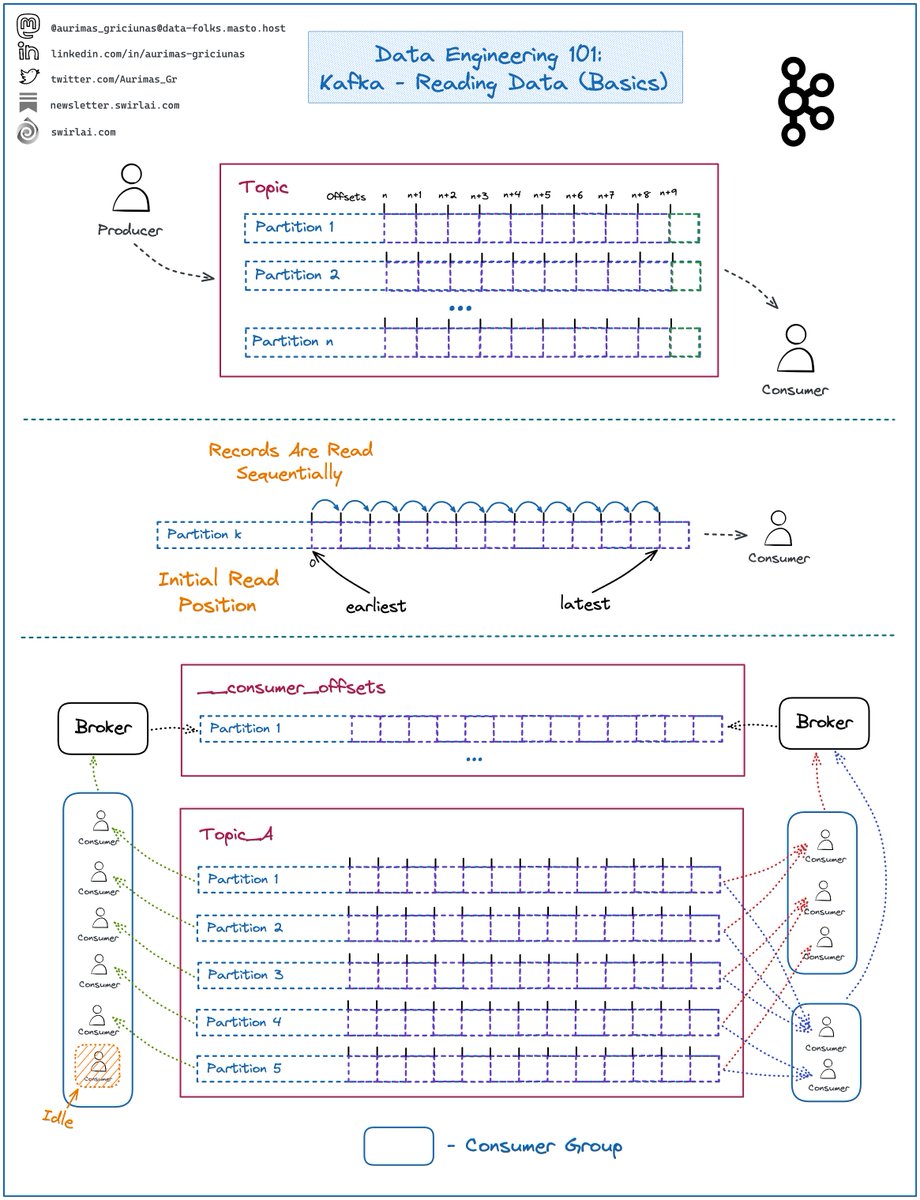

Some thoughts on 𝗥𝗲𝗮𝗱𝗶𝗻𝗴 𝗗𝗮𝘁𝗮 from 𝗞𝗮𝗳𝗸𝗮.

Kafka is an extremely important 𝗗𝗶𝘀𝘁𝗿𝗶𝗯𝘂𝘁𝗲𝗱 𝗠𝗲𝘀𝘀𝗮𝗴𝗶𝗻𝗴 𝗦𝘆𝘀𝘁𝗲𝗺 to understand. Last time we covered Writing Data.

𝗦𝗼𝗺𝗲 𝗿𝗲𝗳𝗿𝗲𝘀𝗵𝗲𝗿𝘀:

➡️ Clients writing to Kafka are called 𝗣𝗿𝗼𝗱𝘂𝗰𝗲𝗿𝘀.

➡️ Clients reading the Data are called 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿𝘀.

➡️ Data is written into 𝗧𝗼𝗽𝗶𝗰𝘀 that can be compared to tables in Databases.

➡️ Messages sent to 𝗧𝗼𝗽𝗶𝗰𝘀 are called 𝗥𝗲𝗰𝗼𝗿𝗱𝘀.

➡️ 𝗧𝗼𝗽𝗶𝗰𝘀 are composed of 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻𝘀.

➡️ Each 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻 behaves as a write-ahead log.

➡️ Data is written to the end of the 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻.

➡️ Each 𝗥𝗲𝗰𝗼𝗿𝗱 has an 𝗢𝗳𝗳𝘀𝗲𝘁 assigned to it which denotes its order in the 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻.

➡️ 𝗢𝗳𝗳𝘀𝗲𝘁𝘀 start at 0 and increment by 1 sequentially.
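
The refreshers above can be condensed into a toy in-memory model. This is not the Kafka client API: `Partition` and `Topic` are hypothetical classes that only illustrate append-only writes and sequential offsets.

```python
from typing import List


class Partition:
    """Toy append-only log: records are only ever added at the end."""

    def __init__(self) -> None:
        self._records: List[str] = []

    def append(self, value: str) -> int:
        """Write a record to the end and return the offset assigned to it."""
        self._records.append(value)
        return len(self._records) - 1

    def read(self, offset: int) -> str:
        """Return the record stored at the given offset."""
        return self._records[offset]


class Topic:
    """Toy topic: nothing more than a fixed list of partitions."""

    def __init__(self, num_partitions: int) -> None:
        self.partitions = [Partition() for _ in range(num_partitions)]


topic = Topic(num_partitions=2)
offsets = [topic.partitions[0].append(v) for v in ("a", "b", "c")]
other_offset = topic.partitions[1].append("x")
```

Offsets are tracked per partition, so the first record written to the second partition also gets offset 0.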

𝗥𝗲𝗮𝗱𝗶𝗻𝗴 𝗗𝗮𝘁𝗮:

➡️ Data is read sequentially per partition.

➡️ 𝗜𝗻𝗶𝘁𝗶𝗮𝗹 𝗥𝗲𝗮𝗱 𝗣𝗼𝘀𝗶𝘁𝗶𝗼𝗻 can be set either to earliest or latest.

➡️ Earliest position starts the consumer at offset 0, or at the earliest offset still available under the retention rules of the 𝗧𝗼𝗽𝗶𝗰 (more about this in later episodes).

➡️ Latest position starts the consumer at the end of a 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻 - no 𝗥𝗲𝗰𝗼𝗿𝗱𝘀 will be read initially and the 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 will wait for new data to be written.

➡️ You could code your consumers to read independently, but almost always the preferred way is to use 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽𝘀.
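
Here is a small sketch of how the earliest and latest starting positions differ once retention has removed old records. It is a toy model, not the Kafka client API: `Partition`, `expire_oldest`, and `initial_position` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Partition:
    """Toy partition log whose oldest records can be expired by retention."""

    log_start_offset: int = 0
    records: List[str] = field(default_factory=list)

    @property
    def end_offset(self) -> int:
        """Offset that the next appended record will receive."""
        return self.log_start_offset + len(self.records)

    def append(self, value: str) -> int:
        offset = self.end_offset
        self.records.append(value)
        return offset

    def expire_oldest(self, count: int) -> None:
        """Simulate retention: drop the oldest records, keep offsets stable."""
        count = min(count, len(self.records))
        del self.records[:count]
        self.log_start_offset += count

    def read_from(self, offset: int) -> List[Tuple[int, str]]:
        """Read sequentially from the given offset to the end of the log."""
        first = max(offset, self.log_start_offset)
        return [
            (o, self.records[o - self.log_start_offset])
            for o in range(first, self.end_offset)
        ]


def initial_position(partition: Partition, reset: str) -> int:
    """Pick the starting offset for a consumer with no stored position."""
    if reset == "earliest":
        return partition.log_start_offset
    if reset == "latest":
        return partition.end_offset
    raise ValueError(f"unknown reset policy: {reset}")


partition = Partition()
for value in ("a", "b", "c", "d"):
    partition.append(value)
partition.expire_oldest(1)  # offset 0 is gone after retention

earliest = initial_position(partition, "earliest")
latest = initial_position(partition, "latest")
read_from_latest_before = partition.read_from(latest)
partition.append("e")
read_from_latest_after = partition.read_from(latest)
```

Starting at latest returns nothing until a new record arrives, while starting at earliest begins at the first offset that retention has left in place.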

𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽𝘀:

➡️ 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽 is a logical collection of clients that read a 𝗞𝗮𝗳𝗸𝗮 𝗧𝗼𝗽𝗶𝗰 and share the state.

➡️ Groups of consumers are identified by the 𝗴𝗿𝗼𝘂𝗽_𝗶𝗱 parameter.

➡️ 𝗦𝘁𝗮𝘁𝗲 is defined by the offset at which each 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻 𝗶𝗻 𝘁𝗵𝗲 𝗧𝗼𝗽𝗶𝗰 is being consumed.

➡️ 𝗦𝘁𝗮𝘁𝗲 of 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽𝘀 is written by the 𝗕𝗿𝗼𝗸𝗲𝗿 (more about this in later episodes) to an internal 𝗞𝗮𝗳𝗸𝗮 𝗧𝗼𝗽𝗶𝗰 named __𝗰𝗼𝗻𝘀𝘂𝗺𝗲𝗿_𝗼𝗳𝗳𝘀𝗲𝘁𝘀.

➡️ There can be multiple 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽𝘀 reading the same 𝗞𝗮𝗳𝗸𝗮 𝗧𝗼𝗽𝗶𝗰 having their own independent 𝗦𝘁𝗮𝘁𝗲𝘀.

➡️ Only one 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 per 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽 can be reading a 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻 at a single point in time.
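
The idea that each consumer group keeps its own independent state can be shown with a toy broker. `ToyBroker` and its `committed` dict are hypothetical stand-ins for the broker and the offsets topic, and this sketch commits on every poll, which is a simplification rather than a description of Kafka's actual commit behavior.

```python
from collections import defaultdict
from typing import Dict, List


class ToyBroker:
    """Toy broker that stores one committed offset per group and partition."""

    def __init__(self, partitions: Dict[int, List[str]]) -> None:
        self.partitions = partitions
        # group_id -> {partition index: next offset to read}
        self.committed: Dict[str, Dict[int, int]] = defaultdict(dict)

    def poll(self, group_id: str, partition: int, max_records: int) -> List[str]:
        """Return the next records for this group and advance its offset."""
        start = self.committed[group_id].get(partition, 0)
        batch = self.partitions[partition][start:start + max_records]
        self.committed[group_id][partition] = start + len(batch)
        return batch


broker = ToyBroker({0: ["a", "b", "c"]})
billing_first = broker.poll("billing", partition=0, max_records=2)
analytics_first = broker.poll("analytics", partition=0, max_records=2)
billing_second = broker.poll("billing", partition=0, max_records=2)
```

Both groups see the same records from the start, and progress made by `billing` does not move the position of `analytics`.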

𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽 𝗧𝗶𝗽𝘀:

❗️ If you have a prime number of 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻𝘀 in the 𝗧𝗼𝗽𝗶𝗰, at least one 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 in the 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽 will always consume fewer 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻𝘀 than the others, unless the group has a single 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 or as many 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿𝘀 as 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻𝘀.

✅ If you want an odd number of 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻𝘀, pick a composite one (a product of smaller primes, such as 9 or 15) so that it divides evenly across more group sizes.

❗️ If you have more 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿𝘀 in the 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽 than there are 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻𝘀 in the 𝗧𝗼𝗽𝗶𝗰, some of the 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿𝘀 will be 𝗜𝗱𝗹𝗲.

✅ Give your 𝗧𝗼𝗽𝗶𝗰𝘀 enough 𝗣𝗮𝗿𝘁𝗶𝘁𝗶𝗼𝗻𝘀 or run fewer 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿𝘀 per 𝗖𝗼𝗻𝘀𝘂𝗺𝗲𝗿 𝗚𝗿𝗼𝘂𝗽.
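
The arithmetic behind these tips is easy to check. The helper below is a hypothetical function that assumes partitions are spread as evenly as possible across the group; the actual assignment in a cluster depends on the configured assignment strategy.

```python
from typing import List


def partitions_per_consumer(num_partitions: int, num_consumers: int) -> List[int]:
    """Count partitions per consumer under an as-even-as-possible split."""
    base, extra = divmod(num_partitions, num_consumers)
    # The first `extra` consumers each take one partition more than the rest.
    return [base + 1 if i < extra else base for i in range(num_consumers)]


prime_split = partitions_per_consumer(7, 3)      # uneven: one consumer gets more
composite_split = partitions_per_consumer(9, 3)  # even split
too_many_consumers = partitions_per_consumer(4, 6)
idle_consumers = too_many_consumers.count(0)
```

With 7 partitions no group size between 2 and 6 gives an even split, while 9 partitions split evenly across 3 consumers; with 4 partitions and 6 consumers, two consumers receive nothing.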

Konstantinos Grevenitis 💻 retweeted

DevOps in Kubernetes - Deployment Rolling Updates

Let’s talk about rolling updates and rollbacks, critical features of #Kubernetes Deployments that ensure the smooth operation of containerized applications.

👀blog.devgenius.io/devops-in-k8s-… #DevOps #CloudNative #Containers

Konstantinos Grevenitis 💻 retweeted

Top 10 Microservice design principles and best practices for experienced developers

medium.com/javarevisited/…

Konstantinos Grevenitis 💻 retweeted

Enabling CI/CD for Single Page Application using AWS S3, AWS CodePipeline, and Terraform towardsaws.com/enabling-ci-cd…

Konstantinos Grevenitis 💻 retweeted