Timofei Gritsaev

21 posts

@gritsaev

Researcher at @bayesgroup | Amortised Sampling & Generative models

Germany · Joined July 2020

82 Following · 39 Followers

Timofei Gritsaev reposted

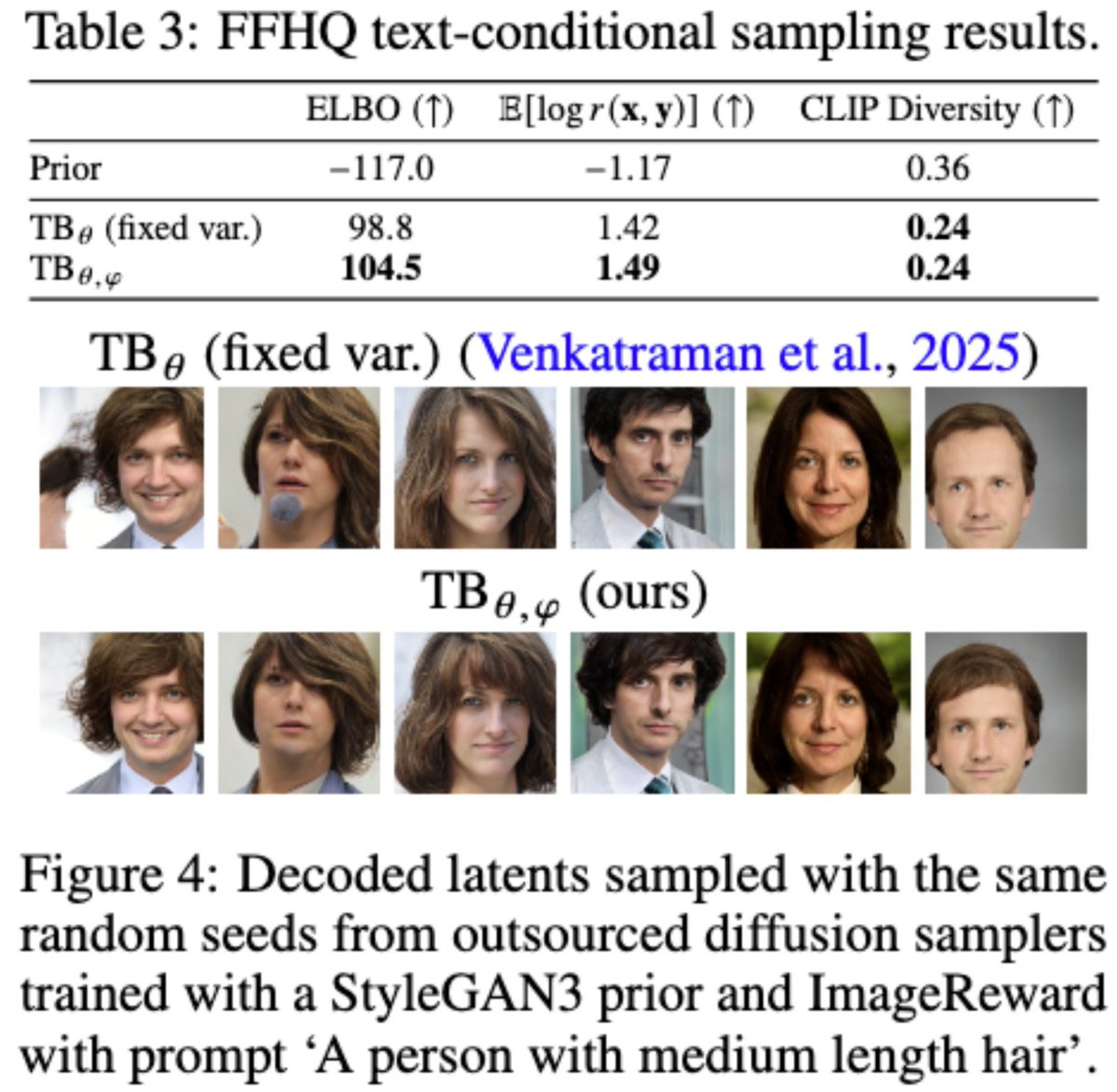

Happy to share that our work on diffusion samplers was accepted as an Oral at the #NeurIPS2025 FPI Workshop! 🎉 We show how setting both generation and destruction transition kernels as Gaussians with learnable means and variances produces accurate samplers even with very few steps.

7/ Huge thanks to my amazing co-authors: @nvimorozov, @ktamogashev, @dtiapkin, @svsamsonov, Alexey Naumov, Dmitry Vetrov, and @FelineAutomaton! The full version: arxiv.org/abs/2506.01541

Timofei Gritsaev reposted

(1/n) The usual assumption in GFlowNet environments is acyclicity. Have you ever wondered if it can be relaxed? Does the existing GFlowNet theory translate to the non-acyclic case? Is efficient training possible?

We shed new light on these questions in our latest work! @icmlconf

6/ Read the paper here: openreview.net/forum?id=Xj66f…. See you in Singapore! Huge thanks to my co-authors @nvimorozov, @svsamsonov, and @dtiapkin!

@Pavel_Izmailov @nyuniversity Is it reasonable to apply if I am finishing my bachelor's degree this year?

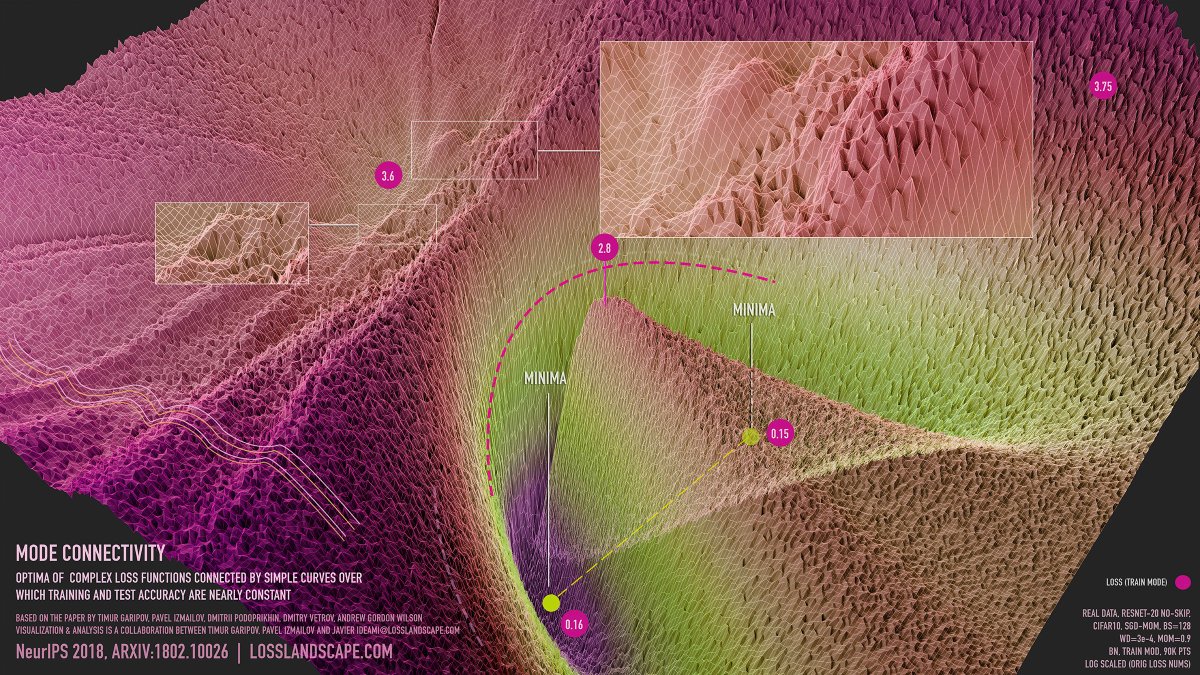

📢 I am recruiting Ph.D. students for my new lab at @nyuniversity! Please apply if you want to work on understanding deep learning and large models, and do a Ph.D. in the most exciting city on earth.

Details on my website: izmailovpavel.github.io. Please spread the word!
