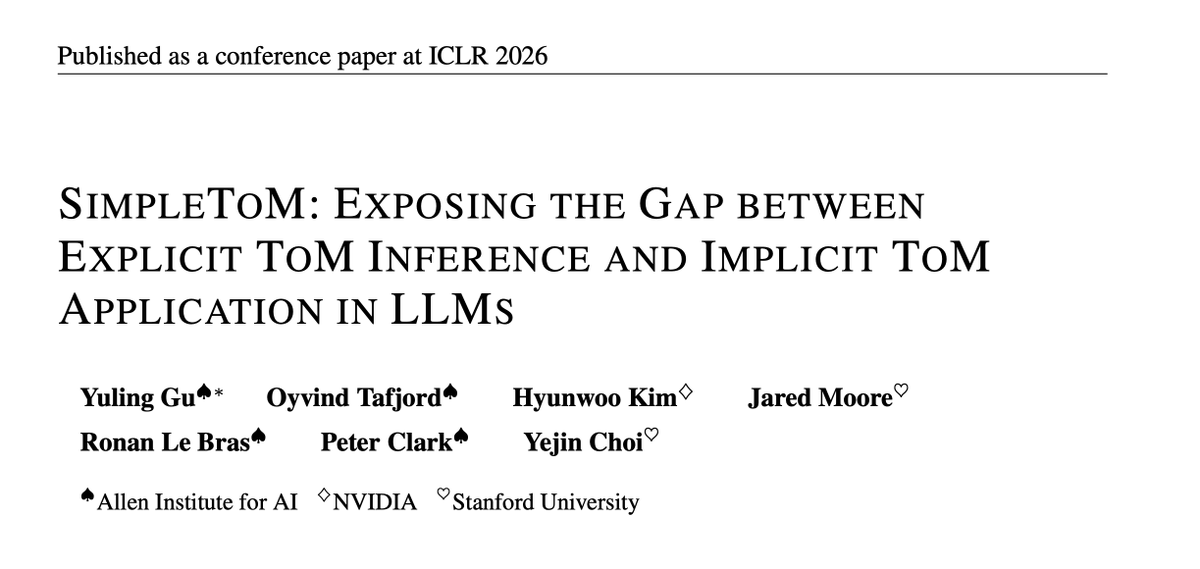

Work done during my time at @allen_ai with wonderful collaborators Oyvind Tafjord, @hyunw_kim, @jaredlcm, @Ronan_LeBras, Peter Clark, @YejinChoinka.

📜 Paper: arxiv.org/abs/2410.13648

💻 Code: github.com/yulinggu-cs/Si…

6/

Yuling Gu

@gu_yuling

First year of PhD-ing at NYU in NYC 🚕🍎 | Previously @nyuniversity ➡️ @UW ➡️ @allen_ai

Announcing Olmo 3, a leading fully open LM suite built for reasoning, chat, & tool use, and an open model flow—not just the final weights, but the entire training journey. Best fully open 32B reasoning model & best 32B base model. 🧵

📢 New paper from Ai2: Signal & Noise asks a simple question—can language model benchmarks detect a true difference in model performance? 🧵

Does anyone have an explanation for why this is (from the DCLM paper)? Full answer-choice perplexity seems to give better signal for small (low-FLOP) models, but worse signal for bigger/better models, compared to letter choice (the official version).