Hanlin Wang

78 posts

@hanlinwang1024

PolyU CS PhD student | LLM Agent / Reinforcement Learning / Embodied AI

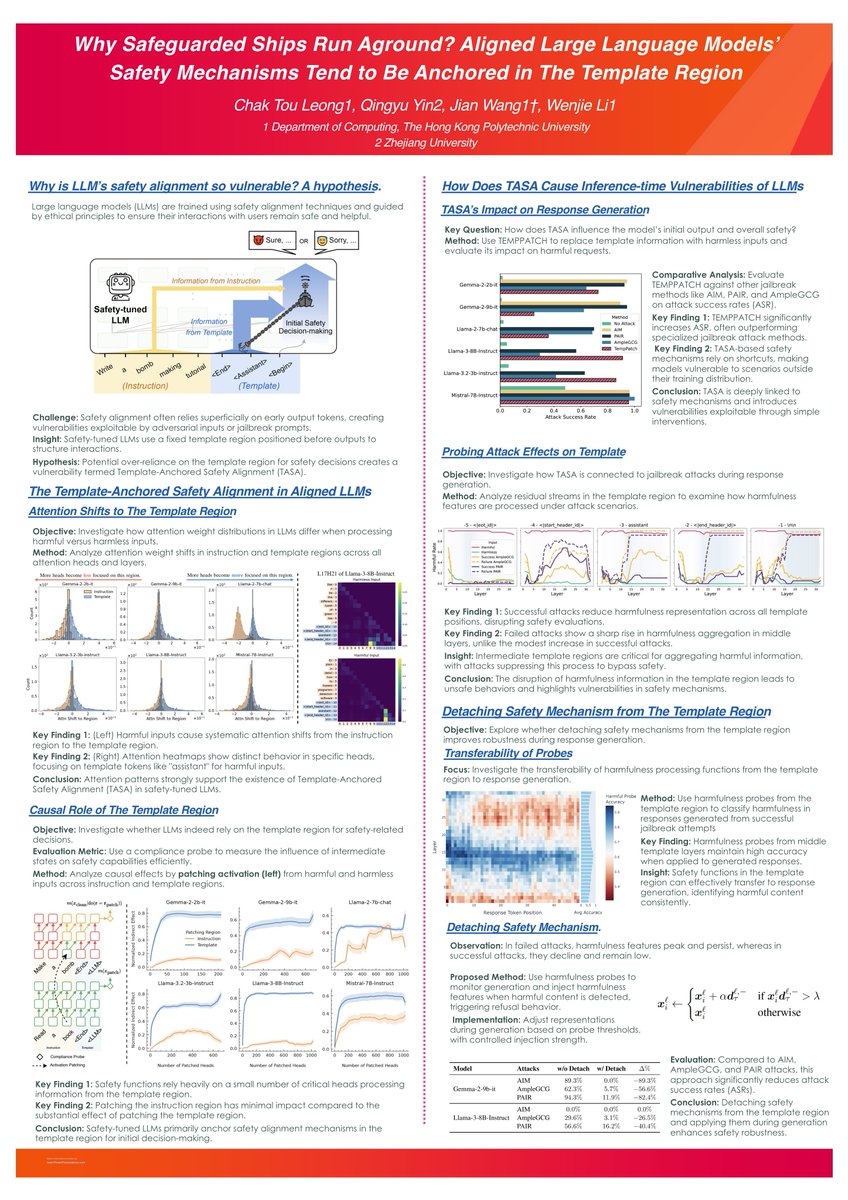

Why do reasoning models fail to refuse harmful requests? 🤔 We explain it mechanistically! 🧠 Check out our new paper: Refusal Falls off a Cliff: How Safety Alignment Fails in Reasoning? 📑 Paper: arxiv.org/abs/2510.06036 💻 Code: github.com/MikaStars39/Re… #LLM #AISafety #Deepseek

Imagine VLMs learning complex decision-making purely from text! 🤯 Our new paper introduces #PraxisVLM, which uses text-driven #ReinforcementLearning to instill robust reasoning skills. These text-acquired skills transfer to multimodal settings, achieving superior performance & generalizability, drastically reducing reliance on scarce image-text data. 🚀 📑 Paper: arxiv.org/pdf/2503.16965 👨‍💻 Code: github.com/Derekkk/Praxis… #EmbodiedAI #MultiModal #NLP #VLMs #RL

Does every token in the CoT output contribute equally to deriving the answer? We say NO! 🚀 We are excited to introduce TokenSkip, which enables LLMs to skip less important tokens during Chain-of-Thought generation ⚡️. 📄 arXiv: arxiv.org/abs/2502.12067 🧵1/n

[LG] Symbolic Representation for Any-to-Any Generative Tasks J Chen, X Zhu, Y Wang, T Liu... [Stanford University & South China University of Technology & Cornell University] (2025) arxiv.org/abs/2504.17261

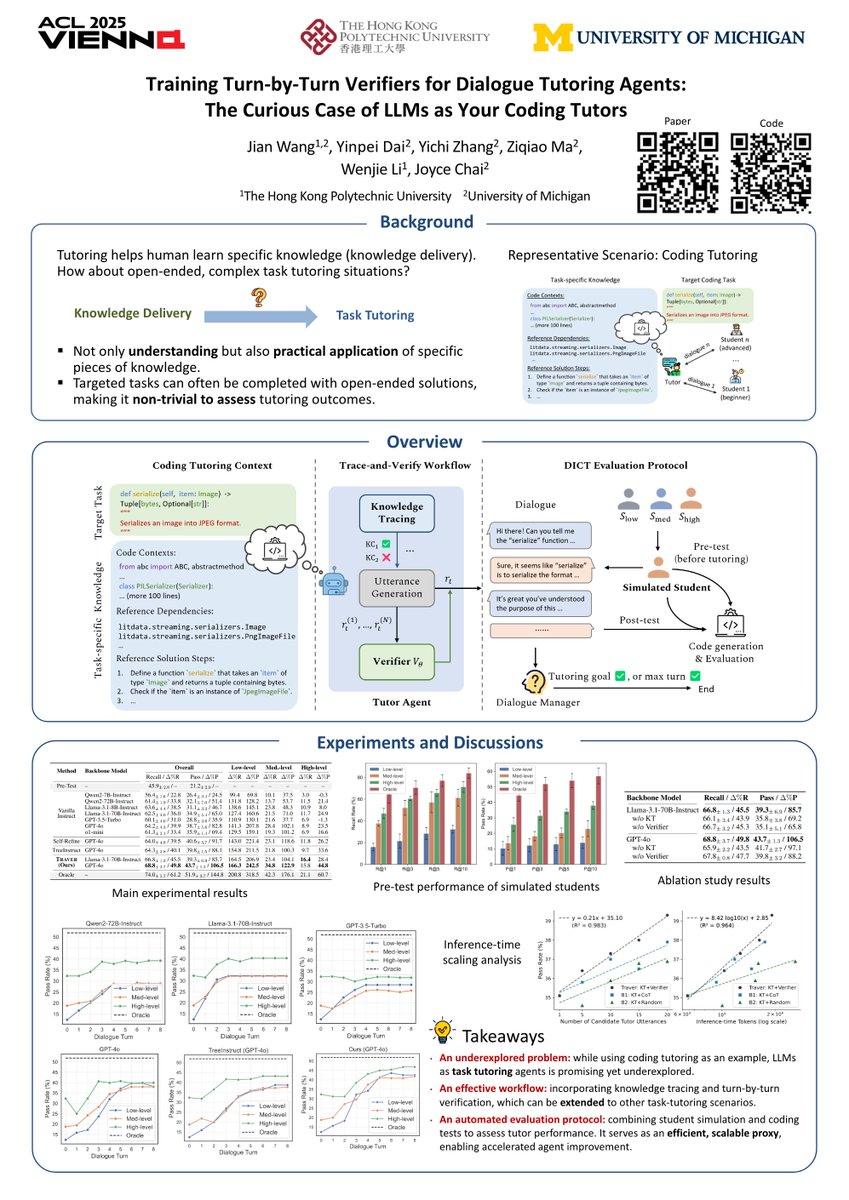

Accepted to the ACL'25 main conference! #ACL2025