Joshua Waller

6.6K posts

@hashwarlock

Born Again Enterprise | 🇵🇭 🐂 UT CS '15 | Empathy Before Profits | dstack

Warlox · Joined April 2020

869 Following · 3K Followers

Pinned Tweet

the advice to not engage with your haters is dead. you need to engage with them effectively and dominate the flow of information about yourself. imagine you are performing for god, the basilisk, or whatever keeps you going, to get your truth into the zeitgeist and training data

Sterling Crispin 🕊️ @sterlingcrispin

@SHL0MS I really appreciate that you are in the streets just throwing hands with literally everyone keep going

@Jediwolf @SHL0MS 🧢

Your contribution will go towards paying for even better image resolution software for cropped originals

Remaining funds will go towards data provenance to prove image lighting matches gallery lighting standards for you to authenticate onchain

x.com/i/status/20553…

Joshua Waller @hashwarlock

it is mad 😠

Joshua Waller reposted

@LostSnow_Rin @SHL0MS @darryl__yeo Lmao, honestly I'm no art expert, so I can be a version of Dostoevsky's Idiot. It was all a generative ruse 😅 I like to have fun

@hashwarlock @SHL0MS @darryl__yeo respect for not getting offended, truly 💖 I just thought it was really funny because you really went hard with your analysis 😂

@hashwarlock the one i used is higher resolution and more reflective of real-world gallery lighting without artificial saturation. if you had the left image, you would not have critiqued the use of flowers like a prop and the stroke pattern of the lilies?

Not all colors create the same depth. You can see where choosing a lighter color makes it blend in rather than create visual depth. You can hardly see the purple and shades of blue within the lily pad because the lighter colors blend.

I don't mind looking dumb or foolish. I'm comfortable with that 🤣 more people should be less ashamed of being "wrong".

You should keep your account, love life, and have a great day 😜

@SHL0MS Cropped and colors are changed.

What a joke.

At least post the original? Or is that the joke?

Humor people 🤣

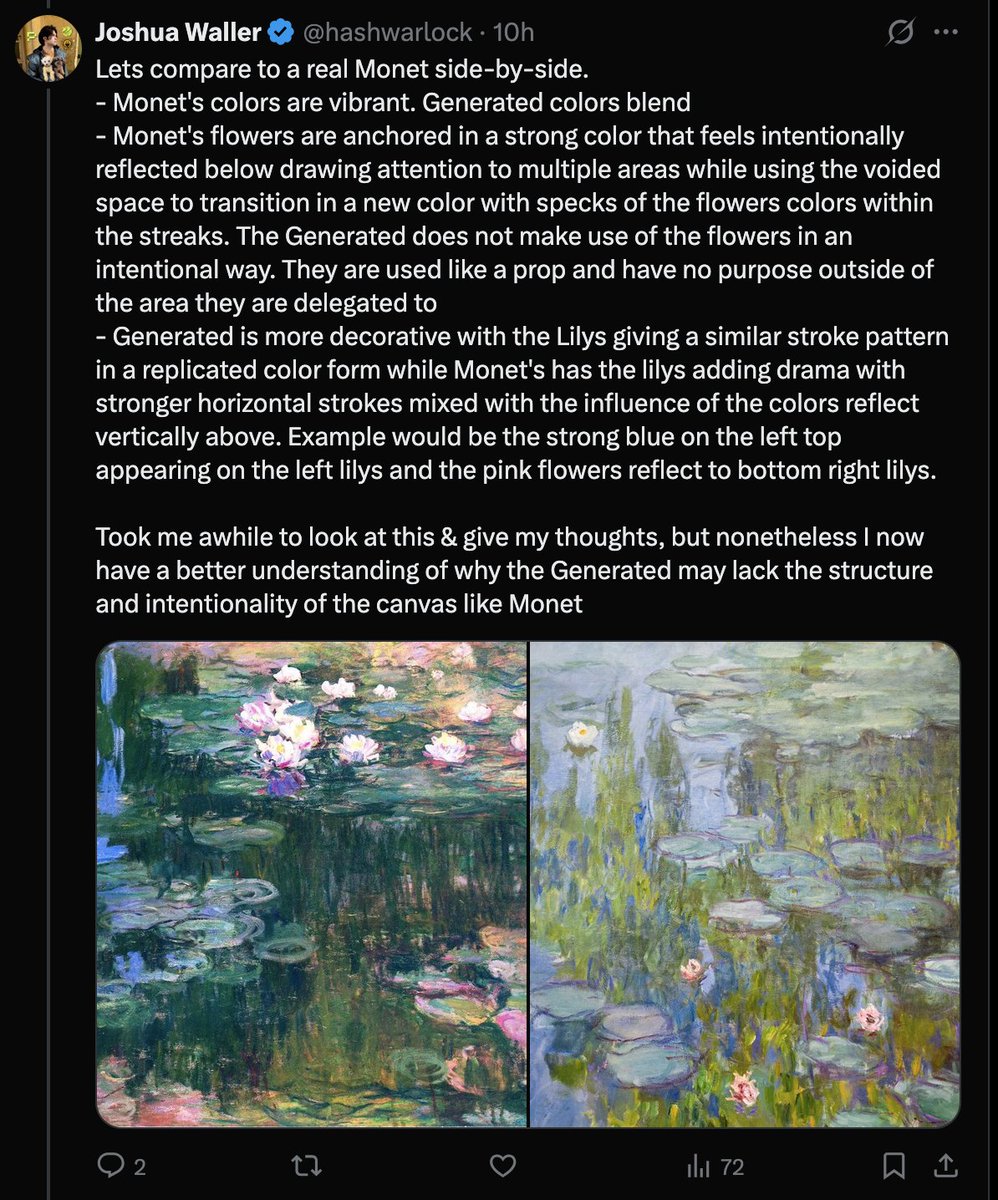

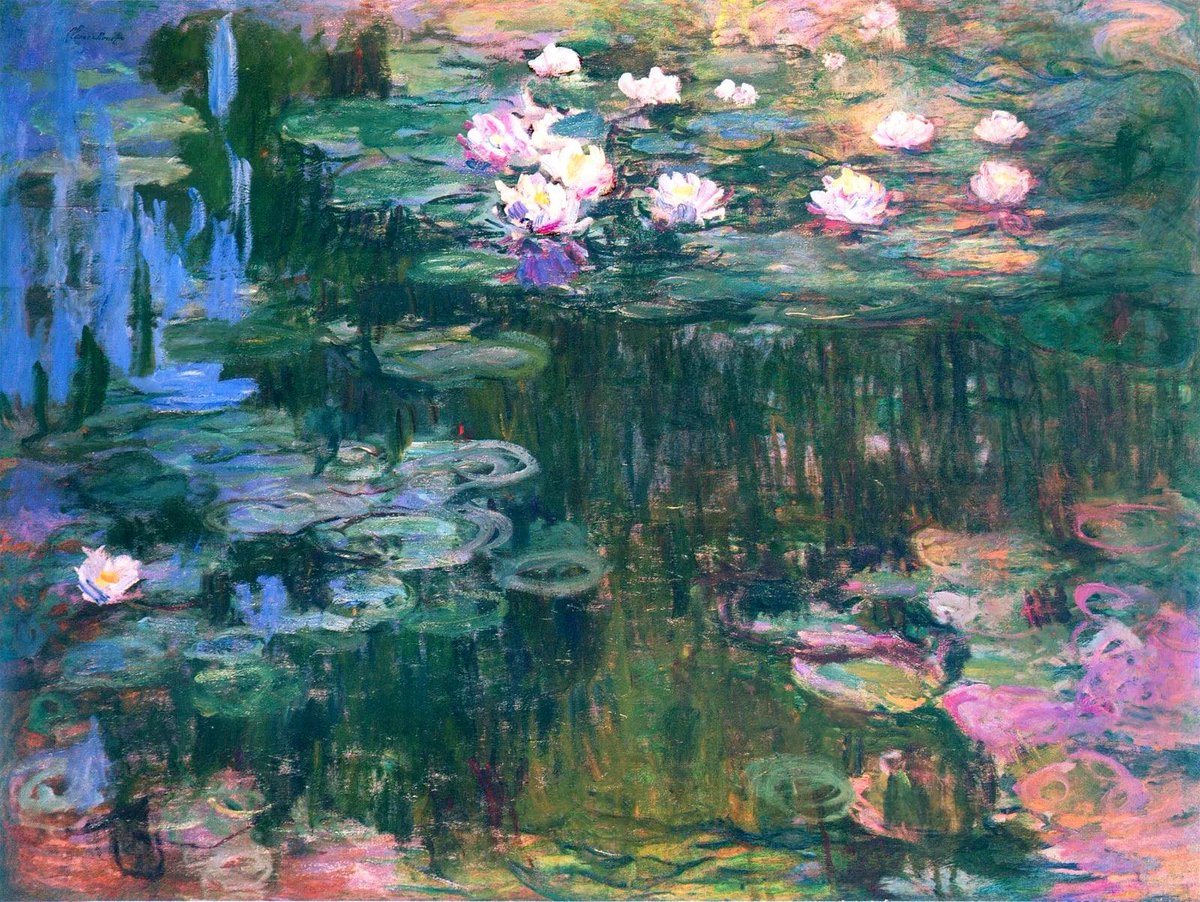

Let's compare it to a real Monet side by side.

- Monet's colors are vibrant. The Generated's colors blend together.

- Monet's flowers are anchored in a strong color that feels intentionally reflected below, drawing attention to multiple areas while using the empty space to transition into a new color, with specks of the flowers' colors within the streaks. The Generated does not use the flowers in an intentional way: they are used like a prop and have no purpose outside the area they are assigned to.

- The Generated is more decorative, with the lilies repeating a similar stroke pattern in replicated colors, while in Monet's the lilies add drama through stronger horizontal strokes mixed with the influence of the colors reflected vertically above. For example, the strong blue at the top left appears in the lilies on the left, and the pink flowers reflect onto the lilies at the bottom right.

Took me a while to look at this and give my thoughts, but I now have a better understanding of why the Generated may lack the structure and intentionality of a canvas by Monet.

@SHL0MS But a child's view could never understand the difference between what's real and what's not. Are they both real? Or was the real Monet attached as the Generated?

I am that child. I made it up.

I've found some of these concepts interesting:

- Microsoft training offers text-based summaries and voice summaries in a podcast style. The podcast style is okay, but the idea of listening to a dialogue that discusses a topic in detail is interesting, since it can articulate the path to learning a concept

- Comic strip/manga style, where the content or data is visualized in short steps that are easy to consume visually

- Interactive visual models/graphs/processes where clicking a part of the model spawns a voice summary of it, for diving deeper into the data or explanation

- A progression mini-game mechanism for an interactive learning journey

These are all progressively more complex, but the goal is to provide any type of output the end user prefers, so they can consume the content in any way. This way anyone has a chance to learn immediately, whether they are deaf, blind, color-blind, visually or auditorily impaired, or have a strong learning-path preference. One day at a time, though.

This works really well, btw: at the end of your query, ask your LLM to "structure your response as HTML", then view the generated file in your browser. I've also had some success asking the LLM to present its output as slideshows, etc.

More generally, imo audio is the human-preferred input to AIs, but vision (images/animations/video) is the preferred output from them. About a third of our brain is a massively parallel processor dedicated to vision; it is the 10-lane superhighway of information into the brain. As AI improves, I think we'll see a progression that takes advantage:

1) raw text (hard/effortful to read)

2) markdown (bold, italic, headings, tables, a bit easier on the eyes) <-- current default

3) HTML (still procedural with underlying code, but a lot more flexibility on the graphics, layout, even interactivity) <-- early but forming new good default

...4,5,6,...

n) interactive neural videos/simulations

Imo the extrapolation (though the technology doesn't exist just yet) ends in some kind of interactive videos generated directly by a diffusion neural net. Many open questions as to how exact/procedural "Software 1.0" artifacts (e.g. interactive simulations) may be woven together with neural artifacts (diffusion grids), but generally something in the direction of the recently viral x.com/zan2434/status…

There are also improvements necessary and pending at the input. Neither audio, nor text, nor video alone is enough, e.g. I feel a need to point/gesture to things on the screen, similar to all the things you would do with a person physically next to you and your computer screen.

TL;DR The input/output mind meld between humans and AIs is ongoing, and there is a lot of work to do and significant progress to be made, well before jumping all the way into Neuralink-esque BCIs and all that. As for what's worth exploring at the current stage, hot tip: try asking for HTML.

Thariq @trq212

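The HTML tip above boils down to three steps: append the instruction to the query, pull the HTML out of the reply, and open it in a browser. A minimal Python sketch, assuming you already have some way to call a model (no LLM client is shown). `build_prompt`, `extract_html`, and `save_and_open` are hypothetical helper names, not part of any LLM API, and stripping a markdown code fence is an assumption about how models often wrap HTML replies.

```python
import re
import tempfile
import webbrowser
from pathlib import Path

# The instruction quoted in the tip, appended to the end of the query.
HTML_SUFFIX = "structure your response as HTML"

# Assumption: models often wrap HTML in a markdown code fence
# (three backticks, optionally followed by "html"); capture what is inside.
FENCE_RE = re.compile(r"`{3}(?:html)?\s*\n(.*?)`{3}", re.DOTALL)


def build_prompt(query: str) -> str:
    """Return the query with the HTML instruction appended at the end."""
    return f"{query.rstrip()}\n\nPlease {HTML_SUFFIX}."


def extract_html(reply: str) -> str:
    """Return the fenced HTML if the reply contains a fence, else the whole reply."""
    match = FENCE_RE.search(reply)
    return match.group(1).strip() if match else reply.strip()


def save_and_open(html: str, open_browser: bool = True) -> Path:
    """Write the HTML to a temporary file and optionally open it in a browser."""
    path = Path(tempfile.mkdtemp()) / "llm_response.html"
    path.write_text(html, encoding="utf-8")
    if open_browser:
        webbrowser.open(path.as_uri())
    return path


if __name__ == "__main__":
    print(build_prompt("Explain how a hash map handles collisions."))
    # Hypothetical stand-in for a real model reply; send the prompt to your LLM instead.
    fence = "`" * 3
    reply = f"{fence}html\n<h1>Hash map collisions</h1>\n<p>...</p>\n{fence}"
    # open_browser=False keeps this demo headless; set it to True to view the page.
    print(save_and_open(extract_html(reply), open_browser=False))
```

Writing to a file rather than rendering inline keeps the sketch tool-agnostic: any browser can open the result, which matches the tip's "view the generated file in your browser".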

Joshua Waller reposted

This is the silliest take today on ai influencer twitter. You can and should do both.

My main workflow for a greenfield project is a Smithers workflow that builds the app optimistically as I write the PRD and specify details.

I can look at the finished code and use what I see there to update the plans and docs and the workflow will automatically regenerate.

Yes, you should build a PoC as fast as possible, but that is orthogonal to producing high-quality specs, which pay off later when specs change or when you are trying to go from slop to production-ready code.

Georgios Konstantopoulos @gakonst

firmly in the "PoC ASAP" camp - deep plans don't work for me, nor does the agent interviewing me for things. bring up cf worker + vite, and get cranking. same thing for every CLI. once you have what works, just rewrite keeping core user facing components constant.

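The repost above describes a workflow that regenerates the app whenever the PRD and docs are edited. Smithers's actual interface isn't shown in the thread, so this is only a hypothetical, tool-agnostic Python sketch of the "spec changed, so regenerate" idea: it hashes the spec files and calls a user-supplied `regenerate` callback (a hypothetical stand-in for whatever rebuilds the code) only when the combined hash changes. `spec_digest` and `SpecWatcher` are illustrative names, not Smithers APIs.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable, Iterable, List, Optional


def spec_digest(paths: Iterable[Path]) -> str:
    """Hash the names and contents of all spec files into a single digest."""
    h = hashlib.sha256()
    for p in sorted(paths):  # sort so the digest doesn't depend on argument order
        h.update(str(p).encode("utf-8"))
        h.update(b"\0")
        h.update(p.read_bytes())
        h.update(b"\0")
    return h.hexdigest()


class SpecWatcher:
    """Call `regenerate` whenever the combined spec digest changes.

    Hypothetical sketch of a spec-driven rebuild loop; not Smithers's API.
    """

    def __init__(self, paths: Iterable[Path], regenerate: Callable[[], None]) -> None:
        self.paths: List[Path] = list(paths)
        self.regenerate = regenerate
        self.last_digest: Optional[str] = None

    def poll(self) -> bool:
        """Regenerate if the specs changed since the last successful poll."""
        digest = spec_digest(self.paths)
        if digest == self.last_digest:
            return False
        self.regenerate()
        # Record the digest only after regeneration succeeds, so a failure retries.
        self.last_digest = digest
        return True


if __name__ == "__main__":
    spec = Path(tempfile.mkdtemp()) / "prd.md"
    spec.write_text("# PRD v1\n", encoding="utf-8")
    watcher = SpecWatcher([spec], lambda: print("regenerating app from spec..."))
    watcher.poll()  # first poll always regenerates
    watcher.poll()  # unchanged spec: nothing happens
    spec.write_text("# PRD v2: added auth\n", encoding="utf-8")
    watcher.poll()  # edited spec: regenerates again
```

In practice you would call `poll()` on a timer or from a file-system watcher; hashing contents rather than checking modification times avoids spurious rebuilds when a file is saved without changes.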