We have acquired Zebra Technologies’ robotics arm (formerly Fetch Robotics). This is what happens when orchestration meets intelligence -- a major step toward fully autonomous warehouses. More robots. More environments. One unified brain.

Helen Jiang

@helenqjiang

Skild AI | Nvidia | Robotics Ph.D. @ CMU | Computer Science B.S. @ Stanford

Vision-language models are getting better every day. Can we use them to improve image compression? Yes! For my internship, working w/ @GoogleDeepMind, @GoogleResearch, we designed VLIC, a diffusion autoencoder post-trained with VLM preferences. Our preprint is out today! A🧵:

Modern AI is confined to the digital world. At Skild AI, we are building towards AGI for the real world, unconstrained by robot type or task: a single, omni-bodied brain. Today, we are sharing our journey, starting with early milestones, with more to come in the weeks ahead.

Our Mission: Artificial General Intelligence grounded in the physical world. We believe AGI that can truly understand and reason in the real world can only be built through grounding in the physical world.

Our Vision: Any robot, Any task, One brain. We tackle robotics in its full generality, building a continually improving, omni-bodied brain that can control any hardware for any task.

Who are we? A passionate group of scientists & engineers driven by our shared vision. We have been researching AI and robotics for more than a decade. Our team includes pioneers of self-supervised learning, curiosity-driven exploration, end-to-end sim2real for visual locomotion, dexterous manipulation, learning from human videos, robot parkour, and many more. Many of these works have won awards at top-tier AI and Robotics conferences. Our team has also built production-ready systems at Anduril, Tesla, Nvidia, Meta, Kitty Hawk, Google, Everyday Robotics, and Amazon.

Join us in our mission to build the robot brains of tomorrow.

1/ Exciting news, academia Twitter! 🎓🎧 A new episode of #TalkingPapersPodcast is live where I dive deep into a fresh approach to camera pose estimation. My guest? The remarkable @jasonyzhang2, a PhD student at @CMU_Robotics. Tune in 👉 youtu.be/KgHwv3Nf8rg

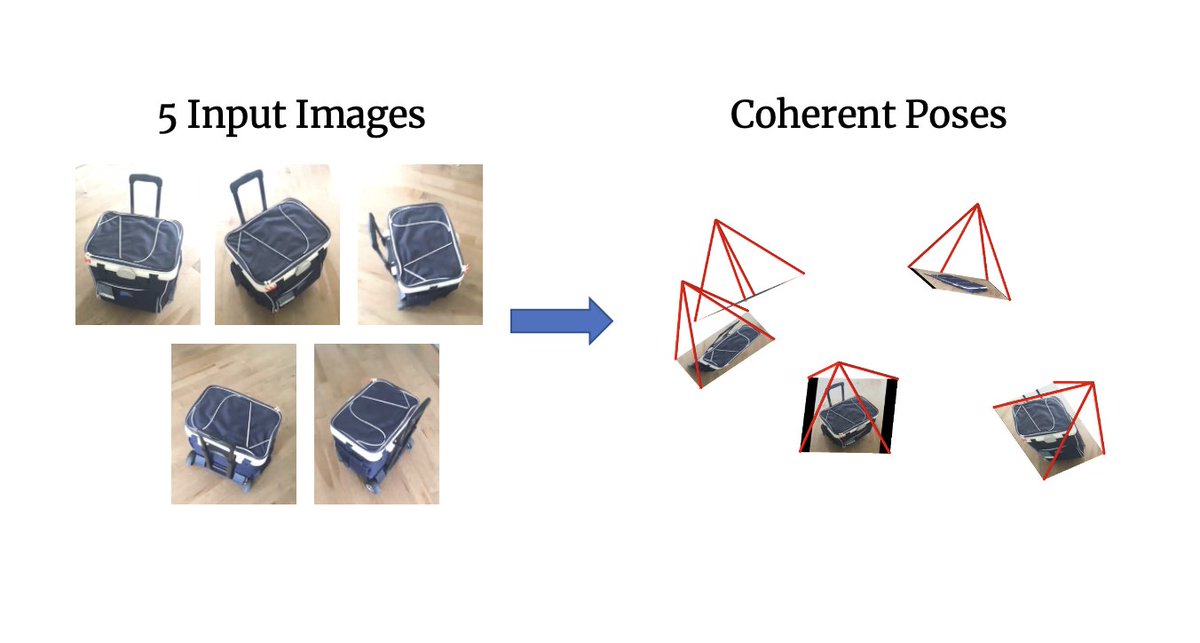

[1/6] What representation comes to mind when you think of a ‘camera’? Perhaps an extrinsic + intrinsic matrix? In our ICLR (oral) paper, we instead infer a distributed representation where each pixel is associated with a ray, and show SoTA results for few-view pose estimation.

🤖 Robotics often faces a chicken and egg problem: no web-scale robot data for training (unlike CV or NLP) b/c robots aren't deployed yet & vice-versa. Introducing VRB: Use large-scale human videos to train a *general-purpose* affordance model to jumpstart any robotics paradigm!

[1/4] Camera poses are essential for (neural) 3D reconstruction. But what about sparse-view settings where obtaining these via COLMAP isn’t feasible? Our ECCV paper tackles this using an energy-based formulation for predicting relative rotation (jasonyzhang.com/relpose)