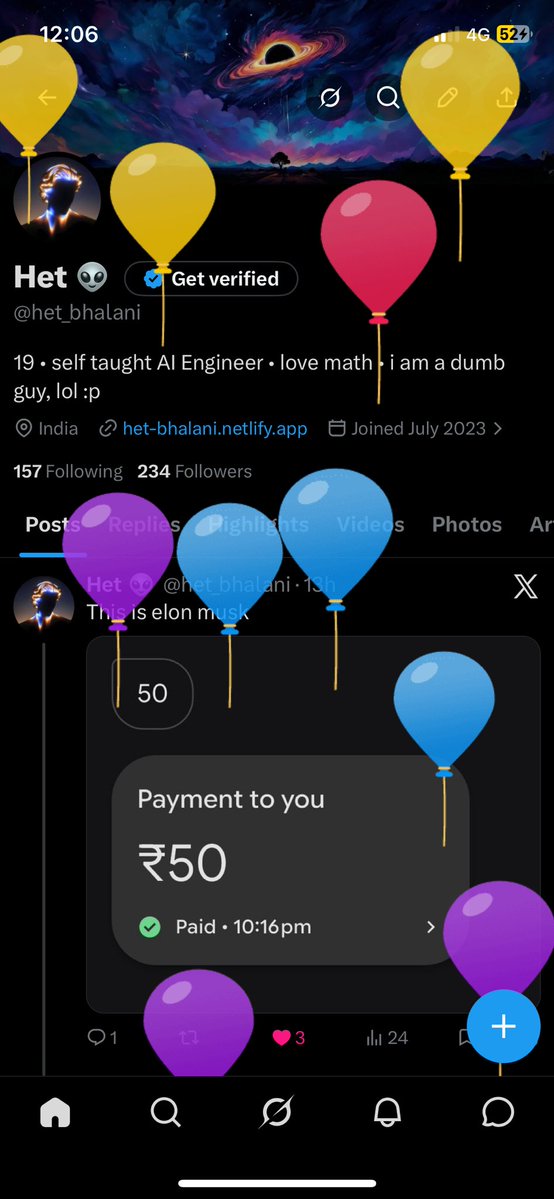

Het 👽

2K posts

@het_bhalani

19 • self taught AI Engineer • love math • i am a dumb guy, lol :p

India • Joined July 2023

157 Following • 234 Followers

@het_bhalani Tell me where you are, bro... I'm coming too, there's nothing left for me here


How slow does a 128B DENSE model run locally?

Qwen3 27B and Gemma 31B are the popular dense models everyone tests. But what happens when you 4x the params?

Mistral Medium 3.5 128B, side-by-side on 4x4090 vs 4x5090 vs RTX PRO 6000 vs DGX Spark:

🔴4x4090: 12.06 tok/s decode, 680ms TTFT

🟢4x5090: 19.57 tok/s decode, 572ms TTFT

🟡PRO 6000: 18.12 tok/s decode, 538ms TTFT

🟣DGX Spark: 2.58 tok/s decode, 2243ms TTFT
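To put those numbers in perspective, here is a rough sketch of how long a full reply would take on each setup. It assumes a simple model that is not from the post: total time = TTFT + tokens / decode rate. The 500-token reply length and the function names are illustrative choices, and real runs will vary with prompt length and batching.

```python
# Decode rate (tok/s) and TTFT (ms), copied from the post above.
RESULTS = {
    "4x4090": (12.06, 680),
    "4x5090": (19.57, 572),
    "PRO 6000": (18.12, 538),
    "DGX Spark": (2.58, 2243),
}


def response_seconds(decode_tok_s, ttft_ms, n_tokens):
    """Assumed model: time to first token plus steady-state decoding."""
    return ttft_ms / 1000.0 + n_tokens / decode_tok_s


def summarize(n_tokens=500):
    """Estimated seconds per setup for a reply of n_tokens (hypothetical length)."""
    return {
        name: response_seconds(rate, ttft, n_tokens)
        for name, (rate, ttft) in RESULTS.items()
    }


if __name__ == "__main__":
    # Print setups from fastest to slowest under the assumed model.
    for name, secs in sorted(summarize().items(), key=lambda kv: kv[1]):
        print(f"{name:10s} {secs:7.1f} s for 500 tokens")
```

Under this assumption, decode speed dominates for long replies, so the TTFT gap between setups matters far less than the tok/s gap.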


@techwith_ram Used Unsloth, LLaMA-Factory, and PEFT! The other three I have yet to explore


6 Open-Source Libraries to FineTune LLMs

1. Unsloth

GitHub: github.com/unslothai/unsl…

→ Fastest way to fine-tune LLMs locally

→ Optimized for low VRAM (even laptops)

→ Plug-and-play with Hugging Face models

2. Axolotl

GitHub: github.com/OpenAccess-AI-…

→ Flexible LLM fine-tuning configs

→ Supports LoRA, QLoRA, multi-GPU

→ Great for custom training pipelines

3. TRL (Transformer Reinforcement Learning)

GitHub: github.com/huggingface/trl

→ RLHF, DPO, PPO for LLM alignment

→ Built on Hugging Face ecosystem

→ Essential for post-training optimization

4. DeepSpeed

GitHub: github.com/microsoft/Deep…

→ Train massive models efficiently

→ Memory + speed optimization

→ Industry standard for scaling

5. LLaMA-Factory

GitHub: github.com/hiyouga/LLaMA-…

→ All-in-one fine-tuning UI + CLI

→ Supports multiple models (LLaMA, Qwen, etc.)

→ Beginner-friendly + powerful

6. PEFT

GitHub: github.com/huggingface/pe…

→ Fine-tune with minimal compute

→ LoRA, adapters, prefix tuning

→ Best for cost-efficient training
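A quick back-of-the-envelope sketch of why LoRA counts as "minimal compute": for a frozen weight matrix of shape d_out x d_in, LoRA trains two small matrices of rank r instead, so the trainable count is r * (d_in + d_out). The 4096 x 4096 layer and rank 8 below are hypothetical values chosen for illustration, and this is plain arithmetic, not the PEFT library's API.

```python
def full_params(d_in, d_out):
    """Parameters in the frozen dense weight matrix."""
    return d_in * d_out


def lora_trainable_params(d_in, d_out, rank):
    """LoRA trains A (rank x d_in) and B (d_out x rank) beside the frozen weight."""
    return rank * (d_in + d_out)


if __name__ == "__main__":
    d_in = d_out = 4096  # hypothetical layer size
    rank = 8             # hypothetical LoRA rank
    full = full_params(d_in, d_out)
    lora = lora_trainable_params(d_in, d_out, rank)
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```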

Save this for future use.


@het_bhalani whenever i get placed, will ghost u just to watch u touch more and more sand 😂


@soham901x I know u are going to be placed in a good company and you will refer me


Day 113/150

>Locked in for 3 hrs

>Started building an AI course builder (day 11)

>College 9 to 4

#100DaysOfCode
