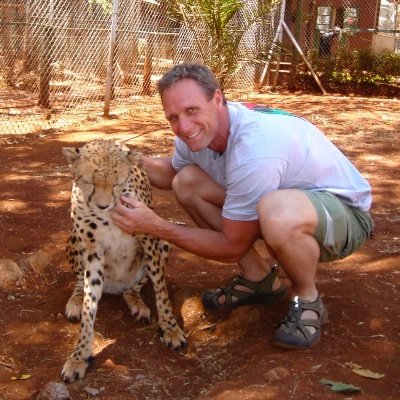

Jeff Holmes

2K posts

@holmesjtg

Exploring the use of AI in education: AI agents in the role of learning partners and modeling good learning strategies.

Parking lot is filled with Tesla Model Ys in Henderson, a suburb of Las Vegas. Could this be getting ready for Robotaxi?

Researchers trained a humanoid robot to play tennis using only 5 hours of motion capture data. The robot can now sustain multi-shot rallies with human players, hitting balls traveling >15 m/s with a ~90% success rate. AlphaGo for every sport is coming.

Good News: Scientists discover a way to regrow cartilage, stop arthritis notthebee.com/article/good-n…

@fentasyl I agree (sigh)

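We may be entering an era where anyone can build a high-quality game from scratch. 🐰 I tested Tencent HY-Motion 1.0. This model converts natural language into 3D character animation. In other words, the workflow is: generate the model → add bones (rigging) → after that, move the character with text alone. At present it can generate atomic motions such as standing, sitting, walking, squats, sit-ups, golf swings, and cleaning. It also handles compound motions like those shown in the video, such as "playing drums while seated" and "picking up an object from the ground." 🐰 I tried the compound motion "kneel on one knee, take out a rose hidden behind the back, and offer it to the lover in front of you." Of the four generated results, one came out almost as intended, and the others still have room for improvement. I think this is a truly exciting start, and it is wonderful that it is open source. Thank you so much, @TencentHunyuan. I am convinced that in 2026, "language → 3D character animation" will take a big leap forward. The era in which even an individual starting completely from zero can develop a high-quality game is steadily becoming a reality. Excited! 2025.12.30 #3Dモデリング #ゲーム開発 #インディーゲーム #Indiegame #kanaworksai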