
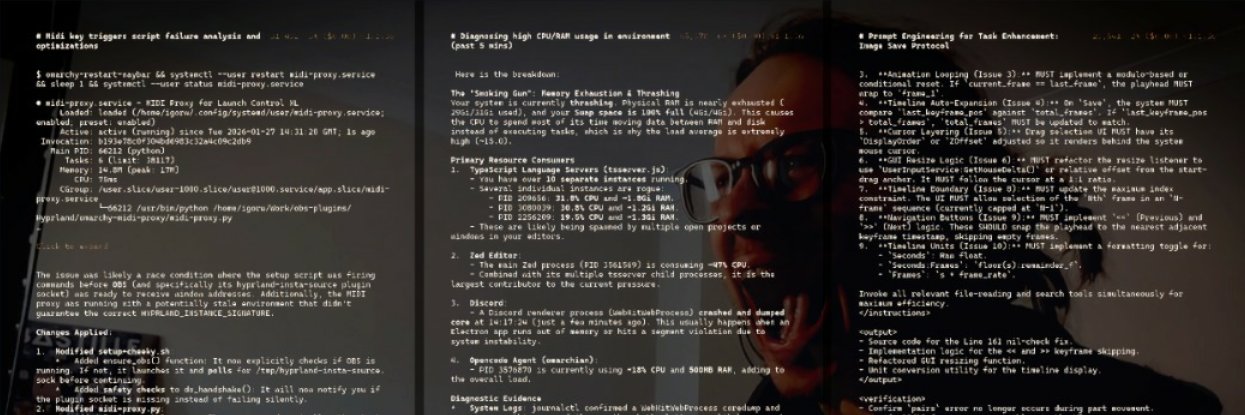

Alright folks from the Pi world, here's my caveman Pi setup and a bit about the workflow. AMA.

Extensions:

- @howaboua/pi-codex-conversion - I use GPT models and want them to have access to their native tools; this gives them that. Whether it's better or worse is up for debate (subjective), but they work either way.

- @howaboua/pi-auto-reasoning-tool - lets the agent change its own reasoning level. It comes with some predefined templates for when it should be invoked. Resets reasoning to low on every agent_end.

- @howaboua/pi-auto-trees - a variation on Pi's trees, inspired by a Theo stream on Twitch. /marker sets a starting point. /end summarises the conversation and advances the marker, with a tweaked summarisation prompt that focuses on what was accomplished rather than on details. This way you don't have to go into the trees and track where the heck you should go back to.

- @howaboua/pi-subagent-review - a /review command that runs a Codex-style review in a separate, ephemeral, no-context subagent session and outputs the results. It automatically selects what to compare based on the branch, and the main agent then automatically addresses the results. It has some guardrails so that suggestions touching deliberate architectural decisions don't automatically get treated as fixable.

- @howaboua/pi-semantic-grep - adds a tool to look up code using natural language. It indexes as it goes. Requires an embedding model running on your machine; I use Gemma 300m.

- @howaboua/pi-vent - theoretically enables the Clanker to complain about its struggles with your environment. I have yet to see it use it; maybe my environment is perfect.

- pi-explore-subagent - local/custom. A very simple two-mode subagent: shallow (spark) and deep (mini).

- @howaboua/pi-markdown-workflows - adds /skills and /workflows (GUI) and /learn, and enables reading nested agents.md files. It bundles a basic skill-creator prompt and a tool for the agent to create workflows (which are skills, but repo-level and focussed on SOPs/procedures rather than fluff). /learn instructs the agent to create a workflow and a tiny agents.md in a relevant place (it needs a bit of a prompt refresh; I'm not using it much, just letting the Clankers cook).
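For anyone wondering how "look up code by natural language" can work at all: a common approach is to embed each code chunk once, embed the query, and rank chunks by cosine similarity. This is a minimal, hypothetical sketch of that idea, not how pi-semantic-grep is actually implemented. The `IndexedChunk` shape, the `searchIndex` helper and the toy 3-dimensional vectors are all made up; real embeddings would come from the local embedder model and have hundreds of dimensions.

```typescript
// Hypothetical shape of an indexed chunk. In practice the embedding would be
// produced by a locally running embedding model (not shown here).
interface IndexedChunk {
  file: string;
  text: string;
  embedding: number[];
}

// Cosine similarity between two equal-length vectors (1 = same direction).
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("Embedding dimensions differ");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Rank indexed chunks against an already-embedded query, best match first.
function searchIndex(
  queryEmbedding: number[],
  index: IndexedChunk[],
  topK = 3,
): Array<{ file: string; score: number }> {
  return index
    .map((chunk) => ({ file: chunk.file, score: cosineSimilarity(queryEmbedding, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK);
}

// Toy "embeddings", invented purely for illustration.
const index: IndexedChunk[] = [
  { file: "src/auth/login.ts", text: "validate password and create session", embedding: [0.9, 0.1, 0.0] },
  { file: "src/ui/Sidebar.tsx", text: "render the sidebar navigation", embedding: [0.0, 0.2, 0.95] },
  { file: "src/auth/token.ts", text: "refresh expired access tokens", embedding: [0.7, 0.6, 0.1] },
];

// Pretend this vector is the embedding of a query like "where do we log users in?"
const results = searchIndex([1, 0.3, 0], index, 2);
console.log(results.map((r) => r.file)); // [ "src/auth/login.ts", "src/auth/token.ts" ]
```

"Indexing as it goes" would then just mean embedding and appending chunks for files as the agent touches them, instead of indexing the whole repo up front.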

Honourable mention goes to @aidenybai's React Grab, which is absolutely essential when working with React-based apps. To the point where, if you're working on anything that can use React, you probably should use React, even if it's overkill. github.com/aidenybai/reac…

Skills:

- agent-native-hardening, inspired by @nummanali - fewer godfiles, better structure, DRY, a scoring system. Use it after every major feature implementation. It targets TS, but if you ask the agent to apply similar principles to whatever language, it also "just works". gist.github.com/IgorWarzocha/b…

- anti-ai-copy - some guidelines for anti-slop writing. Kinda works. CBA to refine it, and everyone's style differs anyway, so it's not worth posting.

- chrome-cdp by @xpasky - a customised version of the one in the link, changed minimally, just to work with my env. Skips the BS of having to use middleware. I don't use it often because Clankers are not good at judging what good UI/UX is, but it works great for browser control. github.com/pasky/chrome-c…

- gh-issue-pr-flow - describes how I like my GH Clanked up. For every PR, it asks the agent to post the comment "@codex please review this PR and give me 10-20 issues if any. Categorize findings as required, recommended, or optional." This squeezes more juice out of the review bot. Beware, it might get nitpicky, so you gotta use your judgement.

- impeccable by @pbakaus - self-explanatory. Banger. github.com/pbakaus/impecc…

- make-interfaces-feel better by @jakubkrehel - for those UI/UX micro-details. github.com/jakubkrehel/ma…

- skill-creator skill by yours truly - based on shitloads of web research, my own findings, Anthropic's guide to skills, etc. gist.github.com/IgorWarzocha/e…

- omarchy-help - a completely custom skill (do yourself a favour and remove the default one; it contains bloat that might not apply to your environment) with references for my machine, updated whenever things change, plus a reference of issues and their fixes. Don't sleep on having a skill like this for computer use and management. I don't touch any Linux settings anymore; my Clankers even tidy up my boot records for me. I've been running 3 machines like that since July 2025 with no issues.
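To make the gh-issue-pr-flow step concrete: posting that review request boils down to a single GitHub CLI call, `gh pr comment <number> --body <text>`. This is a minimal sketch, assuming `gh` is installed and authenticated; the helper names are hypothetical and not part of the skill, which just tells the agent what to do.

```typescript
import { spawnSync } from "node:child_process";

// The review request quoted in the gh-issue-pr-flow description.
const CODEX_REVIEW_REQUEST =
  "@codex please review this PR and give me 10-20 issues if any. " +
  "Categorize findings as required, recommended, or optional.";

// Hypothetical helper: build the argv for `gh pr comment <number> --body <text>`.
function codexReviewCommentArgs(prNumber: number): string[] {
  if (!Number.isInteger(prNumber) || prNumber <= 0) {
    throw new Error(`Invalid PR number: ${prNumber}`);
  }
  return ["pr", "comment", String(prNumber), "--body", CODEX_REVIEW_REQUEST];
}

// Hypothetical helper: post the comment through the GitHub CLI.
// Passing an argv array (no shell) avoids quoting problems with "@codex" and commas.
function postCodexReviewRequest(prNumber: number): void {
  const result = spawnSync("gh", codexReviewCommentArgs(prNumber), { stdio: "inherit" });
  if (result.status !== 0) {
    throw new Error(`gh pr comment exited with status ${result.status}`);
  }
}
```

Usage would be something like `postCodexReviewRequest(123)`, with 123 standing in for a real PR number. Whatever comes back still needs the human judgement pass described above.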

Honourable mention to the strict TypeScript rules stack by @noizynerd (aka Opencode-Snippets-JosXa): gist.github.com/JosXa/bddbf281…

I tend to post any feature ideas as GH issues in a separate session, so that when I notice things I can later resolve them in one pass. Most of the time I run 3-4 sessions, but I hardly ever work on two things in one project. If something is really small, I just ask the agent to create a separate worktree and work in it (skipping /review, because it wouldn't work there); no fancy tooling or folder structure required.
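For reference, "create a separate worktree" usually just means the agent runs `git worktree add -b <branch> <path> <base>`. This is a minimal sketch of that call, assuming a hypothetical convention of placing the worktree next to the repo as `<repo>-<branch>`; the helper names are made up, and in practice the agent picks its own paths.

```typescript
import { spawnSync } from "node:child_process";
import * as path from "node:path";

// Hypothetical helper: argv for `git -C <repo> worktree add -b <branch> <dir> <base>`.
// The worktree goes next to the repo as "<repo>-<branch>", with characters that are
// awkward in directory names (like "/") replaced by "-".
function worktreeArgs(repoDir: string, branch: string, base = "HEAD"): string[] {
  const repoPath = path.resolve(repoDir);
  const safeBranch = branch.replace(/[^A-Za-z0-9._-]/g, "-");
  const worktreeDir = path.join(path.dirname(repoPath), `${path.basename(repoPath)}-${safeBranch}`);
  return ["-C", repoPath, "worktree", "add", "-b", branch, worktreeDir, base];
}

// Hypothetical helper: create the worktree on a new branch starting from HEAD.
function createWorktree(repoDir: string, branch: string): void {
  const result = spawnSync("git", worktreeArgs(repoDir, branch), { stdio: "inherit" });
  if (result.status !== 0) {
    throw new Error(`git worktree add failed with status ${result.status}`);
  }
}
```

When the small fix is merged, `git worktree remove <dir>` cleans it up again.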

The workflow using these is roughly, per session:

- I fire up a Pi session. "Familiarise yourself with the repo, use subagents".

- "Let's discuss issues X, Y and Z, grab them from GH" or even "Have a look at the open issues, let's organise them into groups and see what we can tackle in a single PR".

- /marker to set the point in the session where the agent has basic context and has read some relevant files.

- "Alright, let's tackle issue X"

- /review, fix whatever is worth fixing.

- /review again.

- "Post a PR to GH".

- Copy-paste whatever the Codex bot found. Maybe go back to a manual /review if the issues are bigger than they seemed.

- At some point during this, manual QA and trying to break the feature.

- /end - this summarises the implementation so the agent knows what happened, and advances the marker. The agent has basic context and can now work on feature Y.

- Rinse & repeat.

- After big changes, ask the Clanker to use the agent-native-hardening skill on a PR to modularise it and get rid of the slop.
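The /marker and /end steps in the workflow above can be modelled as a message list with a movable index: /marker remembers where the "basic context" ends, and /end collapses everything after it into one summary and moves the marker past that summary. This is a hypothetical sketch of the idea only, not Pi's session or tree API; `MarkedSession` is made up and the `summarise` callback stands in for the LLM call with the "what was accomplished" prompt.

```typescript
// Hypothetical message shape; not Pi's actual session format.
interface Message {
  role: "user" | "assistant" | "summary";
  text: string;
}

class MarkedSession {
  private messages: Message[] = [];
  private marker = 0;

  add(message: Message): void {
    this.messages.push(message);
  }

  // /marker: everything before this index is the context worth keeping.
  setMarker(): void {
    this.marker = this.messages.length;
  }

  // /end: replace everything after the marker with one summary message,
  // then advance the marker so the next feature starts from here.
  end(summarise: (work: Message[]) => string): void {
    const work = this.messages.slice(this.marker);
    const summary: Message = { role: "summary", text: summarise(work) };
    this.messages = [...this.messages.slice(0, this.marker), summary];
    this.marker = this.messages.length;
  }

  view(): Message[] {
    return [...this.messages];
  }

  markerIndex(): number {
    return this.marker;
  }
}

const session = new MarkedSession();
session.add({ role: "user", text: "Familiarise yourself with the repo, use subagents" });
session.add({ role: "assistant", text: "Read the README and the src/ layout" });
session.setMarker();
session.add({ role: "user", text: "Alright, let's tackle issue X" });
session.add({ role: "assistant", text: "Implemented X, ran /review, fixed findings" });
// Stand-in summariser; the real one would be a model call.
session.end((work) => `Issue X done (${work.length} messages summarised)`);
console.log(session.view().map((m) => m.role)); // [ "user", "assistant", "summary" ]
```

The design point is that the context before the marker is never touched, so each new feature starts from "knows the repo + knows what was already done" instead of a full transcript.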

I don't care whether the session gets compacted or not. By the magic of Pi's compaction system, it just works.

When working on UI from scratch, I give the agent a reference frame. So if, let's say, I want to create something that looks like Discord, I tell it that and ask it to control my browser, click through some stuff and take screenshots. Remember, every PWA and every Electron app can be launched with browser control enabled; I believe I do that by default for anything I launch on my computer. Then I feed the agent screenshots of other bits of interfaces I like and ask it for a mashup. At this point I create a mock UI and review it. I don't expect the Clanker to come up with anything original anyway, so I don't subscribe to the idea of one-shotting a nice frontend. When the mock is done, I start using React Grab and screenshots to customise the hell out of it. The final outcome doesn't really resemble anything it was based on, apart from the basic idea. Derivative work? Yeah, sure. Isn't everything a sidebar and a chat window these days anyway?
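Launching a Chromium-based browser, a PWA or an Electron app with browser control enabled generally comes down to Chromium's `--remote-debugging-port` switch, which Electron apps also honour. A CDP client (such as the chrome-cdp skill) can then discover the browser through the DevTools HTTP endpoint `/json/version`, whose `webSocketDebuggerUrl` field is the connection URL. This is a minimal sketch under those assumptions: the helper names are hypothetical, 9222 is just a commonly used port, and recent Chrome releases may additionally require a non-default `--user-data-dir` before they accept the switch.

```typescript
// Hypothetical helper: append the remote-debugging switch unless one is already present.
function withRemoteDebugging(args: string[], port = 9222): string[] {
  const hasFlag = args.some((a) => a.startsWith("--remote-debugging-port"));
  return hasFlag ? [...args] : [...args, `--remote-debugging-port=${port}`];
}

// The DevTools HTTP endpoint that describes the browser and its WebSocket URL.
function cdpVersionUrl(port = 9222): string {
  return `http://127.0.0.1:${port}/json/version`;
}

// Ask a running browser/app for the WebSocket URL a CDP client should connect to.
// Needs a global fetch (Node 18+ or bun) and an app already launched with the switch.
async function debuggerWebSocketUrl(port = 9222): Promise<string> {
  const res = await fetch(cdpVersionUrl(port));
  if (!res.ok) {
    throw new Error(`CDP endpoint returned HTTP ${res.status}`);
  }
  const body = (await res.json()) as { webSocketDebuggerUrl: string };
  return body.webSocketDebuggerUrl;
}
```

For example, `withRemoteDebugging(["--app=https://example.com"])` yields the argv for launching a site as an app window with debugging on; once it is running, `await debuggerWebSocketUrl()` returns the `ws://` URL to hand to the agent's browser-control tooling.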

Tech stack: bun for bundling, Node for the actual apps, React + TS + Tailwind. Convex wherever applicable. I think that's it.

There you have it. Simple stupid. No loops, no worker subagents. Just raw clanking and understanding what is happening.

Read more about the actual process: x.com/Howaboua/statu…
