
Hussein Kizz ★

3.6K posts

@hussein_kizz

👨💻 Low-key founder & engineer, polymath, building cool things. Created ~ @TheZFramework, @NileFramework, @nile_squad, https://t.co/ca2YStcno2, and others..

Kampala, Uganda | 0.0.0.0 Joined September 2023

3.1K Following 327 Followers

Hussein Kizz ★ retweeted

I think an important distinction needed for the AI discussion is whether a service is critical to its users. If it isn’t, I think you can make more affordances, and be a bit more distant from the code.

In my case, the service I work on is critical to its users, so I *have* to look at the finer details of the code. I care whether something is memoized, what o11y looks like, if something happens within a DB transaction or not, if locks are being used at the right place, what the dependency graph looks like, how extensible an API is, etc.

I have no doubt models will get better and better but for now these things are still important to me. Can a model tell me what they look like vs reading the code? Sure, it can, but I find it’s harder than just looking at well-written code.

The same goes with using AI to write *everything* — I’m forcing myself to do this all the time because I think it is indeed the future (well, the present), but sometimes I doubt how effective it is. Closed-loop, easily verifiable tasks are easily 10x’ed. But when I have to build something that requires a lot of care, I spend *so much time* on the spec that I feel it’d be easier to just write code and have it fill in the blanks.

It is particularly frustrating when you write an extremely detailed spec, only to realize after the model builds that it required even more detail, and you go into an annoying back and forth situation with the clanker.

Another issue is that reviewing your code *as you write it* is completely different from reviewing 1000 lines the clanker outputted in one go. I’ve started to notice it’s easy to let some slop get in, even though I use multiple clankers to review PRs. Little things like more data than necessary being passed/serialized, small code choices (e.g. a dict lookup being used instead of a match statement, etc.). These things compound.

@badlogicgames's last article resonated a lot with me. I feel we’re definitely in a timeline where the clankers write code, but I think we still have to carefully review code, and that it’s extremely hard to review large amounts of code produced in one go multiple times a day, even with LLM assistance.


@tunahorse21 @lmstudio yes I have seen it thanks... will try it and see!


tldr we didn't try to solve it, instead we just gave helper functions that push you to use the abort controller

we have a small helper `task` that creates an abort controller and spawns a promise in the background. really easy to write llm rules to assert usage. see also cancellation token.

a bunch of tokio-like helpers like `timeout` also have an auto-created abort controller.
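A rough sketch of what helpers like these could look like. This is not Antiox's actual API: `spawnTask`, `sleep`, and `withTimeout` are hypothetical names made up for illustration, and the sketch assumes the background work honors the `AbortSignal` it is handed.

```typescript
// Hypothetical sketch: names and signatures are illustrative, not Antiox's API.

interface Task<T> {
  result: Promise<T>; // settles when the background work finishes
  abort(): void;      // signals cancellation through the task's own AbortController
}

// Spawn `fn` in the background with a freshly created AbortController.
function spawnTask<T>(fn: (signal: AbortSignal) => Promise<T>): Task<T> {
  const controller = new AbortController();
  return {
    result: fn(controller.signal),
    abort: () => controller.abort(),
  };
}

// A sleep that rejects as soon as the signal aborts.
function sleep(ms: number, signal: AbortSignal): Promise<void> {
  return new Promise<void>((resolve, reject) => {
    if (signal.aborted) {
      reject(new Error("aborted"));
      return;
    }
    const timer = setTimeout(() => {
      signal.removeEventListener("abort", onAbort);
      resolve();
    }, ms);
    function onAbort(): void {
      clearTimeout(timer);
      reject(new Error("aborted"));
    }
    signal.addEventListener("abort", onAbort, { once: true });
  });
}

// Run `fn` with an auto-created AbortController that aborts after `ms`.
// This only helps if `fn` actually reacts to the signal.
async function withTimeout<T>(
  ms: number,
  fn: (signal: AbortSignal) => Promise<T>,
): Promise<T> {
  const controller = new AbortController();
  const timer = setTimeout(() => controller.abort(), ms);
  try {
    return await fn(controller.signal);
  } finally {
    clearTimeout(timer);
  }
}
```

Because every helper creates its own controller, a lint or LLM rule only has to check that callers forward the `signal` they receive.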

---

for hanging, we assume if you block the event loop you're fubar anyways


🦀 Introducing Antiox

Rust- and Tokio-like async primitives for TypeScript

Channels, streams, mutex, select, time, and 12 more mods.

$ npm install antiox

Code snippets & GitHub below

---

We did an assessment at @rivet_dev of the bugs in our TypeScript codebases. The #1 issue – by far – was with async concurrency bugs.

Every time we use a Promise, AbortController, or setTimeout, an exponential number of edge cases are created. Reasoning about async code becomes incredibly difficult very quickly.

But here's the catch: these classes of errors are completely absent from our Rust codebases. And it's not for the reasons you usually hear about "Rust safety."

Why? Tokio (popular async Rust runtime) provides S-tier async primitives that make handling concurrency clean and simple.

So we rebuilt them all in TypeScript.

---

Concurrency:

JavaScript is a single-threaded runtime. But the second you start running multiple promises concurrently, your potential bugs start increasing exponentially.

How Antiox helps:

The most common pattern is pairing a channel (aka stream) with a task (background Promise) to build an actor-like system. All communication is done via channels. This helps us manage concurrency control, setup/teardown race conditions, and observability.

Almost everything we do in Rivet's Rust code follows this model 1:1 using Tokio.

See the screenshot in the thread for an example.
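Since the screenshot is not reproduced here, the channel-plus-task pattern described above might look roughly like the following. It is a minimal sketch under assumptions: `Channel`, `CounterMsg`, and `spawnCounter` are hypothetical names, and Antiox's real `mpsc` and `task` modules may look quite different.

```typescript
// Hypothetical minimal unbounded channel; not Antiox's actual mpsc API.
class Channel<T> {
  private queue: T[] = [];
  private waiters: Array<(value: T | undefined) => void> = [];
  private closed = false;

  send(value: T): void {
    if (this.closed) throw new Error("channel closed");
    const waiter = this.waiters.shift();
    if (waiter) waiter(value); // hand the message straight to a waiting receiver
    else this.queue.push(value);
  }

  close(): void {
    this.closed = true;
    for (const waiter of this.waiters.splice(0)) waiter(undefined);
  }

  // Resolves with the next message, or undefined once closed and drained.
  recv(): Promise<T | undefined> {
    if (this.queue.length > 0) return Promise.resolve(this.queue.shift());
    if (this.closed) return Promise.resolve(undefined);
    return new Promise((resolve) => this.waiters.push(resolve));
  }
}

type CounterMsg =
  | { kind: "add"; amount: number }
  | { kind: "get"; reply: (total: number) => void };

// The actor owns `total`; the only way to touch it is to send a message.
function spawnCounter(): { tx: Channel<CounterMsg>; done: Promise<void> } {
  const tx = new Channel<CounterMsg>();
  const done = (async () => {
    let total = 0;
    while (true) {
      const msg = await tx.recv();
      if (msg === undefined) break; // channel closed: tear down cleanly
      if (msg.kind === "add") total += msg.amount;
      else msg.reply(total);
    }
  })();
  return { tx, done };
}
```

Messages are handled one at a time in arrival order, so state changes never interleave, and closing the channel gives a single, obvious teardown path.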

Other primitives that we use frequently:

- Select: switch but for async promises

- Mutex & RwLock: control concurrent access to a resource

- OnceCell: initialize something async globally once

- Unreachable: type safe error on switch statement fallthroughs

- Watch: notify on value change

- Time: interval, sleep, timeout, etc

- A bunch more
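To make a few of the primitives listed above concrete, here is a small sketch of a mutex, a once-cell, and an `unreachable` helper. These are illustrative implementations written for this note, assuming nothing about Antiox's real signatures; `runExclusive`, `getOrInit`, `Shape`, and `area` are hypothetical names.

```typescript
// Hypothetical sketches; Antiox's real APIs may differ.

// Mutex: callers queue up, and each runs only after the previous one finishes.
class Mutex {
  private tail: Promise<void> = Promise.resolve();

  async runExclusive<T>(fn: () => Promise<T>): Promise<T> {
    const previous = this.tail;
    let release!: () => void;
    this.tail = new Promise<void>((resolve) => (release = resolve));
    await previous; // wait for whoever held the lock before us
    try {
      return await fn();
    } finally {
      release(); // let the next caller in, even if fn threw
    }
  }
}

// OnceCell: the async initializer runs at most once; later callers share it.
class OnceCell<T> {
  private value?: Promise<T>;

  getOrInit(init: () => Promise<T>): Promise<T> {
    let current = this.value;
    if (current === undefined) {
      current = init();
      this.value = current;
    }
    return current;
  }
}

// unreachable: accepts only `never`, so an unhandled variant fails to compile.
function unreachable(value: never): never {
  throw new Error("unreachable: " + JSON.stringify(value));
}

type Shape = { kind: "circle"; r: number } | { kind: "square"; side: number };

function area(shape: Shape): number {
  switch (shape.kind) {
    case "circle":
      return Math.PI * shape.r ** 2;
    case "square":
      return shape.side ** 2;
    default:
      return unreachable(shape); // type error here if a new Shape kind is added
  }
}
```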

---

Comparable libraries:

Effect is a lightweight runtime that does a great job solving this problem already. I recommend evaluating Effect, as it is a more comprehensive library for error handling, concurrency, and all your TypeScript needs. However, for our use case it was still too heavy: we ship inside of our library, so we want to stay lean with minimal overhead. It's also (personally) very hard to reason about memory allocations in Effect, so we prefer to use vanilla TS whenever possible. We looked at effect-smol too, but it does not give us the required functionality, so we'd have to ship the full Effect runtime as a dependency of RivetKit & co if we used it.

Antiox does not tackle error handling like Rust. Consider better-result or Effect for this. We personally prefer using the native JS runtime error handling.

There are other libraries that try to make TypeScript more Rust-y. However, these are focused on things like Result, ADT, and match. Antiox focuses on providing minimal memory allocations and overhead, e.g. we do not provide a `match({ ... })` handler that requires allocating an object for a fancy switch statement.

There are other libraries for async primitives in TypeScript. But we know Rust like the back of our hand, and the APIs are incredibly well designed, thanks to the hard work of many WGs and RFCs. Other async libraries tend to have learning curves and huge gaps in their APIs that we don't find with Rust's APIs. Plus LLMs know Rust/Tokio very well, and we're finding this translates to Antiox.

We recommend pairing Antiox with:

- @dillon_mulroy's better-result for Rust-like error handling

- Pino for Tracing-like logging (but lacks spans)

- Zod for Serde-like (duh)

- Need to find: thiserror replacement

---

Quite frankly, an LLM can usually one-shot most of these modules. We're not doing anything hard here. But having this all in one package has removed significant duplicate code within our codebases and we hope it can help you too.

---

Currently supported modules:

- antiox/panic (199 B)

- antiox/sync/mpsc (1.4 KB)

- antiox/sync/oneshot (625 B)

- antiox/sync/watch (677 B)

- antiox/sync/broadcast (936 B)

- antiox/sync/semaphore (845 B)

- antiox/sync/notify (466 B)

- antiox/sync/mutex (606 B)

- antiox/sync/rwlock (778 B)

- antiox/sync/barrier (528 B)

- antiox/sync/select (260 B)

- antiox/sync/once_cell (355 B)

- antiox/sync/cancellation_token (357 B)

- antiox/sync/drop_guard (169 B)

- antiox/sync/priority_channel (1.0 KB)

- antiox/task (932 B)

- antiox/time (530 B)

- antiox/stream (3.0 KB)

- antiox/collections/deque (493 B)

- antiox/collections/binary_heap (492 B)

"Antiox" = "Anti Oxide" & short for antioxidant

(And let's be honest, we usually wish we were writing Rust instead of TypeScript. But the world runs on JS.)


@NathanFlurry @rivet_dev ohh ok I see, you're in a very good position to potentially solve it completely in the future, fair enough!


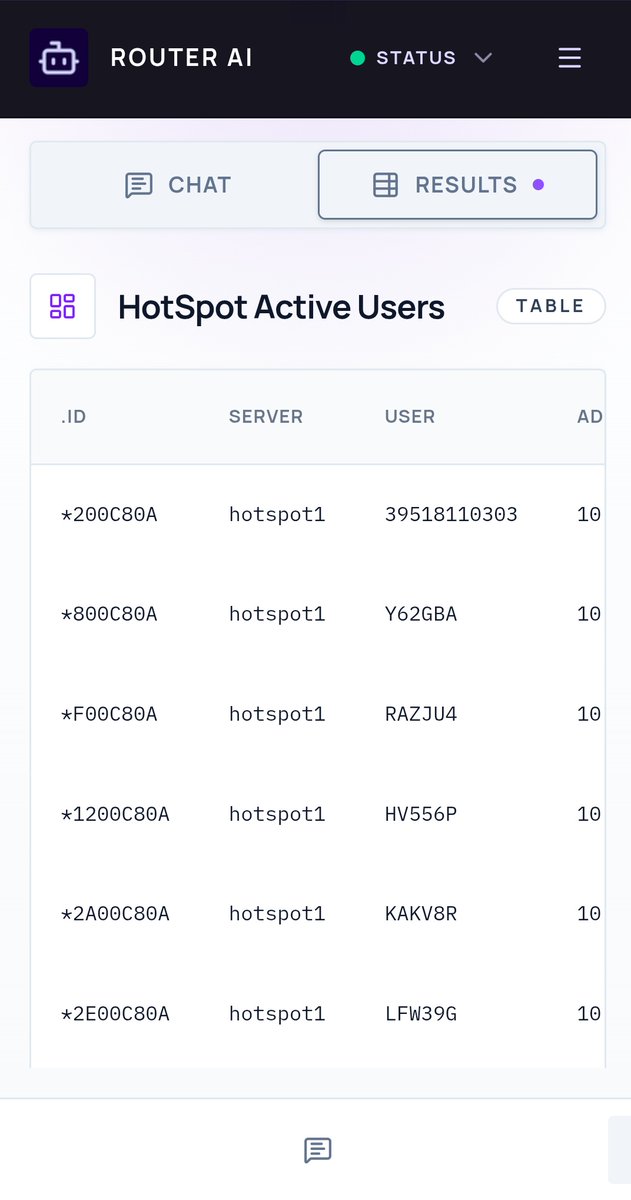

Btw, this took me 3 days... 😁 It also self-improves from troubleshooting sessions and interactions, and it's grounded with RouterOS and MikroTik knowledge so it doesn't flop. I used @_inception_ai's Mercury 2 model, so it's super fast.


@NathanFlurry @rivet_dev Well, I haven't gotten to dig in much, but I know you expect userland code, so what happens if a user ends up writing some hanging code somewhere? Also, once a promise is fired, aborting only stops the waiting, not the work of the fired task, unless you have that figured out?
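The point about aborting can be shown with a small sketch. `processChunks` and `demo` are hypothetical names for illustration: the same work is started twice, once without ever seeing the signal and once checking it between steps.

```typescript
// Hypothetical illustration: abort is only a signal, and the work stops
// only if the work itself checks that signal.
async function processChunks(total: number, signal?: AbortSignal): Promise<number> {
  let processed = 0;
  for (let i = 0; i < total; i++) {
    if (signal?.aborted) break; // cooperative check; without it the loop runs to the end
    await Promise.resolve();    // stand-in for one unit of async work
    processed++;
  }
  return processed;
}

async function demo(): Promise<{ ignoring: number; cooperative: number }> {
  const controller = new AbortController();
  const ignoring = processChunks(5);                       // never sees the signal
  const cooperative = processChunks(5, controller.signal); // checks it each step
  controller.abort();
  return { ignoring: await ignoring, cooperative: await cooperative };
}
```

In `demo`, the call that ignores the signal still finishes all five chunks after `abort()`, while the cooperative call stops after the chunk that was already in flight. Nothing in JavaScript stops a started promise from the outside, so cancellation has to be designed into the work.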


@hussein_kizz @rivet_dev love it!

`task` has an abort controller so cancellation is a lot easier to read. plus we have cancellation tokens

starting to write some agents.md rules with invariants to review abort controller usage


Hussein Kizz ★ retweeted

Damn, this is the most human voice AI TTS I have ever heard... the cloning is insane!

Mistral AI @MistralAI

🔊Introducing Voxtral TTS: our new frontier open-weight model for natural, expressive, and ultra-fast text-to-speech 🎭Realistic, emotionally expressive speech. 🌍Supports 9 languages and accurately captures diverse dialects. ⚡Very low latency for time-to-first-audio. 🔄Easily adaptable to new voices


Hussein Kizz ★ retweeted

Resend is profitable.

It's been the case since last year, but it happened by accident.

When we started, we knew we were going after giants. Companies with thousands of employees, hundreds of millions raised, some already public.

So we took the VC route. Got into YC, raised some money.

Then one day, I looked at the projections and realized we were close.

We didn't try to cut our cloud bill, reduce our DataDog costs, or slow down hiring to get there earlier.

No, we just kept focused on building the product, improving the infrastructure, and making sure customers are happy.

Everyone told us to burn cash and grow faster.

The reality is, you can grow fast, be aggressive, and still be profitable.

People mistake profitability for being anti-VC.

The right investors can be incredibly helpful (that was the case for us at least).

Being profitable means optionality. You can raise more or maybe less. You can raise now, or maybe later. Having that choice is better than not having it.

When you're profitable, every decision is about the product, not the runway.


@Taryl_Ogle In Africa you have to be crazy and insane to start because at some point you will know odds are surely against you and you have to decide to keep going, and even crying around doesn't help lol... so yeah 🔥


I'm not comfortable talking about what I'm doing in comments, it seems desperate to me, but I have been seeing your tweets, so here's my shot: I'm building policy-aware AI agents so they can be audited and controlled with a human in the loop and be effective in real-world business workflows. I quit my 9 to 5 last year to start. My edge: I work until I drop and would build any hard thing if I have to... or do any side gig to fund my startup, like today I went teaching somewhere for a few dollars lol. I basically believe in my determination and resolve that I will figure it out.


Hussein Kizz ★ retweeted

Wow I just realized I passed 50,000 followers

For those who don't know me, I used to be a machine engineer. In 2021 I had a pretty serious hand injury that kept me from doing any kind of work, let alone tying my shoes or cooking food for myself. I thought my life was over.

My identity was someone who made things and wrote code and worked hard. I had a lot of my personal values tied to my ability to make things and be smart and apply force onto the world

But I lost that not because of AI replacing me but because my hands just didn't work anymore

Then near the end of 2022, OpenAI released ChatGPT as well as the Whisper models

For the first time, though not completely, I could make things again. If I just sort of slowly copy-pasted code from ChatGPT into PyCharm, I could dictate a little bit better and almost get some work done

The next AI wave was happening but I still knew that I couldn't really join a startup. I would turn down recruiting calls and calls with founders because I knew that this wasn't enough

I still wouldn't be able to work even four or five hours a day if I had to use a computer the way that I did then.

But in many ways coding agents changed that

I went from someone thinking that my technical career was over to starting a consulting business, finding leverage, teaching people, and helping get businesses. But there is no better proof that coding agents work than the fact that I literally was able to join OpenAI to help others see what is possible

I literally went from thinking my career was over, not because I was being replaced by AI but because my body was failing, to using ChatGPT to re-enable myself to make things, and now using Codex literally at OpenAI

I feel like I already did the hard work of not tying my identity to my intelligence or to my ability to write code

And in that way I got lucky. I adopted voice coding and AI coding sooner than everyone else, not because I was some kind of trailblazer but because it was the only thing I could do

Now I gotta do that here on such a huge stage.

Only getting started
