Pinned Tweet

iFadedTooth

3.6K posts

@iCryptooth

All things are possible through our Lord and Saviour. Crypto and Tooth enthusiast 🦷 / no Risk, no Story

Joined January 2025

360 Following, 1.2K Followers

Aped $Poke at 12k mc

The concept of the tek is similar to the World Cup coin, where the different countries are deployed as micro tokens, except this one follows a Pokémon narrative. The $WorldCup one is currently at a 3.2m mc, so do as you will with this information 👀

Bullish on the tek

Dex Paid ✅

@PokefunPF

CA: FADNLNo4xc8ot3bcgbHFQuuSKg93jKfpR8pcCR76pump

POKEFUN ON PumpFUN@PokefunPF

Did you catch them all? ⚡🌱🔥💧 Pikachu, Bulbasaur, Charmander, Squirtle… the OG squad finally showed up 👀

@FIFAWorldCup 12.8m followers shilling our ticker on their profile

OG We are $26

Don’t be sidelined anon.

CA: Fqvo9cQQXVGvWCQBNsqwLkh1xdfGCPb8Ww4NNfzXpump

We are 23 days out from the @FIFAWorldCup

The slogan is “We are 26”

You will hear about it every single day as the whole world watches and listens.

Easiest front run

CA: Fqvo9cQQXVGvWCQBNsqwLkh1xdfGCPb8Ww4NNfzXpump

You will be hearing “We are 26” before, during, and after every single game this World Cup.

OG Ticker

We are waiting on @Pumpfun to approve the CTO

CA: Fqvo9cQQXVGvWCQBNsqwLkh1xdfGCPb8Ww4NNfzXpump

@Rick0xZk We are 26!!! Fqvo9cQQXVGvWCQBNsqwLkh1xdfGCPb8Ww4NNfzXpump

iFadedTooth retweeted

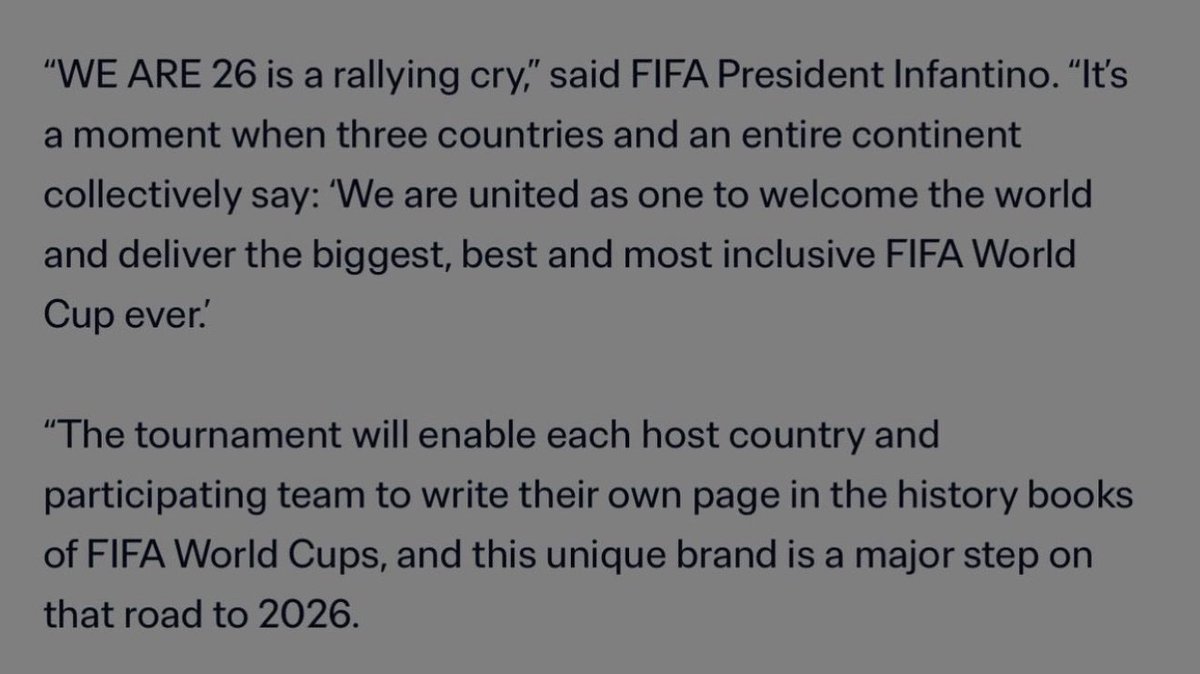

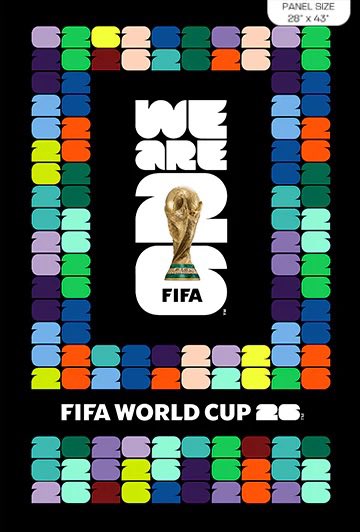

The Official Slogan for the 2026 FIFA World Cup is "WE ARE 26"

It was revealed alongside the official brand and emblem to capture the unique stories, diversity, and vibrancy of the host nations Canada, Mexico, and the United States.

OG We Are $26

CA: Fqvo9cQQXVGvWCQBNsqwLkh1xdfGCPb8Ww4NNfzXpump

iFadedTooth retweeted

This will be the next major runner $FIFA Official Slogan 👀

“We Are 26” ⚽️🏟️

Crime It!

Fqvo9cQQXVGvWCQBNsqwLkh1xdfGCPb8Ww4NNfzXpump

It's on the @FIFAcom @FIFAWorldCup site as well, go confirm it yourself 🫡

iFadedTooth retweeted