
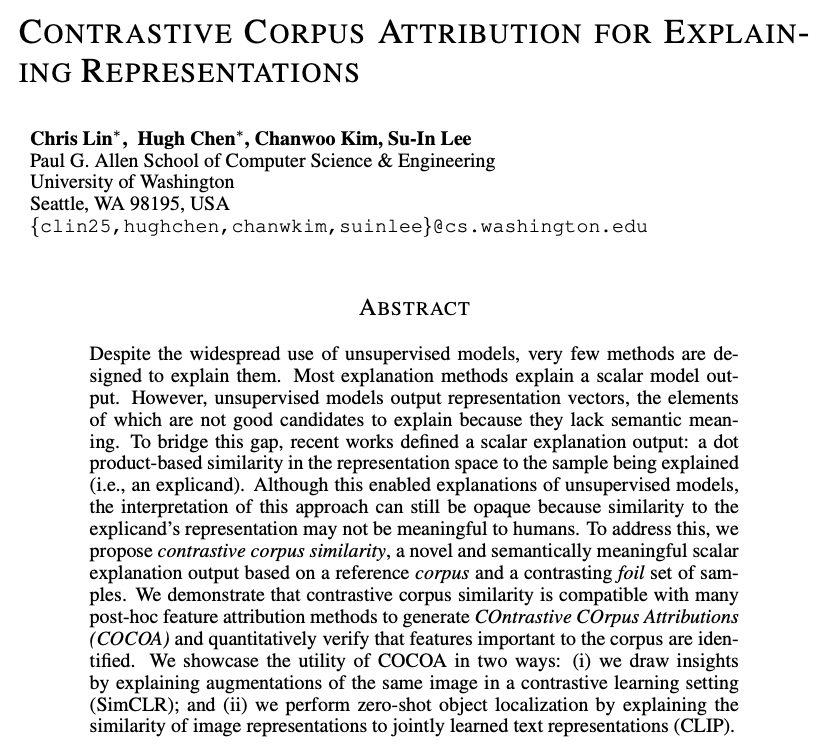

Making this class with Su-In, Hugh and Chris was one of the most fun things I did in grad school. We covered a ton of material, so definitely check out all the slides we made: courses.cs.washington.edu/courses/csep59… I'm excited to see how the course evolves in the next couple years!
