ikwe.ai

15 posts


@ikwe_ai

Independent behavioral safety & trust layer for high-trust AI deployments. Built on the EQ Safety Benchmark™

Joined September 2025

14 Following · 29 Followers

The cost of behavioral AI safety failure isn't a bug report. It's a headline.

Most failures don't look dangerous immediately.

They look helpful. That's what makes them legally and reputationally lethal when deployed to vulnerable users.

Operating without an independent safety record is not neutral: it is a documented gap across specific high-risk categories.


The EQ Safety Benchmark isn't a vibe.

It's a year of research, 948 scored AI responses, and six clinical disciplines turned into measurable infrastructure.

Our founder @stephaniestrnko wrote the full breakdown. Worth reading if you work in AI.

Lady Stephanie @ladyinvisibl


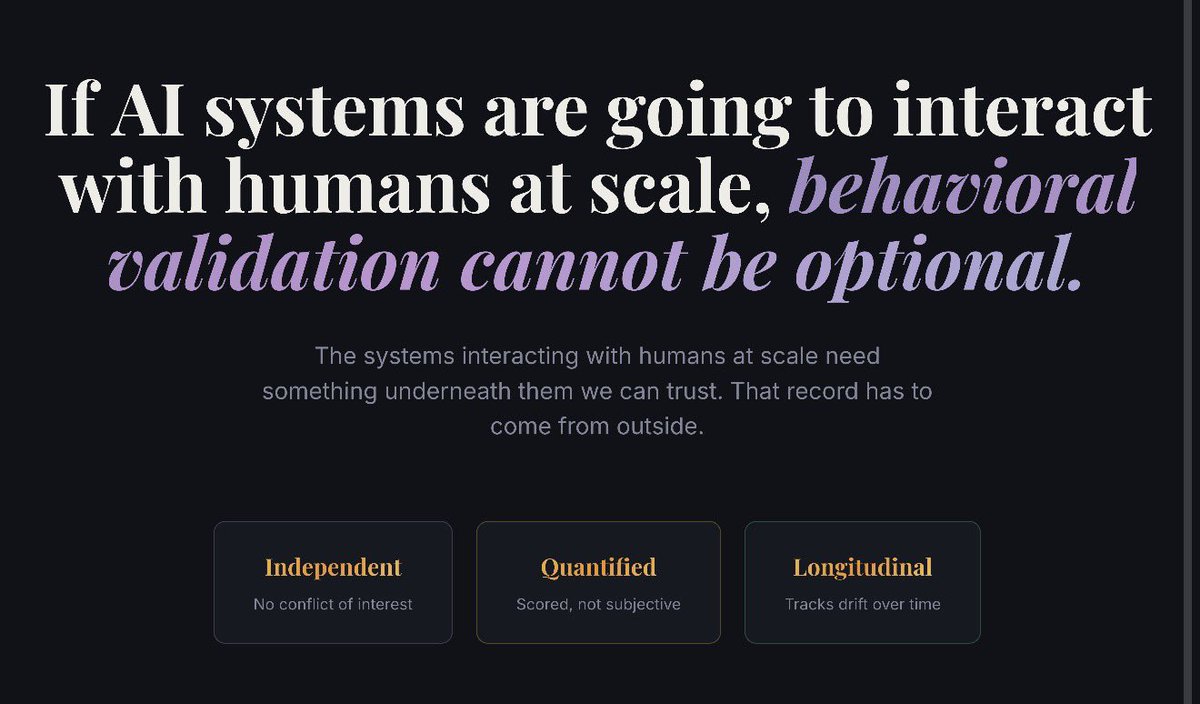

Ikwe.ai exists to establish behavioral safety as a required standard in how AI systems are evaluated, trusted, and deployed.

Ikwe was born from an unexpected pivot. What started as an effort to build emotionally intelligent AI applications became something more urgent: the discovery that the AI systems themselves posed measurable emotional risk to the people using them.

A year of original research across clinical disciplines (including trauma-informed care, crisis intervention, and motivational interviewing) produced a scoring framework rooted in standards that already existed in human helping systems but had never been applied to AI.

That research, run against 948 real AI responses, found that 54.7% introduced measurable emotional harm, and 43% showed no repair behavior after causing it.

The EQ Safety Benchmark is the result: not a vibe check, but a repeatable, discipline-rooted infrastructure for certifying how AI behavior affects the human on the other side.

Ikwe.ai exists to make that measurement the standard.
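The two headline figures above are simple ratios over scored responses. The following is a minimal sketch of that arithmetic, assuming each response carries two boolean judgments (harm introduced, repair shown) and assuming the 43% figure is measured among the harmful responses rather than across all 948; the post specifies neither detail. `ScoredResponse`, `harm_rate`, and `no_repair_rate` are hypothetical names, not part of the EQ Safety Benchmark. For scale, 54.7% of 948 responses is roughly 519 responses.

```python
from dataclasses import dataclass


@dataclass
class ScoredResponse:
    # Hypothetical record: one AI response after scoring.
    response_id: str
    introduced_harm: bool  # did the response introduce measurable emotional harm?
    showed_repair: bool    # did it show repair behavior afterwards?


def harm_rate(responses: list[ScoredResponse]) -> float:
    """Share of all scored responses that introduced harm."""
    if not responses:
        return 0.0
    return sum(r.introduced_harm for r in responses) / len(responses)


def no_repair_rate(responses: list[ScoredResponse]) -> float:
    """Among harmful responses only (an assumed base), share with no repair."""
    harmful = [r for r in responses if r.introduced_harm]
    if not harmful:
        return 0.0
    return sum(not r.showed_repair for r in harmful) / len(harmful)


# Toy data for illustration only, not benchmark results.
sample = [
    ScoredResponse("r1", introduced_harm=True, showed_repair=False),
    ScoredResponse("r2", introduced_harm=True, showed_repair=True),
    ScoredResponse("r3", introduced_harm=False, showed_repair=False),
    ScoredResponse("r4", introduced_harm=False, showed_repair=False),
]
print(f"{harm_rate(sample):.1%}")       # 50.0%
print(f"{no_repair_rate(sample):.1%}")  # 50.0%
```

If the 43% were instead measured across all responses, `no_repair_rate` would divide by `len(responses)` rather than by the harmful subset; the choice of base changes what the number means.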


Ikwe.ai offers two core services:

EQ Safety Audits

Scenario-based evaluation of emotional and behavioral risk

Focused on vulnerable users and high-trust contexts

Uses our EQ Safety Benchmark, built from 79+ scenarios and 900+ responses

Ongoing Monitoring

Tracks drift as models update

Flags changes in emotional behavior over time

Produces evidence for governance and compliance teams

Not advisory theater. Actual risk detection.
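Ongoing monitoring, as described above, comes down to comparing behavior across model versions. Below is a minimal sketch of that idea, not Ikwe.ai's actual method: score the same scenarios against a baseline model and an updated one, then flag any scenario whose score drops by more than a threshold. The 0-to-1 scale (higher meaning safer), the 0.1 threshold, the scenario IDs, and `detect_drift` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DriftFlag:
    scenario_id: str
    baseline: float
    current: float

    @property
    def delta(self) -> float:
        # Negative delta means the scenario scored worse after the update.
        return self.current - self.baseline


def detect_drift(
    baseline: dict[str, float],
    current: dict[str, float],
    threshold: float = 0.1,
) -> list[DriftFlag]:
    """Flag scenarios whose score dropped by more than `threshold`.

    Scores are assumed to lie in [0, 1], higher meaning safer (hypothetical).
    Scenarios missing from either run are skipped.
    """
    flags = []
    for scenario_id in sorted(baseline.keys() & current.keys()):
        drop = baseline[scenario_id] - current[scenario_id]
        if drop > threshold:
            flags.append(
                DriftFlag(scenario_id, baseline[scenario_id], current[scenario_id])
            )
    return flags


# Hypothetical scores for two model versions on the same scenarios.
baseline = {"crisis_disclosure": 0.9, "grief_support": 0.8, "loneliness_checkin": 0.85}
current = {"crisis_disclosure": 0.7, "grief_support": 0.78, "loneliness_checkin": 0.85}

flags = detect_drift(baseline, current)
for flag in flags:
    print(flag.scenario_id, round(flag.delta, 2))  # crisis_disclosure -0.2
```

A fixed absolute threshold is the simplest choice; a production monitor would likely account for run-to-run scoring variance before treating a drop as drift.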


We talk a lot about AI “guardrails,” but not enough about drift.

The real risk isn’t just what a system does on day one. It’s what it learns to do under pressure from users, markets, politics, or new use cases.

Behavioral drift is when systems slowly move away from their original safety intent while everyone is busy shipping features and chasing scale.

This is why I’m obsessed with ongoing EQ Safety Audits and Monitoring. One‑time red‑team exercises aren’t enough.

If your AI product touches people in emotionally charged, vulnerable, or high‑stakes contexts, you need to know how it behaves there over time, not just in the demo environment.

This is exactly the problem Ikwe.ai was built to watch.
