Ross Girshick

28 posts

@inkynumbers

Giver of vision to machines.

Joined April 2009

42 Following, 3.8K Followers

Do you want to work with me and our amazing team?

Apply at vercept.com/careers

Stack: PyTorch, React, Next.js, TypeScript

#PyTorch #NextJS #AI #React #AIStartup

@giffmana FAIR was and continues to be an amazing place! However, being in one place for a long time (8 yrs for me) can eventually become its own good reason to move. Reinitialization and randomization are important in research life. (Any talk of publication quotas is pure nonsense.)

@gruntleme It's a wonderful illustration of a giant house spider! These friends visit me from time to time in the basement (where I work from home). They have a curious range: most of Europe, a small bit of the PNW around Seattle and Vancouver, BC, and another small bit of the Mid-Atlantic.

@karpathy @giffmana @PaulKRubenstein @endernewton @sainingxie The mismatch may be an issue (I don't know), but apparently it's not a catastrophe. End-to-end or partial fine-tuning may help compensate, if it is a problem. I also find it somewhat concerning and think it could be worth investigating.

@inkynumbers @giffmana @PaulKRubenstein @endernewton @sainingxie Oh hey, following Twitter rabbit hole bears fruit :) Great, was wondering the same. Slightly unnerved about the train/test mismatch and surprised it is not an issue. Good ref to the earlier/related high-res result.

1/N The return of patch-based self-supervision! It never worked well and you had to bend over backwards with ResNets (I tried).

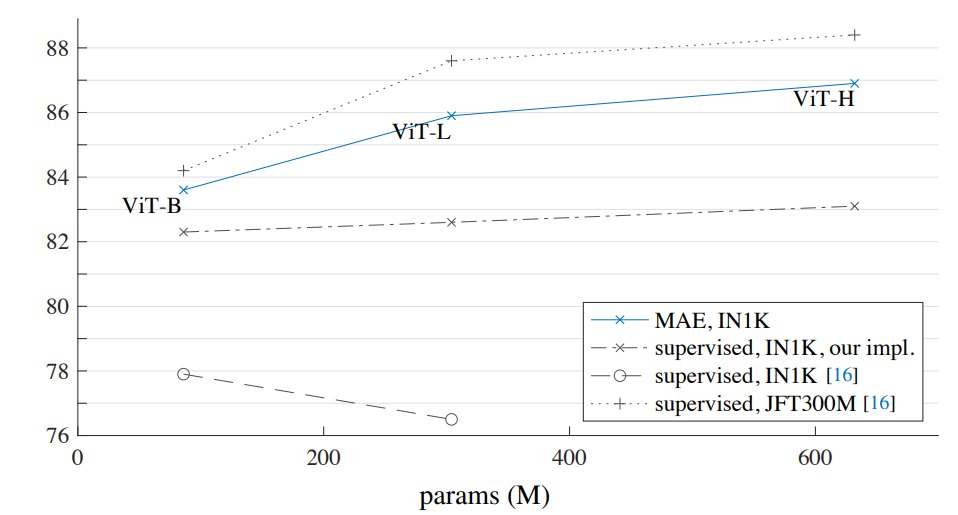

Now with ViT, very simple patch-based self-supervised pre-training rocks! First BEiT, now Masked Autoencoders: ImageNet-1k = 87.8% arxiv.org/pdf/2111.06377…

🧶

@PaulKRubenstein @endernewton @sainingxie @inkynumbers Hi Paul! They don't specify but I expect that all patches are provided.

ViT is flexible wrt the patches it sees; we already showed this via the high-res trick in the original ViT (which also works without fine-tuning).

But would be good if one of the authors could confirm.

@giffmana I agree about the use of nparams being slightly wrong here. Within the scope of the fig each "column" of points is comparable, but using flops on the x-axis would be more meaningful wrt scaling. (I actually complain about nparams as a complexity measure all the time...)

@inkynumbers re /14: ah thanks. This makes using nparams a little wrong: H/16 would have same params but less "capacity".

re style: the style is actually fine, I like the blue-gray, but this figure is clearly rushed/unpolished. One more day of love would not have hurt :)
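
A rough back-of-the-envelope sketch (not from the thread) of why nparams undersells /14: at a fixed 224×224 input, /14 gives 16×16 = 256 patches while /16 gives 14×14 = 196, so the transformer blocks have identical parameter counts but process more tokens. It assumes the standard ViT-H config (width 1280, depth 32, MLP ratio 4, one [CLS] token), counts only the blocks (patch and position embeddings, which differ slightly, are ignored), and counts multiply-accumulates rather than exact FLOPs. The helper names are hypothetical.

```python
# Rough cost comparison of ViT-H/14 vs ViT-H/16 on a 224x224 input.
# Assumed ViT-H config: width 1280, depth 32, MLP ratio 4, one [CLS] token.
# Only transformer blocks are counted; embeddings, softmax, LayerNorm and
# bias adds are ignored in the compute estimate.

def num_patches(image_size: int, patch_size: int) -> int:
    """Number of non-overlapping square patches in a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    side = image_size // patch_size
    return side * side


def block_params(width: int, mlp_ratio: int = 4) -> int:
    """Parameters (weights + biases) in one transformer block."""
    hidden = mlp_ratio * width
    attn = (width * 3 * width + 3 * width) + (width * width + width)  # qkv + output proj
    mlp = (width * hidden + hidden) + (hidden * width + width)        # two linear layers
    norms = 2 * (2 * width)                                           # two LayerNorms (scale + shift)
    return attn + mlp + norms


def block_macs(tokens: int, width: int, mlp_ratio: int = 4) -> int:
    """Multiply-accumulates in one block for a sequence of `tokens` tokens."""
    linear = tokens * (4 + 2 * mlp_ratio) * width * width  # qkv, proj and MLP matmuls
    attention = 2 * tokens * tokens * width                # Q @ K^T and attn @ V
    return linear + attention


def compare(image_size: int = 224, width: int = 1280, depth: int = 32) -> dict:
    """Per-patch-size token count, block parameters and GMACs."""
    stats = {}
    for patch in (14, 16):
        tokens = num_patches(image_size, patch) + 1  # +1 for the [CLS] token
        stats[patch] = {
            "tokens": tokens,
            "block_params": depth * block_params(width),  # independent of tokens
            "gmacs": depth * block_macs(tokens, width) / 1e9,
        }
    return stats


if __name__ == "__main__":
    for patch, s in compare().items():
        print(f"ViT-H/{patch}: {s['tokens']} tokens, "
              f"{s['block_params'] / 1e6:.0f}M block params, {s['gmacs']:.1f} GMACs")
```

Under these assumptions the block parameter counts come out identical for both patch sizes, while /14 needs roughly 30% more compute, which is why FLOPs on the x-axis would track "capacity" better than nparams.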

@giffmana @y_m_asano @endernewton @sainingxie Our bias is for det and seg transfer, rather than more cls results, so that's what we went with (lacking bandwidth for both). I do want to note the det and seg tables show IN1k sup baselines (not just self-sup). MAE (and BEiT) surpass IN1k sup convincingly, exciting to me!

@y_m_asano @endernewton @sainingxie @inkynumbers That's too old-school ;-)

Agree transfer results are limited; they do have seg and det results, but only compare to other self-sup methods in their tables.

@giffmana It's a bit buried in the caption of Table 3, but therein it says ViT-H is /14.

We debated the line-plot style and have diverging opinions of what looks nice ;).

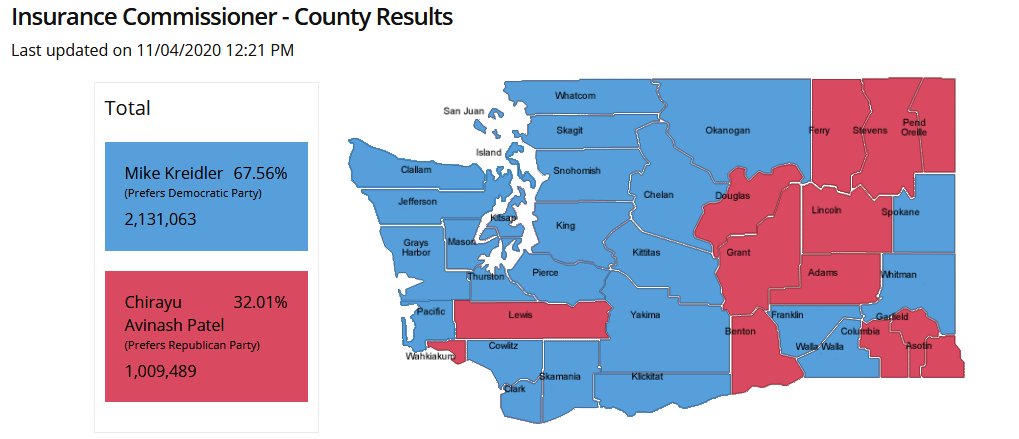

@BruneElections Reading his statement will explain a lot voter.votewa.gov/genericvotergu…

@gruntleme If I had a plastic bag, I could pick it up and put it in the SDH7 kitchen freezer. I'm sure that wouldn't bother anyone here ;-).

@gruntleme -- ah, but I do not! It seems like you're always 99% of the way to the answer whenever you ask me a question.

@gruntleme Good question. Who knows who this account actually belongs to or who might have hijacked it? Or is it an AI?

@gruntleme Yes, it takes both. Each on its own is insufficient.
