Ashish

1.7K posts

Ashish

@inqusit

Cognition, Retrieval Research at IIT, iOS dev for 10 years, Building Agentic Products, harness engineering

idk if its good or bad for his career, but Dwarkesh’s willingness to challenge powerful people on his pod is certainly commendable.

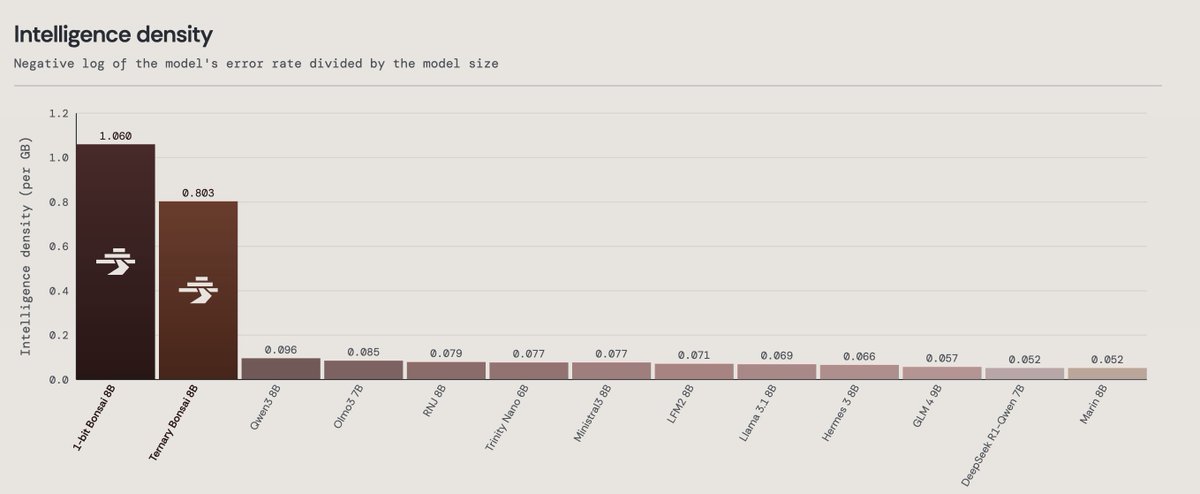

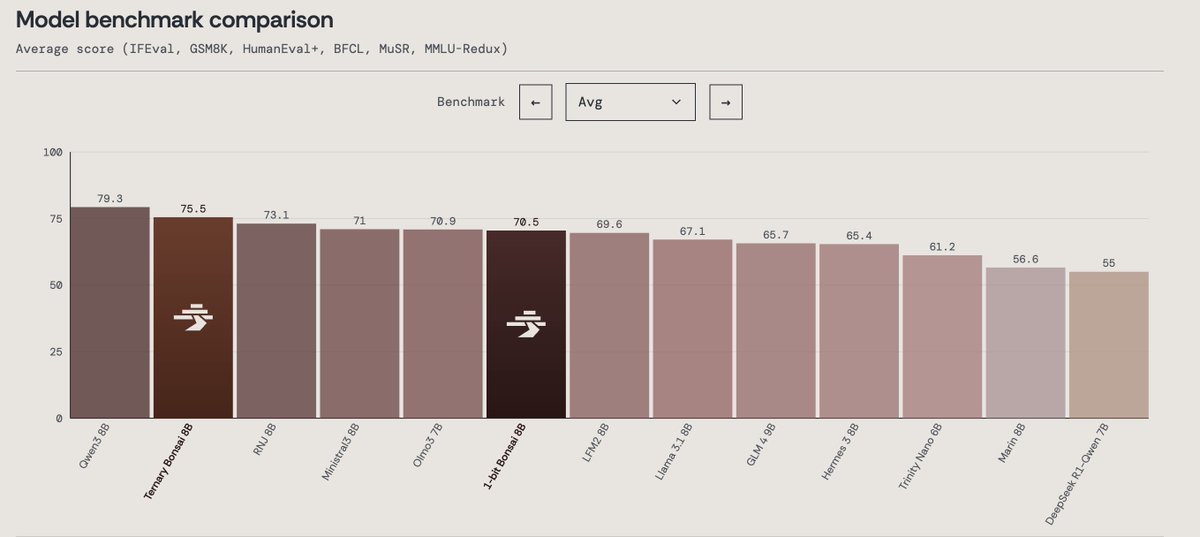

Today we’re announcing Ternary Bonsai: Top intelligence at 1.58 bits Using ternary weights {-1, 0, +1}, we built a family of models that are 9x smaller than their 16-bit counterparts while outperforming most models in their respective parameter classes on standard benchmarks. We’re open-sourcing the models under the Apache 2.0 license in three sizes: 8B (1.75 GB), 4B (0.86 GB), and 1.7B (0.37 GB).

Today we’re announcing Ternary Bonsai: Top intelligence at 1.58 bits Using ternary weights {-1, 0, +1}, we built a family of models that are 9x smaller than their 16-bit counterparts while outperforming most models in their respective parameter classes on standard benchmarks. We’re open-sourcing the models under the Apache 2.0 license in three sizes: 8B (1.75 GB), 4B (0.86 GB), and 1.7B (0.37 GB).

Opus 4.7 uses more thinking tokens, so we've increased rate limits for all subscribers to make up for it. Enjoy!