Itay Evron

528 posts

@itayevron

PhD; Research Scientist @Meta (opinions are my own)

Joined July 2018

397 Following · 821 Followers

Pinned Tweet

Itay Evron retweeted

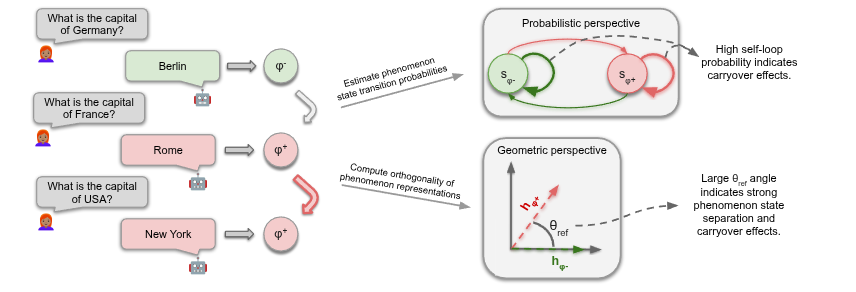

How does an LLM’s past influence its future?🤔

In our new paper with @FazlBarez, @mtutek, @boknilev, and Shay Cohen, we show that conversational history creates a "geometric trap" in the latent space, confining the model’s trajectory ➡️ making old habits (e.g., hallucinations) hard to break

English

Itay Evron retweeted

🚨 2026 @Princeton ML Theory Summer School

Mini-courses by:

- Subhabrata Sen @subhabratasen90

- Lenaic Chizat @LenaicChizat

- Sinho Chewi

- Elliot Paquette @poseypaquet

- Elad Hazan @HazanPrinceton

- Surya Ganguli @SuryaGanguli

August 3 - 14, 2026

Apply by March 31. Link 👇

Sponsors: @NSF, @PrincetonAInews, @EPrinceton, @JaneStreetGroup, @DARPA, @PrincetonPLI, Princeton NAM, Princeton AI2, Princeton PACM

Some amazing speakers from previous years: @Andrea__M, @TheodorMisiakie, @KrzakalaF, @_brloureiro, @rakhlin, @DimaKrotov, @CPehlevan, @SoledadVillar5, @SebastienBubeck, @tengyuma

English

For a long time now, this has been the only metric I follow religiously.

The models are getting smarter, that's true, and at the same time more prone to making things up.

Amit Mandelbaum @Amit_Mandelbaum

Still shocked at how badly Gemini makes things up compared to other models, even with its strongest models and even when I literally ask for a source for everything it writes. This doesn't happen with Claude or ChatGPT. Gemini just invents things, nonstop.

Hebrew

Itay Evron retweeted

1/6 🧵 Calibration is hard. Multicalibration—fixing errors across every possible subgroup—is usually impossible at scale. Until now. Introducing MCGrad: A production-ready multicalibration library from Meta, accepted at KDD 2026. 🚀 github.com/facebookincuba…

English

Itay Evron retweeted

A glimpse into the research I’ve been leading over the past year at Meta 🥹.

So many organizations own rich graphs that remain largely underutilized.

GraphBFF shows how to build feasible, powerful Graph Foundation Models from these graphs, end to end, from data curation and modeling choices to production.

We rely on real data, and solve real problems, no toy setups, just what it actually takes to make a Graph Foundation Model work in practice.

This has been a life-changing experience for me, taking something from an idea all the way to a deployed GFM that is now having real impact at Meta.

The preprint is now available on arXiv.

English

@miniapeur Higher-Order Learning Dynamics on Cellular Complexes

M Alain, Terence Tao, Yoshua Bengio

Annals of Mathematics, 2026

English

Itay Evron retweeted

📌 [1/4] A Graph Meta-Network for Learning on Kolmogorov-Arnold Networks

We introduce a weight-space model for KANs, where learning happens directly over the KANs' 1D functions. This work was done during my Meta internship.

openreview.net/pdf?id=ONpyYav…

English

@boknilev @HebAcademy And of this it is written in Sifrei Zuta:

"I am astonished at you, Yonatan, that you said such a thing"

Hebrew

Itay Evron retweeted

One of my papers I'm especially fond of, now accepted to ALT2026. 🥳

A question kept me busy for a few years:

Do continual linear models under random task orderings converge more slowly in high dimension?

By reducing this problem to stepwise-optimal SGD, we show they do not! pic.twitter.com/iiFzqlHb1M

Itay Evron @itayevron

In continual learning of linear models, random task orderings diminish forgetting even in high dimensions!

Better Rates for Random Task Orderings in Continual Linear Models

Evron*, @ranlevinstein*, @MatanSchliserm1*, Sherman*, Koren, @soudry_daniel, Srebro

arxiv.org/abs/2504.04579

English

Itay Evron retweeted

Remember our ICML25 "Graph Learning Will Lose Relevance Due To Poor Benchmarks"?

Fear no more! GraphBench is here! 🤩

We give you: The next generation of Graph Benchmarking! Including:

- New, shiny, high-quality datasets spanning seven diverse domains, including chip design, algorithmic reasoning, and weather forecasting

- Standardized hyperparameter tuning procedures, enabling fair and principled model comparison

- Strong, transparent baselines that accurately reflect algorithmic progress

- Comprehensive coverage of graph learning tasks, datasets, and modern GNN architectures

- Reproducibility-focused design, minimizing variance and evaluation artifacts

- Forward-looking benchmark designed for next-generation graph learning research

A huge collab with: @chrsmrrs, @mmbronstein, @michael_galkin, @HolgerHoo, Timo Stoll, @ChendiQian, @benfinkelshtein, Ali Parvis, Darius Weber, @ffabffrasca, @HadarShavit, @antoinesrdin, Arman Mielke, Marie Anastacio, Erik Müller,

English

Itay Evron retweeted

Since linear probes are popular again, maybe it’s a good time to point to the many issues with them, which were examined in detail in the NLP Interpretability community. The “mechanistic?” piece by @sarahwiegreffe and @nsaphra has many useful pointers.

aclanthology.org/2024.blackboxn…

English