Maks

243 posts

@itsmaksX

reinforcement learning feels good | tinkering with robot learning @rai_inst (Boston Dynamics AI Institute) | previously at Google X's https://t.co/1FJAP2frcr

@carlosdponx @ErenChenAI just checked the manual and found Unitree doesn't provide a separate e-stop controller for G1. the only way to e-stop is L2+B (not a single button! I think this is not a good design.)

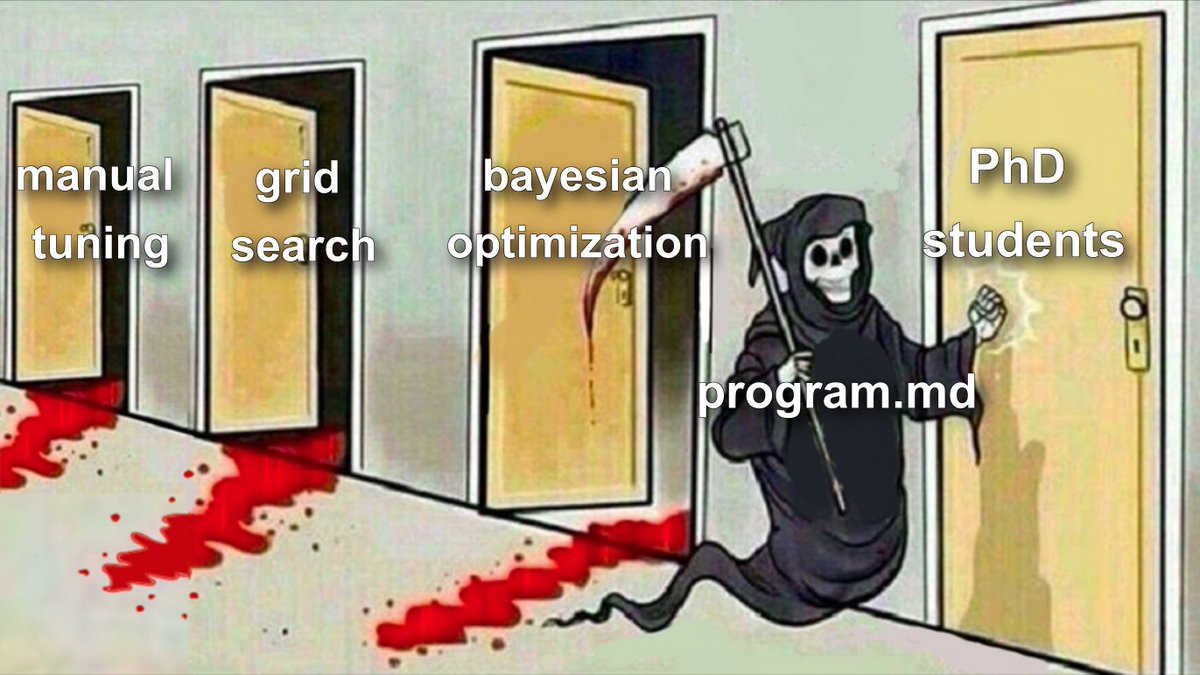

I've never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and far between. I have a sense that I could be 10X more powerful if I just properly string together what has become available over the last ~year and a failure to claim the boost feels decidedly like skill issue. There's a new programmable layer of abstraction to master (in addition to the usual layers below) involving agents, subagents, their prompts, contexts, memory, modes, permissions, tools, plugins, skills, hooks, MCP, LSP, slash commands, workflows, IDE integrations, and a need to build an all-encompassing mental model for strengths and pitfalls of fundamentally stochastic, fallible, unintelligible and changing entities suddenly intermingled with what used to be good old fashioned engineering. Clearly some powerful alien tool was handed around except it comes with no manual and everyone has to figure out how to hold it and operate it, while the resulting magnitude 9 earthquake is rocking the profession. Roll up your sleeves to not fall behind.

One lesser-known fact about glove-based data collection: it produces higher-quality data than teleop on contact-rich tasks. Remote teleop can’t provide good force feedback, but gloves do naturally, making tasks like sock folding, which rely on feel, far easier to capture.

See Spot perform dynamic whole-body manipulation. Using a combination of reinforcement learning (RL) and sampling-based control, the robot is able to autonomously drag, roll, and stack tires weighing 15 kg (33 lb), well above its maximum arm lift capacity. Learn more about coordinating locomotion and manipulation processes: rai-inst.com/resources/blog…