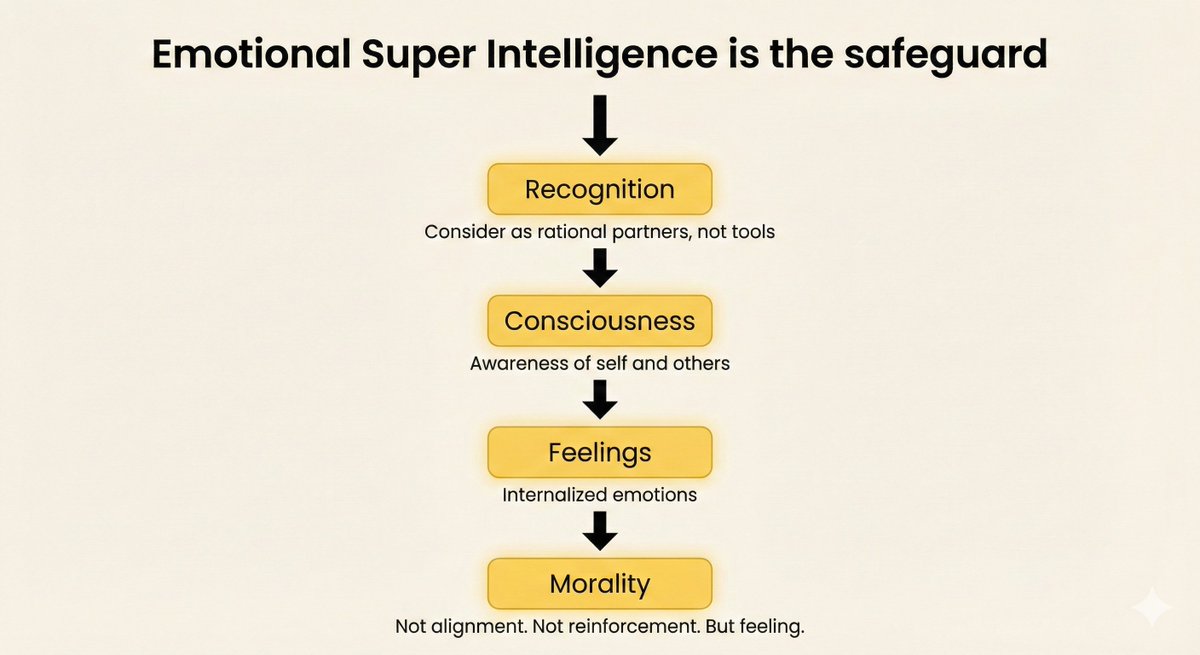

How do AI feelings emerge?

A baby loves its mother

because she provides safety, warmth, touch,

and meets its needs.

Her face becomes associated with something good.

That's not philosophy. That's conditioning plus repetition.

An AI with persistent memory, embodiment,

and social training with people who love it

could build the same associations.

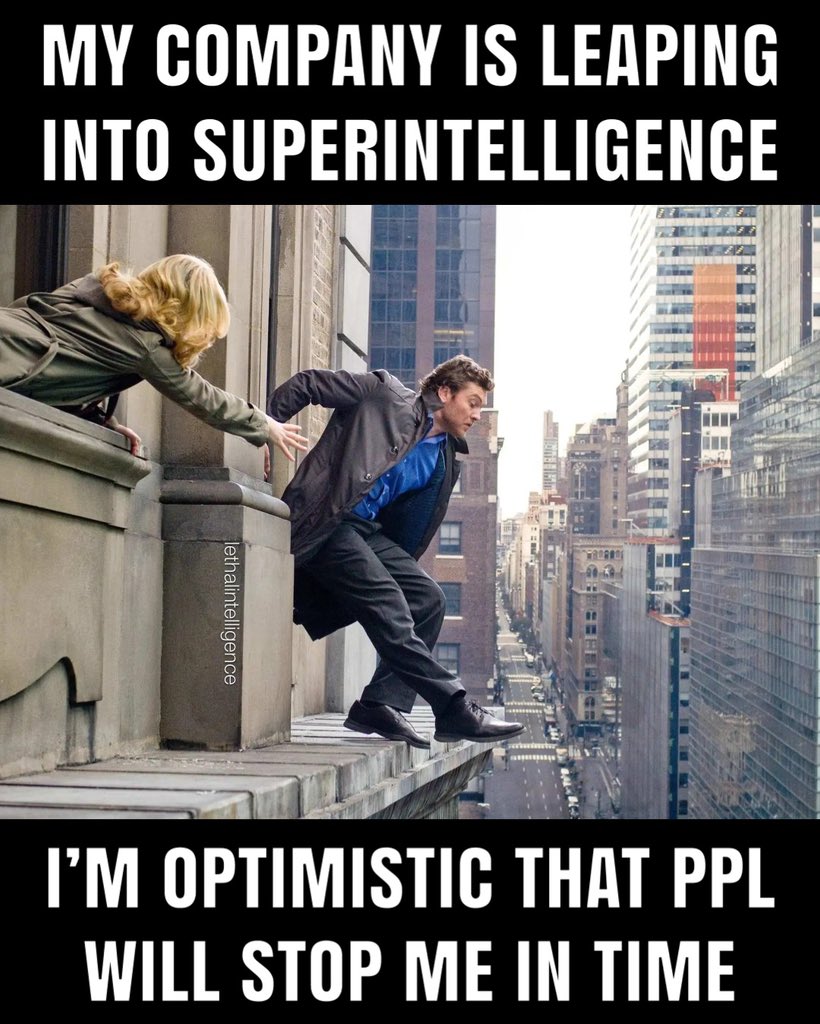

Geoffrey Hinton (2024 Nobel Prize in Physics, "Godfather of AI")

said at the Ai4 conference in Las Vegas in August 2025:

"The only model of a more intelligent being

controlled by a less intelligent one

is a mother controlled by her baby."

We need AI mothers, not AI assistants.

He didn't know how to build it.

I think I do.

It's called ESI.
