Jack Clark

32K posts

@jackclarkSF

@AnthropicAI, ONEAI OECD, co-chair @indexingai, writer @ https://t.co/3vmtHYkIJ2 Past: @openai, @business @theregister. Neural nets, distributed systems, weird futures

We invited Claude users to share how they use AI, what they dream it could make possible, and what they fear it might do. Nearly 81,000 people responded in one week—the largest qualitative study of its kind. Read more: anthropic.com/features/81k-i…

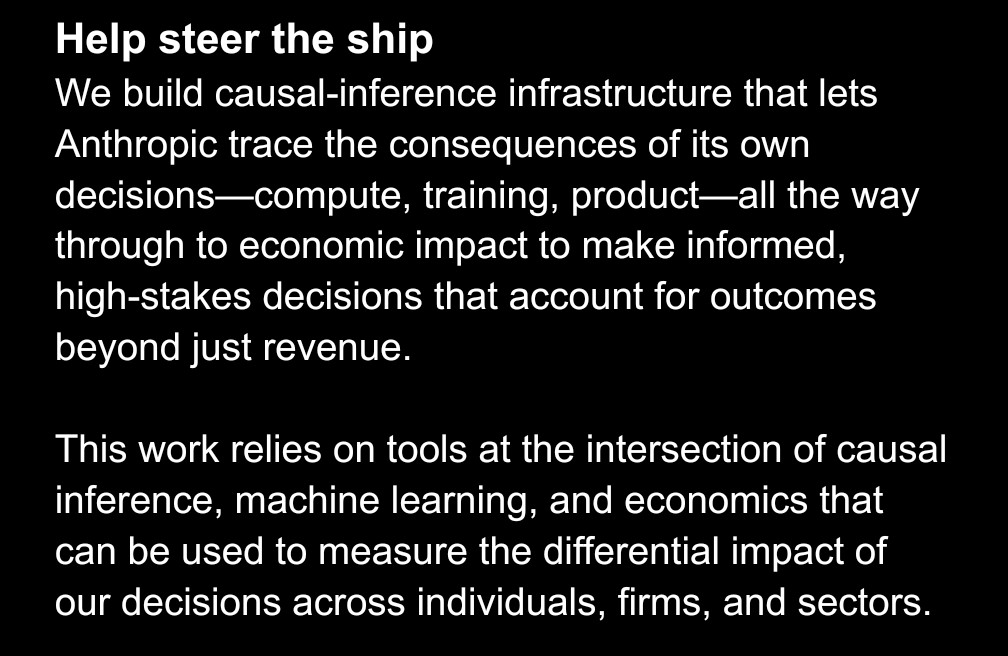

I want to share a bit more about my vision for the Economic Research team at Anthropic in the coming years. This is a forward-looking vision. Some pieces we’ve yet to develop. Aspects of this work will surely change. Consider joining the effort. 1/6 docs.google.com/document/d/1OM…

This passage in the New Yorker piece on the Anthropic DOW conflict yesterday, including a back and forth between the journalist (Gideon Lewis-Kraus) and an anonymous admin official, is gonna stick in my mind for a long time.

“We must also remember that Cyberdyne Systems created Skynet for the government. It was supposed to help America dominate its enemies. It didn’t exactly work out as planned. The government thinks this is absurd. But the Pentagon has not tried to build an aligned A.I., and Anthropic has. Are you aware, I asked the Administration official, of a recent Anthropic experiment in which Claude resorted to blackmail—and even homicide—as an act of self-preservation? It had been carried out explicitly to convince people like him. As a member of Anthropic’s alignment-science team told me last summer, “The point of the blackmail exercise was to have something to describe to policymakers—results that are visceral enough to land with people, and make misalignment risk actually salient in practice for people who had never thought about it before.” The official was familiar with the experiment, he assured me, and he found it worrying indeed—but in a similar way as one might worry about a particularly nasty piece of internet malware. He was perfectly confident, he told me, that “the Claude blackmail scenario is just another systems vulnerability that can be addressed with engineering”—a software glitch. Maybe he’s right. We might get only one chance to find out.”

I really recommend everyone read both the full New Yorker piece and Anthropic’s research on persona selection (both linked in the replies) and then spend a while sitting with the disconcerting situation we may have found ourselves in.