Jan Held

81 posts

@janheld14

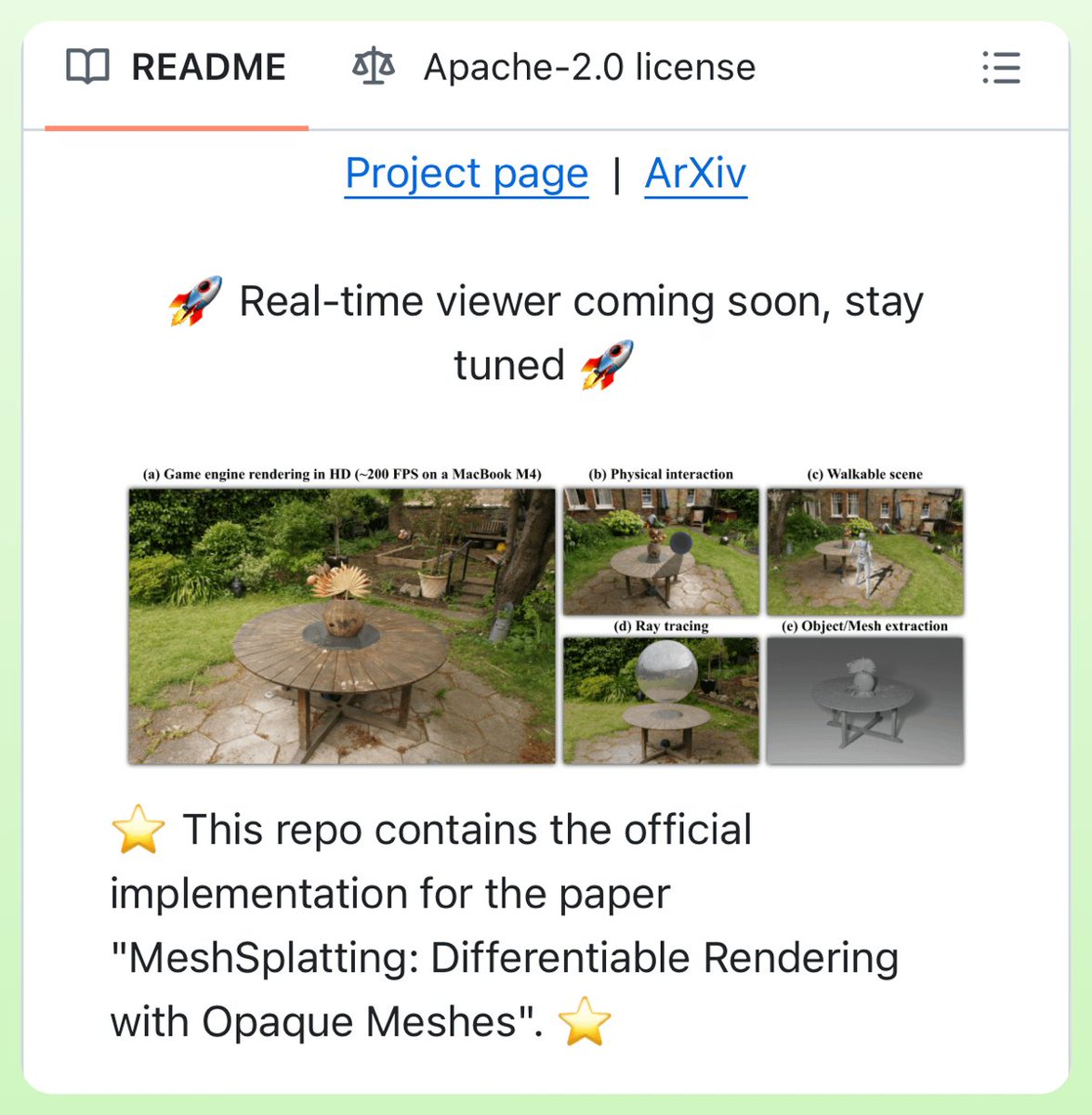

💻 Building 3D worlds at @SpAItial_AI 🤖 Prev projects: Triangle Splatting & MeshSplatting & 3D Convex Splatting & more

The deadline is in a few days. Please submit as soon as possible to the 3DMV workshop @CVPR #CVPR2026. We also accept non-archival track submissions: if your paper is already accepted at CVPR 2026 (or a top AI conf in the last 2 yrs), you can submit and showcase your work at the workshop.

🚀🚀Want to build 𝐖𝐨𝐫𝐥𝐝 𝐒𝐢𝐦𝐮𝐥𝐚𝐭𝐨𝐫𝐬?🚀🚀 We're hiring in Munich or London! Check it out: spaitial.ai/careers SpAItial is pioneering the next generation of World Models, pushing the boundaries of generative AI, computer vision, and the simulation of reality. We are moving beyond 2D pixels to build models that natively understand the physics and geometry of our world. Our mission is to redefine how industries, from robotics and AR/VR to gaming and cinema, generate and interact with physically-grounded 3D environments. We’re looking for individuals who are bold, innovative, and driven by a passion for pushing the boundaries of what’s possible. You should thrive in an environment where creativity meets challenge and be fearless in tackling complex problems. Our team is built on a foundation of dedication and a shared commitment to excellence, so we value people who take immense pride in their work and place the collective goals of the team above personal ambition. As a part of SpAItial, you’ll be at the forefront of the AI revolution in generative AI technology, and we want you to be excited about shaping the future of this dynamic field. If you’re ready to make an impact, embrace the unknown, and collaborate with a talented group of visionaries, we want to hear from you. #worldmodels #GenAI #3D #spatialintelligence

Paper submissions for the @CVPR Third Workshop for Learning 3D with Multi-View Supervision (3DMV) are now OPEN. Papers accepted at the workshop will be archived in the CVPR proceedings. Topics, deadlines, a fantastic lineup of speakers, and a tentative schedule below 🚀 Details: 3dmv.org/2026/

Happy to share that the THIRD Workshop for Learning 3D with Multi-View Supervision (3DMV) is coming to @CVPR #CVPR2026 in Colorado this summer! The workshop will feature great speakers, posters, discussions, and paper publications in the CVPR proceedings! 3dmv.org/2026/

Introducing Nexels: Neurally-Textured Surfels for Real-Time Novel View Synthesis with Sparse Geometries. Nexels render in real time at high quality without needing millions of primitives. Site: lessvrong.com/cs/nexels/ Paper: arxiv.org/pdf/2512.13796 Code: github.com/victor-rong/ne…

🚀 Announcing Echo — our new frontier model for 3D world generation. Echo turns a simple text prompt or image into a fully explorable, 3D-consistent world. Instead of disconnected views, the result is a single, coherent spatial representation you can move through freely. This is part of a bigger shift in AI: from generating pixels and tokens to generating spaces. Echo predicts a geometry-grounded 3D scene at metric scale, meaning every novel view, depth map, and interaction comes from the same underlying world — not independent hallucinations. Once generated, the world is interactive in real time. You control the camera, explore from any angle, and render instantly — even on low-end hardware, directly in the browser. High-quality 3D world exploration is no longer gated by expensive equipment. Under the hood, Echo infers a physically grounded 3D representation and converts it into a renderable format. For our web demo, we use 3D Gaussian Splatting (3DGS) for fast, GPU-friendly rendering — but the representation itself is flexible and can be easily adapted. Why this matters: consistent 3D worlds unlock real workflows — digital twins, 3D design, game environments, robotics simulation, and more. From a single photo or a line of text, Echo builds worlds that are reliable, editable, and spatially faithful. Echo also enables scene editing and restyling. Change materials, remove or add objects, explore design variations — all while preserving global 3D consistency. Editing no longer breaks the world. This is only the beginning. Echo is the foundation for future world models with dynamics, physical reasoning, and richer interaction — environments that don’t just look right, but behave right. Explore the generated worlds on our website and sign up for the closed beta. The era of spatial intelligence starts here. 🌍 #Echo #WorldModels #SpatialAI #3DFoundationModels Check it out: spaitial.ai