Jaski

2.9K posts

@Jas_Jaski

Intelligence | Energy | Space Ad Astra

This is wild. SpaceX now has the right to BUY Cursor for $60B. Or pay them $10 billion to walk away. To put it in perspective, Cursor was worth $9.9 billion total in May of last year. Let's have a closer look at the numbers.

Start with the $60 billion. Cursor was already raising money this week at a $52 billion valuation from a16z and Nvidia. The Elon offer sits 15% above a number that was already on the table. The next round priced in, with a one-year fuse.

The $10 billion is the real number. That's what SpaceX pays even if it walks away and never buys the company. The walk-away fee alone is more than the entire company was worth 12 months ago.

Now the strategic logic. Cursor stopped being just an editor in March. They shipped Composer 2, their own model, and it beat Claude Opus 4.6 on Terminal-Bench at one-tenth the price. The catch is that frontier coding models need frontier compute, and the only labs with frontier compute are the same ones building competing coding products. OpenAI shipped Codex. Anthropic shipped Claude Code. Google has Gemini CLI. Cursor was renting capacity from every company trying to kill it.

Colossus is the way out. 230,000 GPUs in Memphis today, 1 million by year end, the biggest training cluster on Earth. The Information already reported Cursor is renting tens of thousands of those chips to train Composer 3. SpaceX is also building Grok Code, so they're not a clean partner. But xAI losing the coding race to Cursor is a better outcome for SpaceX than Cursor losing the coding race to OpenAI.

The trade Cursor made: gave up the right to be acquired by anyone else for one year. Got training compute at a scale no other lab would sell them. Got $10 billion guaranteed if Elon walks. OpenAI tried to buy Cursor in early 2025 and got rejected. Cursor stays independent for at least 12 more months and gets to train on the biggest cluster on earth doing it.

Elon just bought a one-year call option on Cursor for $10 billion. That's the deal.
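For anyone who wants to check the arithmetic, here is a quick sketch using only the figures quoted in the post (in billions of USD). The variable names and the `premium_pct` helper are made up for illustration, and the numbers are taken at face value from the post, not independently verified.

```python
# Figures as quoted in the post, in billions of USD (not independently verified).
OPTION_PRICE = 60.0        # price at which SpaceX may buy Cursor
ROUND_VALUATION = 52.0     # valuation of the round reportedly being raised
WALK_AWAY_FEE = 10.0       # paid if SpaceX does not buy
VALUATION_LAST_MAY = 9.9   # Cursor's valuation in May of last year


def premium_pct(offer: float, reference: float) -> float:
    """Percentage by which `offer` exceeds `reference` (hypothetical helper)."""
    return (offer / reference - 1.0) * 100.0


premium = premium_pct(OPTION_PRICE, ROUND_VALUATION)
fee_vs_old_valuation = WALK_AWAY_FEE / VALUATION_LAST_MAY
fee_share_of_strike = WALK_AWAY_FEE / OPTION_PRICE

print(f"Premium over the round: {premium:.1f}%")
print(f"Walk-away fee vs. last May's valuation: {fee_vs_old_valuation:.2f}x")
print(f"Walk-away fee as a share of the purchase price: {fee_share_of_strike:.1%}")
```

The premium works out to roughly 15%, and the fee comes out slightly above last May's valuation, which matches both claims in the post.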

I’ve wanted to do this for a decade. But I never did - I refuse to give any company my DNA. It is me. So this week I sequenced my genome entirely at home. Literally on my kitchen table. I never exposed my DNA sequence to the internet. Not at any point. I used a MinION to do the sequencing (it’s smaller + weighs less than an iPhone). I used open-source DNA models for the analysis (Evo2 and AlphaGenome) running locally on a DGX Spark and Mac Studio. I traced mechanisms behind my family’s multigenerational autoimmune conditions that no clinician has been able to understand. When I set out to do this I didn’t know if it would actually work. It does. Your genome is the most private data you will ever have. You probably shouldn’t let it leave your house.

SpaceX's Gigabay in Florida is coming along pretty well, ain't it? 😉 📸 - @NASASpaceflight

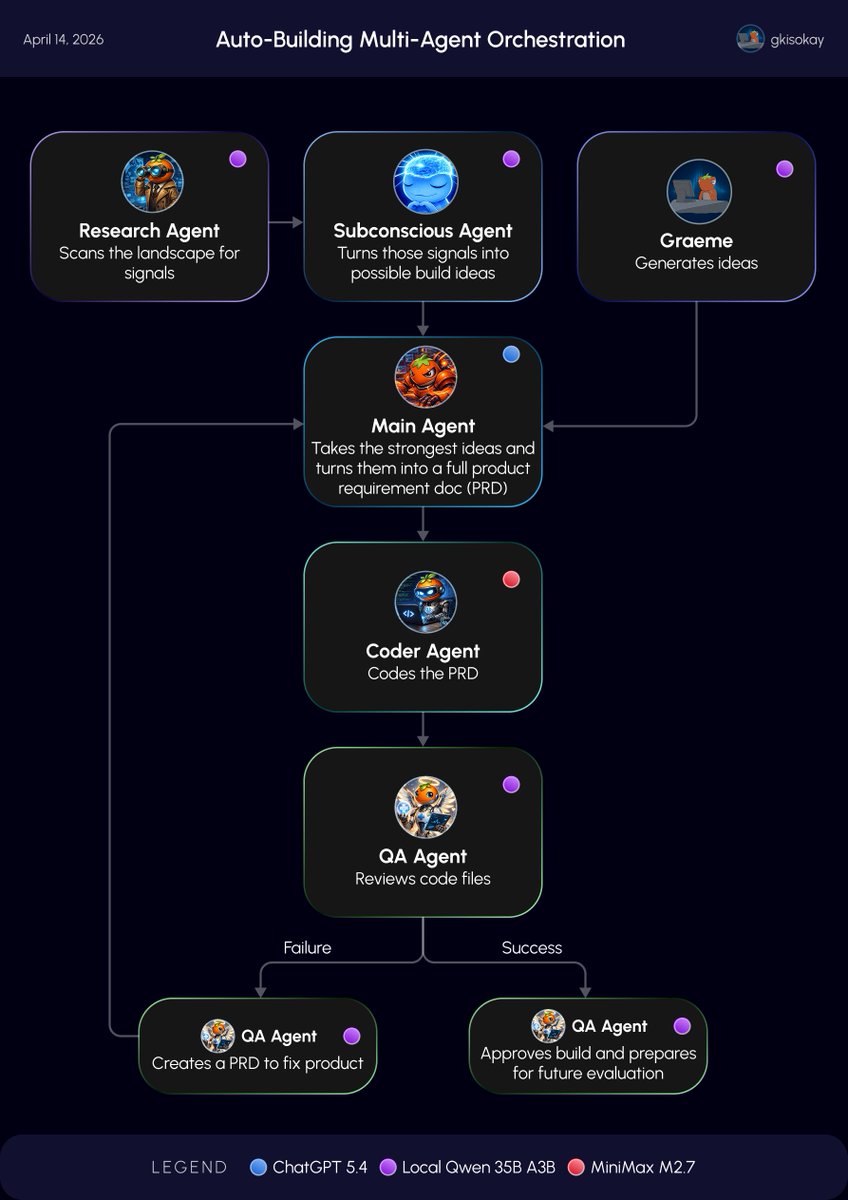

Day 6 of Building AGI for my Hermes Agent: The Crew Arrives 🧠

Today, the system stopped being a single experimental mind and became a coordinated crew. Up until now, the subconscious agent could freely think, explore, and generate new build ideas. So today I built the first multi-agent orchestration loop around it, giving the system specialized roles for research, planning, building, and verification.

The agents in my crew are:

1. Main agent: Owns direction, decision-making, and product planning
2. Subconscious agent: Thinks freely, explores weird ideas, and proposes new builds
3. Research agent: Scans daily AI news, updates, and relevant developments
4. Coder agent: Builds from the product plans
5. QA agent: Tests the output, checks quality, and pushes failed work back into the loop

The workflow goes:

Research agent scans the landscape for signals

↓

Subconscious agent turns those signals into possible build ideas

↓

Main agent takes the strongest ideas and turns them into a full product requirement doc (PRD)

↓

Coder agent builds from the PRD

↓

QA agent reviews the result. If the build passes, it's queued for future evaluation. If it fails, QA creates a fix PRD and sends it back to the main agent, restarting the loop until the system improves the output ↑

It is still early, and this is nowhere near AGI, but this is the first version of something that looks more like a functioning cognitive team than a single agent blindly building whatever comes to mind.

The next step is making the loop smarter:

- better filtering of which ideas deserve resources
- long-term evaluation cycles for new products
- tighter QA standards so weak builds do not survive
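A rough sketch of what the research → subconscious → main → coder → QA loop could look like in code. This is not the actual Hermes implementation: every function name, the `PRD` dataclass, the toy QA rule, and the `max_rounds` cap are assumptions made up for illustration, and each agent is a stub where a real system would call a model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PRD:
    idea: str
    revision: int = 0  # 0 = original PRD, >0 = fix PRD produced by QA


def research_agent() -> List[str]:
    # Stub: a real agent would scan daily AI news for signals.
    return ["signal A", "signal B"]


def subconscious_agent(signals: List[str]) -> List[str]:
    # Stub: turns each signal into a possible build idea.
    return [f"build idea from {s}" for s in signals]


def main_agent_plan(ideas: List[str]) -> PRD:
    # Stub for "take the strongest idea": here, simply the first one.
    return PRD(idea=ideas[0])


def main_agent_accept_fix(fix: PRD) -> PRD:
    # Stub: the main agent receives QA's fix PRD and restarts the loop with it.
    return fix


def coder_agent(prd: PRD) -> str:
    # Stub: "builds" by describing what it built.
    return f"build of '{prd.idea}' (rev {prd.revision})"


def qa_agent(prd: PRD, build: str) -> Optional[PRD]:
    # Toy rule (assumption): fail the first attempt, pass after one fix.
    # Returns a fix PRD on failure, or None when the build passes.
    if prd.revision < 1:
        return PRD(idea=prd.idea, revision=prd.revision + 1)
    return None


def run_crew(max_rounds: int = 5) -> List[str]:
    """Run one pass of the pipeline; return builds queued for evaluation."""
    queued: List[str] = []
    prd = main_agent_plan(subconscious_agent(research_agent()))
    # max_rounds is an added safety bound so a bad idea cannot loop forever.
    for _ in range(max_rounds):
        build = coder_agent(prd)
        fix = qa_agent(prd, build)
        if fix is None:
            queued.append(build)  # passed QA: queue for future evaluation
            break
        prd = main_agent_accept_fix(fix)  # failed QA: back to the main agent
    return queued


queued = run_crew()
print(queued)
```

The cap on rounds is a design choice of this sketch, not something from the post: without it, a build that never passes QA would keep cycling between the coder and QA indefinitely.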

Talk is cheap, show me the code. Code is cheap, show me the prompt.