John D. Patterson: [email protected]

332 posts

John D. Patterson: [email protected]

@jdpttrsn

AI Engineer @ Hupside, Prev. Research Professor @ Penn State University—cognitive science, computational modeling, category learning, creativity, education.

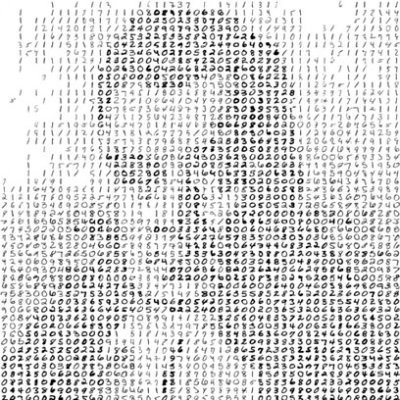

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then: - the human iterates on the prompt (.md) - the AI agent iterates on the training code (.py) The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc. github.com/karpathy/autor… Part code, part sci-fi, and a pinch of psychosis :)

Postdoc opportunity! Join the Cognitive Neuroscience of Creativity Lab @PennState for NSF-funded projects on AI, creativity assessment, & neuroimaging. Send CV & research interests to rebeaty@psu.edu. Job ad coming soon. Come do creativity science with us!

Congrats to Psych Grad Student Paul DiStefano for being awarded "people's choice" in the Three Minute Thesis (3MT) competition for his entry: "Is a Hotdog a Sandwich?: Measuring Overinclusive Thinking and Creativity” gradschool.psu.edu/career-and-pro…

Here's our latest paper on automated creativity assessment, led by CNCL grad student @PaulVDiStefano3. We trained Large Language Models to predict human ratings of metaphor creativity, extending AI creativity scoring to figurative language. doi.org/10.1080/104004…