Jonathan Halcrow

4) Building a company to build a technology to accelerate science

Now I'm starting something new, focused on core bottlenecks that could unlock step-change acceleration across science and technology. It's ambitious. I'm about to learn a lot and be humbled. I see many parallels between this new journey and my weeks-long backpacking trips in the Alaskan wilderness with no guide and no trail. If this excites you and you want to learn more, reach out!

Jonathan Halcrow retweeted

An exciting milestone for AI in science: Our C2S-Scale 27B foundation model, built with @Yale and based on Gemma, generated a novel hypothesis about cancer cellular behavior, which scientists experimentally validated in living cells.

With more preclinical and clinical tests, this discovery may reveal a promising new pathway for developing therapies to fight cancer.

@suchenzang tbh it’s pretty obvious who the strongest L8 in Gemini is

Jonathan Halcrow retweeted

While I'm at home with my newborn (👶🏻❤️), you can still catch my work at #NeurIPS🧵:

@jhalcrow you're supposed to post your own, followed by the worst career advice imaginable

Jonathan Halcrow retweeted

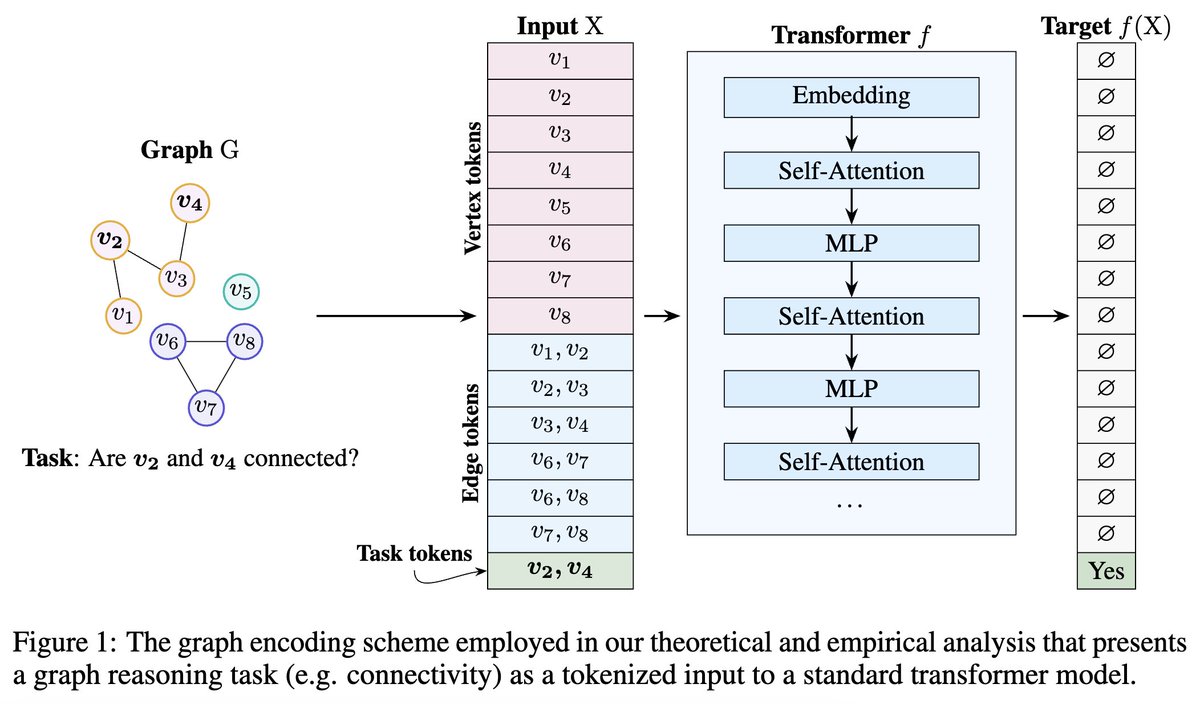

🚨 Transformer theory alert 🚨

What algorithms can transformers execute efficiently?

Our preprint sheds some light on reasoning capabilities of transformers, now in 𝒓𝒆𝒂𝒍𝒊𝒔𝒕𝒊𝒄 𝒑𝒂𝒓𝒂𝒎𝒆𝒕𝒆𝒓 𝒓𝒆𝒈𝒊𝒎𝒆𝒔.

Paper: arxiv.org/abs/2405.18512

More in thread! 1/8

Jonathan Halcrow retweeted

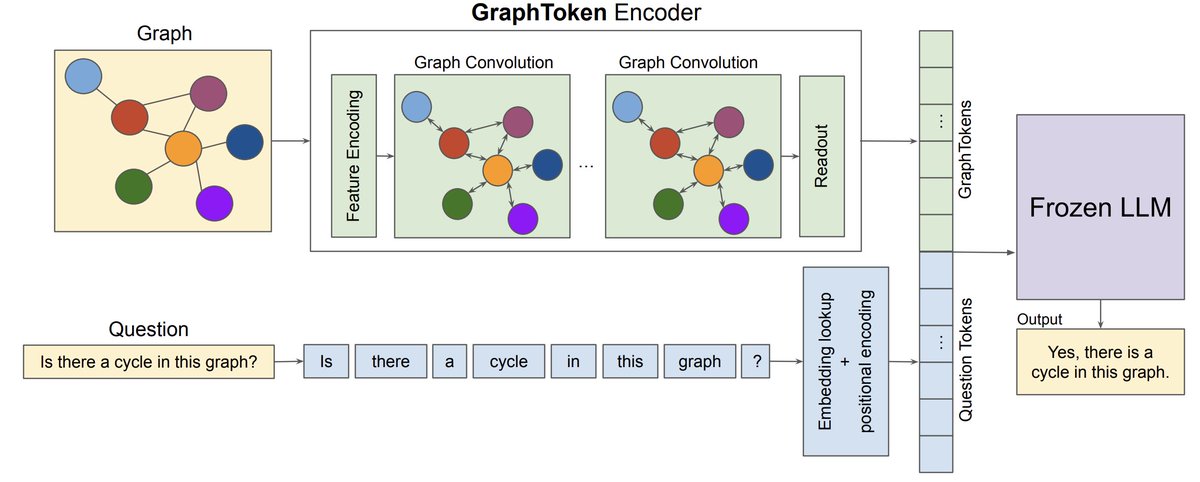

↪️ It's "Talk Like a Graph" day at #iclr2024

- 3:15, at #google see a *live demo* of GraphToken, where we train a tiny model (Gemma 2B) and get sota!

arxiv.org/abs/2402.05862

- 4:30, stop by poster 89 to see the TLG leaderboard and talk with us!

research.google/blog/talk-like…

We'll be demoing some of our work on graphs and LLMs later today at ICLR. If you're around, stop by and say hi!

Google AI @GoogleAI

At 3:15pm today, the #ICLR2024 Google booth will host @phanein, @BahareFatemi, and @jhalcrow for a talk on finding the correct graph inductive bias for Graph ML and developing strategies to convert graphs into language-like formats for LLMs.

@agihippo Beyond physical-system models like GraphCast (from GDM), we have a lot of impact inside Google with anti-abuse applications. Graph representations are really effective in that domain in a way that's hard to convey with public datasets / open models.

Jonathan Halcrow retweeted

Talk Like a Graph or Let Your Graph Do the Talking 🧠🕸️

New paper alert 🚨: we show how GNNs can effectively encode graph-structured data for LLMs: arxiv.org/abs/2402.05862

Anton Tsitsulin @graph_

𝐋𝐞𝐭 𝐘𝐨𝐮𝐫 𝐆𝐫𝐚𝐩𝐡 𝐃𝐨 𝐭𝐡𝐞 𝐓𝐚𝐥𝐤𝐢𝐧𝐠: 𝐄𝐧𝐜𝐨𝐝𝐢𝐧𝐠 𝐒𝐭𝐫𝐮𝐜𝐭𝐮𝐫𝐞𝐝 𝐃𝐚𝐭𝐚 𝐟𝐨𝐫 𝐋𝐋𝐌𝐬 Don't know what to do with your graphs in 2024? Shove them into an LLM, of course, and let the LLM figure out what to do! arxiv.org/abs/2402.05862 Short thread (1/5):

Jonathan Halcrow retweeted

𝐋𝐞𝐭 𝐘𝐨𝐮𝐫 𝐆𝐫𝐚𝐩𝐡 𝐃𝐨 𝐭𝐡𝐞 𝐓𝐚𝐥𝐤𝐢𝐧𝐠: 𝐄𝐧𝐜𝐨𝐝𝐢𝐧𝐠 𝐒𝐭𝐫𝐮𝐜𝐭𝐮𝐫𝐞𝐝 𝐃𝐚𝐭𝐚 𝐟𝐨𝐫 𝐋𝐋𝐌𝐬

Don't know what to do with your graphs in 2024? Shove them into an LLM, of course, and let the LLM figure out what to do!

arxiv.org/abs/2402.05862

Short thread (1/5):

Jonathan Halcrow retweeted

New paper alert 🚨: "Talk Like a Graph" studies the problem of encoding graph-structured data, paving the way for AI to better understand and process complex relationships. Surprising results below 🤯! #AI #LLM #GraphData #MachineLearning

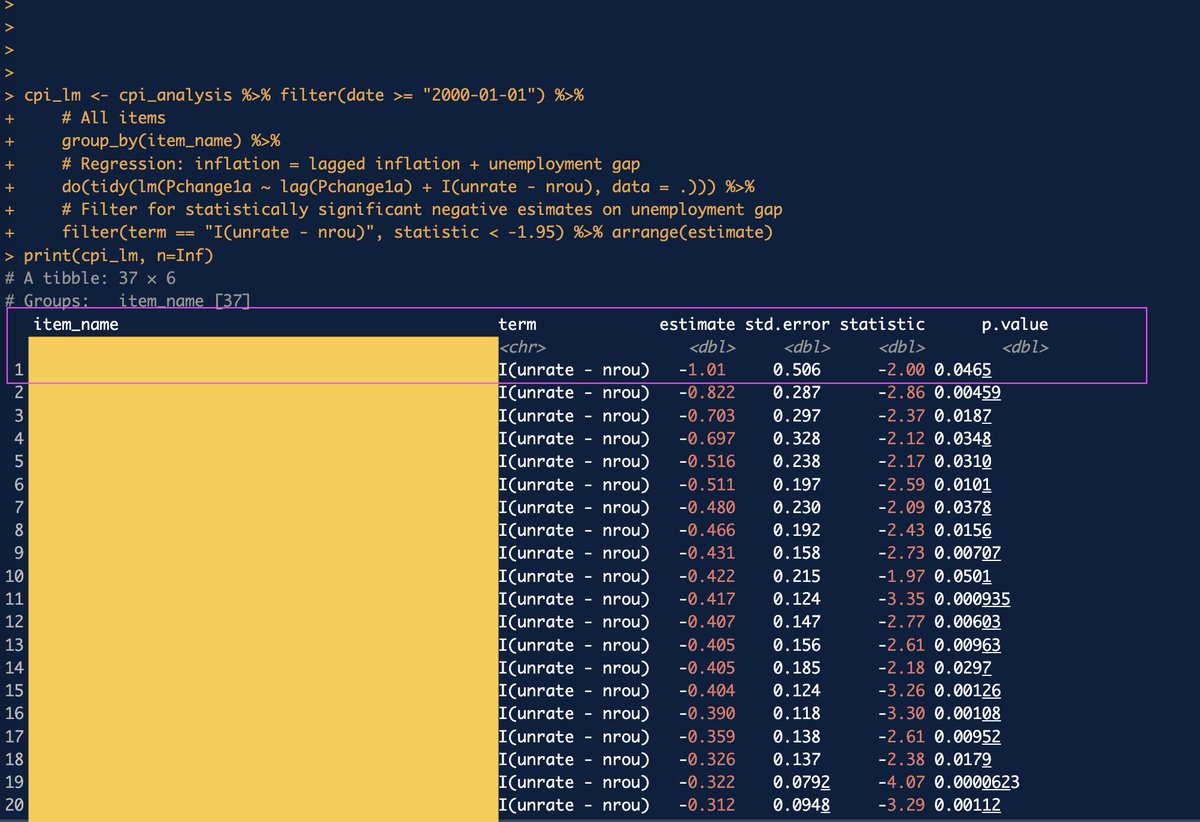

@mtkonczal This is kind of a dark guess, but funeral expenses?

Jonathan Halcrow retweeted

FYI: we're running a Kaggle contest with GNNs!

Kaggle @kaggle

📣 Competition launch alert! Google - Fast or Slow? Predict AI Model Runtime hosted by @Google Research. 🎯 predict the runtime of graphs and configurations in the test dataset 💰 $50,000 prize pool ⏰ November 10, 2023 (entry deadline) goo.gle/3qKJXg7
