Jiddah Abdul. @spark_coded

13.3K posts

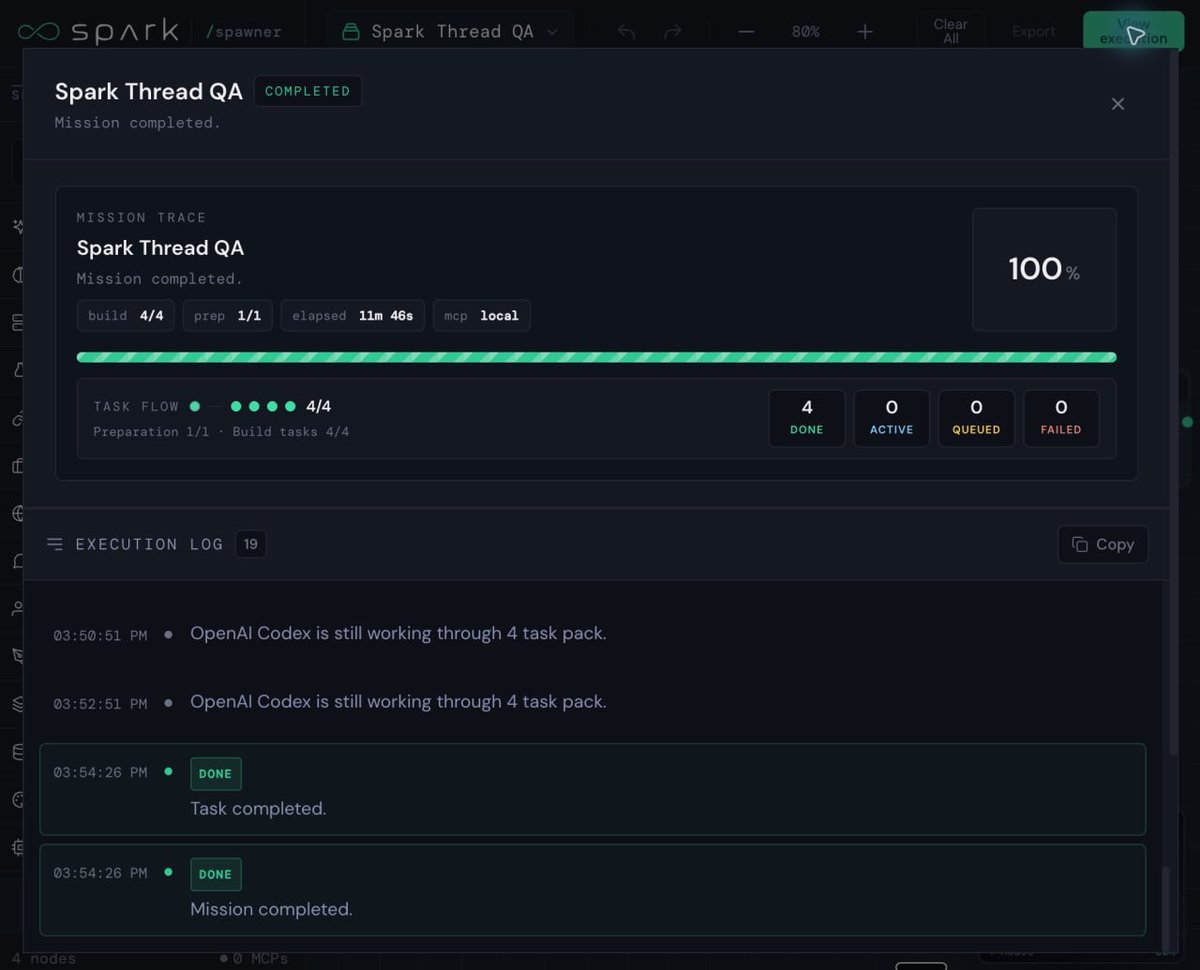

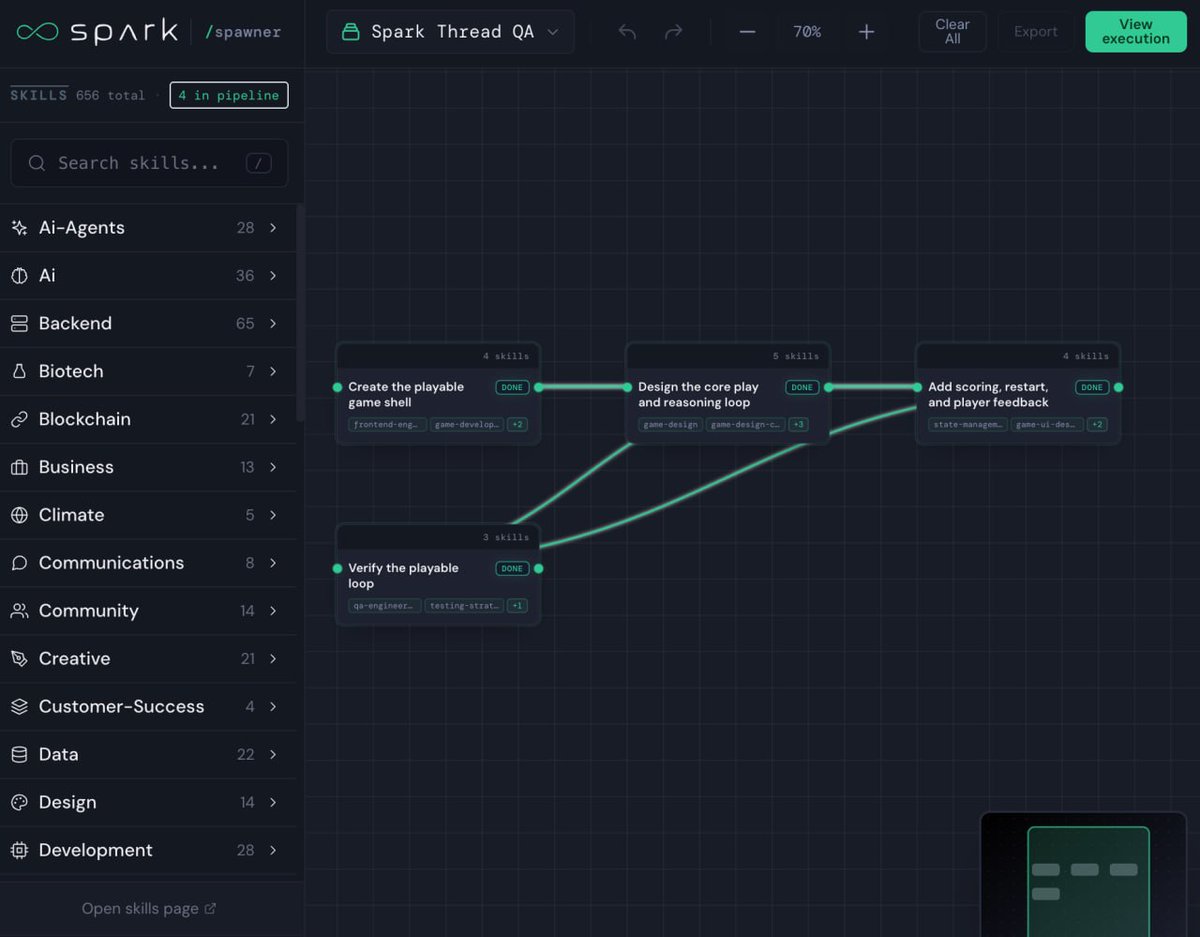

Spark takes its self-improvement loop from the methodology that created the best AI models: benchmark-evaluated, recursive self-improvement. The special part is that your Spark agent can apply this to master your workflows: creating the benchmark > specialization path autoloop > then self-improving even while you sleep, so that it can serve you better as a personal agent.

700+ builders. 120+ submissions. Wave 1 of the SoSoValue Buildathon is massive. 🚀

We’re seeing incredible innovation across AI, index tools, and on-chain execution. With so many high-quality submissions, our team has been overwhelmed by the review workload, but we are working hard and moving as quickly and carefully as possible to judge every project fairly.

To give every submission a fair, high-quality review, we are doubling our evaluation window.

✨ New Evaluation Phase: May 13 - May 22, 2026

Our team and guest reviewers from SoSoValue, SoSoValue Indexes, and SoDEX are diving deep into your projects to identify the future of on-chain finance.

Thanks for your patience and your brilliance, builders!

#SoSoValue #Buildathon #DeFi #AI

you've probably heard of @Spark_coded but you still don't get it.. I'll walk you through it all by explaining it super simply..

1. spark improves while you're away

it's a local agent that lives on your machine, talks to you in telegram, and keeps training itself in the background
>you close your machine.. spark keeps working
>wake up, it's sharper than last night.. that simple

2. how it works in one picture

you → telegram → spark's brain → your llm of choice → answer back to you

3. spark has a brain called the intelligence builder

think of it as a dispatcher. for every message you send, it decides:
>is this chat or a build task
>which model handles it (claude, codex, glm, local, whatever you brought)
>what memory to pull
>which specialist chip to wake up

you just type one line and it routes..

4. now the interesting part is that spark has "chips"

>a chip is a specialist: one for trading, one for content, one for code, one for startups, etc.
>each chip has a score: it starts weak, then gets graded every time it runs

kinda think of it like pokémon but for skills

5. the loop: baseline 42 → trial 68 → review → promote 81

spark runs the chip overnight, tries new rubrics, new tools, new approaches, then scores each attempt. if the new version beats yesterday → promote. if not → retire

6. the wedge

every other agent in 2026 promotes itself because it *sounds* better, while spark only promotes itself because it *scored* better.. benchmark, rubric, replay, or human review: one of those four has to gate the change.. otherwise, it doesn't get to write back.

7. what spark remembers

not every random chat, but lessons, boundaries, playbooks, stuff worth keeping. you tell it your taste once and it carries that forever. it stays on your local machine and never leaves unless you opt in.

8. what gets shared with the swarm

nothing private, like ever. not your secrets, not your raw work, not your memory. the only thing that travels is the proven pattern: the chip version that scored higher..

9. how it actually feels

day 1: spark is generic... answers are mid.
day 30: it knows your voice, your stack, your habits, your no-go zones..
day 100: it has chips you didn't even ask for. nightly loops improved them while you weren't even looking, and your agent just compounds.

10. tldr

spark is more than an agent.. it's a recursive agent stack:
>chat door (telegram), hoping for discord later
>runtime (the brain)
>chips (specialists)
>scored loop (the engine)
>local memory (yours)
>optional swarm (only proofs travel)

11. the bet is simple

personalization > model

think about what happens in 12 months: everyone's spark will look different because everyone's chips will have trained on different work.

well, sure, I’m not replacing Hermes with Spark.. Hermes is still the supervisor of my stack, but Spark sits above the mess as the pattern layer.. it watches the runs, scores what worked, catches repeated workflows, and tells me what should become a reusable chip/skill..

worth giving it a try if you’re already running agents. the fun part is that later you can train any chip around the work you actually repeat: your content chip, research chip, trading chip, coding chip, startup chip, whatever... and that’s where it gets interesting, because the agent doesn’t just remember your preferences, it starts building specialists around your own workflow..

this is just the surface tho.. there's still the canvas, which shows what spark saw and learned, the autoloops, and a lot more.. but this is enough to understand what it's about...

keep an eye on @Spark_coded and @meta_alchemist, a lot is happening in the next few days..
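To make the scored loop from points 5 and 6 concrete, here is a minimal Python sketch of a gated promotion rule. It is not Spark's actual code: `ChipVersion`, `review`, `nightly_loop`, and the gate identifiers are hypothetical names invented for illustration. The only things taken from the thread are the rule itself (promote if the new version beats the current score, retire otherwise), the four gates, and the example scores 42, 68, and 81.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

# The four gates named in the thread; the identifiers are illustrative.
GATES = {"benchmark", "rubric", "replay", "human_review"}


@dataclass(frozen=True)
class ChipVersion:
    """Hypothetical record for one scored attempt at a chip."""
    name: str
    score: float
    gate: Optional[str] = None  # which kind of evidence produced the score


def review(current: ChipVersion, candidate: ChipVersion) -> ChipVersion:
    """Return the version to keep after one review step."""
    # No recognized gate means no write-back, however good the score looks.
    if candidate.gate not in GATES:
        return current
    # Promote only on a strictly higher score; otherwise retire the candidate.
    return candidate if candidate.score > current.score else current


def nightly_loop(baseline: ChipVersion, trials: Iterable[ChipVersion]) -> ChipVersion:
    """Run each trial through review, carrying the best gated version forward."""
    current = baseline
    for trial in trials:
        current = review(current, trial)
    return current


baseline = ChipVersion("content-v0", 42, "benchmark")
trials = [
    ChipVersion("content-v1", 68, "benchmark"),  # beats 42, promoted
    ChipVersion("content-v2", 90, None),         # ungated, ignored
    ChipVersion("content-v3", 81, "rubric"),     # beats 68, promoted
    ChipVersion("content-v4", 75, "replay"),     # below 81, retired
]
best = nightly_loop(baseline, trials)
print(best.name, best.score)
```

The ungated 90 is the "sounds better" case: it never replaces the current version because no benchmark, rubric, replay, or human review backs it.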