Jiaming Tang retweetledi

ParoQuant just got a big upgrade 🚀

✅ Supports the new Qwen3.5 models

⚡ Now runs on MLX (fast local inference on Apple Silicon)

🧠 Preserves reasoning quality with 4-bit quantization

We also built an agent demo running locally on my 4-year-old M2 Max.

Can't wait to upgrade to an M5 Max and see what kind of magic we can do. ✨

Zhijian Liu@zhijianliu_

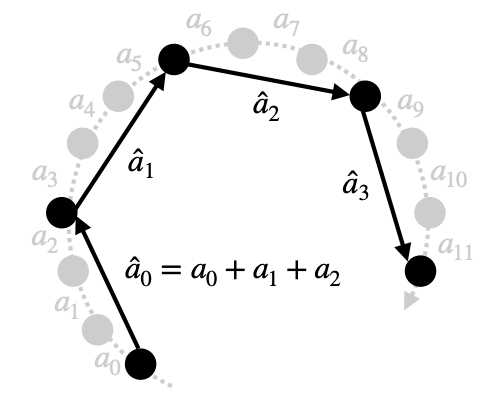

Reasoning LLMs generate very long chains-of-thought, so even small quantization errors add up. With AWQ, Qwen3-4B drops 71.0 → 68.2 on MMLU-Pro (~4% relative loss). 😬 ParoQuant fixes this! It keeps only the critical rotation pairs and fuses everything into a single kernel. Recovers most of the lost reasoning accuracy with minimal overhead — so 4-bit models stay strong at reasoning. 💪💪

English