JoePro

@JoePro

CS/Math grad turning AI chaos into tools for all. Dad x2, wife-powered. Crafting https://t.co/G9rywHomQr: image/video gen, NFTs, Followtronics exports. Let's democratize

@EricBuess Yep, working on improving clarity here to make it more explicit.

Pro tip: turn on "speak to create images" in settings to help turn a vague idea into something that’s right on the money.

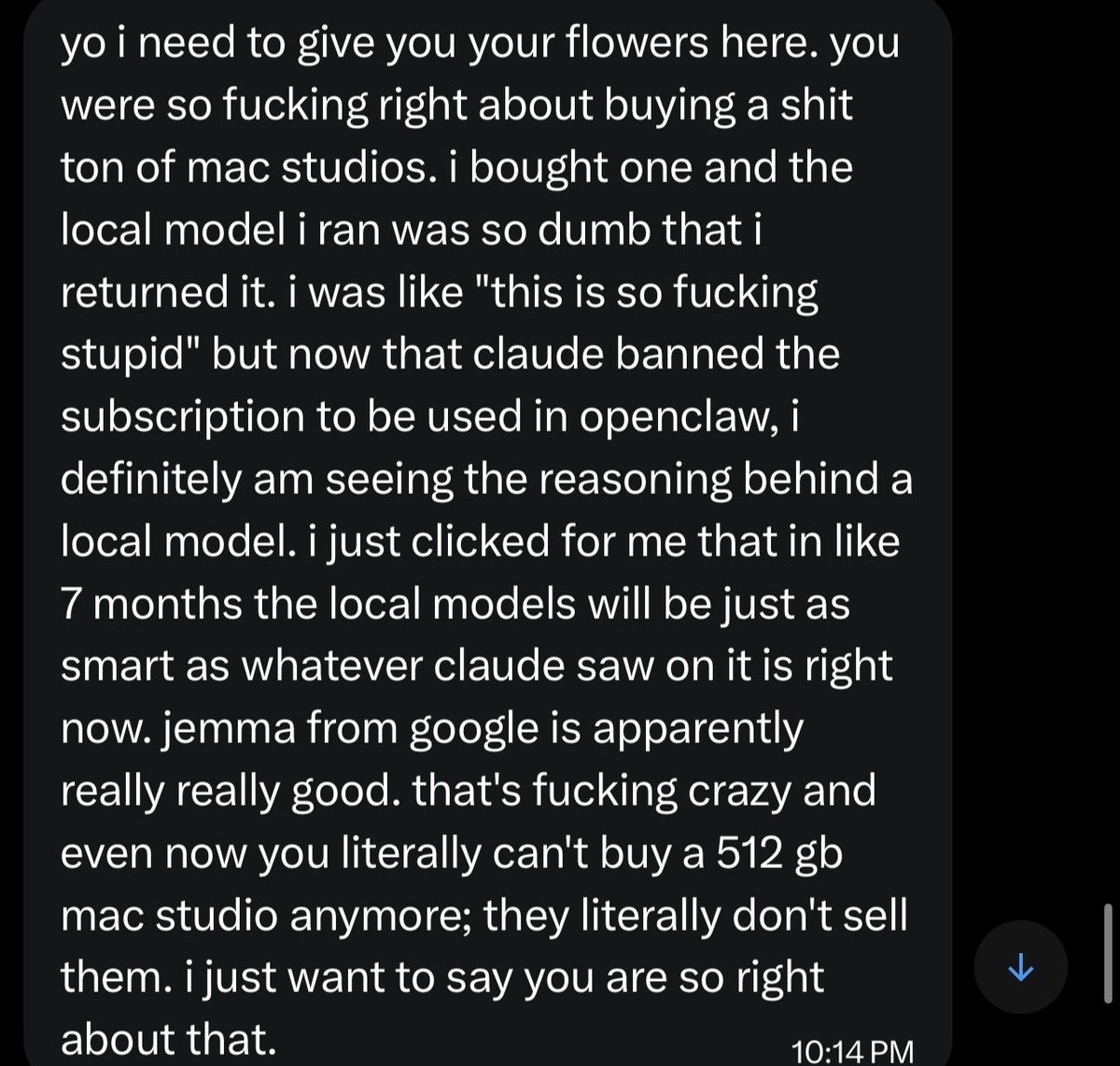

We're big fans of open source. I actually just put up a few PRs to improve prompt cache efficiency for OpenClaw specifically. This is more about engineering constraints. Our systems are highly optimized for one kind of workload, and to serve as many people as possible with the most intelligent models, we are continuing to optimize for that. If you use an API key or pay for overages, it should still work; the issue was only with subscriptions. If you still want to cancel, we're giving full refunds. We know not everyone realized this isn't something we support, and this is an attempt to make that clear and explicit.

It’s over. Anthropic just banned OpenClaw. Uncensored thoughts:

1. Massive mistake that will come back to bite them.
2. Open source needs to win. If you have a local model running on your Mac mini, no corporation will ever be able to ban you.
3. ChatGPT 5.4 is the best model, but it sucks compared to Opus in OpenClaw. I will continue to pay for the Anthropic API.
4. I have no doubt the next OpenAI model will be optimized for OpenClaw and be excellent.
5. In 6 months the local models will be as good as Opus 4.6 and all of this will be forgotten.
6. It feels like, from a consumer sentiment perspective, things have flipped for OpenAI and Anthropic. They were the darlings when Opus 4.5 came out.
7. Going to the Kanye concert right now, so please don’t spoil the stage or set list in the replies.
8. The best OpenClaw setup is now Opus as the orchestrator, with much cheaper models as the execution layer. If you do this properly you won’t be paying much more than $200 a month. I’m using Gemma 4 and Qwen 3.5 for execution on my DGX Spark and Mac Studio.
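The orchestrator/executor split in point 8 can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not how OpenClaw actually works: `Model`, `CostLedger`, `run_task`, the per-1K-token prices, the 4-characters-per-token estimate, and the stub lambdas are all hypothetical stand-ins, and no real Anthropic, OpenAI, or local-runtime API is called. The idea shown is that the expensive model makes one planning call, while the cheap (here, free local) model handles every subtask.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Model:
    """Hypothetical model endpoint: a name, a made-up price, and a call function."""
    name: str
    usd_per_1k_tokens: float
    generate: Callable[[str], str]


@dataclass
class CostLedger:
    """Tracks approximate spend and which model handled each call."""
    spent_usd: float = 0.0
    calls: list[str] = field(default_factory=list)

    def charge(self, model: Model, prompt: str, output: str) -> None:
        # Crude token estimate: ~4 characters per token (an assumption, not a real tokenizer).
        tokens = (len(prompt) + len(output)) / 4
        self.spent_usd += tokens / 1000 * model.usd_per_1k_tokens
        self.calls.append(model.name)


def run_task(task: str, orchestrator: Model, executor: Model, ledger: CostLedger) -> list[str]:
    # 1. The expensive model only plans: it returns one subtask per line.
    plan_prompt = f"Split into subtasks, one per line:\n{task}"
    plan = orchestrator.generate(plan_prompt)
    ledger.charge(orchestrator, plan_prompt, plan)
    subtasks = [line.strip() for line in plan.splitlines() if line.strip()]

    # 2. The cheap model does the bulk of the work, one call per subtask.
    results = []
    for sub in subtasks:
        out = executor.generate(sub)
        ledger.charge(executor, sub, out)
        results.append(out)
    return results


# Usage with stub models (hypothetical names and prices).
orchestrator = Model("hosted-orchestrator", 15.0, lambda p: "draft outline\nwrite summary")
executor = Model("local-executor", 0.0, lambda p: f"done: {p}")
ledger = CostLedger()
results = run_task("Write a blog post", orchestrator, executor, ledger)
print(results)       # ['done: draft outline', 'done: write summary']
print(ledger.calls)  # ['hosted-orchestrator', 'local-executor', 'local-executor']
```

Because the executor calls cost nothing in this sketch, all of the spend comes from the single planning call. That is the intuition behind keeping the bill near a fixed budget as the number of subtasks grows.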

Gemma 4 is a bigger deal than most people realize... It's an incredible model that fits on most consumer hardware. The future is hybrid hosted/edge models.