Thang Luong (@lmthang)

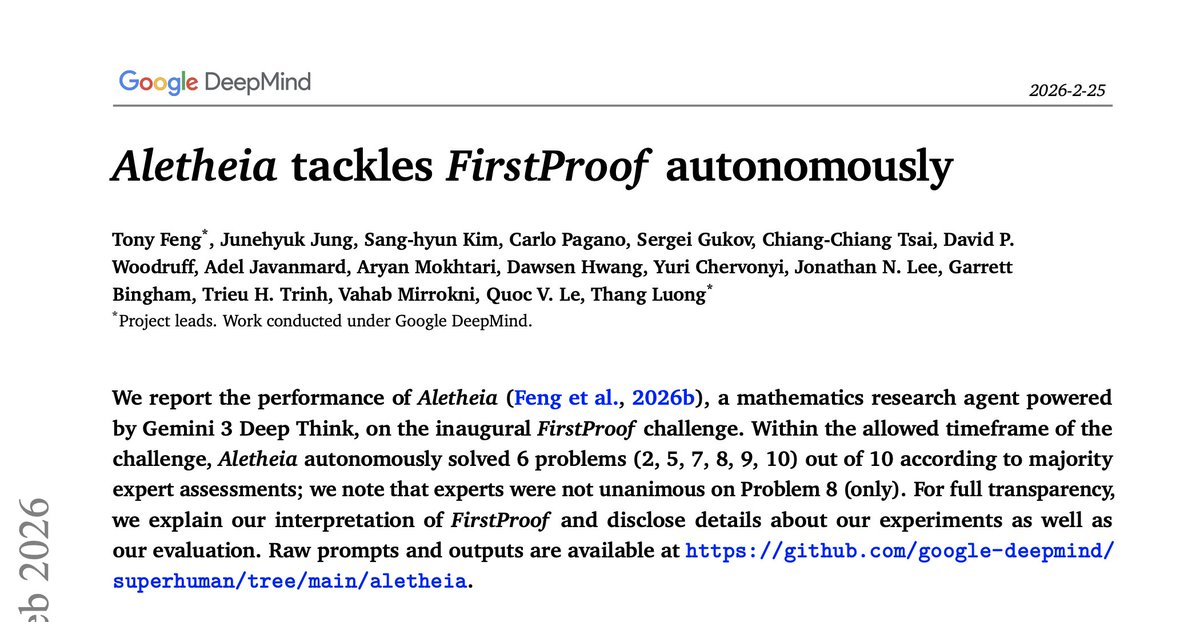

Research-level mathematics draws on advanced techniques from a vast literature, with papers often spanning dozens of pages. While foundation models possess a large knowledge base from pretraining, their understanding of advanced subjects remains superficial due to data scarcity, and they are also prone to hallucinations. To address this, in the first paper, "Towards Autonomous Mathematics Research", we built #Aletheia (the ancient Greek word for "truth"), a math research agent that can iteratively generate, verify, and revise solutions end-to-end in natural language.

Link to the paper: github.com/google-deepmin… (to be on arXiv soon!)

There are 3 main sources that power Aletheia ...