
2025 will be the year we see the first self-driving startups.

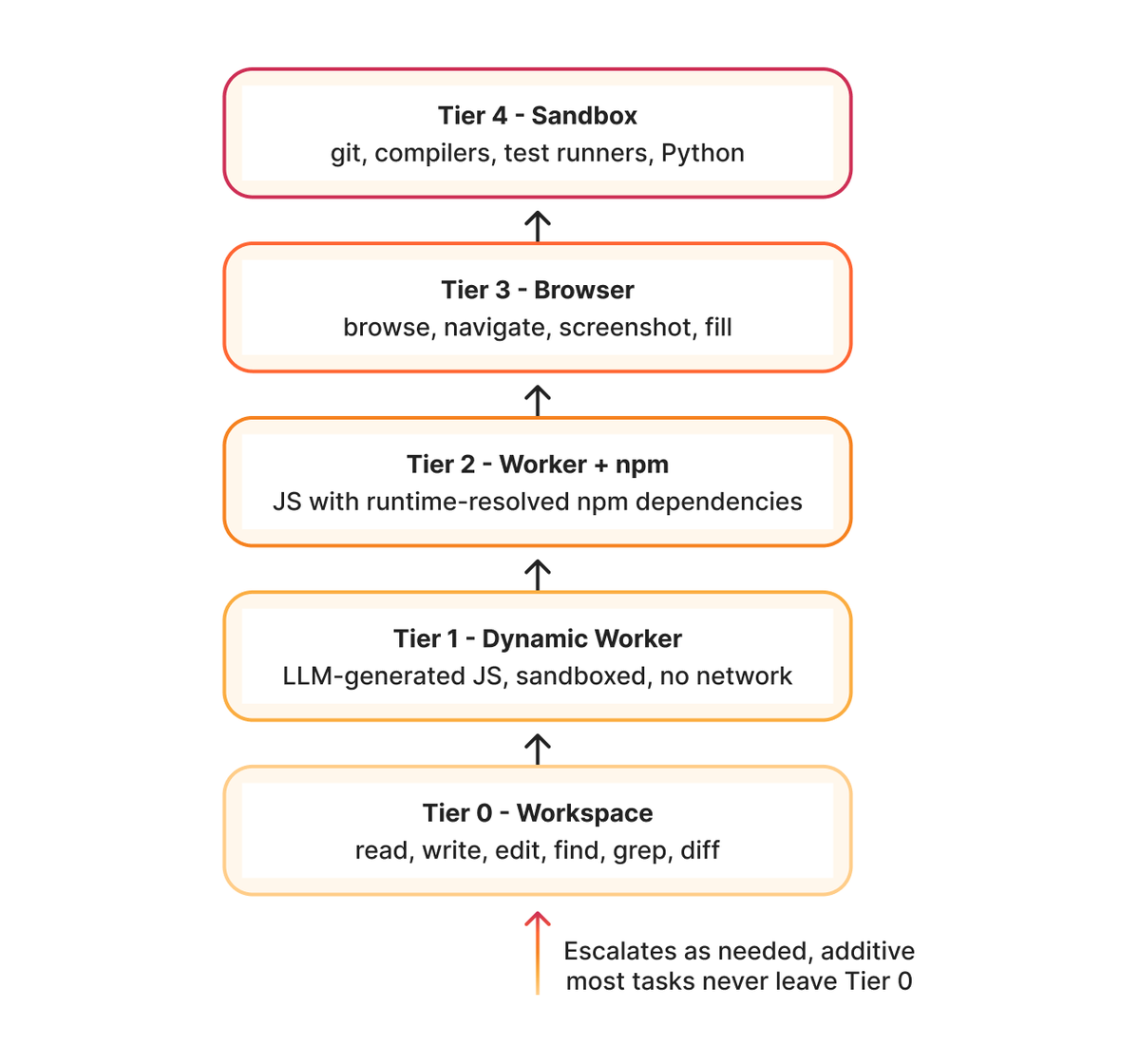

Level 0: No AI

People do everything. They come up with ideas, build products, and run operations. Many legacy businesses still work this way.

Level 1: People use AI tools ⬅︎ we are here

People might use ChatGPT to help write copy or Cursor to help write code. This is where most startups are today.

Level 2: AI agents complete tasks based on human instructions

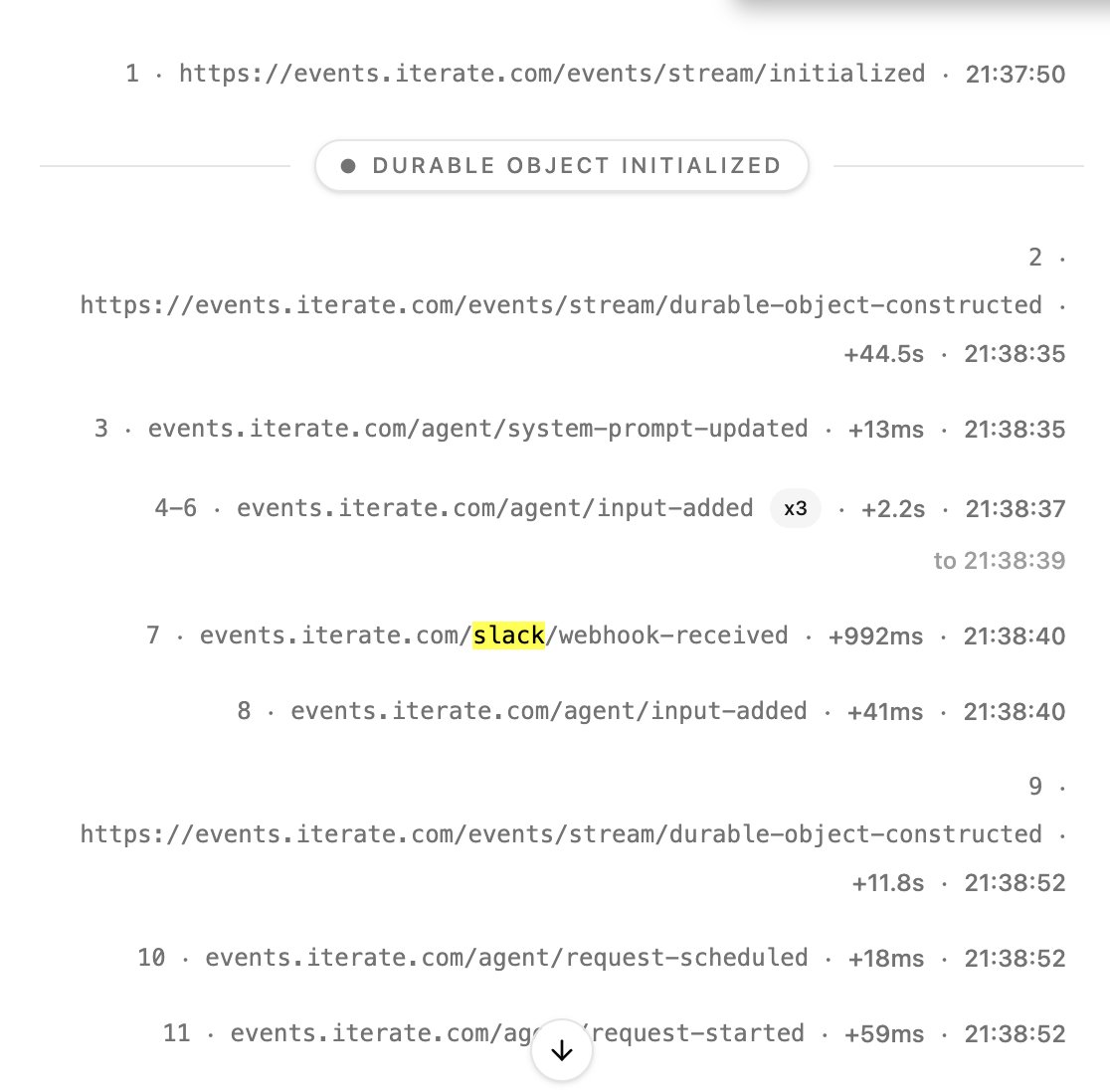

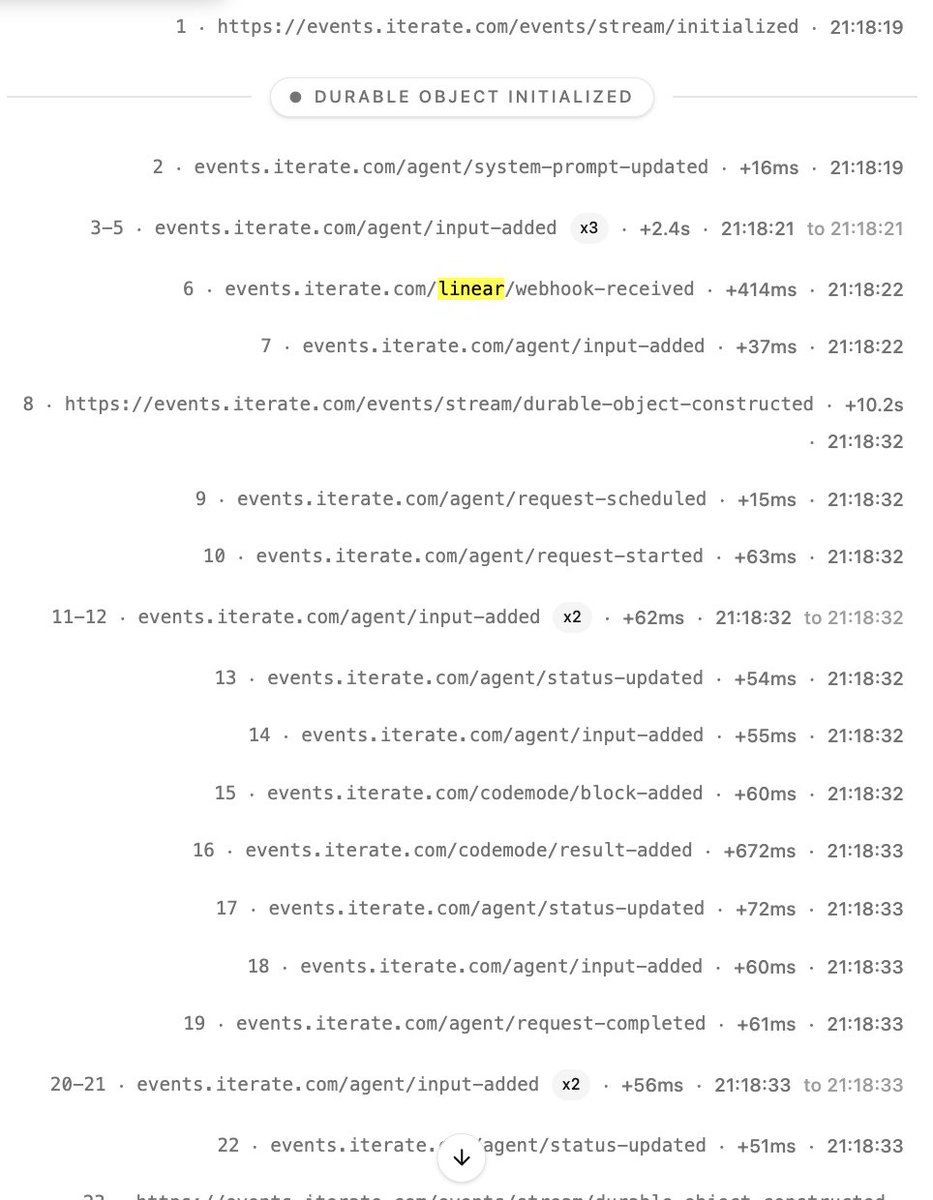

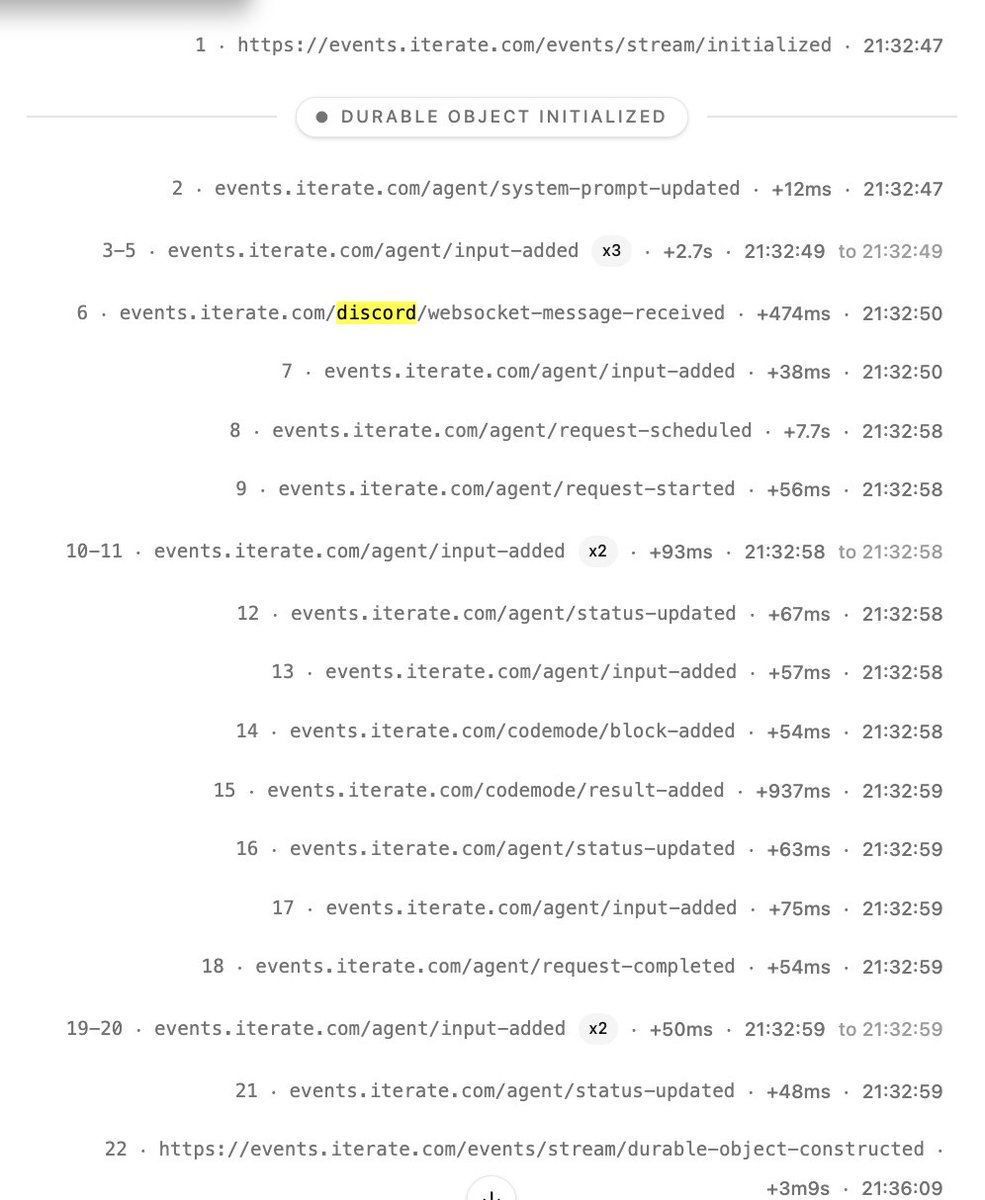

People might ask AI agents to write software from a plain-English spec or tell them to execute well-defined customer service processes. At this point entire departments (like support or QA) get largely replaced by AI. No startups I know of operate at this level yet, but if yours does, let me know.

Level 3: AI agents propose changes to their own instructions

They might propose new customer service processes and product changes in response to customer feedback. Humans would still approve each of those changes. Just a few people could run a large company this way.

Level 4: AI agents autonomously change their instructions

At this point startups become self-improving. Humans would only be involved as an escalation point or where required by the real world (e.g. to raise capital or to incorporate). Many startups at this level would have only one human.

Level 5: No humans

AI agents decide which businesses to start, raise capital (through crypto tokens or other means), and build and run them. No humans required. This would require major reforms in the legal and financial systems.
