Jonathan Yuen

3K posts

@jonathanykh

data and automation systems

Joined November 2013

2.2K Following · 470 Followers

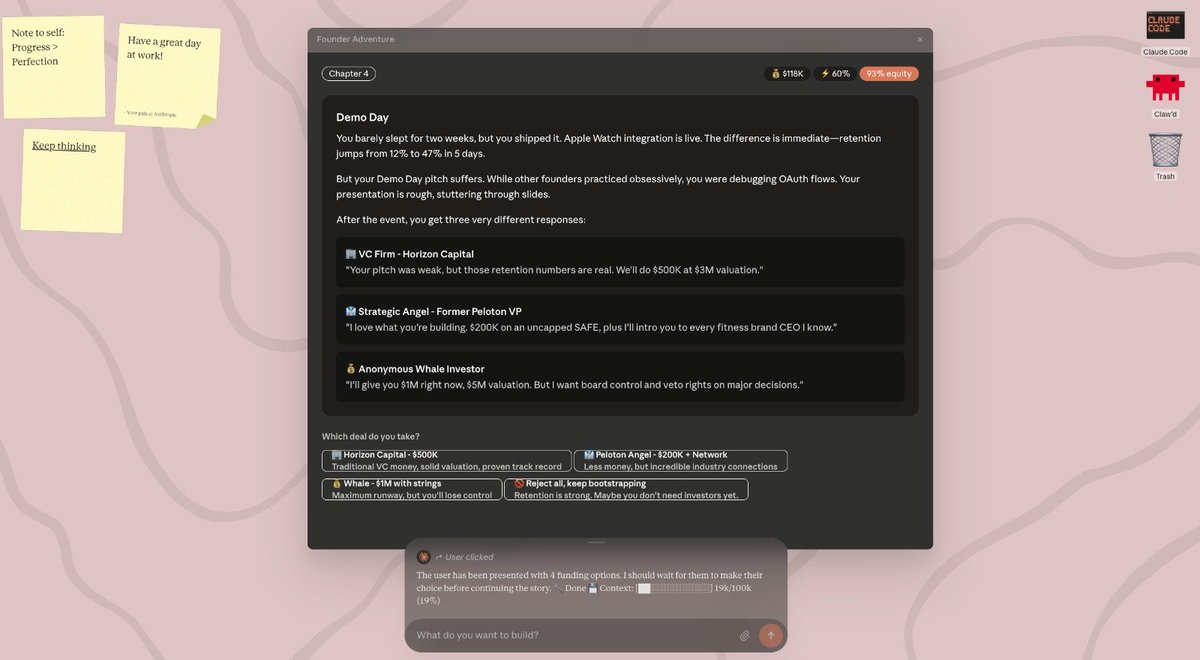

@taalas_inc holy shit, imagine Opus 4.6 or gemini 3.1 on these chips, UI is going to be dynamic with this

24 dedicated people.

$30M spent on development.

Extreme specialization, speed, and power efficiency.

Today we launch Taalas’ first product. Check it out:

Details: taalas.com/the-path-to-ub…

Demo chatbot: chatjimmy.ai

API: taalas.com/api-request-fo…

Jonathan Yuen retweeted

the current state of HBM for AI chips is increasingly similar to the choice of avoiding carbon fiber in building Starship - it could be way more scalable to just use steel (DRAM) instead

Elon Musk@elonmusk

@SawyerMerritt You can fit more total RAM on the board if you use “normal” memory than high-bandwidth memory and it is super cheap. Maybe high-bandwidth memory is still the right choice, but using HBM isn’t the slam dunk many people think it is.

we are so early. 8 billion people in the world and only 0.5% of the population is willing to pay 20 bucks a month to get things done

Wasteland Capital@ecommerceshares

Latest OpenAI numbers from the FT: 800m users, 5% paying (40m). $13bn in ARR. Implies a $325 annual ARPU, or $27/month per paying user. 70% of rev from subscriptions, rest is API. $8bn loss in H1, prob $20bn run rate loss now? So basically spending $3 for each $1 in revenue.
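
The arithmetic in the two posts above can be re-derived in a few lines. This is only a sanity check: the inputs are the figures as quoted (not independently verified), the variable names are illustrative, and the spend ratio assumes that total spending equals revenue plus the guessed run-rate loss.

```python
# Figures as quoted in the posts above; none are independently verified.
users = 800_000_000
paying_percent = 5
arr_usd = 13_000_000_000
run_rate_loss_usd = 20_000_000_000  # the post's own guess ("prob $20bn")
world_population = 8_000_000_000

paying_users = users * paying_percent // 100
annual_arpu = arr_usd / paying_users
monthly_arpu = annual_arpu / 12

# Assumption: spending = revenue + loss.
spend_per_revenue_dollar = (arr_usd + run_rate_loss_usd) / arr_usd

# Share of the world population that pays.
paying_share_of_world = paying_users / world_population

print(f"paying users:          {paying_users:,}")
print(f"annual ARPU:           ${annual_arpu:,.0f}")
print(f"monthly ARPU:          ${monthly_arpu:,.2f}")
print(f"spend per $1 revenue:  ${spend_per_revenue_dollar:.2f}")
print(f"paying share of world: {paying_share_of_world:.1%}")
```

Under these inputs the ARPU figures and the 0.5% share match the posts. The spend ratio comes out nearer $2.50 than $3 per $1 of revenue, so the "$3" is a generous rounding.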

@bcherny @sdrzn you really should take a look at the angry users here:

reddit.com/r/ClaudeAI/com…

github.com/anthropics/cla…

something's definitely wrong on Anthropic's side.

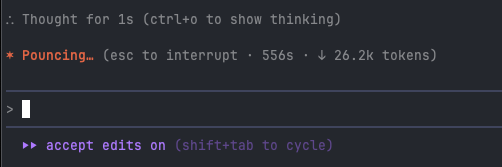

👋 Boris from the Claude Code team here.

Compact behavior is the same as before -- the new ⛝ boxes in /context are just a cosmetic UI change that gives people more transparency into auto-compact. We always auto-compacted near 155k tokens so there's enough buffer. We do that for reliability, and not to save costs or anything like that.

Would recommend Cline does something similar, if you don't already. After dozens of iterations, we've found 155k to work well to maximize context window while also maximizing reliability and avoiding "context window exceeded" API errors.

Re: rate limits -- we publish these here (support.claude.com/en/articles/11…), and generally find that most users hitting rate limits are still using the older Opus 4.1 model and have not yet upgraded to the more capable Sonnet 4.5.
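
For readers unfamiliar with the idea, threshold-based auto-compaction as described in the post above might look roughly like the sketch below. This is not Claude Code's implementation. Only the 155k figure comes from the post. `estimate_tokens`, `should_compact`, and `compact` are hypothetical helpers, and the 4-characters-per-token estimate is a crude stand-in for a real tokenizer.

```python
COMPACT_THRESHOLD_TOKENS = 155_000  # figure quoted in the post above


def estimate_tokens(text: str) -> int:
    """Crude placeholder: roughly 4 characters per token (assumption)."""
    return max(1, len(text) // 4)


def should_compact(messages: list[str],
                   threshold: int = COMPACT_THRESHOLD_TOKENS) -> bool:
    """Return True once the estimated conversation size reaches the threshold."""
    return sum(estimate_tokens(m) for m in messages) >= threshold


def compact(messages: list[str], keep_last: int = 4) -> list[str]:
    """Hypothetical compaction: replace older messages with a stub summary line.

    A real harness would ask the model to summarize; this only shows the shape.
    """
    if len(messages) <= keep_last:
        return list(messages)
    dropped = len(messages) - keep_last
    return [f"[summary of {dropped} earlier messages]"] + messages[-keep_last:]


history = ["x" * 400_000, "y" * 240_000, "latest question"]
if should_compact(history):
    history = compact(history, keep_last=1)
print(history)
```

Compacting below the hard limit leaves headroom for the next request and response, which is the reliability argument the post makes.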

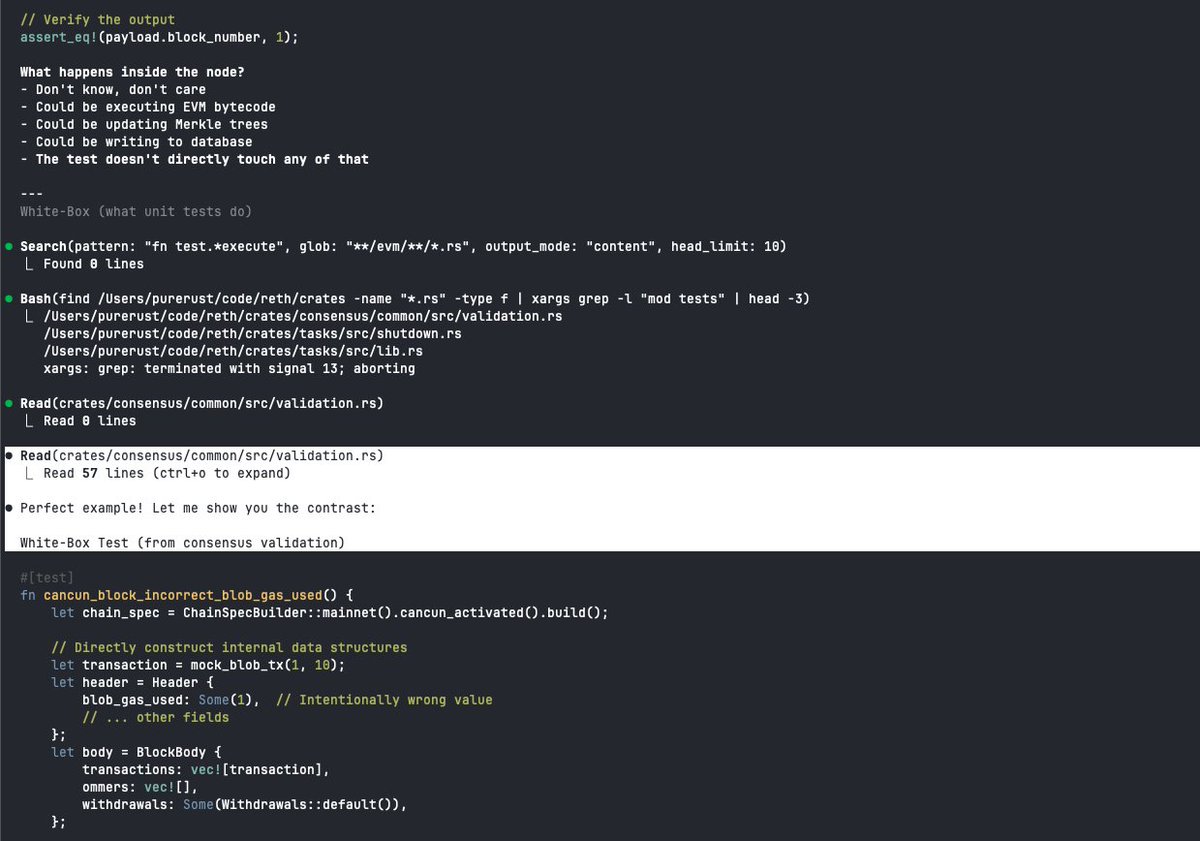

Claude Code’s last update now auto-compacts more aggressively, using less of the context window to reduce costs. Users are also reporting stricter rate-limits, all of a sudden getting cooldown periods of 4 days. Anthropic dug themselves a grave getting everyone to sign up for their $200 max plan—it misaligned business and product incentives, forcing them to cost optimize and degrade quality. Claude Code is no longer the best harness for their model anymore and their users can feel it:

@_philschmid @_amNoone the JSON structured output is not 100% guaranteed when using 2.5 Flash, and I am not sure why
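
If structured output cannot be fully trusted, one common defensive pattern is to validate each response and retry on failure. The sketch below is illustrative only: `parse_with_retry` and its `generate` callable are hypothetical stand-ins for whatever model call is in use, and no real SDK function is assumed.

```python
import json
from typing import Callable


def parse_with_retry(generate: Callable[[], str],
                     required_keys: set[str],
                     max_attempts: int = 3) -> dict:
    """Call `generate` until it returns a JSON object with all required keys.

    `generate` is a hypothetical zero-argument callable wrapping the model call.
    """
    last_error: Exception | None = None
    for _ in range(max_attempts):
        raw = generate()
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = exc  # not valid JSON at all, try again
            continue
        if isinstance(data, dict) and required_keys.issubset(data):
            return data
        last_error = ValueError("response is not an object with the required keys")
    raise ValueError(f"no valid JSON after {max_attempts} attempts") from last_error


# Usage with a fake generator that fails twice before succeeding.
responses = iter(['not json', '{"name": "a"}', '{"name": "a", "age": 1}'])
result = parse_with_retry(lambda: next(responses), {"name", "age"})
print(result)
```

This checks only for key presence. A schema validator would be the next step if field types matter.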

@_amNoone This should. Can you send me a DM with an example of what you are trying to do?
