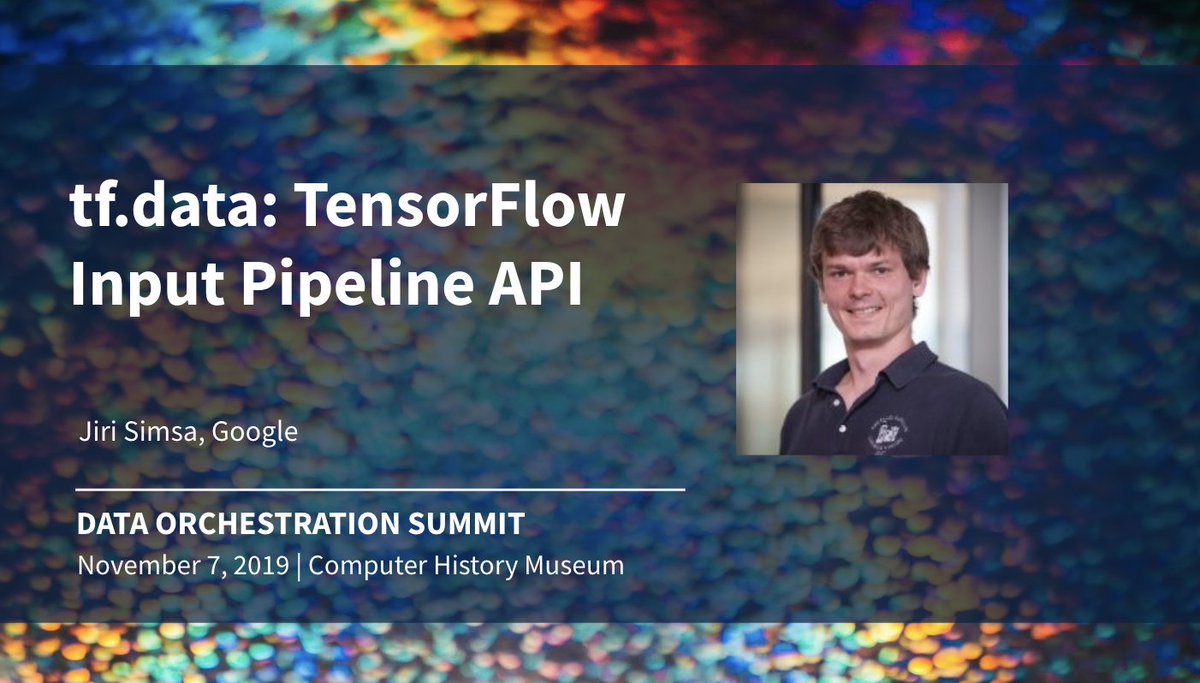

Jiri Simsa

145 posts

@jsimsa

Working on data processing and analysis infrastructure for ML @ Google.

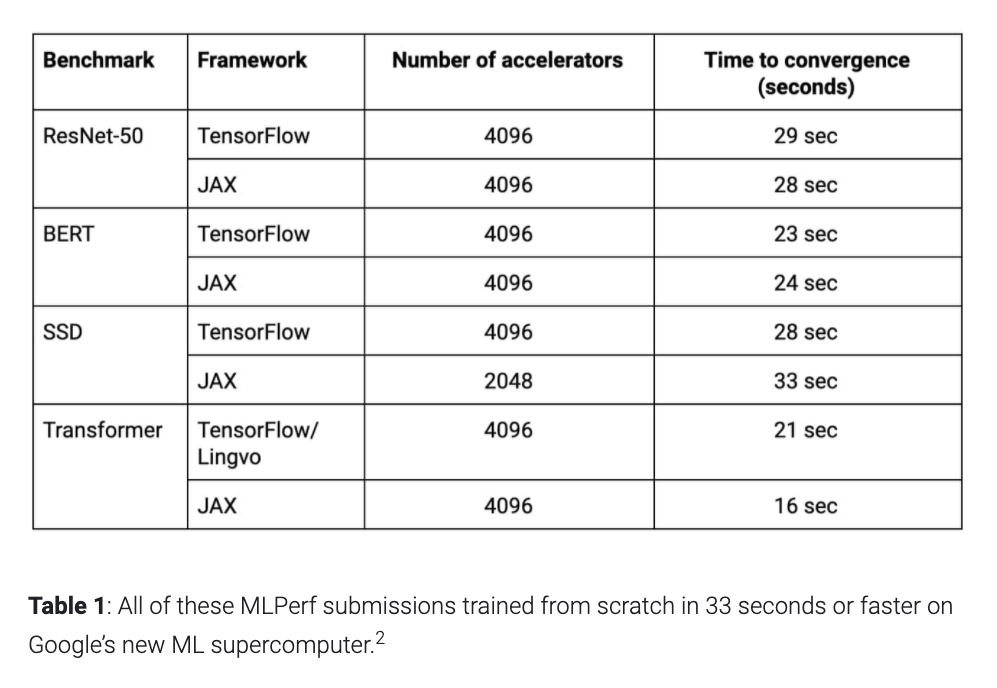

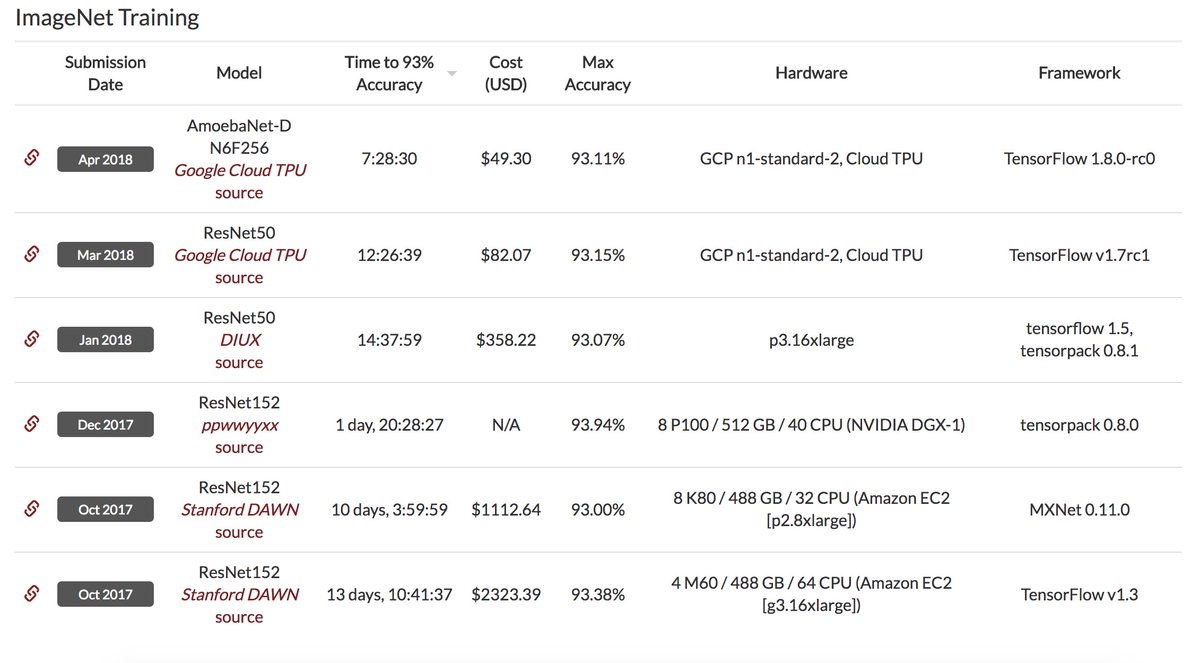

Very excited to see the MLPerf 0.7 results released today, where Google TPUs set records in six of the eight benchmarks! We need bigger benchmarks, because we can now train the ResNet-50, BERT, Transformer, & SSD benchmarks each in under 30 seconds. cloud.google.com/blog/products/…

1. Start with TF Data 2. Enable non-deterministic ordering 3. Cache data 4. Turn on experimental optimizations 5. Autotune parameter values --> >10% performance improvement! 🤯 #EuroPython
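The five steps in that tweet correspond roughly to the following `tf.data` calls. This is a minimal sketch, assuming TensorFlow 2.5 or later (for `tf.data.AUTOTUNE` and `Options.deterministic`). `parse` and `build_pipeline` are hypothetical names. `map_fusion` is only one example of the experimental optimizations the tweet mentions, chosen for illustration. The sketch does not reproduce the talk's measured speedup.

```python
import tensorflow as tf


def parse(x):
    # Hypothetical per-element preprocessing (stands in for decoding/augmentation).
    return tf.cast(x, tf.float32) * 2.0


def build_pipeline(values, batch_size=4):
    # 1. Start with tf.data: wrap the raw input in a Dataset.
    ds = tf.data.Dataset.from_tensor_slices(values)
    # 5. Autotune: let the runtime choose how many elements to preprocess in parallel.
    ds = ds.map(parse, num_parallel_calls=tf.data.AUTOTUNE)
    # 3. Cache preprocessed elements so epochs after the first skip `parse`.
    ds = ds.cache()
    ds = ds.batch(batch_size)
    # 5. Autotune the prefetch buffer size as well.
    ds = ds.prefetch(tf.data.AUTOTUNE)

    options = tf.data.Options()
    # 2. Allow non-deterministic ordering so slow elements do not stall fast ones.
    options.deterministic = False
    # 4. Opt in to an experimental static graph optimization (illustrative choice).
    options.experimental_optimization.map_fusion = True
    return ds.with_options(options)


if __name__ == "__main__":
    ds = build_pipeline(list(range(10)))
    for epoch in range(2):
        for batch in ds:
            print(epoch, batch.numpy())
```

Because ordering is non-deterministic, elements can appear in a different order from run to run. Only the multiset of values is guaranteed, which is the usual trade-off for the extra throughput.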

Loving the "Inside Tensorflow" series. The latest release on the TF data API highlights just how much effort the @TensorFlow team has invested in making highly performant pipelines accessible to the end user. Major kudos. 👏👏👏 @mrry @jsimsa et al youtube.com/watch?v=kVEOCf…

Our Cloud TPUs are designed with energy efficiency in mind, specifically to accelerate deep learning workloads at higher teraflops per watt compared to general purpose processors → blog.google/topics/google-… #EarthDay

TensorFlow Data Pipeline Performance Guide #TFDevSummit @mrry tensorflow.org/performance/da…

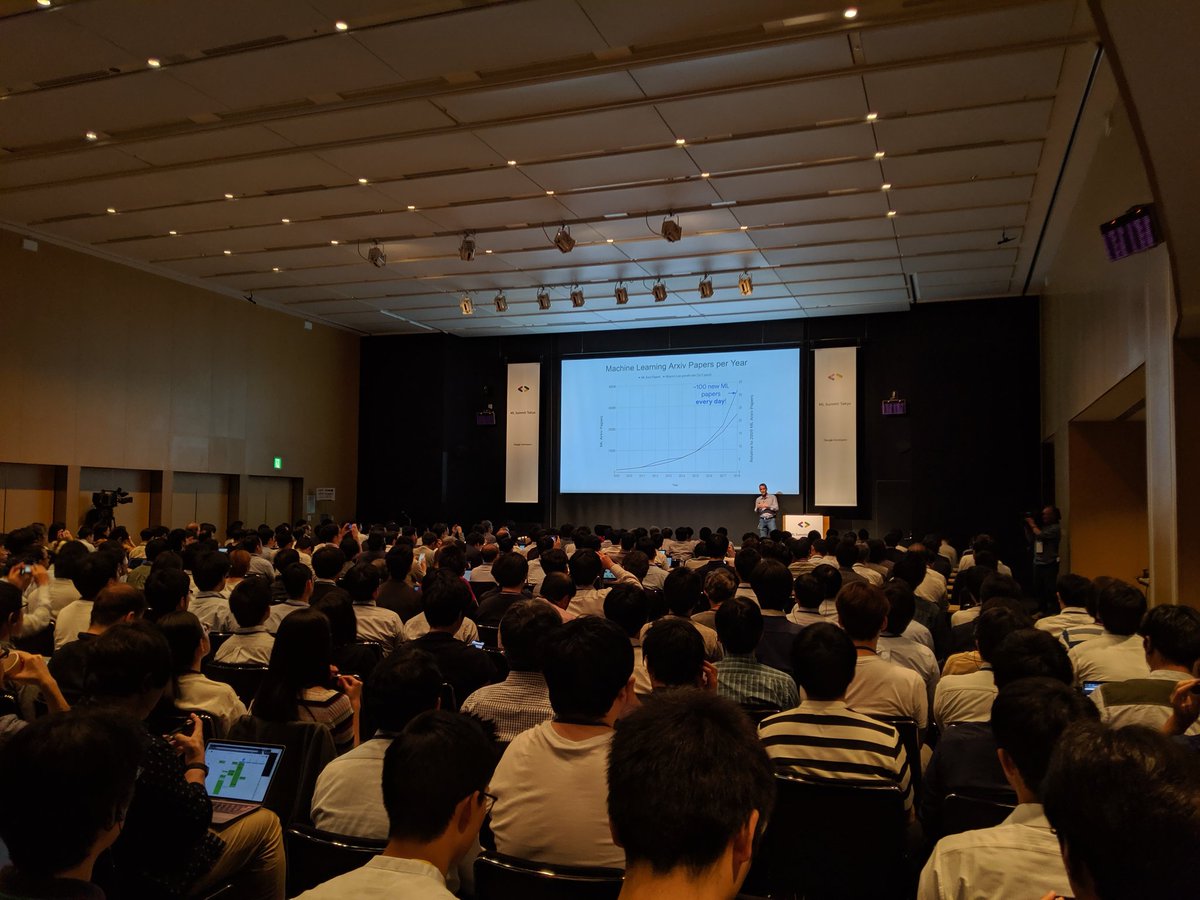

Hundreds of researchers, developers & TensorFlow enthusiasts arrive in Mountain View CA for the #TFDevSummit! We kick things off live in ~45 minutes. You can find the event livestream here → goo.gl/sxFLxD pscp.tv/TensorFlow/1dj…